Developing a Strategy to Evaluate the National Climate Assessment (2024)

Chapter: 3 Framework of an Evaluation


Two central goals of the National Climate Assessment (NCA) are to “combine and interpret data from various sources to produce information readily usable by policymakers attempting to formulate effective strategies for preventing, mitigating, and adapting to the effects of global change” (Global Change Research Act of 1990 [GCRA] § 104(d)(3), 15 U.S.C. § 2934(d)(3)) and to “consult with actual and potential users of the results of the Program to ensure that such results are useful” (GCRA § 102(e)(6), 15 U.S.C. § 2932(e)(6)). Any evaluation of the NCA or other products of the U.S. Global Change Research Program (USGCRP) needs to acknowledge these ambitious goals. The committee sets out a way to do that in the next four chapters.

This chapter begins with an overview of key evaluation principles, including defining and identifying different types of evaluation. It includes a description of core components of common evaluation frameworks and applies them to the task at hand. One of the foundational elements of an evaluation is developing a preliminary understanding of the ways in which the program being evaluated might achieve its goals, often depicted in the form of a logic model. That logic model will then be tested and refined through the course of the evaluation.

In this chapter, the committee presents a preliminary logic model, both to demonstrate its utility in evaluation and to serve as a starting point for evaluators. It is also a starting point for much of the discussion appearing later in this report. Based on the logic model, this chapter concludes with a list of the overarching questions that an evaluation might seek to answer. The logic model and overarching evaluation questions, when finalized by an evaluation team, would lay the groundwork—or conceptual model—for an evaluation.

It is worth noting that creating and using the NCA and related products is a social process, and that an evaluation should inquire into the activities of USGCRP, its federal agency partners, the author team of the NCA, and the wide range of people who make use of the NCA both directly and indirectly. The substance of the NCA and related products draws heavily on the natural sciences, including extensive datasets; models; and tools for utilization, such as the NCA Atlas. An evaluation of the NCA needs to draw upon the social sciences to frame and conduct the evaluative process. Both the natural and social sciences provide benchmarks and insights into the substance of federal climate research and are needed to gauge the value of the information being shared in the NCA.

Because an evaluation of the NCA is a complex inquiry into multiple complex social processes, requisite expertise must be engaged from the beginning and throughout the process. First, USGCRP must determine the scope of the evaluation, what the team will look like, the budget, and whether the needs can be met internally or externally or through some combination of personnel. At this stage, engagement and buy-in to the evaluation by USGCRP leadership are critical, as these individuals have the seniority, authority, and responsibility to define the scope. Early in the process, USGCRP may also consider forming an evaluation team, made up of leadership, staff, key partners, and/or others, to guide the evaluation process and provide diverse perspectives. USGCRP may consider hiring one or more dedicated staff to work directly with the evaluator(s), acting as a liaison between the evaluation activities and the broader organization. In hiring evaluators, USGCRP could consider individuals and teams with experience and skills aligned to the goals of the evaluation, such as familiarity with the program, fit with the organizational culture, and methodological approaches that meet the needs. In summary, there is considerable flexibility in who engages in the work of the evaluation and how they work with each other to accomplish the goals. USGCRP would benefit from thinking carefully about how to engage its capacity to accomplish the evaluation. As such, the committee’s recommendations call on USGCRP as the primary actor responsible for the decisions. However, at times in the text, the committee elaborates on actions and activities that are anticipated to be carried out specifically by evaluators who have expertise in designing and implementing evaluation.

OVERVIEW OF EVALUATION

The American Evaluation Association (AEA, n.d.) defines evaluation as “a systematic process to determine merit, worth, value or significance” (p. 1). The U.S. Centers for Disease Control and Prevention (CDC, 2023a) echoes this emphasis on a systematic approach in its definition of program evaluation,1 which it defines as “a systematic method for collecting, analyzing, and using data to examine the effectiveness and efficiency of programs and, as importantly, to contribute to continuous program improvement” (CDC, 2023a, “What Is Program Evaluation”). In the committee’s discussions with USGCRP and with prior evaluators of the NCA, the importance of being able to use evaluation findings to enhance future products was clearly articulated. As such, the committee believes that this program evaluation framing responds to both the expressed need and the ongoing development of the NCA.

Types of Evaluation

Several types of evaluation are commonly distinguished; the following descriptions are drawn from EvalCommunity (n.d.):

- Process/implementation evaluation determines whether program activities have been implemented as intended.

- Outcome/effectiveness evaluation measures program effects in the target population by assessing the progress in the outcomes or outcome objectives that the program is to achieve.

- Impact evaluation assesses program effectiveness in achieving its ultimate goals.

The distinction between the latter two types of evaluations is whether the focus is on more direct, shorter-term goals of a program (in this case, those goals include the ability of USGCRP products to meet the information and decision needs of key audiences, which it accomplishes through efforts such as creating products that are considered relevant and trustworthy and fostering networks of peers) or more distal goals (in this case, those impacts include mitigation and adaptation). This is further illustrated in the logic model that follows.

The prior NCA evaluation, conducted by Dantzker and colleagues (2016) (see Chapter 2 in this report), was primarily focused on understanding the effectiveness of the writing and review process and analyzing the reflections of participants in the process of creating the third NCA. While the present report does not emphasize process evaluation, the committee anticipates that USGCRP will continue to collect information on an ongoing basis about what is working well and where there are opportunities for improvement in the processes of developing and disseminating the NCA and other products. For example, USGCRP will likely want to gather feedback on meetings and workshops to be able to implement, test, and adapt process improvements quickly to better understand and meet audiences’ and participants’ needs (Keith et al., 2017).

The evaluation this committee is suggesting focuses on a set of key audiences and how well the NCA and USGCRP products meet those audiences’ decision and informational needs. For this purpose, an outcome evaluation is appropriate because it will help determine the extent to which the program has the intended effect on prioritized audiences, which is consistent with the committee’s Statement of Task.

___________________

1 The CDC (2023a) defines program very broadly, encompassing “any set of related activities undertaken to achieve an intended outcome” (“What Is Program Evaluation”). In this chapter, the committee uses the term program to denote the development and dissemination of the NCA and other USGCRP products.

An impact evaluation would speak to the ultimate impact of the NCA and related products. These impacts include mitigating greenhouse gas emissions, increasing adaptive capacity, and adapting to the impacts of climate change. While an impact evaluation also provides valuable information, measuring such impacts is outside the committee’s Statement of Task and is not addressed here. Furthermore, an impact evaluation documenting the impact of the NCA in the complex and multifaceted context of climate change adaptation and mitigation would be challenging (Joseph et al., 2023). Instead, the goal of an outcome evaluation would be to investigate whether USGCRP products are having the intended outcomes of enabling key audiences to make informed decisions. As such, the evaluation would demonstrate a key portion of the theoretical pathway toward achieving those long-term impacts. In that way, it builds understanding of how USGCRP’s work contributes to the ultimate policy and societal impacts of adaptation and mitigation.

Although the evaluation approach described in this report is primarily an outcome evaluation, it may include elements of other evaluation types. For example, when the evaluators collect information from those individuals and organizations that participated in the development of the NCA, they will inevitably gather valuable insights on the effectiveness of the process. Similarly, the committee theorizes that the NCA will have a long-term impact on increasing adaptive capacity. When exploring how audiences use the information in the NCA, the evaluation may reveal improvements in those individuals’ or organizations’ adaptive capacity. This blurriness between boundaries of different evaluation types is to be expected and is desirable from the perspective of supporting USGCRP’s goal of using the data to improve future outcomes.

Another important note is that throughout the course of this report, the committee refers to evaluation in the singular. However, this guidance might be applied to multiple evaluations if USGCRP chooses to evaluate different products separately or chooses a continuous process of evaluation and improvement. It is also possible (as discussed further in Chapter 7) that the evaluation may be divided into phases. The approach described here could, therefore, be applied to a single overarching outcome evaluation or multiple evaluations of narrower scope.

Frameworks for Evaluation

The committee reviewed several evaluation frameworks that can be used to design an evaluation focused on impact or outcome. These include the CDC (2023a) Framework for Program Evaluation in Public Health, Utilization-Focused Evaluation (Patton, 2003, 2012), Practical Program Evaluation (Chen, 2005), Participatory Evaluation (Cousins and Whitmore, 1998), Contribution Analysis (Mayne, 2012), and Equitable Evaluation (Stern et al., 2019). These frameworks often provide suggested steps for the evaluation process and/or standards for determining whether the evaluation itself is meeting its intended goals. The evaluation team may want to select one particular evaluation framework or use concepts from several to guide its work. This section describes significant components that are common across several evaluation frameworks and can be applied to the evaluation at hand. These components are also highlighted as recommendations for inclusion in the final evaluation.

Describe the program, its goals, and the pathways for achieving those goals.

Evaluators often begin by developing a detailed understanding of the program and its intended effects. Clarifying the program and its key goals will help evaluators establish hypotheses for testing how the program is meeting its objectives. Logic models are one common mechanism of depicting the relationships between the program activities and short-term outcomes and longer-term impacts (Julian, 1997). In the next section, the committee presents a preliminary logic model to demonstrate how this can be used to provide direction to the evaluation.

Design the evaluation with its use, intended users, and impacted parties in mind.

Utilization-Focused Evaluation is particularly relevant here, as that framework “begins with the premise that evaluations should be judged by their utility and actual use; therefore, evaluators should facilitate the evaluation process and design any evaluation with careful consideration of how everything that is done, from beginning to end, will affect use” (Patton, 2013, p. 1). To ensure the usefulness of an evaluation, it is important to consider which entities are most likely to use it. This list will overlap with the intended audiences of the NCA and related products (described in Chapter 5), but it is not necessarily the same. For example, USGCRP itself is likely the highest-priority evaluation user, as it will incorporate lessons from the evaluation into future products. Similarly, the funders of USGCRP (i.e., Congress) may use the evaluation to inform decisions about what aspects of the NCA and similar products are most effective and where it is most appropriate to direct resources. The federal agency partners of USGCRP would likely be considered key evaluation users as well. Identifying and engaging these high-priority users in the evaluation design can increase the likelihood that the finished product will meet their needs. This might include holding workshops with key users to develop or refine the logic model, vetting overarching evaluation questions and data collection instruments, and sharing early findings to provide perspectives on interpretations of results and to determine reporting and dissemination strategies.

In addition to users of the NCA, interested and impacted parties also need to be included in the evaluation design. These include potential users who may never read the NCA but have an interest in how it is used.

As described in Chapter 2, supporting continuous improvement has been a priority for USGCRP. As a result, it is important to ensure that the information gleaned from the evaluation will inform efforts to improve the process and products. For example, when designing data collection instruments, the evaluators might want to gather very granular information about what parts of the NCA were most helpful in meeting audiences’ decision-making needs and why, as well as which were not helpful and why not. This could lead to specific adjustments in future products.

Engage priority USGCRP audiences in implementing the evaluation.

Chapter 5 describes a broad array of audiences for USGCRP products and presents guidance on setting priorities among them. As described in Chapter 6, an evaluation will seek to collect information from a prioritized set of audiences via various data collection efforts (e.g., surveys or focus groups). In addition to collecting information from them, evaluators may seek to engage representatives from those audiences in the design of the logic model, evaluation, and/or interpretation of the results. The extent to which these audiences are engaged in these design and interpretation steps can range greatly (Chouinard et al., 2013), but many frameworks encourage some level of engagement. Additionally, attention will be needed on how to sample among and within audiences for the purposes of data collection. Chapter 5 provides guidance on criteria for selecting audiences to include in the evaluation. The evaluator will also need to determine a sampling approach for selecting within prioritized audiences. Because a representative sample may be difficult to obtain given the breadth of audiences, evaluators may rely on purposive or convenience samples, which do not seek to achieve representativeness but instead ensure that a diversity of voices is heard.
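As an illustration of sampling within prioritized audiences so that each one is heard, the following minimal sketch groups candidate participants by audience and selects a fixed number from each group. The function name, the audience labels, and the seeded draw within each group are assumptions made for illustration; they are not part of the committee's guidance, and an evaluator could substitute any deliberate selection rule for the draw.

```python
import random
from collections import defaultdict


def select_participants(candidates, per_audience, seed=0):
    """Pick up to `per_audience` candidates from every audience group.

    `candidates` is an iterable of (name, audience) pairs. The goal is
    coverage of every audience, not statistical representativeness.
    """
    rng = random.Random(seed)  # seeded so the selection is reproducible
    by_audience = defaultdict(list)
    for name, audience in candidates:
        by_audience[audience].append(name)

    selected = {}
    for audience, names in sorted(by_audience.items()):
        k = min(per_audience, len(names))
        # Placeholder rule: a seeded draw within the group. An evaluator
        # could replace this with a purposive choice.
        selected[audience] = sorted(rng.sample(names, k))
    return selected


# Hypothetical audience labels and candidates, for illustration only.
example_candidates = [
    ("a", "federal agency"),
    ("b", "federal agency"),
    ("c", "federal agency"),
    ("d", "local public health"),
    ("e", "tribal government"),
]
example_selection = select_participants(example_candidates, per_audience=2)
```

Because every audience with at least one candidate appears in the result, small groups are not crowded out by large ones, which is the property the text emphasizes over representativeness.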

Design and implement the evaluation with equity at its center.

Careful consideration will be needed when designing and implementing the evaluation to place equity at its center. The entire process—the team carrying out the evaluation, the audiences included, the evaluation itself, and strategies to communicate and disseminate its findings—all need to be anchored in equity principles. A growing body of literature emphasizes equity in evaluation design, including work focusing on equity as a key dimension in the evaluation itself, as well as broader, more holistic approaches to an equity-centered evaluation (Kallemeyn, 2009; Stern et al., 2019; Thomas, 2020; Whyte, 1989), which would be appropriate to consult.

Research and practice suggest a number of key principles of equitable evaluation, starting with the evaluation team. Evaluator teams are stronger when they include members from diverse backgrounds, perspectives, and lived experiences. This will help reduce biases and ensure that all voices are heard and considered during the evaluation process. Similar considerations should be applied when engaging interested parties and NCA audiences—evaluators should seek to engage a wide range of individuals from an array of backgrounds, locations, and varying levels of access to resources. When designing the evaluation, evaluators should use methods that are sensitive to the values, norms, and beliefs of the communities or audiences being evaluated, and that respect their time and resources (see Chapter 7). For example, principles from the co-production of knowledge (Djenontin and Meadow, 2018; Jagannathan et al., 2020) or action research (Lewin, 1946), such as reflective practice and action-learning cycles (Smith, 2001), may be appropriate. Evaluation approaches tailored to Indigenous peoples, including principles of cultural humility, use of multiple channels of communication, and learning from or including Indigenous strategic evaluators may also be considered (LaFrance et al., 2012; Whyte, 1989, 2017). Finally, best practices in inclusive communication can be used to reflect back to evaluation participants what evaluators heard and learned, and what is being done with evaluation findings.

Use concepts like contribution analysis to reflect the complexity of USGCRP’s products.

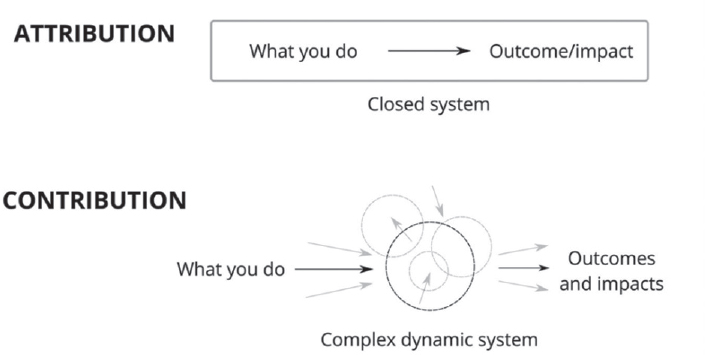

When looking at complex systems, attribution or cause and effect are difficult to detect and measure because multiple factors may

NOTES: Understanding cause and effect is important for evaluation. Attribution is easy to document in a closed system but difficult to detect in a complex dynamic system. A contribution model is a better fit for this environment.

SOURCE: Morton and Cook, 2023. © Reproduced with permission of the Licensor through PLSclear.

produce or help produce a single outcome, and with the factors operating in overlapping ways, it is difficult to isolate the effect of each (see Figure 3-1). Contribution analysis (Mayne, 2008) embraces this complexity and fosters understanding about multilayered change in a complex, dynamic system (Montague, 2012; Morton and Cook, 2023). While contribution analysis is still a relatively new concept, it has been applied to evaluations similar to the one proposed here—for example, when assessing the impact of advocacy on policymaking (Kane et al., 2021) or evaluating “large-scale transformational change processes” (Junge et al., 2020, p. 228). It is a multistep process that entails establishing a cause-and-effect relationship to be studied and its associated theory of change; it then uses an iterative process to gather multiple streams of evidence for use in assessing, challenging, revising, and strengthening that contribution story (Mayne, 2011). Core components of this approach include a participatory process for developing the theory of change, the gathering of different types of evidence, and the consideration of alternative causal theories to determine which one is best supported by the evidence (Wimbush et al., 2012).

Logic Models

While many organizations and programs can articulate their actions and desired global impact, elucidating the mechanisms of that transformation proves to be more challenging. An important first step of an evaluation involves establishing an initial conceptualization of how the project, program, or organization to be evaluated attains its objectives. This conceptualization is typically illustrated through a logic model, which may also be called an outcome map, program theory (Weiss, 1998), theory of action (Patton, 2003), or impact pathway analysis (Morton and Cook, 2023).

A logic model is a process-based figure that describes how change is expected to happen in complex systems, setting out the mechanisms that link an activity to a desired outcome or impact (University of Wisconsin Extension, 2024). By making explicit the activities and outcomes, and how they are connected, the logic model spells out the intentions of the program—how it thinks its actions lead to the outcomes desired (CDC, 2018). Logic models are a powerful tool for fostering a shared understanding of the program by the staff, evaluator, and users of the evaluation. The logic model helps the evaluator generate hypotheses about relationships between people, activities, and outcomes that make a program work and how they should be measured. The evaluation then tests these hypotheses against observations.

There are many ways to structure and visualize a logic model, with much variation in format, level of detail, and method of organization (CDC, 2018). In its most basic form, logic models usually describe inputs, outputs, and outcomes or impacts (University of Wisconsin Extension, 2024). However, a logic model may include many other types of information to reflect the nature of the program, including audiences, activities, outcomes at different scales

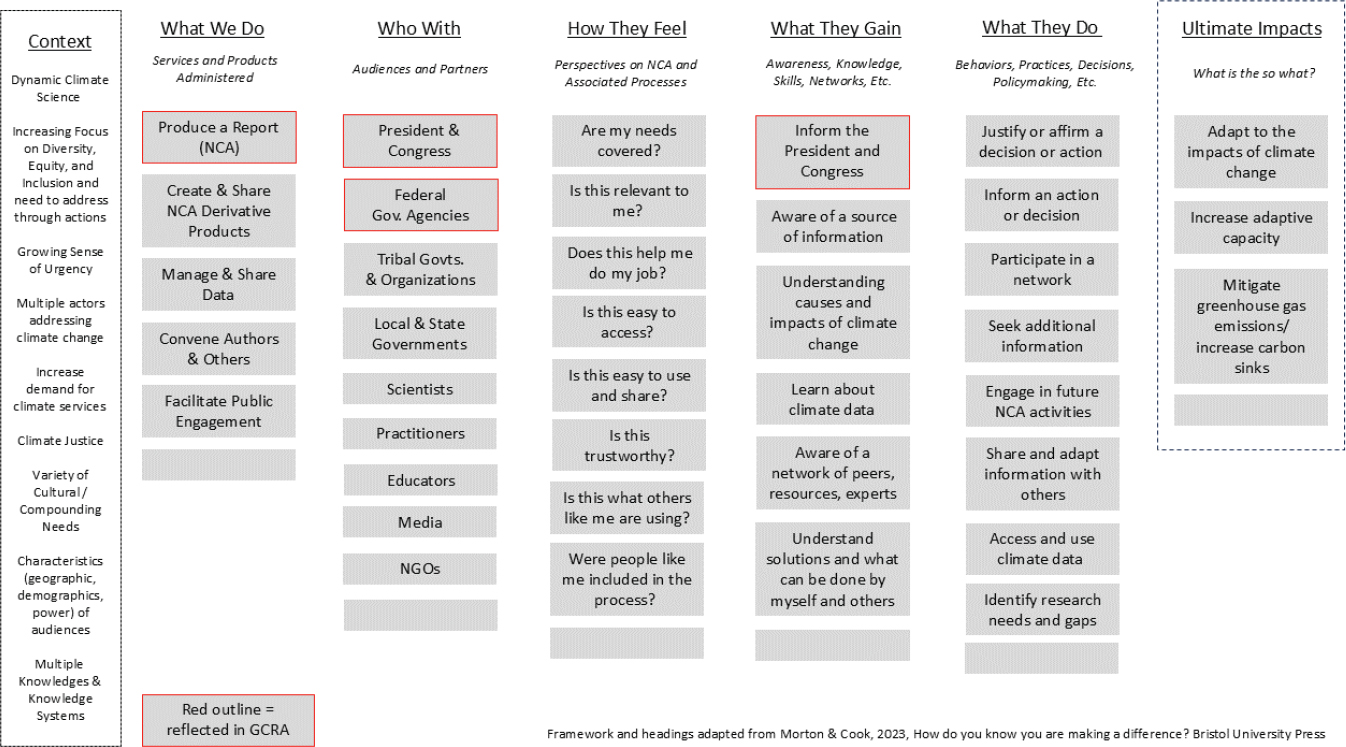

SOURCE: Morton and Cook, 2023. © Reproduced with permission of the Licensor through PLSclear.

(e.g., short term or intermediate), contextual factors, or moderators. Morton and Cook (2023) present an approach to creating a logic model that instead uses short phrases to describe these same basic components (Figure 3-2). If USGCRP chooses to develop a logic model as a foundation for the NCA evaluation, it could consider a variety of approaches to determine which fits best.

To illustrate, this chapter (and report) includes a preliminary logic model for the NCA based on the approach presented by Morton and Cook (2023). Their approach assumes that when people gain new perspectives and knowledge, they act in different ways, leading a program to make a difference. The columns (see Figure 3-2) describe key elements of the program. These include “What We Do,” which describes the activities, services, and products generated by the program. “Who With” describes the groups of people who are engaged in the program in various ways. This section could include more than one group of people, and the groups may vary in terms of level of engagement, but anyone served by the program should be identified here. Chapters 4 and 5 address the challenge of identifying and engaging with the wide span of audiences and participants with which USGCRP works.

Outcomes are the changes resulting from an activity or service provided (Blamey and Mackenzie, 2007; Morton, 2015; Rogers, 2008) and are also included in the logic model. Similar, alternative logic model frameworks exist. The Morton and Cook (2023) approach discerns three types of outcomes: “How They Feel,” “What They Learn & Gain,” and “What They Do Differently.” Although observed change in behavior (“What They Do Differently”) is often what is meant by the outcome of a program, Morton and Cook (2023) posit that subjective outcomes matter as well. Outcomes describing “How They Feel” relate to people’s feelings, beliefs, and perspectives. Outcomes describing “What They Learn & Gain” relate to changes in awareness, knowledge, or skills. These changes often precede or are associated with a change in behavior. “What They Do Differently” describes the behaviors or decisions that people would be expected to take if the program achieves its intended results. Finally, “What Difference Does It Make?” articulates the broader outcomes the program hopes to achieve. This column presents the vision of change that the program is working toward. In other words, if the program activities lead to the expected outcomes, then the program contributes to making a broader difference in the world.

Morton and Cook’s (2023) approach to organizing information in a logic model may be uniquely relevant to the NCA in that it encompasses the complex dynamics between attitudes or perspectives, cognitive or rational processes, and decision-making or behavior. For example, dual-process theories explain how at times experiences, emotions, and heuristics guide decision-making, but at other times people process information slowly, deliberately, and analytically (Kahneman, 2011). In the context of program evaluation, how people feel about the information, service, or product being provided is an important outcome to consider because feelings, such as the credibility and legitimacy of the information (Cash et al., 2003) or trust in the messenger (Besley and Dudo, 2022), precede or are associated with other important outcomes such as attention or use. In addition to feelings about the information, the NCA might prompt feelings about climate change as an issue or it might motivate behavior (Steg, 2023).

Across the breadth of audiences and partners that NCA serves, it can be expected that some will be strongly motivated to attend to this information in great depth. For example, someone working with a climate adaptation science center might read multiple chapters of the report, review the background literature, and think deeply about how to translate the information for a specific set of decision-makers they serve; their attitudinal responses may be less important. In contrast, attitudinal responses to the NCA may be very impactful in determining its use by a local public health official who is just starting to understand the impacts of climate change on human health in their county. Being unfamiliar with climate science or USGCRP, this person may need to feel that the information is trustworthy and useful, before attending to the information in greater depth or sharing it with others.

Although the columns are arranged sequentially in the logic model (see Figure 3-2), they are interrelated, and causality can run in multiple directions. The activities, audiences and partners, and outcomes in a logic model can be connected by specific pathways, as Figure 3-2 illustrates. Pathways specify chains of causation through which a program achieves its outcomes, and they can be organized by audiences, program activities, or impacts (Morton and Cook, 2023). Each portion of the logic model can be used to generate evaluation questions and suggest ways in which the various items within the logic model are interrelated. According to Morton and Cook (2023), the pathways are units of analysis in evaluation and the connections between boxes help define the variables of interest and the types of evidence to be gathered.
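The idea of pathways as chains linking boxes can be made concrete with a small data structure. The sketch below is a hypothetical illustration, not a tool described by Morton and Cook (2023) or the committee: the class and function names are assumptions, the column labels are taken from the headings quoted above, and the example boxes paraphrase outcomes and audiences mentioned in this chapter. Each adjacent pair of boxes in a pathway is treated as one link for which evidence could be gathered.

```python
from dataclasses import dataclass, field

# Column labels follow the Morton and Cook (2023) headings quoted in the text.
COLUMNS = (
    "What We Do",
    "Who With",
    "How They Feel",
    "What They Learn & Gain",
    "What They Do Differently",
    "What Difference Does It Make?",
)


@dataclass
class LogicModel:
    # Maps each column label to the short phrases (boxes) placed under it.
    boxes: dict = field(default_factory=lambda: {c: [] for c in COLUMNS})
    # Each pathway is an ordered list of (column, phrase) pairs. Column order
    # is not enforced, since causality can run in multiple directions.
    pathways: list = field(default_factory=list)

    def add_box(self, column, phrase):
        if column not in self.boxes:
            raise ValueError(f"unknown column: {column}")
        self.boxes[column].append(phrase)

    def add_pathway(self, steps):
        steps = list(steps)
        for column, phrase in steps:
            if phrase not in self.boxes.get(column, []):
                raise ValueError(f"box not in model: {column} / {phrase}")
        self.pathways.append(steps)

    def hypotheses(self):
        """Return one (source, target) link per adjacent pair in each pathway."""
        links = []
        for path in self.pathways:
            links.extend(zip(path, path[1:]))
        return links


def build_example():
    """Build a one-pathway model; the entries are illustrative only."""
    model = LogicModel()
    steps = [
        ("What We Do", "NCA"),
        ("Who With", "local public health official"),
        ("How They Feel", "information is trustworthy"),
        ("What They Learn & Gain", "awareness of the assessment"),
        ("What They Do Differently", "inform a new decision"),
    ]
    for column, phrase in steps:
        model.add_box(column, phrase)
    model.add_pathway(steps)
    return model
```

Calling `build_example().hypotheses()` yields four links, one per connection between adjacent boxes; an evaluator could attach evaluation questions or evidence sources to each link.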

Although logic models are useful for planning, implementing, and evaluating a program, their development and maintenance pose several challenges. A logic model is by nature an oversimplification and may not capture the full range of activities, audiences, and outcomes associated with a program. Furthermore, logic models are based on assumptions; if those assumptions are incorrect or biased, the model may be inaccurate. The process of developing a logic model is time-consuming and usually requires significant input from key partners and audiences. Once created, a logic model ought to be a living document and regularly assessed and updated. Updates are important because the logic model constitutes a theory of change shared by the evaluator and the audiences of the program. An outdated logic model can accordingly become a liability that limits or misinforms the program’s response to evolving situations and conditions.

In summary, elucidating the mechanisms by which a project, program, or organization contributes to a desired impact or change in the world is a complicated yet necessary endeavor for conducting an evaluation. A logic model provides a conceptual framework for fostering a shared understanding of how activities link to outcomes. There are many ways to go about developing a logic model; one approach is described here. The model can be developed at the project, program, or organization scale and can be nested to show how all the work of an organization contributes to an anticipated impact. By leveraging logic models as a tool, USGCRP can articulate its intentions and explain the connections that it believes lead to program effectiveness. The logic model may continue to evolve as more nodes are detected and the relationships between nodes are better understood.

Illustrative Logic Model Applied to the NCA

This section presents an illustrative logic model for the NCA, using the approach discussed above. However, because the committee’s knowledge is incomplete, the logic model presented here is incomplete. A logic model is usually generated through extensive discussion and deliberation, in which the people who shape and define the program describe who engages with the activities and what they feel, think, or do differently because of their engagement. While the committee offers an example here to aid in the framing of the evaluation recommendations, the report recommends that USGCRP, with evaluators it selects, engage in the process of either refining the logic model presented here or developing a new one that more fully reflects USGCRP’s understanding of how its work contributes to informed decision-making.

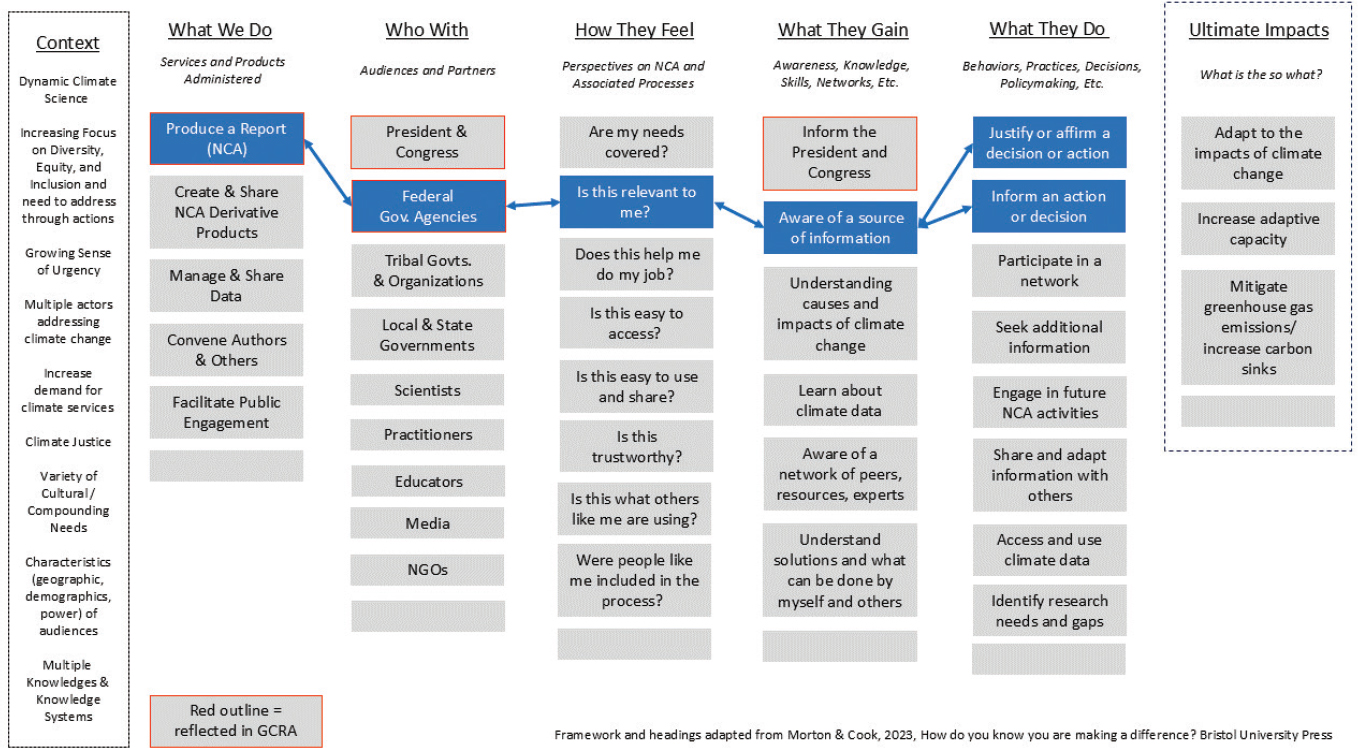

As shown in Figure 3-3, the illustrative logic model for USGCRP is organized into columns with labels adapted from those in Figure 3-2. “What We Do” includes some of the services, products, and activities administered by USGCRP, including the NCA, climate data, and public engagement activities. This logic model also includes expected outcomes. “How They Feel” includes some of the feelings about the NCA that might be related to other outcomes, such as whether people feel it meets their needs or helps them with their job or whether the information is trustworthy. “What They Gain” includes primarily cognitive outcomes, such as awareness of the assessment, knowledge about climate change, or familiarity with sources of climate data. Awareness is particularly key, as it mediates many other outcomes. Awareness of information and knowledge of climate changes and impacts are important but insufficient to prompt action in response.

The next columns in the logic model provide critical insights into conditions and prompts for decisions and actions. “What They Do” includes some of the actions NCA users might take. This includes justifying an existing decision, informing a new decision, or sharing NCA or climate change information with others.

The committee modified the Morton and Cook (2023) model by adding a “Context” section to the logic model, to make transparent the dynamic social environment and background conditions that may affect everything presented in the logic model. (See Chapter 2 for additional discussion of historical and contextual factors.) Contextual factors are important because they may independently cause or alter some of the effects of the NCA (e.g., by increasing or decreasing its perceived credibility, by altering the salience and usability of federal research as a source of information, or by expanding the size and composition of the potential audiences interested in climate change and its impacts on different geographies and populations).

As previously discussed, a logic model also usually includes a description of the broader societal impact, called “Ultimate Impact” in Figure 3-3. In this illustrative logic model, the end goal that the NCA supports is to inform decisions on adaptation to the impacts of climate change, increasing adaptive capacity, and mitigating greenhouse gas emissions. These ultimate impacts unfold over a long period of time, however, in a complex and rapidly changing setting. In a practical evaluation, as called for in the Statement of Task, observing such impacts and relating them to a specific NCA or product is not feasible.

The committee recommends that USGCRP develop a logic model as outlined above (Recommendation 3-3). We highlight several considerations in developing this logic model:

- Map out the activities and outcomes: Determine what activities USGCRP carries out in order to produce the outcomes. Define the different types of outcomes and sort them into categories (e.g., “How They Feel”).

- Define the ultimate impact: Identify the broad impact to which the program contributes. What are the broad and long-term effects that USGCRP and the NCA have on people and society?

- Develop connections: Identify high-priority pathways (connections linking boxes). These correspond to the intentions of the Program in creating and disseminating the NCA. These pathways are hypotheses to be tested in an evaluation.

- Consider the context and assumptions: Define the contextual factors that are important to program implementation and the outcomes to which implementation contributes.

- Utilize participatory approaches: Throughout its history, USGCRP has involved key participants in the preparation of the NCA, both in federal agencies and beyond. It is therefore appropriate to involve some of these participants in developing the logic model to ensure that it reflects important perspectives and priorities.

- Choose a visual representation: Use a visual representation that reflects the logic model, so that it is easier to understand.

- Iterate and revise: Use the logic model to articulate causal assumptions embodied in USGCRP’s intentions. The logic model is a tool for creating a common understanding of the ends and means pursued in the NCA. Thus the logic model needs to be modified as the evaluator prompts USGCRP to reconsider its assumptions in light of the findings of the early stages of the evaluation.
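The considerations above can be made concrete with a small data structure. The following is a minimal sketch, not the committee's model: the column labels come from the text, but the `Box` class, the `pathway_links` helper, and the specific boxes chosen are hypothetical and do not reproduce the contents of Figure 3-3. It shows how one pathway decomposes into individual links, each of which is a hypothesis an evaluation could test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """One box in a logic model: a column label plus the box's own label."""
    column: str
    label: str


def pathway_links(pathway):
    """Return consecutive (source, target) pairs along a pathway.

    Each pair is one causal assumption that the evaluation can examine.
    """
    return list(zip(pathway, pathway[1:]))


# Hypothetical pathway assembled from examples mentioned in the text.
pathway = [
    Box("What We Do", "National Climate Assessment"),
    Box("What They Gain", "Awareness of the assessment"),
    Box("How They Feel", "Information is trustworthy"),
    Box("What They Do", "Inform a new decision"),
]

for src, dst in pathway_links(pathway):
    print(f"Hypothesis: '{src.label}' ({src.column}) "
          f"contributes to '{dst.label}' ({dst.column})")
```

Keeping the links explicit makes revision straightforward: if early findings contradict one link, only that pair needs to be reconsidered rather than the whole model.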

NOTE: GCRA = Global Change Research Act of 1990; NGO = nongovernmental organization.

SOURCE: Generated by the committee, adapted from Morton and Cook, 2023.

Networked Nature of NCA Use

The committee calls specific attention to the outcome labeled “Share and Adapt Information with Others” because USGCRP provides information that is often adapted and shared with others through people and organizations that span boundaries (Goodrich et al., 2020). Many people likely receive extracts, modifications, or customizations of the information from the NCA through other sources rather than from the NCA directly. As described in Chapter 2, USGCRP organized the NCANet in 2012, a formal network that grew to more than 225 organizations collaborating to connect their communities to the NCA process and products. Support for NCANet ended, but outreach to audiences continues. Some audiences who have received climate science information may not know that a National Climate Assessment exists. To these audiences, what matters is that they are getting relevant information from a trusted source—a leader of their network. Ideally, this iterative diffusion process leads to tailored information more amenable to specific uses (Kalafatis et al., 2015), with some organizations even forming strategic partnerships with others in these networks to scale up their ability to translate and engage effectively with information users (Bidwell et al., 2013; Lemos et al., 2014). However, scientific messages can also become distorted as they spread through networks, potentially undermining scientific credibility and resulting in unintended consequences (West and Bergstrom, 2021).

Information diffuses from the NCA within a structure: it spreads via existing networks, both formal and informal. Over time, these networks have become an increasingly complex and dynamic web of interconnected organizations and actors operating from the global to local levels across many sectors. In this sense, dissemination of the NCA and its products can promote social learning throughout these networks. Social learning through networks can in turn affect beliefs and behaviors, and in some cases contribute to collaboration in solving social problems (Bener et al., 2016; Henry, 2020).

Obtaining NCA information through third parties may also result in people using the NCA without knowing the original source. It is important to account for such indirect contacts in order to avoid underestimating the impact of the NCA. Chapter 4 elaborates on this “network of networks” idea, explaining how network analysis can help contextualize this transmission of information. Because of the networked nature of NCA information, an evaluation of use should trace these flows of information, answering questions such as which nodes have the largest role in transmitting information, which are considered most trustworthy, and how the information might be modified or customized in the process.
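As an illustration of the kind of question network analysis can address, the sketch below uses a small, entirely hypothetical directed network in which an edge from A to B means that A passes NCA-derived information to B. The node names, the `reach` and `indirect_only` helpers, and the use of downstream reach as a stand-in for "largest role in transmitting information" are assumptions made for this example; Chapter 4 may describe different measures.

```python
from collections import deque

# Hypothetical network: an edge A -> B means A passes NCA-derived
# information to B. All node names are illustrative only.
edges = {
    "NCA": ["Federal agency", "Regional network lead", "News outlet"],
    "Federal agency": ["State planner"],
    "Regional network lead": ["City planner", "Local NGO", "State planner"],
    "News outlet": ["General public"],
    "Local NGO": ["Community group"],
}


def reach(graph, start):
    """Return every node that can receive information originating at `start`."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def indirect_only(graph, source):
    """Nodes reached from `source` only through one or more intermediaries."""
    return reach(graph, source) - set(graph.get(source, []))


# Downstream reach of each intermediary, used here as a simple proxy
# for its role in transmitting information.
relay_counts = {n: len(reach(edges, n)) for n in edges if n != "NCA"}
top_relay = max(relay_counts, key=relay_counts.get)

print("Largest downstream reach:", top_relay)
print("Reached only indirectly:", sorted(indirect_only(edges, "NCA")))
```

In this toy network, counting only direct recipients would miss the nodes returned by `indirect_only`, which is the underestimation risk the text describes.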

Defining Audiences, Participants, and Pathways

A key component of a logic model is defining and discussing who the project, program, or organization serves (Bamzai-Dodson et al., 2021). In addition to identifying groups of people the program involves or serves, it is also important to identify those who are not currently being served but who could be reached if specific efforts were made.

Any evaluation will reflect the worldviews or cultures of those conducting it; no evaluation is free of these lenses. The American Evaluation Association (AEA, 2011) published guidance on how to ensure a “culturally competent” evaluation; some of these suggestions also address larger equity issues facing any evaluation. The AEA guidance defines culturally important factors as including, but not limited to, “race/ethnicity, religion, social class, language, disability, sexual orientation, age, and gender,” as well as consideration of “[c]ontextual dimensions such as geographic region and socioeconomic circumstances” (AEA, 2011, “What is culture?”). A course correction may be necessary if the assessment emphasizes culturally significant factors and the emerging meanings of one group over those of others; an additional concern is that the data produced may not be valid internally and externally for the larger population under consideration (AEA, 2011). Here, the phrase internal and external validity broadly refers to whether researchers are measuring what they set out to measure (internal) and whether the results are applicable to broader populations or contexts (external) (Crano et al., 2014). Note that some scholars apply issues of internal and external validity to qualitative designs as well (Thomann and Maggetti, 2020). Fundamentally, questions about validity ask whether the research is both meaningful and trustworthy. If a culturally significant factor is prioritized for use without being a specific goal of the research design, the data may suffer from reduced validity. For example, if the resulting data were intended to measure general usage, and instead all users were from a specific socioeconomic class or geographic area, then the results might not be valid with regard to overall usage; conversely, findings that are valid in general may not be valid for some subgroups. Minorities, who may be disproportionately affected by climate change (or by melioration and mitigation activities), may have needs that are not reflected in the overall findings.

The AEA guidance document specifies that the assessment should strive for cultural competence, meaning that the evaluators should be self-reflexive about their own identity(ies) and have the capacity to respect and comprehend other cultural perspectives (AEA, 2011). AEA offers cultural competency practices including (1) recognize the complexity of cultural identity; (2) aim to use methods of knowing and measuring that take into account how different cultures define concepts in ways that are salient to them and may not necessarily align with classical ways of knowing; and (3) be mindful of the evaluators’ limitations and worldviews and how these may affect the evaluation (AEA, 2011).

In Figure 3-4, the audiences mandated by the Global Change Research Act² (Congress and the president) are identified in red. This is one pathway that can be investigated in an evaluation: does the NCA inform Congress and the president? Figure 3-4 also highlights graphically another, more detailed pathway that depicts how the NCA interacts with federal agencies. Chapters 5 and 6 discuss this and other pathways, in order to illustrate the advice the committee provides on how to design an evaluation. Each pathway identified for study is central to the design of an evaluation because it specifies a hypothesis describing how the NCA contributes to impacts on specific participants and audiences. The evaluation will include gathering information to explore such hypotheses.

Consider the pathway marked in blue in Figure 3-4. When the NCA is released to federal agencies, how does the report affect their feelings, knowledge, skills, and actions? A necessary precondition is that the audience be aware of the NCA, leading to questions about awareness of the NCA within agencies. Then one can break down categories of outcomes (feelings, knowledge, and actions) into more specific areas of relevance (e.g., which specific parts of the NCA were relevant to the person’s needs), which ultimately can be converted into questions appearing on a data collection instrument (e.g., interview protocol, survey questionnaire), producing measures that can be analyzed.

Identifying pathways important to USGCRP’s goals also facilitates the integration of relevant theories and existing measures. For example, ideas related to the use of science in decision-making can inform the operationalization of concepts and the way they are measured. Whiteman (1985) theorized that there are two types of use of science in policy: (1) strategic use, which uses science to defend or support an already established policy, and (2) substantive use, which uses science to inform a policy decision. The connections between each portion of the logic model can be used to help determine what kinds of interrelationships are likely among items, setting up potential cross-tabulations, regression analyses, or other analytic tools. The next section discusses how the logic model can guide the formulation of overarching evaluation questions, which can then be elaborated further to investigate specific areas of interest.
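To show what a cross-tabulation derived from the logic model might look like, the following sketch tabulates awareness of the NCA against the type of use a respondent reports, using the strategic and substantive categories from Whiteman (1985). The records are invented for illustration, and the `crosstab` and `row_share` helpers are hypothetical names, not part of any instrument described in this report.

```python
from collections import Counter

# Invented survey records: (aware of the NCA?, type of use reported).
responses = [
    ("aware", "substantive"),
    ("aware", "strategic"),
    ("aware", "substantive"),
    ("aware", "none"),
    ("unaware", "none"),
    ("unaware", "none"),
    ("unaware", "substantive"),  # e.g., NCA-derived information via a third party
]


def crosstab(records):
    """Count records for each (row category, column category) combination."""
    return Counter(records)


def row_share(table, row, col):
    """Share of respondents in `row` who fall in `col` (0.0 for an empty row)."""
    row_total = sum(n for (r, _), n in table.items() if r == row)
    return table[(row, col)] / row_total if row_total else 0.0


table = crosstab(responses)
for row in ("aware", "unaware"):
    for col in ("strategic", "substantive", "none"):
        print(f"{row:8s} {col:12s} n={table[(row, col)]} "
              f"share={row_share(table, row, col):.2f}")
```

A real analysis would need a properly drawn sample and appropriate statistical tests; the point here is only that each link in the logic model suggests which pairs of measures to tabulate against each other.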

Illustrative Evaluation Questions: What Will Be Learned from the Evaluation?

An important part of planning an evaluation entails evaluators engaging with the intended users of the evaluation to determine what they hope to learn from the evaluation. Working with the users will help produce a set of overarching evaluation questions that address the core of the information needed about the program (EvalCommunity, 2024). Notably, these are not the specific questions that would be included in a survey or data collection instrument; instead, they are the broad concepts that the evaluation will address. Six overarching evaluation questions are proposed below, as well as subquestions that further illustrate the concepts each overarching question seeks to address (Table 3-1). Even these subquestions would not necessarily be asked in data collection efforts; surveys or interviews will typically ask even more granular questions,³ such as: “Can you provide examples of specific ways in which NCA products have helped you in your work? For each example, what specific parts were most helpful and why?”

___________________

2 Global Change Research Act of 1990, 15 U.S.C. Chapter 56, Public Law 101-606, 104 Stat. 3096-3104.

3 To illustrate the difference between the questions used in data collection and the overarching evaluation questions/subquestions, consider Rodriguez-Franco and Haan (2015). That study used a survey with 64 multiple-choice questions as the primary data collection instrument. However, the overarching evaluation questions might be summarized as: What are Forest Service resource managers’ perceptions of climate change? What are their science delivery needs related to climate change and its natural-resources impacts?

NOTES: A potential user pathway is mapped to explain how a person within a federal agency may use the National Climate Assessment (NCA). This individual would need to be aware of the NCA and its relevance before the pathway is followed in an action or decision. Pathways in a logic model help to define questions that could be asked and evidence to be gathered in an evaluation. For example, individuals in a given position or agency could be asked questions in a survey related to the relevance of NCA, its specific chapters, or derivative products. GCRA = Global Change Research Act of 1990; NGO = nongovernmental organization.

SOURCE: Generated by the committee.

TABLE 3-1 Preliminary Overarching Evaluation Questions for the National Climate Assessment (NCA)

| Evaluation Questions and Subquestions |
|---|

Other granular questions of this kind might include: “Which NCA products or parts of products were not helpful? What was the result of your use of the NCA products?” An example of a data collection instrument of this kind is in Rodriguez-Franco and Haan (2015).

Note that each of the six overarching questions has a different purpose. In order, these are awareness, information needs, decision needs, products and processes, contextual factors, and the network of networks. These topics have some overlap; for example, if a product is poorly organized (Question 4), that may affect the degree to which information needs and decision needs are met. Still, each question plays an important role. For example, if the NCA meets people’s information needs but not their decision needs, then it is not fully meeting its purpose.

Working with evaluation users to develop overarching questions (and potentially priorities among them) is important because it places the focus on what those evaluation users hope to learn. This, in turn, is a key step in determining which methods are most appropriate to apply to which question. For example, if evaluation users are interested in learning the extent to which the NCA or other products are being used, then conducting broader surveys or leveraging existing data (e.g., citation analysis) may be more appropriate. However, if evaluation users are interested in gaining an in-depth understanding of how audiences use products, a series of interviews or focus groups, culminating in a small number of case studies, might be more appropriate. Because the overarching evaluation questions here are best answered collectively using a combination of methods, Chapter 6 offers guidance on selecting an effective blend of methodologies.

The questions in Table 3-1 are examples of the types of overarching evaluation questions that might guide an evaluation of the NCA. They are closely aligned with the logic model presented in Figure 3-3. For example, some of the questions below reference “contextual factors”—those refer specifically to the items highlighted in the far-left column of the logic model. Similarly, other questions refer to how users feel, what they gain, and what they do—those refer to Columns 4–6 of the logic model, respectively.

Because of the way the evaluation questions are structured, it is necessary to refer to the logic model when interpreting them. For example, Question 3 speaks of the effects of USGCRP products on the full range of actions that are described in “What They Do” (see Figure 3-3). As such, this question encompasses a wide array of potential actions, including making decisions about policy, additional research to conduct, and whether to participate in further USGCRP activities or networks. Because the Statement of Task did not provide insights about what types of decisions (e.g., decisions about how to reduce emissions or about adaptation or risk management strategies) or types of information (e.g., about tipping points or about the distribution of extreme risks) are of greatest interest, the evaluation questions do not include that level of specificity. The committee anticipates that USGCRP will often include greater specificity in the questionnaires or interview protocols. Some topics could be listed as individual items within a “check all that apply” type of question; others could be examined in greater depth. Because of this alignment between the logic model and the questions below, the overarching questions will need to be revised if the evaluators propose a logic model with a different structure. In addition, as discussed above, it is imperative that the evaluators work with the priority users of the NCA to determine what information the evaluation must yield to be useful. Therefore, the final overarching questions will differ from those proposed below because they will be informed through that discovery process.

The preliminary overarching evaluation questions are based on the logic model, the Statement of Task, and the discussions the committee had during its open meeting. These questions cover the same themes as those in the Statement of Task, but they have been reorganized and reframed, in part to reflect the logic model. Table C-1 (Appendix C) provides a crosswalk between the questions below and those in the Statement of Task, to demonstrate that all the concepts in the Statement of Task are incorporated.

The committee determined that to best understand how the NCA meets the needs of priority audiences, it is important to assess how information flows through the sprawling networks connected to USGCRP. A changing climate affects everyone, but the NCA may reach only a small fraction of people in the United States. Chapter 4 introduces network analysis, a branch of applied mathematics that studies nodes, such as organizations, and the connections that link them, such as the transmission of information. USGCRP is a central node in the interagency research network producing reliable climate science. Chapters 4 and 6 discuss how network concepts can be used to design an evaluation so that it illuminates how information in the NCA reaches those making decisions. And, as discussed in Chapter 2, USGCRP has at times employed a formal network of collaborating organizations, each of which is a node in its own network, to engage audiences and disseminate the information in the NCA. In these and other ways, USGCRP interacts with a network of networks in the transmission of climate science.

What Will Be Done with Evaluation Findings?

As emphasized throughout this chapter, a core principle of utilization-focused evaluation is ensuring that evaluation findings will prove useful. As such, it is critical to consider how individuals and organizations intend to use the evaluation from the beginning of the process, including in the development of the overarching evaluation questions and logic model and in the prioritization of evaluation participants (as described in Chapter 5). Chapter 2 suggests two modes through which the evaluation findings might be applied—to support continuous assessment and improvement, and to illuminate strategic questions raised by the broadening of the NCA’s scope over time.

With regard to continuous improvement, there are many ways in which findings from the evaluation could be translated into recommendations for enhancing or refining USGCRP processes and products. For example, overarching evaluation Question 1 will yield information about how individuals become aware of NCA products and gaps in awareness. As USGCRP learns about the relative effectiveness of different dissemination mechanisms, it can shift resources from less-effective to more-effective tools. Similarly, by determining which parts of the network of networks are most effective in disseminating USGCRP products (Question 6), lessons can be learned from those entities that can be shared with others. This ability to apply findings from the evaluation on an ongoing basis will be important, given that the sixth NCA (NCA6) is already under development. Subquestions 3b and 4b probe for details about what aspects of the fifth NCA were most useful to audiences. Understanding those elements could directly affect planning for NCA6. In addition, as described in Chapter 6, the evaluation may help establish some practices of ongoing data monitoring (e.g., by setting up targets and systems to track media references) that could be employed by USGCRP on a continuous basis to inform changes in dissemination strategies.

A second mode of learning from the evaluation is focused on broader decisions about the direction of USGCRP and its products. Within this context, it is important to consider that there may be a distinction between the types of information that could be learned from an evaluation and how that information may be used. For example, as part of its work in encouraging researchers and policymakers to collaborate in producing the NCA, USGCRP has effectively broadened the scope of the NCA, making it not only a report for Congress and the president but also an arena for a broader community of researchers, decision-makers, and information users (see Chapter 2). The evaluation can provide information on how well that broadened scope has worked in practice (e.g., Question 4 will provide insights on what specifically makes USGCRP products effective to a range of audiences, while Question 6 will further clarify the mechanisms of usefulness). In addition, the evaluators could include questions in their data collection instruments to ask audiences their perspectives about USGCRP’s scope and priorities (e.g., Questions 2c and 3c suggest that surveys or interviews might include questions about audiences’ expectations of the NCA and USGCRP products).

Ultimately, however, the use of that information—in other words, determinations about whether that broadened scope is appropriate—will be in the hands of decision-makers at USGCRP and its sponsors. They may consider this information, along with values, resources, and constraints, when determining priorities and scope. When determining the overall evaluation questions, the evaluation team considers whether the knowledge that would be gleaned in answering them would provide sufficient information to inform decision-makers.

This discussion underscores the importance of establishing a logic model that comports with evaluation users’ understanding of what USGCRP products are meant to achieve. If the ultimate impacts and the intermediary outcomes are not those with which decision-makers are most concerned, the evaluation will not provide usable information to meet evaluation users’ goals. Similarly, the prioritization of USGCRP audiences and partners, which in turn affects who will participate in the evaluation (as described in Chapter 5), is key to ensuring that the evaluation will learn from those audiences who are most likely to influence the outcomes that matter.

Ultimately, an evaluation requires many applications of judgment; as such, it reflects an organization’s culture and values. Clearly defining an ultimate impact and intermediate outcomes that demonstrate progress toward that impact involves making value judgments about what is important to an organization, program, or project, and what is not. The types of indicators selected, data gathered, and how those data are interpreted in an evaluation can also be colored by the values of the organization. For example, organizations with a propensity for quantitative data may choose an evaluation design that looks very different from that of an organization that favors more interpretive, qualitative types of data (Morton and Cook, 2023). Selecting which audiences will be a focus of the evaluation, and how they are engaged in the evaluation process, also reflects values. For example, the evaluation design could prioritize mutual benefit with communities currently underserved by the NCA, which could reflect USGCRP’s values regarding diversity, equity, and inclusion. Reflexively considering values throughout the evaluation design can increase the likelihood that the evaluation leads to more relevant, meaningful, and actionable insights.

To increase the utility of the evaluation, the design process should begin by considering what information would best help evaluation users make decisions, on both the more process-oriented scale and the larger strategic one. Making sure the overarching evaluation questions, logic model, and the evaluation participants who are prioritized align with evaluation users’ priority interests will help the evaluators establish the right methods to yield valuable insights.

CONCLUSIONS AND RECOMMENDATIONS

Conclusion 3-1: An outcome evaluation is an appropriate approach to addressing USGCRP’s interest in understanding the extent to which its process and products have the intended effect on prioritized audiences.

Conclusion 3-2: Adapting well-established frameworks—such as utilization-focused, participatory, and equitable evaluation—from the beginning of the evaluation can provide standards and guidance to ensure that the evaluation will meet the needs of its intended users and incorporate equity considerations appropriately.

Conclusion 3-3: A logic model provides a useful conceptual framework for fostering a shared understanding of how activities are linked to outcomes.

Recommendation 3-1: The leadership of the U.S. Global Change Research Program should engage from the start in defining the evaluation scope and should ensure that the leadership perspective, as well as the necessary evaluation expertise, is incorporated throughout the design and implementation of the evaluation.

Recommendation 3-2: The U.S. Global Change Research Program should conduct an evaluation that can illuminate the outcomes of highest importance to the Program, including how the National Climate Assessment and related products inform significant decisions. The design and implementation of the evaluation should aim to be equitable and inclusive in both process and result, drawing upon the emerging knowledge of how to take diversity and justice into account.

Recommendation 3-3: The U.S. Global Change Research Program should develop a logic model to describe how its products, including the National Climate Assessment, are hypothesized to achieve their intended outcomes.

Recommendation 3-4: The U.S. Global Change Research Program should use a logic model developed for the evaluation to generate a set of overarching evaluation questions and should consult with partners and selected evaluation users to ascertain whether answering those evaluation questions will meet evaluation users’ needs.