In the Beat of a Heart: Life, Energy, and the Unity of Nature (2006)

Chapter: 5 Networking

THERE WAS A SAYING going around the Los Alamos National Laboratory in the early 1990s: Biology would be to science in the twenty-first century what physics had been in the twentieth. The researchers at the lab, a physics powerhouse where the atomic bomb was invented, saw the gathering momentum of fields such as genetic engineering and neuroscience and wondered whether it was biology’s turn to change the world the way quantum theory and relativity had.

If biology was truly about to supersede physics as the cradle of scientific revolutions, Geoffrey West had reason to be glum. West had been working at Los Alamos since 1976 and had become head of the lab’s particle physics group. But there were signs that the tide was turning against his discipline. At the end of 1993, the U.S. Congress pulled the financial plug on the Superconducting Supercollider, a giant particle accelerator intended to be built near Dallas, Texas. The public, it seemed, was unwilling to spend $11 billion to probe the building blocks of matter. There would be no new accelerators, and so probably no major developments in particle physics, for more than a decade.

The talk of biology’s coming preeminence was probably right, thought West. But the idea that this meant physics would go out of fashion struck him as ridiculous. Like D’Arcy Thompson, he saw mathematical descriptions as the root of any true science, and most areas of biology were still lacking in such theories. To West’s mind this gave physicists an entry into biology. He began thinking about what sort of biology problems he might tackle.

West had recently turned 50, and his mind had drifted to thoughts of mortality. He decided to look into what determined life span. On the one hand, longevity is predictable. Insurance firms and actuaries can calculate life expectancy based on diet, occupation, income, and so on. Half an hour’s research would have given him a good idea of how much longer he had left. On the other hand, although biologists have some ideas about the mechanisms of aging, no one could explain why a mouse should live for a year or two and a man, built from much the same molecules, with much the same genes and biology, should live for a century. Explanations based in genetics or physiology struck him as superficial. “If biology is to be a real science, you ought to have a theory that can predict why we live 100 years,” says West. “That’s real science, not some qualitative nonsense about gene expression. That’s not an explanation of anything.”

He began to teach himself biology in the evenings and on weekends, picking up bits and pieces of knowledge wherever he could. It was, he says, “like learning about sex on the street.” The library at Los Alamos is devoted almost entirely to physics, so he resorted to reading his children’s high school biology textbooks. He discovered that larger species live longer and that, across species, life span increases in proportion to the 1/4 power of body mass. He also discovered that many researchers had linked life span to metabolic rate, believing that animals that burn energy relatively quickly live shorter lives than the slow burners. But no one, he found, could explain why a species’ average life span was as long as it was.
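To make the quarter-power rule concrete, here is a minimal Python sketch. It assumes an idealized exponent of exactly 1/4 and ignores the scatter in real data; `lifespan_ratio` is a hypothetical helper, not taken from any published analysis. What it shows is how gently a quarter power grows: a 16-fold increase in mass doubles life span, and a 10,000-fold increase multiplies it by only about 10.

```python
def lifespan_ratio(mass_ratio, exponent=0.25):
    """How many times longer a species lives if it is `mass_ratio` times heavier,
    assuming an idealized power law: life span ∝ mass**exponent."""
    return mass_ratio ** exponent


for ratio in (16, 10_000, 1_000_000):
    print(f"{ratio:>9,}x heavier -> {lifespan_ratio(ratio):.1f}x longer-lived")
```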

West, too, has a background in scaling. Like living things, physical systems behave differently depending on their size, and which laws of physics apply is a matter of scale. Quantum theory is most useful for describing what happens inside the atom. Relativity comes into its own when considering interstellar distances and velocities close to light speed. Classical Newtonian physics is good for everything in between, from colliding billiard balls to satellite trajectories. And since the Big Bang, the Universe has been growing. “I viewed the unification of forces, and the origin of the Universe, as a scaling problem,” West says. “Since the Universe defines space and time, and it is continually changing its size, what space and time are becomes a scaling problem.”

His fumbling through the biology literature convinced West that, to understand life span, he needed to understand metabolic rate. To understand metabolic rate, he needed to understand biological scaling. And to understand scaling, he needed to understand the ubiquitous power laws. There was, he realized, a branch of mathematics devoted to just this task: fractal geometry.

Branching into Biology

Crudely put, a fractal is a branching shape that divides and divides, becoming more intricate by producing an ever-larger number of ever-smaller branches akin to the first branch. Since their discovery, such shapes have been associated with natural forms, and many plant and animal structures, such as fern leaves, tree branches, and the air passages of the lungs, look a lot like the fractals drawn by computers, and vice versa. The power of fractals—and a property that suggested nature would use them—is that they use a small amount of information to generate a large amount of complexity. All you need do is repeat a few simple rules: Grow, branch, and shrink; grow, branch, and shrink; grow, branch, and shrink. Instead of encoding an entire shape in their genes—every leaf, every branch and tube—organisms would need just the rules for generating the fractal, in the same way that the equation for a spiral describes the shape of shells and horns.
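The “grow, branch, and shrink” rule can be written as a short recursive Python function. This is an illustrative sketch only: the branching number (2) and the shrink factor (0.7) are arbitrary assumptions, and `grow` is a hypothetical name. Ten rounds of the rule already produce 2,047 segments, which is the sense in which a little information generates a lot of structure.

```python
def grow(length, depth, branches=2, shrink=0.7):
    """Return the length of every segment in a tree built by repeating one rule:
    grow a segment, branch into `branches` children, and shrink each child."""
    segments = [length]                      # grow: lay down this segment
    if depth == 0:
        return segments
    for _ in range(branches):                # branch
        # shrink: each child is a smaller copy built by the same rule
        segments += grow(length * shrink, depth - 1, branches, shrink)
    return segments


tree = grow(1.0, depth=10)
print(len(tree))  # 2047 segments from a three-step rule
```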

This repetitive rule means that every part of a fractal resembles the whole, regardless of how far back you stand from it or how closely you zoom in on it. And if you chop it into bits, like the broom in The Sorcerer’s Apprentice, you get new fractals that look like copies of the original. Smaller and smaller copies of the same branches and trees appear ad infinitum. This property is called self-similarity, and it is the link between fractals and power laws. Physicists realized that, when a system can be described using power laws, it is a clue that it is made up of many self-similar parts interacting with one another. Like a power law, a fractal is a rule that generates a hierarchical structure and describes how each level in that structure relates to those above and below it.
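Why self-similarity implies a power law can be checked numerically. In an idealized fractal where every branch splits into n daughters, each r times the size of its parent, level k has n^k branches of size r^k, so the number of branches N and their size s obey N = s^(ln n / ln r). The Python sketch below assumes n = 2 and r = 0.7 as arbitrary illustrative values. It shows that the slope on log-log axes is the same between every pair of levels, which is exactly what a power law looks like.

```python
import math


def levels(depth, n=2, r=0.7):
    """(branch size, number of branches) at each level of an idealized fractal tree."""
    return [(r ** k, n ** k) for k in range(depth + 1)]


def loglog_slopes(points):
    """Slope of log(count) against log(size) between consecutive levels."""
    return [
        (math.log(c2) - math.log(c1)) / (math.log(s2) - math.log(s1))
        for (s1, c1), (s2, c2) in zip(points, points[1:])
    ]


slopes = loglog_slopes(levels(8))
predicted = math.log(2) / math.log(0.7)  # about -1.94
print([round(s, 3) for s in slopes], round(predicted, 3))
```

Every slope matches the predicted exponent, so a single number describes how each level relates to the next.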

The mathematics of fractals opened up new ways of thinking about biological scaling, and of connecting what happens at small scales with what happens at large ones. Before the arrival of fractals, structures such as lungs had been described using vague metaphors such as tree- or cloudlike. But fractal geometry provided a way to replace verbal with mathematical descriptions, just as allometry did for simpler biological forms half a century earlier.

West’s chain of reasoning went something like this. Scaling laws are power laws. Fractals describe power laws. So, he asked himself, what fractal might lie behind the scaling of metabolic rate? The answer, he decided, was the system of blood vessels, the network of pipes that carry food and oxygen to the cells, and take carbon dioxide and other waste away from them. This system is obviously like a fractal. It branches from one large tube, the aorta that leaves the heart, and becomes a series of smaller blood vessels. Arteries, such as the carotid, which takes blood to the head, or the femoral, which runs down the leg, lead to narrower arterioles, which lead eventually to capillaries, which reach between the cells and deliver their life-giving cargo to the tissues. West defined metabolic rate as the rate at which resources were supplied to the cells, via the blood system, and he reasoned that the scaling law behind metabolic rate—Kleiber’s rule—was a consequence of how the geometry of this supply network changed as animal size changed.
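Kleiber’s rule says whole-body metabolic rate scales as mass^(3/4), so the rate per gram falls as mass^(-1/4). Put that together with the quarter-power life span scaling described earlier and a simple piece of arithmetic follows: per-gram metabolic rate multiplied by life span does not depend on body mass. The Python sketch below checks this under idealized exponents. The normalization constants `b0` and `t0` are arbitrary placeholders, not measured values, and the function names are hypothetical.

```python
def whole_body_rate(mass, b0=1.0):
    """Kleiber's rule: metabolic rate ∝ mass**(3/4). b0 is an arbitrary constant."""
    return b0 * mass ** 0.75


def per_gram_rate(mass, b0=1.0):
    """Metabolic rate per unit mass, which falls as mass**(-1/4)."""
    return whole_body_rate(mass, b0) / mass


def lifespan(mass, t0=1.0):
    """Idealized quarter-power life span; t0 is an arbitrary constant."""
    return t0 * mass ** 0.25


for m in (1, 100, 10_000):
    product = per_gram_rate(m) * lifespan(m)  # energy burned per gram per lifetime
    print(f"mass {m:>6}: total {whole_body_rate(m):8.1f}, "
          f"per gram {per_gram_rate(m):.3f}, per gram x life span {product:.3f}")
```

Under these assumptions, an animal 10,000 times heavier burns about 1,000 times as much energy in total but only a tenth as much per gram, and lives about ten times as long.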

Blood vessels start with one wide tube and branch, like a fractal, to create many small vessels.

Credit: Reprinted with permission from Science, vol. 276, p. 122. Copyright 1997 by the American Association for the Advancement of Science.

When he started looking at the blood system, West had to leave Euclidean geometric similarity behind. To the trained eye, a cat’s femur and a cow’s femur are recognizably the same bone. The big animal needs proportionately thicker bones to resist the stronger pull of gravity, but the basic structure of its skeleton does not change. A blood vessel, by contrast, gives no clue to the size of the animal it came from. A wide tube and a narrow one might be the same artery from different-sized beasts or different vessels from the same animal. Such big and small blood vessels are, however, geometrically similar to one another. Euclidean similarity is lost; self-similarity is gained.

So West tried to build a fractal that described blood vessels and relate that to metabolic rate. This would, he realized, be an abstraction, a sort of cartoon of how animals worked. It would not account for all the complexity and variability seen in real vascular systems, but West thought that, by ignoring the details of particular systems, he could get to deeper principles underlying them. Physics has often worked like this, by discarding much of the detail in the systems it seeks to study, such as friction in mechanics. For example, when Galileo dropped objects off the Leaning Tower of Pisa, he ignored their differences in air resistance and concluded that they all fell at the same speed, thus paving the way for a theory of gravity. West is fond of saying that, had Galileo been a biologist, paying more attention to the details, he would have ended up writing tomes on how every object falls at its own unique speed. He (West, that is, not Galileo) can talk like this because, as a physicist, he is not too concerned about annoying biologists.

His aim was to work out how fluids would flow through such a network. He eventually designed one, but it was a sorry creature, even as a cartoon, bearing little resemblance to any real blood vessels. West realized that he didn’t understand the biology of blood vessels very well and, furthermore, that some of his mathematics and physics were mistaken.

As a scientist looking to jump fields, however, he had one big advantage: the Santa Fe Institute. The institute was set up in 1984 by a group of senior researchers at Los Alamos, along with other eminent physicists from across the United States, to address problems in chaos theory, complex systems, and emergence that were beginning to make an impact across the physical, biological, and social sciences. These problems seemed somehow to combine hideous complexity with tantalizing flashes of order. Such complex dynamics and emergent order were a common feature of any system consisting of many interacting parts, be it a stock market, a cell, an ecosystem, or a society. The institute was intended to be truly multidisciplinary; it has no departments, only researchers. Since then, Santa Fe and complexity theory have become almost synonymous.

Twenty years on, the institute, now situated on a hill on the town’s outskirts, must be one of the most fun places to be a scientist. The researchers’ offices, and the communal areas they spill into for lunch and impromptu seminars, have picture windows looking out across the mountains and desert. Hiking trails lead out of the car park. In the institute’s kitchen, you can eavesdrop on a conversation between a paleontologist, an expert on quantum computing, and a physicist who works on financial markets. A cat and a dog amble down the corridors and in and out of offices. The atmosphere is like a cross between the senior common room of a Cambridge college and one of the West Coast temples of geekdom, such as Google or Pixar.

At the time he began thinking about metabolism, West was a visiting fellow at the institute, spending one day a week there. Through Santa Fe, he met two biologists, based 60 miles down I-25 at the University of New Mexico in Albuquerque, who had also been thinking about metabolic rate.

Enter the Ecologists

Brian Enquist began his undergraduate education hungry for big unsolved problems that would need big ideas to explain them. The last place he expected to find them was in biology—Darwin seemed to have sewn up the market more than a century before. Enquist thought he might major in philosophy. But then in his initial biology lectures he saw the graph of body mass versus metabolic rate and learned that there were still big patterns in biology that cried out for big ideas to explain them. He got hooked on scaling.

Every biologist has his or her favorite branch of life—birds, bees, moths, water lilies, coral reef fish, or whatever. For Enquist it was trees. Ever since he began climbing them as a child, he had had a thing about them. They were impressive and important. If you stand in a forest, the world seems to be made of trees. He was also impatient, and botany is a good discipline for a naturalist in a hurry, because you don’t have to sit in a hide all day waiting for trees to come to you, and it doesn’t take a week to get your sample size into double figures. And for a young biologist beginning his career and looking for big questions to answer, botany was fertile ground. The main thrust of the science has been to classify and describe plants, and there is relatively little theory or mathematics in the discipline; new ideas in biology have tended to be developed and explored by researchers working on animals. Enquist decided to investigate scaling in forests, to see how the sizes of individual trees influenced the form of the whole forest.

For graduate school he went to work in Jim Brown’s lab at the University of New Mexico. More than a decade before Enquist joined his lab, Brown had decided that energy was the key to understanding biodiversity. He had begun his career in the 1960s as a physiological ecologist, studying the biology of energy in animals, looking at how the challenge of keeping warm affected their energy budgets and how it would affect their behavior and where they could live. One of his early studies was on the metabolic rate of weasels, and the cost of being long and thin, with a relatively large surface area—a weasel burns energy twice as quickly as a round animal of the same weight, he found. Lately, he had come to see energy as an organizing principle for the whole of nature. How organisms got energy and divided it between themselves could, he believed, explain biodiversity: Why different environments contain different numbers of species, why species live where they do, why certain species are found together or not, and why some are common and some are rare.

And the foundation of any investigation into energy and biodiversity, Brown believed, should be body size. Body size controls how much energy plants and animals need and so how much is left over for other individuals and species. Body size is also closely related to virtually everything else that ecologists are interested in, such as how much territory animals need, how quickly their populations grow, and how many young they produce.

The ecological importance of body size makes it of practical as well as academic interest. To conserve a species, we need to understand it. As the biologist William Calder wrote: “A conservation biologist trying to prepare a protection plan without data on the species’ biology is like an insurance underwriter issuing a policy in ignorance of the applicant’s age, family status and medical history.” Yet conservationists often work in almost perfect ignorance of what they are trying to save. It is likely that at least 90 percent of species, and possibly a much higher proportion, have not yet been discovered, described, and named. And for most of those that have, our knowledge stops at their existence: we have only a pressed leaf or a bug pinned out in a drawer. No one knows how widely they are spread, how great their numbers are, what they eat, what parasites assail them, how many young they produce and how often, what foods or habitats they prefer, or how they behave. Of the species we do know something about, the majority are birds, mammals, and flowering plants. Of the most diverse groups, such as insects, fungi, and bacteria, we know practically nothing. Most species live in tropical forests, through which it is difficult to travel and in which it is difficult to spot things. The number of biologists and the resources available to fund their discovery and description of species are both limited, particularly in tropical countries, which tend to be among the poorer nations. Our destruction of biodiversity outpaces our knowledge of it.

We need rules of thumb that, in the absence of detailed knowledge, can help us predict which species are most vulnerable to threats such as habitat destruction or hunting and so which are most in need of conservation. Rules based on body size have two great advantages. Size is informative, and it is easy to measure. Even if we know nothing else about a species, we almost always know how big it is. It’s been said that ecologists report body size in the same way that journalists report their subjects’ ages: practically as a reflex. It takes only a moment to weigh an animal. The measurement requires little equipment or expertise, its accuracy can usually be trusted, the animal doesn’t have to be alive to be weighed, and if it is, you don’t need to interfere with it or detain it for long. Size is the only universal piece of biological data.

One of the most reliable rules of conservation is that it is bad to be big. Across the animal kingdom, big species are more at risk than their smaller brethren. Orangutans and elephants are disappearing more quickly than rats and rabbits. Rhinos are more endangered than antelopes. Large birds, such as albatrosses and eagles, are in more trouble than warblers and finches. Whales are more endangered than porpoises. This rule holds even for reptiles and insects: The planet’s largest known earwig, a 3-inch-long giant from the Atlantic island of St. Helena (being an island species is another almost guaranteed recipe for trouble), has not been seen for 20 years.

It’s easy to see what makes large species more prone to extinction. They need more food and so more land. They are often found higher up the food chain and so depend on everything below them staying in good shape. All of this means that there are fewer of them to start with. Big animals take longer to reach breeding age, breed less often, and produce smaller numbers of young when they do. The fossil record shows that, throughout history, carnivorous mammals, such as cats and dogs, have experienced a high degree of evolutionary churn. Species come along, dominate for a few million years, and then disappear, at which point a new group comes along to do the same job. There are obvious benefits to a predator in being big and fierce, but this might also paint a species into an evolutionary corner. Populations become smaller and more spread out, and anything that reduces their food supply or splits a population into isolated fragments, as deforestation and urbanization are doing for large carnivores these days, will hit them harder than smaller species with less grandiose diets.

There’s no such thing as small-game hunting, and human hunters’ lust for large prey has exacerbated big species’ vulnerability. Since humans appeared on the scene, a swath of large mammals, such as mammoths, mastodons, and giant ground sloths, have gone extinct. The same goes for the largest birds: the moas of New Zealand, the elephant birds of Madagascar, and Genyornis, an Australian species that weighed 100 kilograms. When humans arrived in Australia, the continent was also home to a lizard, Megalania, which was 5 meters long and weighed 600 kilograms. This too is no more. The trend continues to this day: Stocks of large-bodied, slow-growing fish species are slower to recover from fishing and so more vulnerable to overfishing. Big charismatic animals are the poster children of conservation. We study them more intensively, bias our conservation efforts in their direction, and value them aesthetically and spiritually, but it hasn’t done them much good.

So when we plan where to spend scarce conservation money, working out which species are most likely to be at risk of extinction, there is a good case for biasing our efforts toward large species. And when we find a new species or population, we should be more concerned for its future if it is an ape or a deer than if it is a rodent.

The Insurance Man

When Jim Brown and Brian Enquist began thinking about metabolism, they were more interested in explaining nature than saving it. The search for general principles that apply across the living world, the pair believed, should focus on a combination of energy and allometry. This call to make energy the center of ecology was not unprecedented. Ecology is the study of nature’s economy, and energy has long been one of the currencies tracked by ecologists, through food webs, for example, to explain how much life a habitat can support or as a way to understand why animals prefer to eat certain foods. Ecology has also been one of the areas of biology most receptive to ideas from physics. Around the turn of the twentieth century, ecologists seized on concepts then influential in chemistry, such as equilibrium and thermodynamic descriptions of energy flow, and applied them as metaphors for explaining ecological phenomena such as the stability of populations and the coexistence of species.

The person who did the most to get ecologists thinking like physicists was Alfred Lotka, born in Austria in 1880. Lotka did nearly all his scientific work in his spare time. He trained as a physical chemist and emigrated to the United States in 1902. There he worked at the General Chemical Company, in a patent office, at the U.S. Bureau of Standards, and as a science journalist. After a brief stint in the 1920s as a full-time researcher at Johns Hopkins University in Baltimore, he ended his working life at the Metropolitan Life Insurance Company, where he made seminal contributions in applying mathematics to the study of human populations, such as calculating how mortality changes with age.

In the early 1900s, Lotka began thinking about how physics might be applied to biology. Like D’Arcy Thompson, he made no distinction between biological and physical systems. But where Thompson saw life as governed by physical forces and geometry, Lotka saw it in terms of the exchange of energy.

Lotka was not the first person to have this idea, but he pursued it harder and farther, and with greater mathematical rigor, than anyone else. Also like Thompson, he worked outside the academic mainstream and had little contact with other scientists. A quarter of a century of such work culminated in his 1925 book, Elements of Physical Biology. In the book Lotka imagines physical biology, which he defines as “the application of physical principles in the study of life-bearing systems,” as analogous to the then-voguish physical chemistry. Scarcely any life-bearing system escaped his gaze. He tackled growth and population dynamics, the cycling of chemical elements from the environment into life and back again, behavior, the senses, communication, travel, and consciousness. Often he took examples from economics and sociology. Lotka showed that the sheep population of the United States matches the sheep consumption of its citizens and argued that predators similarly control the numbers of their wild prey. And he speculated on the immense impact that the newfound ability to take nitrogen from the air and turn it into fertilizer would have on humanity and the planet. Lotka wanted to build an intellectual framework that would unify physics, biology, and the study of human society.

As well as trying to understand biology in terms of physical principles, Lotka sought to solve biological problems using the tools of physics. He drew analogies between evolution and thermodynamics, but he also thought that organisms were far too complicated to be understood simply by the application of thermodynamics. It would be “like attempting to study the habits of an elephant by means of a microscope.” But the mathematical techniques of physics could still be used for studying life, by treating plants and animals as if they were particles: “What is needed is an altogether new instrument, one that shall envisage the units of a biological population as the established statistical mechanics envisage molecules, atoms and electrons.”

The world, said Lotka, is an engine, and although it is useless—all its work is used internally to feed and repair itself—the world engine’s great trick is its ability to improve its workings as it goes along, through evolution. He imagined life as being like a water wheel, turned by the energy flowing over it. Natural selection, he said, would make the wheel bigger and make it turn faster, favoring those organisms that were best at grabbing energy and those that used it most quickly. In consequence, the world engine would speed up. The law of evolution was the “law of maximum energy flux,” and natural selection was a law of thermodynamics.

Lotka’s thinking had a huge influence on ecologists. The Elements was a critical point in ecology’s long history of using mathematical models to convert verbal arguments about how nature works—“the birth rate will fall in crowded populations,” “predators will reduce the numbers of their prey”—into precise and testable statements, turning words into numbers. Now there is a whole subdiscipline devoted to mathematical models and computer simulations: theoretical ecology. The advent of theory has led to tension between some theoreticians, who see their naturalist colleagues as stamp collectors, and some naturalists, who suspect that the theoreticians, lost in their mathematical fairy world, could not tell the difference between a lion and a dandelion.

Thinking Big

As well as sharing an energy-centric worldview, Brown, like Lotka, thought that revealing the unity of nature required a new instrument. Brown had become frustrated with ecology’s emphasis on small spatial scales and short periods. The typical experiment tracked, say, the plants in one field over three years or the molluscs on a single beach over a single summer. A great many of the published studies focused on 1 square meter of land or less. Such small-scale experiments revealed the complexity and unpredictability of nature: The result of, say, removing a predator or adding nutrients to a site varies hugely between places and times. With so much variability, this approach offered little hope of saying anything universal about the living world. “There was the idea that to understand systems of many interactive components, such as ecological communities, we should take them apart, figure out what the components are and how they work in isolation, put them together in pairs, and then build up more complicated systems. That doesn’t seem to have worked,” Brown says. You can create a mathematical model of an ecosystem, but as you add variation and complexity, such as more species or different environments, trying to make the model more lifelike, the number of possible outcomes (which species will go extinct, which will spread, and how long all this will take) soon becomes astronomical. Tiny variations in conditions and assumptions lead to radically different outcomes. And there is usually no way to tell which path is most likely in the real world and why.

What was needed, Brown thought, was an effort to find out what united and divided environments and species right across the earth. It would be practically impossible and ethically wrong for a scientist to manipulate nature on this scale, but a program of measuring and observation should reveal the big picture. It would be a search for emergence: Just as physicists know how changing the temperature or pressure of a gas will affect its behavior without knowing what every molecule in that gas is up to, so statistical techniques applied to large groups of organisms—treated, as Lotka urged, as if they were particles—should reveal regularities.

Such large-scale observations had already revealed many trends in nature. There is a regular relationship between the size of a place and the number of species found there. Most species are rare, with small population sizes, while a few are common. Most species live in only a small area of land; a few roam across continents. Most of the higher groups used to classify plants and animals, such as genus (e.g., Homo), family (e.g., Hominidae), and order (e.g., Primates), contain a relatively small number of species; a few, such as beetles, are fantastically diverse. And similar mathematics can be used to describe the ratio of rare to common species, of small- to large-ranged species, or of diverse to less diverse groups. Something seems to be going on that is general but hard to explain.

The approach itself was not new. What Brown did invent, along with his colleague Brian Maurer, was a name for it: macroecology. In 1995, Brown published a book of the same name. Not everyone agreed with this view of nature. Like West, Brown was willing to sacrifice the detail to see the generalities. “My knowledge of biology is more extensive than intensive, and I got a reputation as being a big-picture guy,” he says. “I have a very broad biological background, and a good feeling for how organisms and systems work, at the sacrifice of detail.” It’s like squinting at a picture so one can see the general patterns without being distracted by the brushwork. But squinting is a tricky thing: people see different pictures, and some see just a blur. Sometimes the eye of faith takes over, and some ecologists suspect Brown of having a mystical streak. There can also be snootiness about science that is based on observation rather than experiment. The results of experimental manipulations (discovering that changing system X using method Y gives result Z) are often seen as more conclusive, more revealing of underlying mechanisms and true causes, and somehow more scientific than statements about nature based on the passive observation of patterns. Then again, no one thinks astronomers are unscientific because they do not tinker with galaxies, or geologists because they do not move tectonic plates themselves.

Into the Woods

In the summer of 1995, Brian Enquist took a copy of Brown’s Macroecology off on a field trip to the forests of Costa Rica. He returned with a question—did plant metabolism also scale to the 3/4 power of body mass? This is the sort of problem that biology graduate students often end up tackling; it’s known as the aardvark approach. (“Nobody’s looked at digestive enzymes/the genetics of eye color/parental care in the aardvark,” says the supervisor to her new student in need of a research project. “Why not do that?”) Brown told Enquist that nobody had looked to see if Kleiber’s rule held for plants. Enquist decided he would take a look.

The first task was to work out what a plant’s metabolic rate is and how to measure it. Plants don’t burn energy to keep themselves warm, and they don’t take in food; they make their own by photosynthesis. But they do respire, just as animals do, taking in oxygen and giving off carbon dioxide, so an experimenter could put a plastic hood over a plant and measure its gas exchange. There are problems with this procedure, though. For a start, trees are big and difficult to seal inside an airtight bubble. More fundamentally, plants give off water vapor, just as we do. But unlike us, they have no lungs to pump it out. The rate at which they lose water from their leaves depends instead on the humidity of the air, or more precisely on the difference in humidity between the inside of the leaf and the air outside. Evaporation from the leaves draws water in through the roots, from which it flows through the plant in tubes called xylem. Sticking a plastic bag over a plant makes its external environment more humid, which makes it harder for the plant’s leaves to lose water and lowers its metabolic rate. In other words, the experiment changes what it seeks to measure.

Instead, Enquist settled on using the rate at which fluid flowed through a plant’s xylem as a proxy for its metabolic rate. The rate of respiration, and the rate at which resources were consumed, he reasoned, ought to be proportional to the rate at which water flowed through the plant, because the former depended on the latter. Faster flow should translate into a faster metabolism. Foresters want to know trees’ flow rates for the same reason that farmers want to know animals’ metabolic rates, because it helps them work out how much water their crop needs and how much wood it will produce. They had already built up a handy number of measurements for different tree species, which they related to plant size.

So Enquist hit the library. After a few weeks of rummaging, he had found enough measurements relating plant fluid flow to size to allow a statistically solid analysis. Pooled, these measurements showed that fluid transport was proportional to the plant’s mass raised to the power of 0.733—as near to 3/4 as makes no difference.
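An exponent like Enquist's 0.733 comes from a standard allometric fit. Take logarithms of both flow rate and mass, and the exponent is the slope of the best straight line through the points. The sketch below assumes ordinary least squares on log-transformed data, because the text does not say which fitting method Enquist used. The measurements are synthetic and made up, generated around an exponent of 0.733 with some scatter. They are not his data.

```python
import math
import random

def fit_power_law(masses, rates):
    """Fit rate = c * mass**b by least squares on log10-transformed data.

    Returns (b, c): the scaling exponent and the normalization constant.
    """
    xs = [math.log10(m) for m in masses]
    ys = [math.log10(r) for r in rates]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Slope of the regression line = covariance(x, y) / variance(x)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    b = sxy / sxx
    log_c = y_mean - b * x_mean
    return b, 10 ** log_c

# Hypothetical "plants" spanning six orders of magnitude in mass.
rng = random.Random(42)
masses = [10 ** rng.uniform(-2, 4) for _ in range(60)]
# Assumed true exponent 0.733, with roughly 25% multiplicative scatter.
rates = [0.5 * m ** 0.733 * 10 ** rng.gauss(0, 0.1) for m in masses]

exponent, constant = fit_power_law(masses, rates)
print(f"fitted exponent: {exponent:.3f}")
```

Pooling many species across a wide range of sizes matters here. The wider the spread of log-mass, the less the scatter of individual measurements disturbs the fitted slope.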

Emboldened by this success, Enquist suggested to Brown that they seek a theoretical explanation for Kleiber’s rule. Brown had often wondered in passing why scaling rules tended to group around multiples of one-quarter, but he had never mounted an assault on the problem. He agreed to tackle the project but warned Enquist of the question’s knotty history. It might take years of work, and there was no guarantee of ending up with anything to show for it.

The initial investigation into trees, and the way they transport water, set Brown and Enquist thinking along the same lines as Geoff West: networks. Like him, the two ecologists thought the answer to the puzzle of metabolic rate might lie in the geometry of the transport networks that move fluids around organisms. All large animals and plants are riddled with such networks. Vertebrates have blood vessels and plants xylem. Insects’ bodies are honeycombed by tubes, called tracheas, that carry air to their tissues. Perhaps the scaling of such networks could explain the scaling of metabolic rate. Unlike West, however, they began to design networks using graph theory, a branch of mathematics that deals with the properties of networks of interconnected points and lines. Unfortunately, all of the networks they produced using this technique delivered resources at a rate proportional to the 2/3 power of their size—they had reinvented the surface rule. Brown, a self-confessed “mathematical cripple,” realized they needed some help.

All for the Best

In the autumn of 1995, Brown and Enquist met West, through the Santa Fe Institute and a mutual acquaintance. The three realized they were taking a similar approach to the same problem. They also realized that working together would save a lot of time: West could handle the mathematics and physics; Brown and Enquist could tell him if the models made biological sense. As long as they trusted each other’s expertise, and as long as they took the time to explain things to one another, none of them would have to spend years blundering through a field about which he knew next to nothing. They began meeting on Fridays to work on networks and metabolism.

At first these sessions were slow going. West despaired of ever communicating with the two ecologists. “There were many times,” he says, “when I left Santa Fe and I thought this is just hopeless—they don’t understand anything, and I don’t know what the hell they’re talking about.” Likewise, Brown and Enquist had to take the time to explain to West such concepts as how evolution works and what a species is. But after a couple of months, they felt they understood one another. Most importantly, they realized that, despite their different backgrounds, they shared a similar philosophy—that there is a unity underneath the diversity of life—and that they got along well enough to be able to work together.

Brown and Enquist put plants to one side, and all three began working on the vertebrate blood system. Most studies of metabolism have been done on mammals, so explaining their metabolic rate would be a good place to start. They began designing the blood system as if it were an engineering problem. If they started from scratch and tried to design a delivery system to distribute resources around a body, what would the resulting network look like? The way that metabolic rate changed with size, they believed, would reflect the way that these networks changed. (In basing their theory on fluid networks, they were also resurrecting one of biology’s oldest metaphors. In the seventeenth century, thinkers such as Descartes saw life’s workings in hydraulic terms, as a series of pipes, like the fountains and plumbing of Versailles.)

It might seem as if redefining metabolic rate as the speed with which tubes deliver resources gets things the wrong way around. Metabolism is a property of cells, not networks, and you might expect the network to accommodate itself to the cells’ demands, not the cells to adapt to what their suppliers can deliver. In fact, the two should go hand in hand. There’s no point in having blood vessels that can pump resources faster than cells can burn them, or in having cells that demand more energy than transport networks can deliver. Each link in the chain should be equally strong. Evolution, faced with many things to do and a finite amount of time, energy, and resources to do them with, must strike a balance, making life a matter of trade-offs. Reinforcing one part of one’s biological armory means neglecting another. Things need to be good enough to do their job, but not so spectacularly well constructed that they become cripplingly expensive to build and maintain. You wouldn’t waste money building a garden shed to the same specifications as a nuclear bunker; a jalopy will sit in a traffic jam just as well as a sports car; and only an eccentric, when faced with an uncracked nut, would rush out and buy a sledgehammer.

Experiments support the idea that respiration is integrated and efficient in this way. One study looked at the maximum capacity of each part of the respiratory system—lungs, blood vessels, and mitochondria—in mammals ranging in size from shrews to cows. Removing each component from those up- and downstream, and supplying it with oxygen directly, showed how well each part could perform in isolation—if, say, the flow of oxygen to the blood did not depend on the lungs or if the supply to the mitochondria did not depend on the blood. It turns out that the blood vessels’ maximum capacity for carrying oxygen matches the mitochondria’s maximum capacity to burn it. And the mitochondria of all mammals burn energy at the same rate, so metabolic rate depends on the number of mitochondria per cell, rather than on the activity of individual mitochondria.

But West, Brown, and Enquist went beyond assuming that cells and networks were equally efficient. They sought to design a network that was maximally efficient, delivering the maximum amount of resources using the minimum amount of time and energy. This technique, called optimality modeling, is well established in biology, but it is also controversial. It works by calculating the best possible solution to a problem and then seeing whether reality conforms to that model. To give two examples: Is it better for a shorebird to eat a small clam that is easily pried open or to invest the time and effort in smashing open a big mussel with a thick shell? And should a male dung fly stay with his current mate, to prevent her from mating with other males and throwing away his sperm, or will he father more offspring by going off to look for another female? So whereas in allometry researchers look at nature and then find the mathematical expression that best describes what they see, in optimality modeling the researcher first asks what the best way to do a particular job would be, works out the biological consequences, and then tests the hypothesis on real animals. If your theory correctly predicts biological phenomena, you can be more confident that you have understood the processes underlying the phenomenon.

Optimality modeling has been contentious because, although organisms are clearly good enough at what they do to survive, there is no reason to assume they are as good as they can possibly be. Some biologists accuse optimality theorists of a blind faith that evolution has honed every trait to its keenest possible edge. Such skeptics, notably Stephen Jay Gould and Richard Lewontin, have accused optimality enthusiasts of pushing a “Panglossian paradigm,” after the character in Voltaire’s Candide who believed that everything must be for the best because this was the best of all possible worlds. The doubters point out many reasons to think that life is not optimal. You can’t just consider one feature in isolation. The aforementioned trade-offs mean that improving one thing, such as lungs, means taking from another, such as legs. Some biological necessities might contradict one another—there’s no point in a mouse going after some juicy berries if there’s a snake sitting on the same branch (although the berries will appear juicier, and the snake less scary, as the mouse gets hungrier). All organisms are in thrall to their evolutionary past. They cannot rip themselves up and start again, but must deal with current circumstances using what they’ve got. For example, many aquatic insects carry a bubble of air with them and must return to the surface for refills. Gills might be better, but these insects’ ancestors went too far down the air-breathing road to turn back. Environmental change can also outpace evolution, leaving organisms playing catch-up. For example, the migratory and breeding patterns of some European birds are now out of sync with the seasons, thanks to climate change, and only a handful of humans have immune systems capable of shaking off the AIDS virus. Finally, natural selection is not necessarily an optimizing process. It has no goal in mind, no notion of what is best.

But while it’s unreasonable to assume that plants, animals, or microbes are perfect, it’s reasonable to assume that evolution has made them good at what they do. Optimality theory is one way to work out what this means and ask questions about adaptation in precise and testable terms—it is a hypothesis, not an assumption. Used this way, optimality theory has been a useful tool for biologists, and living forms and animal behaviors often turn out to be close to the engineered solution to a problem. For example, a moose’s menu consists of land plants, which are high in calories but low in essential sodium, and water plants, which are rich in sodium but bulky and poor in other nutrients. A moose chooses a diet that maximizes its energy intake while eating just enough pondweed to meet its sodium needs. The more important the task or structure, the greater the force that natural selection will have brought to bear on it. An organism’s distribution networks are just as crucial as its bones, so while we might not expect them to be as efficient as theoretically possible, optimality is a reasonable thing to look for in a model network. Or as D’Arcy Thompson wrote in On Growth and Form:

That this mechanism is the best possible under all the circumstances of the case, that its work is done with a maximum of efficiency and at a minimum of cost, may not always lie within our range of quantitative demonstration, but to believe it to be so is part of our common faith in the perfection of Nature’s handiwork…. To prove that it is the very best of all possible modes of transport may be beyond our powers and beyond our needs; but to assume that it is perfectly economical is a sound working hypothesis. And by this working hypothesis we seek to understand the form and dimensions of this structure or that, in terms of the work which it has to do.
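The moose's choice between land plants and pondweed can be posed as a tiny optimization problem of the kind described above. Everything in the sketch below is hypothetical. The energy, sodium, and bulk values per kilogram, the sodium requirement, and the gut capacity are invented round numbers, not field measurements, and a brute-force grid search stands in for whatever method a real study would use. The search maximizes energy intake subject to a minimum sodium intake and a maximum bulk the gut can hold.

```python
from fractions import Fraction

# Hypothetical per-kilogram values (illustrative only, not real data).
LAND = {"energy": Fraction(10), "sodium": Fraction(1, 10), "bulk": Fraction(1)}
AQUATIC = {"energy": Fraction(4), "sodium": Fraction(5), "bulk": Fraction(2)}
SODIUM_NEEDED = Fraction(10)   # minimum daily sodium (arbitrary units)
GUT_CAPACITY = Fraction(30)    # maximum daily bulk (arbitrary units)

def best_diet(step=Fraction(1, 10), max_kg=30):
    """Grid-search daily intakes (kg) that maximize energy.

    Constraints: total sodium >= SODIUM_NEEDED, total bulk <= GUT_CAPACITY.
    Exact fractions avoid floating-point rounding at the constraint edges.
    Returns (energy, land_kg, aquatic_kg).
    """
    steps = int(max_kg / step)
    best = None
    for i in range(steps + 1):
        land = i * step
        for j in range(steps + 1):
            aquatic = j * step
            bulk = land * LAND["bulk"] + aquatic * AQUATIC["bulk"]
            if bulk > GUT_CAPACITY:
                break  # more pondweed only adds bulk, so stop this row
            sodium = land * LAND["sodium"] + aquatic * AQUATIC["sodium"]
            if sodium < SODIUM_NEEDED:
                continue  # diet fails the sodium requirement
            energy = land * LAND["energy"] + aquatic * AQUATIC["energy"]
            if best is None or energy > best[0]:
                best = (energy, land, aquatic)
    return best

energy, land, aquatic = best_diet()
print(f"land plants: {float(land)} kg, pondweed: {float(aquatic)} kg, energy: {float(energy)}")
```

With these invented numbers the best diet fills the gut and includes only as much pondweed as the sodium requirement forces: 1.5 kg, the smallest amount on the 0.1 kg grid that satisfies it.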

Plumbing

Besides delivering resources quickly and economically, a well-designed network must also be comprehensive. No part of the organism should be more than about a millimeter—the distance over which diffusion can take over from pumping—from a branch of the network. So West, Brown, and Enquist decided that their ideal network must deliver to every nook and cranny of a three-dimensional space, using as little time and energy in the process as possible. A fractal is a design for transforming a tubular blood vessel into a space-filling solid. After a few rounds of branching and shrinking, the large original tubes have divided into a feathery mass that infiltrates every part of the volume it occupies. Instead of asking how metabolic rate changes with an animal’s mass, then, West and company were asking how energy requirements relate to a body’s volume, like their predecessors who had studied the surface law.

But natural structures aren’t true fractals. If you look at the fronds of a fern under an electron microscope, you don’t see atom-sized fern leaves, bearing still more fronds. At some point the branching stops. In the blood system this network terminus is the capillary. From a capillary’s viewpoint, animals all look the same. Capillary size varies little between animals; an elephant’s capillaries are about the same size as a mouse’s. The same goes for cell size—big animals don’t have bigger cells, just more of them. So although animals vary hugely in size, the ends of their distribution networks—the bits that plug into the cells—do not. The same goes for plants. The leaves on an oak tree are about the same size as those on a shrub, and the leaf stalks that deliver water to them are the same width. This is squinting at nature. Of course, cell and leaf size do vary—a rhubarb plant’s leaves are much larger than a giant sequoia’s needles. But the important thing is that leaf size and capillary size do not vary systematically with body size. Large leaves are as likely to be found on small shrubs as they are on giant trees. So when considering the effect of body size, this is one detail that can safely be discarded.

The effect of body size on transportation networks only starts to appear as you begin to travel up the system from the cells. On the reverse journey from the capillaries to the heart, the tubes become wider. It’s like journeying up your bathroom faucet, through the pipes of your house’s plumbing, and out into a water main. In blood vessels, you eventually reach the aorta leading from the heart. Here, differences in size do matter. Big animals need bigger hearts, as they must pump a larger volume of blood a greater distance, and they need wide tubes, to carry all that pressurized blood with little resistance. A human aorta, at about 2 centimeters wide, is less than a tenth as wide as a sperm whale’s—a whale’s heart would explode if it tried to pump into a human-sized aorta. So because both whale and human networks end in capillaries of the same width, networks must get to the same end point from different starting points. A bigger animal’s blood vessels have got a lot more narrowing to do.

I hope this is beginning to show how body size can affect network structure and so the delivery of resources. West, Brown, and Enquist reasoned that the changing journey from aorta to capillary would determine how metabolic rate changed with size. These changing delivery routes also show how the scaling of networks differs from the scaling of body parts such as bones. Because the networks of different-sized animals have different journeys, their blood vessels cannot be geometrically similar, and the scaling rules relating surface area to volume are no longer useful. In this sense a big animal is not just an enlarged version of a small one. If an elephant’s capillaries were thousands of times bigger than a mouse’s, they would be useless at delivering resources because their surface area would be tiny compared with their volume. The larger animal would be unable to extract oxygen and nutrients from its blood quickly enough to sustain itself. What an elephant needs, compared with a mouse, is more of the same—more capillaries. But more capillaries means more branches in the network, which means a longer journey from heart to cell, which means a slower supply of resources to the cells, which means a slower metabolic rate. The challenge is to put this in mathematical terms and work out where 3/4 comes from.

So the rule of network design is to start with one big tube and turn it into a three-dimensional fractal, until you have made enough capillary-sized tubes to service your entire body, and then stop. To do this, the tube must branch. The total number of capillaries is the number of branches produced at each junction, multiplied by itself once for every junction traveled through. So an animal whose tubes split in two at each of three junctions would have 2 × 2 × 2 = 8 capillaries. As junctions are added to the network, the number of capillaries rises exponentially, so filling a big animal with capillaries needs only a few more junctions than filling a small one. Again, fractals are the economical solution.
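The arithmetic of repeated branching can be checked directly. The sketch below uses the text's own example of tubes splitting in two at every junction. The capillary totals it asks about (a million and a billion) are arbitrary round numbers chosen for illustration, not real counts for any animal.

```python
def capillary_count(branches_per_junction, junctions):
    """Number of terminal tubes after splitting at every junction."""
    return branches_per_junction ** junctions

def junctions_needed(branches_per_junction, capillaries):
    """Smallest number of junctions that yields at least `capillaries` tubes."""
    junctions, count = 0, 1
    while count < capillaries:
        count *= branches_per_junction
        junctions += 1
    return junctions

print(capillary_count(2, 3))        # 8, the text's 2 x 2 x 2 example
print(junctions_needed(2, 10**6))   # 20
print(junctions_needed(2, 10**9))   # 30
```

A thousandfold increase in the number of capillaries takes only ten more rounds of splitting, which is why a big body needs only a few more junctions than a small one.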

At each level of the network the tubes downstream are narrower and shorter than those that lead into them. To go straight from an aorta-sized tube to a mass of capillary-sized ones would be as disastrous as trying to drain a sperm whale’s heart into a human aorta. The turbulence, and resistance, in the tube would shoot up and so would the energy needed to force the fluid down the pipe. What is needed is a series of junctions easing gradually from big tubes into small. West, Brown, and Enquist were like plumbers trying to bolt a bunch of smaller pipes onto one big pipe. They had to choose how much narrower each pipe should be than its predecessor to most efficiently carry the fluid that was gushing down the big pipe. They also had to bear in mind their destination, the mass of capillaries spread out through the body that need to be hooked up to the network. This presented them with another choice: how much space to leave between junctions to keep the total journey as short as possible. In the final network, ease of flow depends on tube width, and journey time depends on tube length.

To work out how much narrower and shorter than its predecessor each successive section of tubing in a perfect network should be, the trio turned to the laws of fluid dynamics. Using fluid dynamic principles, they were able to show that, to minimize congestion in the system, the tubes downstream of a junction should have a combined cross-sectional area equal to that of the tube upstream, a property called area-preserving branching. So if the aorta split in two, for example, each offshoot would have half of the aorta’s area. The belief that this is how real networks behave goes back centuries: Leonardo da Vinci noticed that the total area of a tree’s branches is approximately the same at each level, from trunk, to branches, to twigs. To achieve area-preserving branching throughout the network, the tube’s width needs to decrease by a constant proportion at every split. The second requirement, to fill the volume with a minimum length of tubing, is achieved by maintaining a constant ratio of lengths between each junction—each pipe might be half or a third as long as its predecessor, for example.
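Both rules can be written as fixed ratios between one level of tubing and the next. This sketch assumes that each tube splits into n identical daughters, with r_k and l_k the radius and length of a tube at level k. The area-preserving condition is the one just described. The space-filling condition used here, in which each daughter serves 1/n of the volume its parent serves so that length scales as volume to the 1/3 power, is an assumption stated without the full argument.

```latex
\begin{aligned}
\text{area-preserving:}\quad & \pi r_k^2 = n\,\pi r_{k+1}^2
  &&\Longrightarrow\quad \beta \equiv \frac{r_{k+1}}{r_k} = n^{-1/2},\\
\text{space-filling:}\quad & l_k^3 = n\,l_{k+1}^3
  &&\Longrightarrow\quad \gamma \equiv \frac{l_{k+1}}{l_k} = n^{-1/3}.
\end{aligned}
```

For a two-way split (n = 2), each daughter has half its parent's area, so its radius is about 0.71 of the parent's. Its length is about 0.79 of the parent's. On this assumption a length ratio of one-half corresponds to n = 8 and one-third to n = 27.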

Let us return to the whole network and sum up its assembly instructions. Start with a single fat tube of a certain length and split it into a number of smaller tubes that are scale models of the original. Split the daughter tubes again, into another generation of tubes that are scale models of their parents, using the same proportions as the first split. (The exact proportion by which each tube should get shorter and narrower turns out to depend on the number of branches added at each junction.) Repeat until you reach the capillaries.
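The assembly instructions translate directly into a loop. This is a minimal sketch, not West, Brown, and Enquist's own code. It assumes n identical daughters per junction and takes the ratios implied by area-preserving branching and space filling to be n^(-1/2) per level for radius and n^(-1/3) per level for length. Everything is measured in units of a capillary. The loop works backward from the fixed capillary size to find how big the aorta must be.

```python
import math

def build_network(n, junctions, cap_radius=1.0, cap_length=1.0):
    """Build levels from the aorta (level 0) down to the capillaries.

    Assumes n identical daughters per junction, radius ratio n**(-1/2)
    (area-preserving) and length ratio n**(-1/3) (space-filling).
    Returns a list of (tube_count, radius, length) tuples, one per level.
    """
    beta = n ** -0.5
    gamma = n ** (-1 / 3)
    # Work backward from the fixed capillary size to the size of the aorta.
    aorta_radius = cap_radius / beta ** junctions
    aorta_length = cap_length / gamma ** junctions
    levels = []
    for k in range(junctions + 1):
        levels.append((n ** k, aorta_radius * beta ** k, aorta_length * gamma ** k))
    return levels

for count, radius, length in build_network(n=2, junctions=4):
    total_area = count * math.pi * radius ** 2
    print(f"{count:3d} tubes  radius {radius:6.3f}  length {length:6.3f}  total area {total_area:7.3f}")
```

Every level carries the same total cross-sectional area (16π, about 50.3, in capillary units). That constancy is area-preserving branching. Only the number of tubes and the size of each one change from level to level.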

Here we run up against another trade-off. More capillaries ought to be better. More capillaries means more resources supplied to the cells and a faster metabolic rate. Using the construction rule I just described, the way to make more capillaries is to build a bigger network with more branches. But this is not the network’s only job. It must also fill three-dimensional space and have tubes that narrow and shrink so as to make the flow of fluid as smooth as possible and the journey to the capillary as short as possible. West, Brown, and Enquist found that this combination of criteria meant that a large body’s network produced proportionately fewer capillaries than a small one. In a large animal each capillary must supply more cells. So each cell gets a smaller proportion of that capillary’s supply and must slow down its energy consumption accordingly. An animal’s metabolic rate is proportional to its number of capillaries.

If the number of capillaries were a simple linear function of body size, every gram of tissue would burn energy at the same rate whatever the animal’s size, and metabolic rate would be directly proportional to body mass. But bigger animals have relatively fewer capillaries. In fact, the rules for the optimal network produce a number of capillaries that is proportional to body mass raised to the power of 3/4.
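The 3/4 can be checked numerically. The sketch below simplifies the published argument. It uses branching ratios of n^(-1/2) per level for radius and n^(-1/3) for length, holds the capillary at a fixed size, and assumes that total blood volume is proportional to body mass. It then adds junctions one at a time and fits how capillary number grows with the network's total fluid volume.

```python
import math

def network_volume(n, junctions, cap_radius=1.0, cap_length=1.0):
    """Total fluid volume of an n-ary branching network with fixed capillaries.

    Level k (0 = aorta) has n**k tubes; radius and length shrink by
    n**(-1/2) and n**(-1/3) per level, ending at the capillary size.
    """
    total = 0.0
    for k in range(junctions + 1):
        levels_above_capillary = junctions - k
        radius = cap_radius * n ** (levels_above_capillary / 2)
        length = cap_length * n ** (levels_above_capillary / 3)
        total += n ** k * math.pi * radius ** 2 * length
    return total

def fitted_exponent(n, junction_range):
    """Least-squares slope of log(capillary count) against log(volume)."""
    xs = [math.log(network_volume(n, j)) for j in junction_range]
    ys = [j * math.log(n) for j in junction_range]  # log of n**j capillaries
    x_mean, y_mean = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx

print(f"capillaries ~ volume^{fitted_exponent(2, range(20, 41)):.3f}")
```

The fitted exponent comes out very close to 0.75. Capillary number grows as the 3/4 power of network volume, and so, under the stated assumption, as the 3/4 power of body mass. The largest vessels hold most of the fluid, and the small correction from summing over the finite number of levels fades as more junctions are added.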

This, West, Brown, and Enquist believe, is why metabolic rate is proportional to the 3/4 power of body mass. It is the number, and therefore the total surface area, of capillaries that scales with body size in the same way as metabolic rate, not the relationship between an animal’s external surface and its volume, as Rubner thought, or the amount of muscle needed to stop it from buckling under its own weight, as McMahon suggested. In bigger animals, resources have a longer journey to the cells and each capillary must supply more cells, so the cells must slow their energy consumption. The network theory predicts that, as body size grows, cells must slow their metabolic rates in proportion to body mass raised to the power of −1/4. A cell’s metabolism goes as fast as it can, given the rate at which its body can provide it with resources. West compares cells to cars. A car might have a top speed of more than 120 mph, but it will not go nearly that fast in city traffic. Cells are similarly limited by all the other stuff around them. But if you take cells out of bodies and grow them in a dish, like a sports car on a country road, their metabolic rate speeds up until it hits its maximum. Other scaling relationships, such as the breadth and length of the aorta, also fall out of the mathematics used to describe the ideal tubes. The theory predicts that they should be proportional to the 3/8 power and the 1/4 power of body mass, respectively. Measurements of different species show that this is indeed so.
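A little arithmetic turns the predicted exponents into concrete comparisons. The masses below are hypothetical round numbers, a 20-gram mouse and a 4-tonne elephant (a 200,000-fold difference), chosen only to illustrate the exponents stated above.

```python
def scaled_ratio(mass_small, mass_large, exponent):
    """Factor by which a quantity differs in the larger animal,
    assuming it scales as mass**exponent."""
    return (mass_large / mass_small) ** exponent

mouse_kg, elephant_kg = 0.02, 4000.0  # hypothetical round numbers

predictions = {
    "whole-body metabolic rate (M^3/4)": 3 / 4,
    "metabolic rate per cell (M^-1/4)": -1 / 4,
    "aorta width (M^3/8)": 3 / 8,
    "aorta length (M^1/4)": 1 / 4,
}
for name, exponent in predictions.items():
    print(f"{name}: x{scaled_ratio(mouse_kg, elephant_kg, exponent):.3g}")
```

On these assumptions each elephant cell runs at roughly one-twentieth the rate of a mouse cell, even though the elephant as a whole burns energy nearly ten thousand times faster.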

West, Brown, and Enquist’s model is an abstraction. It is an engineering solution derived from the laws of physics, rather than from any foreknowledge of biological systems. If you were going to design an animal, the model says, this is how you should do it. It is impressive that such a design reproduces key properties of living things, such as the way that blood vessels and metabolic rates change with body size. Real animals are bound to fall short of the theoretical ideal—their networks will not reproduce exactly the geometry of the model, nor will they change with size in the way it predicts. And in nature, metabolic rate is seldom if ever exactly proportional to the 3/4 power of body mass. But this shortcoming does not sink the whole theory. It would be very surprising to find a moose that ate the exact proportion of pondweed and land plants that, to the nearest gram, gave it the best diet. To see if moose eat optimally, we need to look at lots of them and see if their average diet is close to the theoretical prediction. Likewise, no animal is going to have a blood system built exactly according to the fractal rules. Network design is flexible—long-term exercise leads to more capillaries in a muscle, for example. And cells are flexible in the amount of energy they demand. But if there are underlying rules of metabolism, if we look at enough animals across a broad enough range of sizes, we should expect to see this theme underlying the variations that different species and individuals can play on it. And we do.

After doing some gnarly mathematics to explain how properties of the blood system, such as the jerky flow produced by a beating heart, would affect nutrient supply to the cells, West, Brown, and Enquist sent their model off to Science. Their paper was accepted, but only after several rounds of review involving eight reviewers (three or four is more usual). Three of the eight thought it was outstanding, revolutionary even. The Nobel Prize may have been mentioned. Three thought it was good. Two thought it was the dumbest thing they had ever seen. Lots of things about the fractal theory rub some biologists the wrong way. It is based on mathematics and physics, it is an abstraction, and it is a generalization. It throws away most of the nitty-gritty of biology and most of what most biologists spend their time doing. None of these characteristics are likely to recommend it to biologists who favor staring over squinting—the physiologist who has spent a career describing the quirks of the cardiovascular system or a biochemist who spends his or her time describing the reactions of cellular respiration. And not everyone believes in Kleiber’s rule. Some biologists still think there is no one scaling law for metabolism and so nothing to explain. To them, West, Brown, and Enquist are squinting so hard that their eyes have closed.

It’s fair to say, however, that D’Arcy Thompson would have been delighted with the network model. Among his papers is the following note, similar although not identical to the passage in On Growth and Form that discusses blood vessels:

To keep up a circulation sufficient for the part and no more, Nature has not only varied the angle of the origin of the arteries to suit her purpose; she has regulated the dimensions of every branch and stem, and twig or capillary. The normal operation of the heart is perfection itself; we are told that even the amount of oxygen which enters and which leaves the capillaries is such that the work involved in its exchange and transport is a minimum. This perfect fitness, this maximal efficiency, we accept as a cardinal hypothesis; and we come to understand the form and dimensions of this structure or that by solving the problem of the work which they do.

That’s what I was trying to say. Sometimes I wonder why I bother.

Networks in Plants

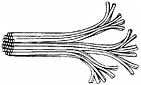

A tree looks like a naked fractal. In its branches can be seen the form of its distribution networks. But unlike blood vessels, the network of xylem tubes that carry water around the tree does not start with one big tube in the trunk and split into lots of narrow tubes as it approaches the leaves. Xylem is more like a bundle of electrical cables than plumbing; it is a group of tubes of the same size that split from each other as they near their destinations. Trees prompted Enquist to start thinking about networks, but could his theory apply to them?

In fact, plant networks reproduce many of the same features as animal networks, although for different reasons. As Leonardo saw, trees have area-preserving branching. And xylem vessels also taper, getting

The water-carrying vessels of plants begin as a bundle and then branch to go their separate ways.

Credit: Reprinted with permission from Science, vol. 272, p. 122. Copyright 1997 by the American Association for the Advancement of Science.

narrower as they reach the treetops. The problem with a tube of a constant diameter is that the longer it gets, the more work it takes to force fluids down it. Resistance to flow increases down the tube. If xylem vessels kept the same breadth all along their length, the top leaves of a tree would never get any water. But if a tube tapers at just the right rate, the resistance to flow remains the same along its length. The degree of tapering that keeps the pressure in a tube uniform regardless of how long it is—and so keeps all parts of a plant equally supplied—plugs into the fractal model to give a metabolic rate predicted to be the 3/4 power of the plant’s mass.
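Poiseuille's law for steady flow in a pipe gives one way to see why tapering helps. Under that law a pipe's resistance is 8ηl/(πr⁴), where η is viscosity, l is length, and r is radius. The sketch treats a water-conducting path as a string of equal-length segments in series, so their resistances add. It compares a tube of constant radius with one that keeps the same radius at the leaf end but widens by a fixed factor in each segment toward the base. The segment size, the 10 percent widening per segment, and the use of water's viscosity are illustrative assumptions, not figures from the text. Real xylem is also more complicated than a smooth pipe.

```python
import math

WATER_VISCOSITY = 1.0e-3  # Pa*s, roughly water at room temperature

def segment_resistance(radius, length, viscosity=WATER_VISCOSITY):
    """Poiseuille hydraulic resistance of one cylindrical segment."""
    return 8 * viscosity * length / (math.pi * radius ** 4)

def path_resistance(segments, widening=1.0, top_radius=20e-6, segment_length=0.1):
    """Total resistance from the base to the leaf end of a path.

    Segment 0 is at the leaf end with radius `top_radius` (hypothetical);
    each segment further toward the base is wider by the factor `widening`.
    Segments are in series, so their resistances add.
    """
    return sum(
        segment_resistance(top_radius * widening ** k, segment_length)
        for k in range(segments)
    )

for height_segments in (10, 20, 40):
    constant = path_resistance(height_segments)
    tapered = path_resistance(height_segments, widening=1.1)
    print(f"{height_segments} segments: constant {constant:.3g}, tapered {tapered:.3g}")
```

With a constant radius, doubling the path length doubles the resistance. With the tapered tube, each segment added toward the base is so much wider that it contributes almost nothing, because resistance falls as the fourth power of radius. Total resistance therefore barely changes as the path gets longer.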

The mysterious number 4, the reason that scaling laws are built around multiples of one-quarter, and not the one-third powers that regular geometry predicts, is a consequence, as J. B. S. Haldane saw, of the pressure that natural selection exerts to fill an organism’s volume with as much surface area as possible. In combination the networks for transporting resources and the surfaces for processing them become a four-dimensional entity. Three of these dimensions come from the two-dimensional area of surfaces, the capillary walls across which resources travel. They are folded so much, and fill space so well, that they take on the geometrical properties of a three-dimensional solid. It’s as if you saw a ball, but realized on closer inspection that it was a crumpled sheet of paper. The fourth dimension is the distance resources must travel—the length of the tubes leading from point of uptake to point of delivery. Together, internal surface area and transport distance determine the rate at which an animal can burn energy. The number 3 in 3/4 arises because this four-dimensional network must fit itself inside a compact body existing in a three-dimensional world.
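The dimensional argument can be summarized in one line. In this sketch d is the number of spatial dimensions. Ordinary geometric similarity gives surface-rule exponents of (d − 1)/d. Counting transport distance as one extra dimension, as the network theorists do, gives d/(d + 1) instead:

```latex
\underbrace{B \propto M^{(d-1)/d}}_{\text{surface rule}}
  \;\xrightarrow{\;d=3\;}\; B \propto M^{2/3},
\qquad
\underbrace{B \propto M^{d/(d+1)}}_{\text{surfaces plus transport distance}}
  \;\xrightarrow{\;d=3\;}\; B \propto M^{3/4}.
```

Here B is metabolic rate and M is body mass. The 4 in the denominator is the three dimensions of space-filling surface plus one of distance traveled.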

Alternative Routes

West, Brown, and Enquist do not have the field to themselves. While some biologists were accusing them of oversimplifying biology in trying to explain metabolic rate, some physicists thought their model wasn’t simple enough. “We read the paper, and it seemed somewhat complicated,” says Jayanth Banavar, a physicist at Pennsylvania State University. “We thought there must be some other explanation.”

Banavar teamed up with two other physicists, Amos Maritan and Andrea Rinaldo, and a biologist, John Damuth, to design a network using different criteria. Rather than looking for a network that maximized energy supply, they sought one that minimized the rate of flow in the system and so the volume of blood. They considered a body as a set of delivery stations, cells, serviced by a network of pipes stemming from a single source. Unlike in the fractal model, the flow can pass through a destination and go on to somewhere else. Cells in this model are not like twigs at the end of a branch. They are more like stations on a railway line, where some people (resources) get off. As the network expands, the amount of fluid in the system must rise. The team showed that, in the most efficient three-dimensional network, where the distance from source to destination is minimized, the amount of fluid must rise in proportion to the network’s linear size raised to the fourth power, whereas the volume it serves rises only as the cube.

This model got the desired number 4—and, incidentally, also predicted the geometry and flow rates of river networks—but it created a problem. As a network, or an animal, grows, the amount of fluid that is in transit at any time, and so not available to be used, must increase. So for the rate of supply to keep pace with network size, more and more fluid is needed. If animals were like this, big ones would need a volume of blood larger than the volume of their body. So Banavar’s team thought again and forced their model network to stay contained inside the body. In this case, as the network grows, its capacity to supply resources declines, and so the cells receiving the resources must adjust their demands accordingly. The network that minimizes the amount of blood in transit delivers resources proportional to the 3/4 power of the body volume it supplies—as predicted by Kleiber’s rule.
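The scaling in Banavar's model can be sketched on a cubic grid. This is a simplification, not the team's own calculation. It assumes that each site in an L × L × L grid needs one unit of fluid, delivered from a source at one corner along a shortest path. The fluid in transit then equals the sum of every site's distance from the source, counted in grid steps. The number of sites supplied grows as L³ and the fluid in transit roughly as L⁴, so supply grows as the 3/4 power of the fluid volume.

```python
import math

def fluid_in_transit(side, dims=3):
    """Total fluid in a shortest-path network on a dims-dimensional grid.

    The source sits at one corner; every site needs one unit, so the fluid
    in transit is the sum of all sites' grid-step distances from the source.
    By symmetry that sum is dims * side**(dims - 1) * (0 + 1 + ... + side - 1).
    """
    return dims * side ** (dims - 1) * (side * (side - 1) // 2)

def supply_exponent(sides, dims=3):
    """Least-squares slope of log(sites supplied) against log(fluid in transit)."""
    xs = [math.log(fluid_in_transit(s, dims)) for s in sides]
    ys = [dims * math.log(s) for s in sides]  # log of side**dims sites
    x_mean, y_mean = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx

sides = [100 * 2 ** k for k in range(8)]  # 100 to 12,800 sites per side
print(f"sites supplied ~ fluid^{supply_exponent(sides):.3f}")
```

The fitted exponent comes out close to 0.75 and approaches 3/4 as the grid grows. Under this simplified picture, a body whose fluid volume is proportional to its mass can supply only a number of sites proportional to the 3/4 power of that mass. Running the same calculation with `dims=2` gives a value near 2/3.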

For Banavar, the key point is that the network is directed, with flow going only one way, rather than that it is a fractal. “The question,” he says, “is always ‘What are the essential features and what are less important?’”—which details you need, and which you can junk. The fractal model may be accurate, says Banavar, but he sees it as a special case of a more general set of networks described by his team’s more economical model. Unlike West, Brown, and Enquist’s, this model does not need the complications of fractals, area-preserving branching, or fluid dynamics. Nor does it assume that the networks get resources to the cells as quickly and efficiently as possible. Instead, Kleiber’s rule emerges as a general property of distribution networks—not just the best way to deliver resources but the only way. West, Brown, and Enquist point out that their model is better at predicting specific details about living things—mirroring some of the criticisms aimed at them by biologists who believe that the fractal model is too general and abstract.

The original West, Brown, and Enquist model revived interest in metabolic rate, and since they launched their theory, others have joined in with ideas and theories quite different from the two network models. One recent model based on the chemical structure of cell membranes argues that small animals’ membranes are softer and leakier than those of large animals, and so they use energy and process chemicals more quickly. Another argues that metabolic rate arises from the way that the amount of DNA in a cell affects its size (the more DNA an organism has, the bigger its cells). Still another looks at metabolic rate from the viewpoint of how body size affects an animal’s ability to obtain food and store it in the body. And another argues that no single factor determines metabolic rate; rather, Kleiber’s rule is a consequence of the “allometric cascade” of the many different processes, involving the heart, lungs, and cells, that make up metabolism. And there are others I have not mentioned.

But in this crowded field the West-Brown-Enquist model is currently the front-runner. Some reasons for its lead are scientific. The network model’s mathematics is complex, but its foundations are intuitive and its applications universal: All organisms must get stuff from somewhere to somewhere else. It’s difficult to see how else organisms could be constructed. The fractal model also makes predictions that match other aspects of biology, particularly its calculations of the width of tree trunks and aortas. The weakness of the network models is that they are difficult to test: It’s hard to see a killer measurement that might settle things one way or the other, particularly as these models are generalized abstractions of how life works. Other reasons for its preeminence are political: The team got there first, they have a long list of publications in the most prestigious scientific journals, and, as we shall see, they have been vigorous in using their model to explain other aspects of biology, which has raised their profile.

At the time of writing, nine years after the fractal model’s debut, researchers are divided in much the same way, and in much the same proportions, as the original reviewers Science asked to comment on the theory. Some are keen advocates, some are virulent opponents, and the majority are interested but undecided, waiting to see which way things go. The debate is still fierce, but no one has found a flaw serious enough to convince the scientific community that the fractal theory is invalid. “If it’s wrong, it’s wrong in some really subtle way,” says West.

Single Cells, Virtual Networks

Another branch of the tree of life challenges the network theory. Many, probably most, species don’t bother sticking their cells together into organs, networks, and bodies. They live as single cells. But they are not beyond the reach of biologists looking for scaling laws. Single-celled organisms certainly come in lots of different sizes. The alga Ostreococcus tauri, discovered in the Mediterranean in 1994, has cells a millionth of a meter across. Another alga, Acetabularia, also known as the mermaid’s wineglass, has cells that are 5 centimeters long and 1 centimeter across. By my reckoning this is almost 10 billion times the volume of Ostreococcus; a whale is a mere 10 million times more massive than a mouse. Other unicellular organisms are even larger, although they pull some fancy tricks in the process. The alga Caulerpa taxifolia grows thin fronds up to a meter long, each of which is basically a single cell, although one that is subdivided and reinforced. And some slime molds come together to form blobs that are tens of centimeters across and have no cell walls, although they may contain millions of cell nuclei (the bit of the cell containing the chromosomes). None of them have any blood vessels, xylem, or any other sort of plumbing. Yet there is evidence that the metabolic rates of unicellular organisms also scale as the 3/4 power of their mass. How can a theory based around distribution networks say anything about them?

In fact, unicellular organisms are not just blobs. Their resource distribution problems are similar to those of larger organisms, and they have come up with similar solutions. The cells of eukaryotes, the group that includes plants, animals, fungi, and single-celled organisms such as algae and amoebas, contain complex structures that are like miniature organs (the technical term is organelles), such as the fuel-burning mitochondria and the carbohydrate-making chloroplasts of plants. Like capillaries in the blood system, these structures provide surfaces on which the transactions of biochemistry take place. And like capillaries, these miniature organs stay the same size in different-sized cells: They are the terminal units in the cellular network. Evolution should drive a cell to speed its internal transport and maximize the surfaces where molecules are used, in the same way that larger bodies increase the surface area of their lungs and intestines, so that a cell can get on with its life as quickly as possible.

Even in unicellular organisms, the evolutionary drive to use resources as quickly and efficiently as possible should produce some sort of structured distribution network. Such a network could be physical—cells have microscopic cables and packages that shunt molecules around—or it could be virtual, with resources flowing by diffusion from where they are taken up at the cell membrane to the terminal organelles inside the cell. The distance from where cells take up chemicals to where they use them is analogous to the length of the tubes in the blood or xylem system, and as in larger organisms, evolution should seek to minimize it. This means that cells’ metabolic rates should scale as the 3/4 power of their body masses, regardless of the considerations that apply to tubular networks, such as how they branch or how fluids flow through them.

The metabolic rate of an isolated mitochondrion, sitting in a lab dish, also falls on Kleiber’s line. Even organelles have their own distribution networks. At the molecular level, their respiration is run by large, complex molecules. And even in these isolated molecules, the reactions of respiration run at the speed predicted by Kleiber’s rule, suggesting that within these molecules’ structure there are nanoscale distribution networks that move individual oxygen and ATP molecules around. From monsters to molecules, this line of reasoning extends the network theory’s reach over a size range of 27 orders of magnitude, or a thousand trillion trillion times. The same logic extends the theory to bacteria, the vast group of single-celled organisms that lack mitochondria, chloroplasts, and nuclei but do have these large and complex molecules. West, Brown, and Enquist’s model describes these as “virtual fractals”: Resources are distributed in a branching pattern through the cell, even if they are not in tubes. For the model devised by Banavar and his colleagues, this isn’t such an issue: Resources spread out from a central point, like food being shared from the head of a table to the guests seated around it.
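Taking the 27 orders of magnitude at face value, a quick calculation with Kleiber’s rule shows what that range implies for energy use. This is plain arithmetic on the 3/4 power, with M for mass and B for metabolic rate, not a figure reported by either network model:

$$
\frac{M_{\text{largest}}}{M_{\text{smallest}}} = 10^{27}
\;\Longrightarrow\;
\frac{B_{\text{largest}}}{B_{\text{smallest}}} = \left(10^{27}\right)^{3/4} = 10^{20.25},
\qquad
\frac{(B/M)_{\text{largest}}}{(B/M)_{\text{smallest}}} = \left(10^{27}\right)^{-1/4} = 10^{-6.75}
$$

In total, the largest entity on the line would process energy about 10^20 times faster than the smallest, but each gram of it would run roughly 5.6 million (10^6.75) times more slowly.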

It seems that natural selection has such a strong preference for efficient distribution that the same fractal network solution has evolved many times, at scales from molecules, to cells, to plants and animals, taking on many different forms and for many different substances but always converging on the same fundamental properties. These networks are such a versatile solution to the problem of supplying a body with resources that they have allowed life to evolve into a remarkable range of sizes. It’s as if a human engineer had invented a single mechanism that could power everything from silicon chips to supertankers.

So model networks can explain why the line linking mass and metabolism has a slope of 3/4. But that leaves lots of variation. Animals of the same size in different groups of organisms have very different metabolic rates. Reptiles are slow, birds fast, and mammals somewhere in between. Plants are slowest of all. Even accounting for size, the speediest metabolic rates seen in nature run 200 times more quickly than the slowest. Body size controls the rate at which cells can be supplied with resources, but this is not the only influence on metabolic rate. There is also the rate of the chemical reactions within cells. And this depends on temperature.

Of Reptiles and Bananas

I met Jamie Gillooly in an Albuquerque coffee shop. He picked up a banana skin. “If you correct for size and temperature,” he said, “this banana on the table, at least before I ate it, was respiring at a rate similar to everyone else in this room.”

In autumn 1999, Gillooly was completing his Ph.D. at the University of Wisconsin on the effects of size and temperature on the plankton in lakes. Biologists who study aquatic systems do not pay much attention to the work of those concentrating on land life, so he had not come across the scaling theory until Jim Brown came to Wisconsin to give a talk on it. The two met over breakfast to discuss their ideas; by the time they finished, they were “both foaming at the mouth with excitement,” says Gillooly. A few months later Gillooly was on his way to New Mexico to do a postdoc.

Life runs faster in the warm; this is why fridges slow the growth of mold. Temperature has an exponential effect on metabolic rate. A 5°C rise in body temperature will raise metabolic rate by roughly 50 percent. It was West who suggested how to incorporate this effect into the equation for metabolic rate. The answer, he said, was the Boltzmann factor, named after the Austrian physicist who believed that life was a struggle for energy and who also laid the foundations for statistical mechanics, the physical theory that explains how particles behave en masse. The Boltzmann factor is the probability that two molecules bumping into each other will spark a chemical reaction. The higher the temperature, the faster the molecules move, the harder they collide, the greater the probability of a reaction, and so the faster the chemical process. Like metabolism, the effect is exponential. West, Gillooly, and the rest of the team added the Boltzmann factor to Kleiber’s allometry equation relating metabolic rate to body mass raised to the power of 3/4.
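Written out, the combined equation has the form B = b0 × M^(3/4) × e^(−E/kT), where M is body mass, T is absolute temperature, k is Boltzmann’s constant, and E is an activation energy. The sketch below is a minimal illustration, not the team’s fitted model. It assumes an activation energy of 0.65 eV and an arbitrary normalization b0, and the function names are hypothetical:

```python
import math

# Boltzmann's constant in electronvolts per kelvin.
K_EV_PER_K = 8.617333262e-5


def metabolic_rate(mass, temp_c, b0=1.0, activation_ev=0.65):
    """Kleiber's rule times a Boltzmann factor: B = b0 * M**(3/4) * exp(-E/kT).

    b0 (arbitrary units) and the 0.65 eV activation energy are assumed,
    illustrative values, not fitted constants.
    """
    temp_k = temp_c + 273.15  # the Boltzmann factor needs absolute temperature
    return b0 * mass ** 0.75 * math.exp(-activation_ev / (K_EV_PER_K * temp_k))


def temperature_factor(t1_c, t2_c, activation_ev=0.65):
    """How much faster the same body runs at t2_c than at t1_c."""
    return (metabolic_rate(1.0, t2_c, activation_ev=activation_ev)
            / metabolic_rate(1.0, t1_c, activation_ev=activation_ev))


def size_factor(m1, m2, temp_c=20.0):
    """How much faster, in total, a body of mass m2 runs than one of mass m1 at the same temperature."""
    return metabolic_rate(m2, temp_c) / metabolic_rate(m1, temp_c)


if __name__ == "__main__":
    # A 5 degree C warming near room temperature, versus a 10,000-fold increase in mass.
    print(f"20 -> 25 C: x{temperature_factor(20.0, 25.0):.2f}")
    print(f"10,000-fold heavier: x{size_factor(1.0, 10_000.0):.0f}")
```

Under these assumptions, a 5°C warming multiplies the rate by roughly 1.5, while a body 10,000 times heavier runs only 1,000 times faster in total. Because the two effects multiply, dividing a measured rate by M^(3/4) and by the Boltzmann factor is what puts organisms of different sizes and temperatures on a common footing.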

The results were dramatic. Accounting for temperature in this way mopped up much of the variation in metabolic rate that size alone could not explain. Correcting for temperature also brought different groups onto the same line: Reptiles’ relatively slow metabolic rates are a consequence of their lower body temperatures, showing that cold- and warm-blooded animals share fundamental metabolic processes. The same goes for plants and animals.

Like the network model, this is another simplification. The chemistry of metabolism involves many reactions, each of which will require a certain amount of energy to get going. Applied to metabolism, the Boltzmann factor is a black box. It could be a kind of average for all the hundreds of chemical reactions in metabolism, or it might be the energy needed to get over one crucial hump in the path. But including it reduces the size-adjusted variation in metabolic rates from a factor of 200 to a factor of 20.

Perhaps this remaining 20-fold variability is some indication of the wiggle room that organisms have that enables them to tinker with their metabolic rate to match their circumstances. Animals living in cold environments might crank their metabolic rates above the grand average, whereas plants in impoverished soils might depress theirs below it. Or perhaps it is a measure of how far organisms can deviate from the optimal network before they become so inefficient that natural selection weeds them out. This leftover variation, if you like, is what really needs to be explained—the place where biology will come into its own.

“I’d like for biology to have a sense of average idealized organisms that share similar principles, which can be understood mathematically—and that what you should be studying are the deviations from that,” says West. “At the end of this century there’ll be a fantastic theory that’ll predict lots of things—everything from ecology to how genes are turned on and off. And I’d like it if there were a footnote in the textbooks saying: ‘At the end of the twentieth century people started taking the problem seriously, and there were these guys who pointed the way to getting rid of the uninteresting part of the problem, the bit that depends on mass and temperature, that determines 90 percent of what we see. Now this real theory of biology is devoted to the other 10 percent.’”