Quality Management for Digital Model–Based Project Development and Delivery (2025)

Chapter: APPENDIX E: TASK 8 METHODOLOGY REVIEW PACKET

![The text reads: Page 41. NCDOT Survey Checklist, PDN Stage 1 - Location and Surveys QC Checklist. SPOT ID or Project TIP number: Click to edit. County: Click to edit. Table header: 1LS1 Provide Photogrammetric Control and Initiate Surveys. Column headers: Item number, Review Item, Yes, No, and N/A. For each subcategory, the cells under Yes, No, and N/A have blank checkboxes. 1 Complete Photogrammetric Control for Preliminary or Planning Mapping (NC Grid Datum). 1.1 Contacted Property Owners. 1.2 Performed panel control target surveys. 1.3 Processed and Developed Panel Control. 1.4 Compiled QC Documentation (Project Review Checklist – PRC). 1.5 Provided panel control to the Photogrammetry Unit. 2 Complete SUE Level D. 2.1 Researched existing utility records. 2.2 Compiled QC Documentation (Project Review Checklist – PRC). 2.3 Developed and Provided SUE Level D CADD File (NC Grid Datum). 3 Perform an Independent Review of Mapping Limits Polygon. 3.1 Reviewed mapping limits polygon. 3.1.1 Received mapping limits polygon from the Project Team? 3.2 Reviewed and evaluated mapping limits for adequate design and analysis. 3.2.1 Coordinated with the Photogrammetry Unit and Project Team, if applicable? 3.3 Revised and provided mapping limits polygon. 3.3.1 Final mapping limits file adheres to the latest approved NCDOT MicroStation version? 3.3.2 Provided final mapping limits to the Photogrammetry Unit and Project Team, if applicable. 4 Complete Photogrammetric Control for Preliminary or Planning Mapping (Local Datum). 4.1 Developed a local project control network using the current National Spatial Reference System (NSRS) projected onto the North Carolina State Plane Coordinate System. 4.1.1 Is the Project Datum Projection in accordance with NCDOT Location and Surveys Local Project Coordinate Systems? 4.1.2 Has the Project Datum Projection followed standard Geomatics Engineering procedures related to distance, direction, and elevation constraints? 4.1.3 Have contiguous and/or neighboring project projections been reviewed and incorporated as part of the Project Datum Projection? 4.1.4 Have coordinate equalities, if unavoidable, been placed in project areas that have little to no impact on the proposed design? 4.2 Contacted Property Owners. 4.3 Performed Panel Control Target Surveys. 4.4 Processed and Developed Panel Control. 4.5 Compiled QC Documentation (Project Review Checklist – PRC). 4.6 Provided Panel Control to the Photogrammetry Unit.](https://www.nationalacademies.org/read/29172/assets/images/img-120-1.jpg)

Glossary of Terms

This glossary contains terms related to 3D modeling and quality management principles relevant to project development and delivery. Each term is presented in the following format:

Term (ACRONYM) Synonyms: synonyms. Related terms: related terms. Definition in a sentence. Optional example of usage.

3D Coordination Synonyms: Clash Detection. The process in which models are interrogated to identify spatial conflicts. 3D coordination can be partially automated using spatial clash detection algorithms. It can be applied within a discipline (e.g., to check for conflicts between rebar and post-tensioning) or across disciplines (e.g., to check for conflicts between foundations and subsurface utilities).
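The automated part of 3D coordination can be illustrated with a minimal Python sketch. It assumes each model element is reduced to an axis-aligned bounding box (commercial clash tools test the actual geometry); the `Element` class, the `detect_clashes` function, and the sample elements are hypothetical.

```python
from dataclasses import dataclass
from itertools import combinations

Point = tuple[float, float, float]


@dataclass(frozen=True)
class Element:
    """A model element approximated by an axis-aligned bounding box (AABB)."""
    name: str
    discipline: str
    min_xyz: Point
    max_xyz: Point


def boxes_overlap(a: Element, b: Element) -> bool:
    # Two AABBs intersect only if their extents overlap on all three axes.
    # Strict inequalities mean boxes that merely touch are not reported.
    return all(
        a.min_xyz[i] < b.max_xyz[i] and b.min_xyz[i] < a.max_xyz[i] for i in range(3)
    )


def detect_clashes(
    elements: list[Element], cross_discipline_only: bool = False
) -> list[tuple[str, str]]:
    """Return the names of every pair of elements whose boxes intersect."""
    clashes = []
    for a, b in combinations(elements, 2):
        # Optionally skip pairs from the same discipline (a cross-discipline check).
        if cross_discipline_only and a.discipline == b.discipline:
            continue
        if boxes_overlap(a, b):
            clashes.append((a.name, b.name))
    return clashes


footing = Element("Footing F1", "Structures", (0, 0, -2), (4, 4, 0))
water_main = Element("Water main WM-2", "Utilities", (3, -5, -1.5), (3.3, 10, -1.2))
footing_2 = Element("Footing F2", "Structures", (2, 2, -2), (5, 5, 0))
print(detect_clashes([footing, water_main, footing_2], cross_discipline_only=True))
# [('Footing F1', 'Water main WM-2'), ('Water main WM-2', 'Footing F2')]
```

The `cross_discipline_only` flag mirrors the distinction above between checks within a discipline and checks across disciplines.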

Asset Synonym: Facility. Fixed facilities within a transportation network managed and operated by public agencies.

Asset Category Primary or top-level method of cataloging transportation assets. Agency priority asset categories include bridges and structures, pavements, drainage networks, traffic safety, and intelligent transportation systems.

Asset Class The second level of cataloging assets within an asset category hierarchy.

Auditor The person who audits a project’s quality documentation.

Audit Date The date on which the Auditor audited the quality documentation.

Attribute Related terms: Non-graphical Data, Property. Non-graphical data that is part of a model element definition. Modern modeling software includes property fields that can be used to embed pay item numbers as attributes of elements in a 3D model.

Back Checker The person who reviews the Reviewer’s comments and markups and resolves issues or areas of non-concurrence. This may be the Originator. Some DOTs do not require back checks for design reviews.

Back Checker Date The date on which the Back Checker completed the back check.

BIM Execution Plan (BEP) Synonyms: Digital Delivery Execution Plan. Related terms: BIM Manager, Building Information Modeling. A plan to manage the use of BIM, especially collaboration and information delivery, to accomplish project goals.

BIM Manager Related terms: BIM Execution Plan, Building Information Modeling, Model Author, Model Manager. The individual, normally identified in a BEP, who is responsible for overseeing BIM usage on a project.

Building Information Modeling (BIM) Related terms: BIM Execution Plan, BIM Manager. The use of a shared digital representation of a built asset to facilitate design, construction, and operation processes and to form a reliable basis for decisions. (ISO 19650-1:2018(E))

Computer-Aided Design and Drafting (CADD) The process of creating computer models based on parameters.

Calculation A mathematical process, performed manually or with automated tools, that uses formulas, equations, and/or computer programs to extract numerical data and reach a numerical solution or interpretation of data.

Calculation Quality Control Form A form used to document and certify that the calculation review has been performed.

Check The act of inspecting or testing something to determine its accuracy or quality.

Certifier The Discipline Lead or Design Manager who certifies that the design was completed to the agency’s specifications and in alignment with a comprehensive quality management framework. Typically, only final deliverables are certified.

Certification A Yes/No field indicating whether the Certifier has certified the design.

Clash Detection Related terms: 3D Coordination. A technique used in BIM or digital delivery processes to identify conflicts or collisions between various model elements.

Common Data Environment (CDE) The agreed-upon source of information for a project or asset, which is used for collecting, managing, and disseminating each information container through a managed process. (ISO 19650-1:2018(E))

Contract Documents A collection of clearly identifiable documents that describe the requirements and terms for a project. Contract documents typically include plans, specifications, and working drawings. The specifications define plans and working drawings and establish how contract documents are coordinated in the case of a conflict. Models and/or CADD documents may be included in the definition of Plans and Working Drawings or defined as specific contractual entities in the Specifications or Special Provisions.

Corrector The person who made corrections to the design documents. This may be the Originator.

Correction Date The date on which corrections were completed.

Data Exchange The process of transforming and restructuring data organized under a source schema into a target schema, so that the target data accurately represents the source data within specified requirements and with minimal loss of content.
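A data exchange can be sketched as a field-by-field mapping from a source schema to a target schema that also reports which content has no target equivalent. This is a simplified illustration only: real exchanges (for example, into IFC) also restructure geometry and relationships, and `FIELD_MAP`, `exchange`, and `is_accurate` are hypothetical names.

```python
from typing import Any

# Hypothetical mapping from a source schema's field names to a target schema's.
FIELD_MAP = {"PayItem": "pay_item_number", "Mat": "material", "GUID": "global_id"}


def exchange(
    record: dict[str, Any], field_map: dict[str, str]
) -> tuple[dict[str, Any], list[str]]:
    """Restructure one source record into the target schema.

    Returns the target record plus the source fields that have no target
    equivalent, i.e., the content that would be lost in the exchange.
    """
    target: dict[str, Any] = {}
    lost: list[str] = []
    for key, value in record.items():
        if key in field_map:
            target[field_map[key]] = value
        else:
            lost.append(key)
    return target, lost


def is_accurate(
    source: dict[str, Any], target: dict[str, Any], field_map: dict[str, str]
) -> bool:
    # Every mapped source value must arrive unchanged in the target record.
    return all(
        field_map[key] in target and target[field_map[key]] == value
        for key, value in source.items()
        if key in field_map
    )


source = {"PayItem": "PI-001", "Mat": "Concrete", "GUID": "a1b2", "LineColor": 3}
target, lost = exchange(source, FIELD_MAP)
print(target)  # {'pay_item_number': 'PI-001', 'material': 'Concrete', 'global_id': 'a1b2'}
print(lost)    # ['LineColor']
print(is_accurate(source, target, FIELD_MAP))  # True
```

Reporting the unmapped fields, rather than silently dropping them, is what lets a reviewer judge whether the loss of content stays within the specified requirements.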

Design Authoring The process in which 3D design or modeling software is used to develop 3D models based on specific roadway and structural criteria to convey design intent for construction. The core functions of design authoring software include developing and analyzing design elements, whereas modeling software primarily develops 3D objects. Depending on the discipline, the design authoring software may be the same as the modeling software.

Design Element Synonyms: Model Element. A component of a model that represents a physical object (e.g., a sign) or abstract concept (e.g., alignment, north arrow).

Design Review The process in which a 3D model is used to review and provide feedback related to multiple aspects of design, including evaluation of design alternatives and environmental constraints, review and validation of geometric design criteria, and completeness or quality of overall design.

Digital Record Related term: Quality Artifact. A file that contains data stored in a digital format.

Discipline Related term: Functional Area. A description of the discipline under which the content being reviewed falls.

Discipline-Specific Model Related term: Federated Model. A model or set of linked models related to a single discipline. For example, a superstructure model, a substructure model, and detailing models can be linked together into a federated Structural Discipline Model.

Document Type A description of the type of document being reviewed, which could come from a lookup list (e.g., calculations, design model).

Documentation Documents or records that serve as a record.

Facility Any physical asset within a transportation or roadway network (e.g., roads, bridges, tunnels, overpasses, rest areas).

Federated Model Related term: Discipline-Specific Model. A model compiled by referencing models together using a common spatial reference frame. A federated model is most commonly used to combine all discipline-specific models to represent the project as a whole. However, when an individual discipline uses multiple models to develop a discipline-specific design, a federated model could be used to represent a single discipline.
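Federation in a common spatial reference frame can be sketched as translating each discipline model's local coordinates by its origin offset. The sketch assumes a translation-only relationship between frames; in practice, differing projections or datums (such as a local project datum versus a state plane grid) require a full coordinate transformation. `DisciplineModel` and `federate` are hypothetical.

```python
from dataclasses import dataclass, field

Point = tuple[float, float, float]


@dataclass
class DisciplineModel:
    discipline: str
    # Hypothetical: the model's local origin expressed in project coordinates.
    origin: Point
    # Element name -> insertion point in the model's local coordinates.
    elements: dict[str, Point] = field(default_factory=dict)


def federate(models: list[DisciplineModel]) -> dict[str, Point]:
    """Place every element of every model into one shared project frame.

    Only a translation is applied; rotation, scale, and datum transformations
    are assumed to have been resolved already.
    """
    federated: dict[str, Point] = {}
    for model in models:
        ox, oy, oz = model.origin
        for name, (x, y, z) in model.elements.items():
            federated[f"{model.discipline}/{name}"] = (x + ox, y + oy, z + oz)
    return federated


roadway = DisciplineModel("Roadway", (1000.0, 2000.0, 0.0), {"PC 10+00": (0.0, 0.0, 100.0)})
structures = DisciplineModel("Structures", (1050.0, 2000.0, 0.0), {"Pier 1": (0.0, 5.0, 95.0)})
print(federate([roadway, structures]))
# {'Roadway/PC 10+00': (1000.0, 2000.0, 100.0), 'Structures/Pier 1': (1050.0, 2005.0, 95.0)}
```

Prefixing each element with its discipline keeps same-named elements from different models distinct in the federated result.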

Functional Area Related term: Discipline. A description of the sub-discipline or functional area under which the content being reviewed falls.

Graphical Data Related terms: Spatial Data, Non-graphical Data. Data conveyed using shape and arrangement and/or location in space. Graphical data includes spatial data and non-spatial data.

Industry Foundation Classes (IFC) Related term: Open Data Format. A non-proprietary data schema and format to describe, exchange, and share the physical and functional information for assets within a facility.

Layer Synonyms: Level. A means of segmenting data within a file. Each model entity is assigned to a layer, and layer properties can control the visual style, editability, and visibility of all elements on that layer.
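The way layer properties act on every element assigned to a layer can be sketched as follows. The `Layer` and `Entity` classes and the layer names are hypothetical and do not reflect any particular CADD platform's API.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    visible: bool = True
    locked: bool = False  # a locked layer blocks edits to every element on it
    color: str = "white"  # one example of a visual-style property


@dataclass
class Entity:
    entity_id: str
    layer: str  # name of the layer the entity is assigned to


def visible_entities(entities: list[Entity], layers: dict[str, Layer]) -> list[str]:
    # Visibility is decided by the layer, not by the individual entity.
    return [e.entity_id for e in entities if layers[e.layer].visible]


def can_edit(entity: Entity, layers: dict[str, Layer]) -> bool:
    return not layers[entity.layer].locked


layers = {
    "EX_TOPO": Layer("EX_TOPO", locked=True),
    "PR_EOP": Layer("PR_EOP", color="red"),
    "CONST_LINES": Layer("CONST_LINES", visible=False),
}
entities = [Entity("e1", "EX_TOPO"), Entity("e2", "PR_EOP"), Entity("e3", "CONST_LINES")]
print(visible_entities(entities, layers))  # ['e1', 'e2']
print(can_edit(entities[0], layers))       # False
```

Toggling a single layer property changes the behavior of every entity on that layer at once, which is why layers are a convenient unit for segmenting data.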

Level of Information Need (LOIN) Specifications The minimum requirements for each model element within a discipline and/or project model(s). LOIN defines both the level of detail of the geometry and the level of information attached to model elements. A LOIN specification may define requirements for the final deliverable or may define a progressive specification with increasing detail and information at successive milestones.
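A progressive LOIN specification can be checked mechanically at each milestone. This minimal sketch assumes geometric detail is expressed on a hypothetical 1-to-5 integer scale and that information requirements are a set of required attribute names; `LOIN_SPEC` and `loin_gaps` are illustrative, not drawn from any published specification.

```python
# Hypothetical progressive LOIN specification for a single element type:
# at each milestone, a minimum geometric level (assumed 1-5 scale) and the
# attributes that must be populated.
LOIN_SPEC = {
    "Preliminary": {"min_geometry": 2, "attributes": {"global_id"}},
    "Final": {"min_geometry": 3, "attributes": {"global_id", "material", "pay_item_number"}},
}


def loin_gaps(element: dict, milestone: str, spec: dict = LOIN_SPEC) -> list[str]:
    """List every way an element falls short of the LOIN required at a milestone."""
    required = spec[milestone]
    gaps = []
    if element["geometry_level"] < required["min_geometry"]:
        gaps.append(
            f"geometry level {element['geometry_level']} < required {required['min_geometry']}"
        )
    # Required attributes that are absent or left empty.
    populated = {k for k, v in element["attributes"].items() if v not in (None, "")}
    for name in sorted(required["attributes"] - populated):
        gaps.append(f"missing attribute: {name}")
    return gaps


pier = {"geometry_level": 2, "attributes": {"global_id": "a1b2", "material": ""}}
print(loin_gaps(pier, "Preliminary"))  # []
print(loin_gaps(pier, "Final"))
# ['geometry level 2 < required 3', 'missing attribute: material', 'missing attribute: pay_item_number']
```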

Library A software resource file that provides configuration or utilities to aid in the use of the software. A library may contain object definitions, styles, scripts, property sets, configuration data, and more.

Generally, libraries are developed to reflect the agency’s model development standards and packaged into the modeling software configuration.

Metadata Information that describes the characteristics of a dataset. Metadata may include structural metadata, which describes data structures (e.g., data format), and descriptive metadata, which describes data contents (e.g., roadway design). Metadata is used to describe and manage documents and other information containers.

Milestone A description of the stage in the project development life cycle during which the review occurred. This field should be populated from a lookup list.

Model A representation of a system that allows for investigation of the system properties. (EN ISO 29481-1:2016).

Model Author Related terms: BIM Manager, Model Manager. The individual, normally identified in a BEP, responsible for creating a specific model element or group of model elements.

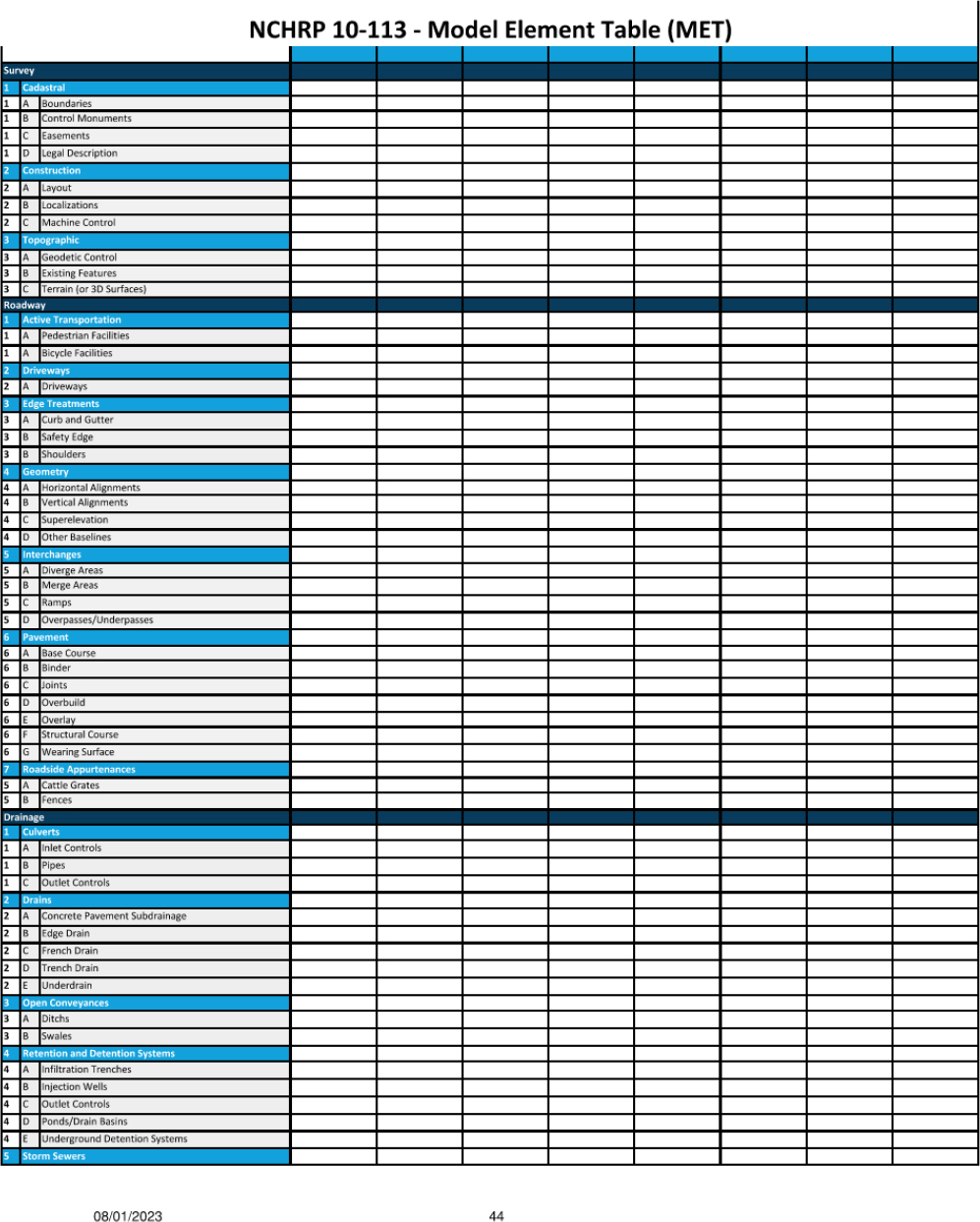

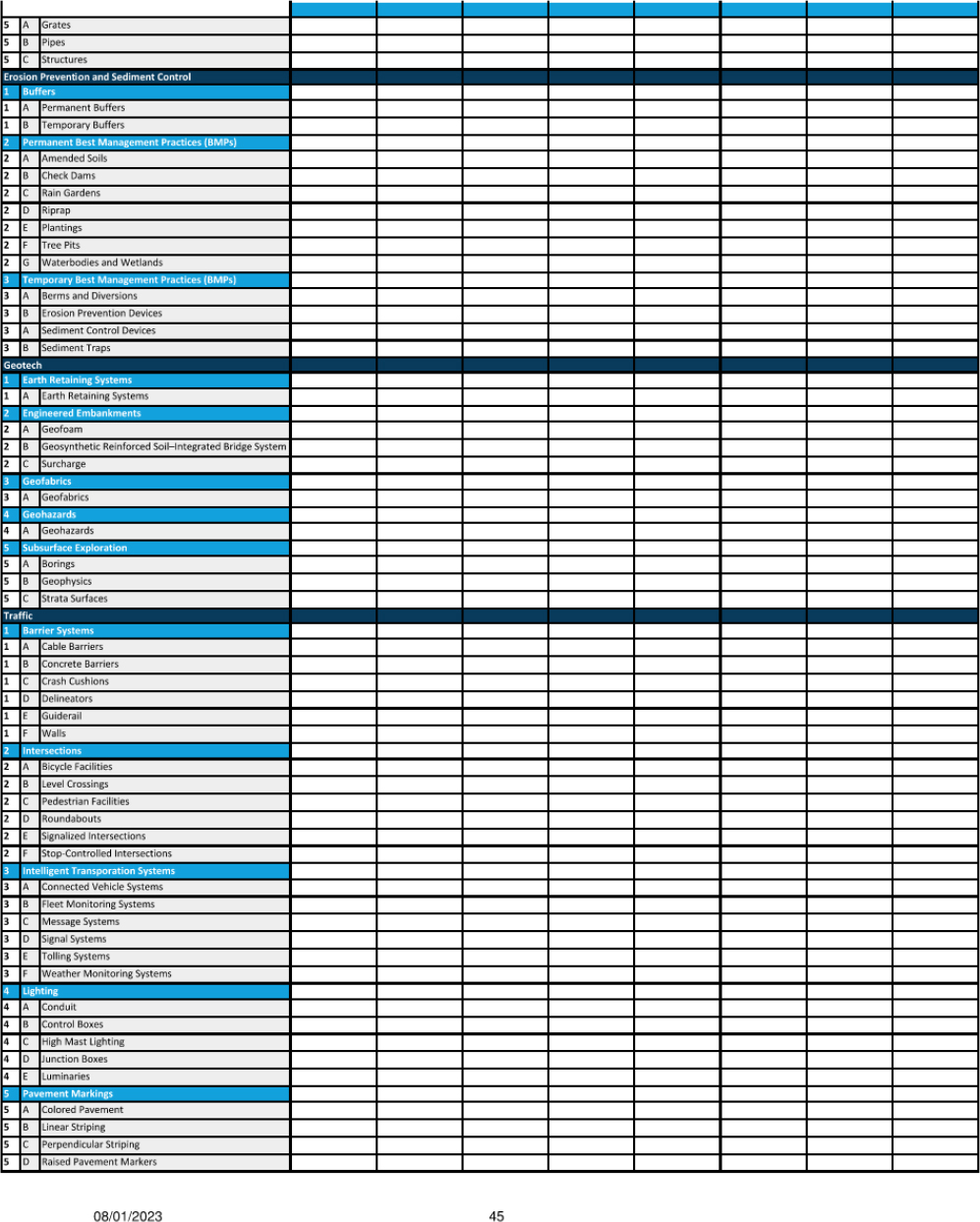

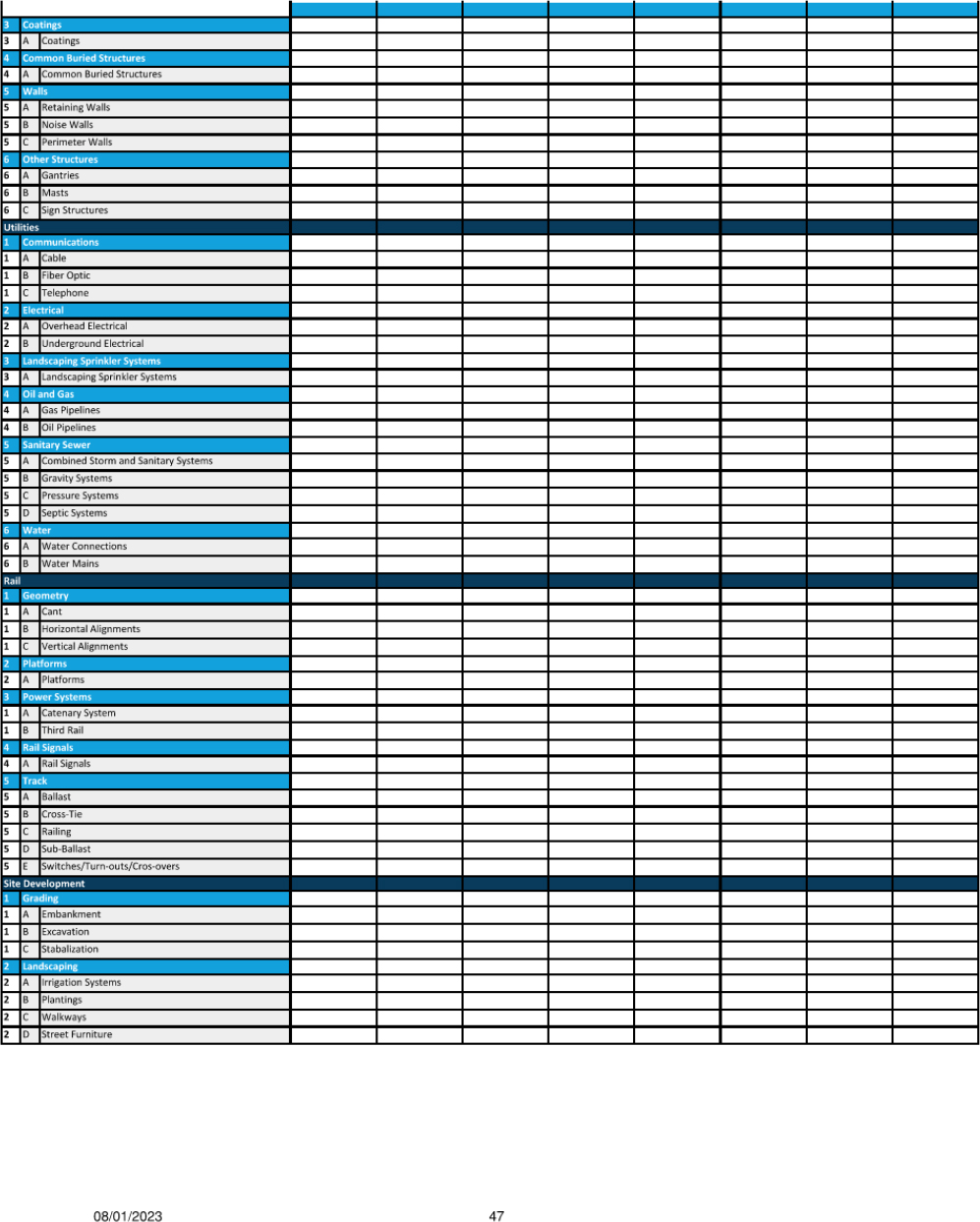

Model Element Table (MET) A classified list of model elements. A MET can be used to create a Model Progression Specification, which describes how elements of discipline-specific models increase in LOIN throughout the design process. The MET can also be used to document the quality management process.
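One way to picture a MET is as a table whose rows record each element's planned LOIN by milestone alongside quality fields. The sketch below, with hypothetical milestone names, numeric LOIN levels, and field names, flags elements whose LOIN decreases between milestones (a progression specification should only increase) and elements with no recorded review.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical milestone sequence; agencies define their own.
MILESTONES = ["Preliminary", "Intermediate", "Final"]


@dataclass
class METRow:
    element: str
    classification: str  # e.g., an asset category or asset class
    model_author: str
    loin_by_milestone: dict[str, int]  # assumed numeric LOIN level per milestone
    reviewed_by: Optional[str] = None  # quality record: who checked this element


def non_progressing(met: list[METRow]) -> list[str]:
    """Elements whose LOIN drops between successive milestones."""
    flagged = []
    for row in met:
        levels = [row.loin_by_milestone[m] for m in MILESTONES]
        if any(later < earlier for earlier, later in zip(levels, levels[1:])):
            flagged.append(row.element)
    return flagged


def unreviewed(met: list[METRow]) -> list[str]:
    return [row.element for row in met if row.reviewed_by is None]


met = [
    METRow("Pier 1", "Substructure", "Author A",
           {"Preliminary": 1, "Intermediate": 2, "Final": 3}, "Reviewer B"),
    METRow("Deck", "Superstructure", "Author C",
           {"Preliminary": 2, "Intermediate": 1, "Final": 3}),
]
print(non_progressing(met))  # ['Deck']
print(unreviewed(met))       # ['Deck']
```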

Model Manager Related terms: BIM Manager, Model Author. The individual, normally identified in a BEP, responsible for a discipline-specific model. Model manager responsibilities are normally documented in the BEP. Typical responsibilities include managing design authoring within the discipline-specific model and implementing quality procedures for the discipline.

Non-graphical Data Related terms: Attribute, Graphical Data, Property. Data that describes attributes and properties of a model element that do not relate to its physical dimensions or location. A globally unique identifier is a common non-graphical attribute.

Open Data Format Related terms: Industry Foundation Classes, Proprietary Data Format. Data that is structured according to a schema and published in a format that is free to use and redistribute. Open data formats are frequently supported by multiple software products, enabling the exchange of data.

Originator The individual responsible for content being reviewed.

Project Identifier A project number or other identifier that can be used to connect review documentation to the project dataset.

Property Synonyms: Attribute. Non-graphical information that describes a model element. For instance, the Modulus of Elasticity is a property of a material (e.g., steel).

Proprietary Data Format Related term: Open Data Format. Data that is structured according to a proprietary schema. Data stored in a proprietary data format can usually only be read and written by one vendor’s software products.

Quality Artifact Related term: Digital Record. An auditable record of quality checks that have been performed.

Quality Assurance (QA) All the planned and systematic activities necessary to provide confidence that a product or facility will perform satisfactorily once in service; includes quality control, independent assurance, and acceptance as its three key components.

Quality Control (QC) Actions and considerations necessary to assess production and construction processes to control the level of quality being produced in the end product.

Reference Related terms: Federated Model. An information container (e.g., a 3D model) stored in a separate file and federated with a 3D model as a read-only backdrop. While the visibility and visual styles of a reference can sometimes be adjusted, the data within the reference cannot be edited.

Review An assessment or examination of something. In this context, review refers to examining design models.

Reviewer Synonym: Checker. The individual responsible for reviewing model content.

Reviewer Credentials The Reviewer’s credentials: some DOTs require specific reviewers to be a registered professional, such as Professional Engineer (PE), Structural Engineer (SE), or Land Surveyor (LS).

Review Criteria A description of the standard or code against which the review is executed (e.g., design manual, standard code, checklist).

Review Date The date on which the Reviewer completed their review.

Review Title The title of the Reviewer: a way to demonstrate the reviewer’s expertise to justify their position as a reviewer. Some DOTs have seniority requirements for conducting some review types.

Review Type A description of the review’s specific purpose.

Rule Set A collection of criteria for implementing an algorithm. Rule sets are typically used with clash detection algorithms, where they specify clearance envelopes around specific groups of model elements; an intrusion into an envelope constitutes a clash.
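A rule set can be sketched as a lookup from pairs of element groups to a required clearance, with each clearance applied by inflating one element's bounding box before the overlap test. The groups, clearance values, units (feet), and function names are hypothetical, and bounding boxes stand in for real geometry.

```python
from dataclasses import dataclass
from typing import Optional

Point = tuple[float, float, float]


@dataclass(frozen=True)
class Box:
    """A model element approximated by an axis-aligned bounding box."""
    name: str
    group: str
    lo: Point
    hi: Point


# Hypothetical rule set: pairs of element groups -> required clearance (feet).
RULES: dict[tuple[str, str], float] = {
    ("Foundations", "Utilities"): 1.5,
    ("Drainage", "Utilities"): 1.0,
}


def clearance_for(a: Box, b: Box, rules: dict[tuple[str, str], float]) -> Optional[float]:
    # Rules are symmetric, so look the pair up in either order.
    return rules.get((a.group, b.group), rules.get((b.group, a.group)))


def violates(a: Box, b: Box, clearance: float) -> bool:
    # Inflate a's box by the clearance envelope, then test overlap with b.
    return all(
        a.lo[i] - clearance < b.hi[i] and b.lo[i] < a.hi[i] + clearance for i in range(3)
    )


def apply_rules(boxes: list[Box], rules: dict[tuple[str, str], float]) -> list[tuple[str, str, float]]:
    hits = []
    for i, a in enumerate(boxes):
        for b in boxes[i + 1:]:
            clearance = clearance_for(a, b, rules)
            # Pairs of groups with no rule are not checked at all.
            if clearance is not None and violates(a, b, clearance):
                hits.append((a.name, b.name, clearance))
    return hits


footing = Box("Footing F1", "Foundations", (0, 0, -3), (4, 4, 0))
gas_line = Box("Gas line G-3", "Utilities", (5.0, -10, -2), (5.2, 10, -1.8))
culvert = Box("Culvert C-1", "Drainage", (20, -2, -4), (25, 2, -1))
print(apply_rules([footing, gas_line, culvert], RULES))
# [('Footing F1', 'Gas line G-3', 1.5)]
```

The footing and gas line do not physically intersect; the 1.0 ft gap between them is reported because it falls inside the 1.5 ft clearance envelope.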

Saved View A predetermined set of saved attributes including viewpoints, scale, render style, orientation, and object and display settings saved for future retrieval.

Spatial Data Related terms: Graphical Data, Non-graphical Data, Model. Graphical data placed within a coordinate reference frame that is tied to a geographic coordinate system, so that the information corresponds to a physical location. Spatial data is often stored in 3D models and Geographic Information Systems (GIS).

Transmittal Date The date the Originator submitted materials for review.

Verifier The person who verified that corrections were adequately addressed. This may be the Reviewer.

Verifier Date The date corrections were verified.
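Several of the terms above (Transmittal Date, Review Date, Back Checker Date, Correction Date, and Verifier Date) are fields of a review record, and keeping that record as a digital record makes simple consistency checks possible. The sketch assumes the steps occur in the order transmittal, review, back check, correction, verification, which is an interpretation of the definitions rather than a stated requirement; `ReviewRecord`, `chronology_errors`, and the sample values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ReviewRecord:
    project_identifier: str
    originator: str
    reviewer: str
    transmittal_date: date
    review_date: date
    correction_date: date
    verifier_date: date
    back_checker_date: Optional[date] = None  # some DOTs do not require back checks


def chronology_errors(record: ReviewRecord) -> list[str]:
    """Flag any step dated earlier than the step assumed to precede it."""
    steps = [("transmittal", record.transmittal_date), ("review", record.review_date)]
    if record.back_checker_date is not None:
        steps.append(("back check", record.back_checker_date))
    steps += [("correction", record.correction_date), ("verification", record.verifier_date)]
    errors = []
    for (prev_name, prev_date), (name, cur_date) in zip(steps, steps[1:]):
        if cur_date < prev_date:
            errors.append(f"{name} date {cur_date} precedes {prev_name} date {prev_date}")
    return errors


record = ReviewRecord(
    project_identifier="PROJ-0001",  # hypothetical identifier
    originator="Originator A",
    reviewer="Reviewer B",
    transmittal_date=date(2025, 3, 1),
    review_date=date(2025, 3, 5),
    correction_date=date(2025, 3, 12),
    verifier_date=date(2025, 3, 10),
)
print(chronology_errors(record))
# ['verification date 2025-03-10 precedes correction date 2025-03-12']
```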