Government-Industry Partnerships for the Development of New Technologies (2003)

Chapter: Federal Partnerships with Industry: Past, Present, and Future

A BRIEF HISTORY OF FEDERAL SUPPORT1

The earliest articulation of the government’s nurturing role with regard to the composition of the economy was Alexander Hamilton’s 1791 Report on Manufactures, in which he urged an activist approach by the federal government. At that time, Hamilton’s emphasis on industrial development was controversial, although subsequent U.S. policy has largely reflected his belief in the need for an active federal role in the development of the U.S. economy.2 In fact, driven by the exigencies of national defense and the requirements of transportation and communication across the North American continent, the federal government in that same decade played an instrumental role in developing new production techniques and technologies by turning to individual entrepreneurs with innovative ideas. Most notably, in 1798 the federal government aided the foundation of the first machine tool industry with a contract to the inventor Eli Whitney for interchangeable musket parts.3

A few decades later, in 1842, Congress appropriated funds to demonstrate the feasibility of Samuel Morse’s telegraph.4 Both Whitney and Morse fostered significant innovations that led to completely new industries. Indeed, Morse’s innovation was the first step on the road to today’s networked planet. The support for Morse was not an isolated case. The federal government increasingly saw economic development as central to its responsibilities. During the nineteenth century, the federal government played an instrumental role in developing the U.S. railway network through the Pacific Railroad Act of 1862 and the Union Pacific Act of 1864.5 While not without abuse, these acts provided very substantial financial incentives to the development of the U.S. rail network. The federal government also played a key role in developing the farm sector through the 1862 Morrill Act,6 which established state agricultural and engineering colleges; the 1887 Hatch Act, which established state agricultural experiment stations; and the 1914 Smith-Lever Act, which added state agricultural extension services.7 The Union Pacific Act made it possible to build one of the world’s great transportation systems. It was also for its time a major partnership, both because market incentives were not sufficient and because the government provided major financial inducement to encourage this massive undertaking. The inducements were on the scale of the enterprise, and not without abuse, but the fundamental policy objectives of economic growth and national unity were achieved.

There were also major benefits in terms of management, organization, and market scale for many other American firms—what economists today would call positive externalities—created by the extension of a national railroad network. Alfred Chandler has observed that

As the first private enterprises in the United States with modern administrative structures, the railroads provided industrialists with useful precedents for organization building . . . . More than this, the building of the railroads, more than any other single factor, made possible this growth of the great industrial enterprise. By speedily enlarging the market for American manufacturing, mining, and marketing firms, the railroads permitted and, in fact, often required that these enterprises expand and subdivide their activities.8

This support continued and expanded into the twentieth century. In 1901, the federal government established the National Bureau of Standards to coordinate a patchwork of locally and regionally applied standards, which, often arbitrary, were a source of confusion in commerce and a hazard to consumers. Later the federal government provided special backing for the development of (what we now call) dual-use industries, such as aircraft frames and engines and radio, seen as important to the nation’s security and commerce. The National Advisory Committee for Aeronautics, formed in 1915, contributed to the development of the U.S. aircraft industry, a role the government still plays.9 Similarly, the Navy was instrumental in launching the U.S. radio industry by encouraging patent pooling and by direct participation in the Radio Corporation of America (RCA).10

The unprecedented challenges of World War II generated huge increases in the level of government procurement and support for high-technology industries.11 Today’s computing industry has its origins in the government’s wartime support for a program that resulted in the creation of the ENIAC, one of the earliest electronic digital computers, and the government’s steady encouragement of that fledgling industry in the postwar period.12 Following World War II the federal government began to fund basic research at universities on a significant scale. This was done first through the Office of Naval Research and later through the National Science Foundation.13

These activities were complemented by aggressive procurement efforts during the Cold War, when the government continued to emphasize technological superiority as a means of ensuring U.S. security. Government funds and cost-plus contracts helped to support enabling technologies, such as semiconductors, new materials, radar, jet engines, missiles, and computer hardware and software.14

In the post-Cold War period the evolution of the U.S. economy continues to be marked by the interaction of government-funded research and procurement and the activities of innovative entrepreneurs and leading corporations. In the last decade of the twentieth century government support was essential to progress in such areas as microelectronics, robotics, biotechnology, nanotechnologies, and the investigation of the human genome. Patient government support also played a critical role in the development of the Internet (whose forerunners were funded by the Defense Department and the NSF).15 Together these technologies make up the foundation of the modern economy.

As Vernon W. Ruttan has observed, “Government has played an important role in technology development and transfer in almost every U.S. industry that has become competitive on a global scale.”16 Importantly, the U.S. economy continues to be distinguished by the extent to which individual entrepreneurs and researchers take the lead in developing innovations and starting new businesses. Yet in doing so they often harvest crops sown on fields made fertile by the government’s long-term investments in research and development.17

CURRENT TRENDS IN FEDERAL SUPPORT

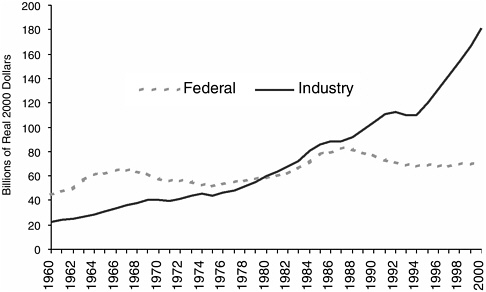

The federal government’s role in supporting innovation through funding of R&D remains significant, although non-federal entities increased their share of national funding for R&D from 60 percent to 74 percent between 1990 and 2000 (see Figure 1). Federal funding still supports a substantial component, 27 percent, of the nation’s total research expenditures.18 Significantly, federal expenditures constitute 49 percent of basic research spending. In addition, federal funding for research tends to be more stable and based on a longer time horizon than funding from other sources. Commitment of federal research spending is therefore an essential component of the U.S. innovation system.

FIGURE 1 Total real R&D expenditures by source of funds, 1960-2000.

Source: U.S. National Science Foundation, National Patterns of R&D Resources.

Changing Priorities and Funding

Shifts in the composition of federal research support therefore remain important both in their own right and for the impact these shifts may have on the future development of our most innovative industries, such as biotechnology and computers, which promise to be a source of substantial innovation and growth. In both cases the role and impact of federal R&D funding are of great importance. In the case of biomedicine the promise of better health, and the tangible benefits it represents, has prompted a rapid expansion of federal support for the National Institutes of Health.19

By the late part of the 1990s this trend had steadily gained momentum, resulting in congressional agreement to double the funding for the National Institutes of Health (NIH) over five years. This agreement has led, through two administrations, to major yearly increases in federal funding for biomedical research. This has raised concerns, even among the NIH leadership,20 that other areas of promising research that directly contribute to the development of medical technologies are suffering from relative neglect.21

Box C. Federal Support of Biomedical and Information Technology Research

The source of strength in the U.S. innovation system is the diversity in its funding sources, mechanisms, and missions. While it has had many successes, it is also true that the U.S. innovation system has always had imperfections with regard to the commercialization of promising ideas. In this context there are major challenges to achieving the promises of biomedical research. From a systemic perspective the main challenge is that the welcome increase in support for biomedical research has not been matched by increases in the supporting technologies required to effectively capitalize on the results of biomedical research. At the same time, support for the disciplines that drive progress in semiconductors, computers, and other key technologies has not only failed to increase but has also been reduced in real terms over a sustained period. In the view of leading figures from both the biomedical and information technology communities, this state of affairs puts at risk our ability to fully capitalize on the increasing investments in biomedical research, while equally putting at risk the economic growth that generates the revenue base for these R&D investments.

Adapted from National Research Council, Capitalizing on New Needs and New Opportunities: Government-Industry Partnerships in Biotechnology and Information Technologies, C. Wessner, ed., Washington, D.C.: National Academy Press, 2002.

As noted in Box C, shifts in federal spending for R&D are a cause for serious concern. There are two main issues: The first relates to the absolute amounts and allocations of spending. The second concerns the ability of the U.S. economy to capitalize on these investments. Each point is addressed below.

Shifts in Composition of Private Sector Research

Concerning the first point, there is a growing concern that the United States is not investing enough and broadly enough in research and development. In the private sector the demise of large industrial laboratories, such as IBM’s Yorktown facility and Bell Laboratories, has reduced the amount of basic research conducted by private companies.22 Hank Chesbrough, for instance, notes that large corporations “are shifting their resources away from basic discovery-oriented research to applied mission-oriented work. At the same time, … outsourcing more of their basic research work to small startups, independent research houses and contract research organizations, while also partnering with universities and national laboratories.”23 In light of these developments, the role of partnerships gains additional relevance. Private equity markets also influence the level and distribution of investment. While the equity market in the United States is among the most dynamic in the world, it does not equally address all phases of the innovation process. In fact, current trends in the venture industry—particularly the increase in deal size—make certain types of small, early-stage financing less likely, despite the overall increase in venture funding.24

Although private sector R&D has steadily increased in the United States in recent years, almost all of it has been product oriented rather than geared to basic research.25 In addition, the increase in corporate spending on research is concentrated in such sectors as the pharmaceutical industry and information technology. Within those sectors much of the R&D effort is necessarily distributed to product development, rather than to the more basic research questions.

Stagnation and Decline in Key Disciplines

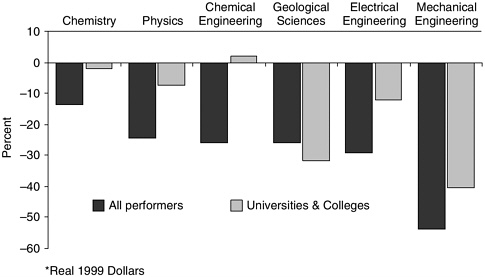

The second area of concern regards the allocation of federal research funds, specifically, the unplanned shifts in the level of federal support within the U.S. public R&D portfolio. As highlighted in a recent National Research Council report,26 the United States has experienced a largely unplanned shift in the allocation of public R&D.27 The end of the Cold War and a political consensus to reduce the federal budget deficit have resulted in reductions in federal R&D funding in real terms for some disciplines.

In practice, for example, the decline of the defense budget corresponded with a slowdown in real terms of military support for research in physics, chemistry, mathematics, and most fields of engineering. This STEP Board study showed that in 1997 several agencies were spending substantially less on research than in 1993, even though the overall level of federal research spending was nearly the same as it was in 1993. The Department of Defense dropped 27.5 percent, the Department of the Interior was down by 13.3 percent, and the Department of Energy had declined by 5.6 percent.28 Declines in funding for the Departments of Defense and Energy are significant because traditionally these agencies have provided the majority of federal funding for research in electrical engineering, mechanical engineering, materials engineering, physics, and computer science.29

After five years of stagnation federal funding for R&D did recover in FY1998. In 1999 total expenditures were up 11.7 percent over the 1993 level. These changes were driven mainly by the increases in the NIH appropriations. Breakthroughs in biotechnology and the promise of effective new medical treatments have resulted in a substantial increase in funding for the NIH, which is slated for further increases by the current administration.30

26 See Michael McGeary, “Recent Trends in the Federal Funding of Research and Development Related to Health and Information Technology,” 2002, op. cit.

27 See Stephen A. Merrill and Michael McGeary, “Who’s Balancing the Federal Research Portfolio and How?” Science, vol. 285, September 10, 1999, pp. 1679-1680. For a more recent analysis, see National Research Council, Trends in Federal Support of Research and Graduate Education, op. cit. For the impact of these shifts, see National Research Council, Capitalizing on New Needs and New Opportunities: Government-Industry Partnerships in Biotechnology and Information Technologies, op. cit.

28 See Michael McGeary, “Recent Trends in the Federal Funding of Research and Development Related to Health and Information Technology,” 2002, op. cit.

29 Ibid.

30 See American Association for the Advancement of Science, “AAAS Preliminary Analysis of R&D in FY 2003 Budget,” February 8, 2002, <www.aaas.org/spp/R&D>. The AAAS notes that under President Bush’s proposed budget “[n]on-defense R&D would increase by 7.8 percent or $3.8 billion. NIH would make up almost half of the entire non-defense R&D portfolio with another large increase, the fifth and final installment of a plan to double the NIH budget in the five years to FY2003. Excluding NIH, however, all other non-defense R&D would fall by 0.4 percent to $26.7 billion.” See also National Research Council, Trends in Federal Support of Research and Graduate Education, op. cit., p. 2.

FIGURE 2 Real changes in federal obligations for research, FY1993-1999 (in real 1999 dollars).31

Notwithstanding this change in overall R&D funding, the most recent analysis shows that even with the increase in federal research funding after 1997, the shift in the composition of federal support toward biomedical research remains largely unchanged. In 1999 the life sciences had 46 percent of federal funding for research (see Figure 2), compared with 40 percent in 1993.32 This difference in funding trends between the physical sciences and engineering on the one hand and the life sciences on the other hand is worrying insofar as progress in one field can depend increasingly on progress in others. Recent shifts in federal investment in research across disciplines may therefore have major consequences.

First, these shifts will affect our ability to exploit fully the existing public investments in the biomedical sciences. Bringing biomedical products from the laboratory to the market is often dependent on information technology-based products and processes. Second, there is also growing concern over the cumulative impact that the reduction in federal support for computing research and semiconductor technologies, and the decline over several years in support for the disciplines that provide the research and students to the industry, may have on continued U.S. leadership in innovation and commercial applications in semiconductors, computers, and related applications.33

As we have seen, the federal government has long had a role in fostering scientific and technological progress. Yet the scope and diversity of this effort are not always fully appreciated by the general public, or often by the policy community. While support of universities and grant-making organizations, such as that by the NSF and the NIH, is well known, the important and unique roles that agencies such as the Department of Energy and the Department of Defense play in providing support to diverse academic disciplines and technological developments are less widely understood.34

In fact, the policy community has only recently begun to recognize the ramifications of these differential funding trends for the nation’s continued economic growth and industrial preeminence. The committee’s October 1999 conference on Government-Industry Partnerships in Biotechnology and Information Technologies helped to focus public and policymaker attention on these concerns. The committee’s subsequent report on the topics raised at the conference represents one of the first efforts to document the extent of the decline in federal support to the disciplines that many believe are necessary to realize the promise of our investments in science and technology.35 Box D gives a more in-depth view of the need for strengthened investments in information technology and the opportunities for partnerships to help the nation capitalize on its investments in biomedical research.

MEETING TOMORROW’S CHALLENGES

The semiconductor industry illustrates the important role public policy on industry partnerships has played in the genesis, resurgence, and continual rapid growth of this industry.36 The implications of current trends in the allocation of federal support, and the recognition of future technical challenges, highlight the need for expanded public support for research—often through partnerships—if the exceptional growth of the information technology industry and the extraordinary benefits related to this growth are to continue.37

Federal Support

Public support played a significant role in the development and growth of the computer and semiconductor industries.38 The birth of the semiconductor industry can be dated to the invention of the first rudimentary transistor in 1947 at Bell Laboratories. Early transistor research received substantial public support; by 1952 the U.S. Army’s Signal Corps Engineering Laboratory had funded 20 percent of total transistor-based research at Bell Labs.39 The eagerness of the Defense Department to put to use this innovative and radical new technology encouraged the Army’s Signal Corps to fund half of the transistor work by 1953.40

This public support for the then nascent semiconductor industry became more prevalent after 1955, when R&D funds were allotted to companies other than Bell. This move followed an antitrust suit, pursued by the U.S. Department of Justice, that pressured Bell into sharing its patents on transistor diffusion processes.41 Some estimates show that, between the late 1950s and early 1970s, the federal government directly or indirectly funded up to 40 to 45 percent of industrial R&D in the semiconductor industry.42 On the demand side, federal consumption dominated the market for integrated circuits (ICs). ICs, for example, found their first major military application in the Minuteman II guided missile. Throughout the 1960s, military requirements were complemented by the needs of the Apollo space program.43

The Competitive Challenge of the 1980s

While the U.S. government was quick to provide early funding to promote the development of semiconductors for both military and space exploration programs,44 its subsequent role in assisting the commercial semiconductor sector was much more restrained. Meanwhile, governments abroad were more purposeful in supporting their domestic semiconductor industries. The Japanese government, for example, recognizing the country’s position as a late entrant in semiconductors, instituted policy measures to jump-start its industry in the 1970s. Under the guidance of the Ministry of International Trade and Industry (MITI) the country made a systematic effort to promote a vibrant domestic semiconductor industry.45 The vertically integrated structure of Japanese industry appeared to provide major advantages with respect to the capital-intensive investments required for manufacturing facilities. Japanese firms undertook a massive capacity build-up in the early 1980s, accelerating their gains in market share through aggressive price cutting. The total DRAM market share of U.S. industry sank from roughly 90 percent in the late 1970s to less than 10 percent by 1984-1985, with many U.S. firms exiting the DRAM market entirely.46 The dramatic impact of the Japanese competition led many informed U.S. observers to question the future viability of the U.S. semiconductor industry.47

Box D. Key Findings and Recommendations of the Committee for Government-Industry Partnerships in Biotechnology and Information Technologies

The Committee’s report on Capitalizing on New Needs and New Opportunities: Government-Industry Partnerships in Biotechnology and Information Technologies addresses a topic of fundamental policy concern. To capture the benefits of substantial U.S. investments in biomedical R&D, parallel investments in a wide range of seemingly unrelated disciplines are also required. This element of the Committee’s analysis draws attention to the fall-off in R&D investments in science and engineering needed to sustain the growth of the U.S. economy and to capitalize on the rapidly growing federal investments in biomedicine. It calls for greater support for interdisciplinary training to support such new areas as bioinformatics and urges more emphasis on and support for multidisciplinary research centers.

Among its central findings

Information Technology and Biotechnology Are Key Sectors for the Twenty-first Century. Advances in information technologies remain central to economic growth; they will also be critical to progress in the biotechnology revolution itself. At the same time, progress in biotechnology is increasingly dependent on the pace of advances in information technology.

Multidisciplinary Approaches Are Increasingly Needed in Science and Engineering Research. Complex research problems require the integration of both people and new knowledge across a range of disciplines. In turn this requires knowledge workers with interdisciplinary training in mathematics, computer science, and biology.

Government-Industry and University-Industry Partnerships Have Often Been Effective in Supporting the Development of New Technologies. Partnerships that have often provided effective support include the engineering research centers and science and technology centers funded by the NSF, the National Institute of Standards and Technology’s ATP program, and the Department of Defense’s former Technology Reinvestment program. They also included activities funded by cooperative research and development agreements (CRADAs). In addition, SEMATECH, initially government and industry funded, has played a key role in improving manufacturing technologies for the U.S. semiconductor industry.

Federal R&D Funding and Other Innovation Policies Have Been Important in Supporting the Development of U.S. Industrial Capabilities in Computing and Biotechnology. The history of the U.S. biotechnology and computing sectors illustrates the value of sustained federal support.

Limitations of Venture Capital. While the U.S. venture capital market, the largest and best developed in the world, often plays a crucial role in the formation of new high-technology companies, the provision of venture capital, with its informed assessment and management oversight, is but one element of a larger innovation system. It is not a substitute for government support of long-term scientific and technological research.

Key recommendations of the committee

Adapted from National Research Council, Capitalizing on New Needs and New Opportunities: Government-Industry Partnerships in Biotechnology and Information Technologies, C. Wessner, ed., Washington, D.C.: National Academy Press, 2002.

The Policy Response

In response to intense competition from the Japanese producers, the U.S. industry launched a series of initiatives, some independently (e.g., the Semiconductor Research Corporation). As the crisis deepened, the industry concluded it needed to cooperate with the government, first to stop what it considered to be unfair trade practices by Japanese producers, and later to strengthen its domestic capabilities.48 The range of these initiatives was extensive. They included:

Founding of the Semiconductor Research Corporation (1982);

Creation of the U.S.-Japan Working Group on High Technology (1983);

Passage of the National Cooperative Research Act (1984);

Passage of the Trade and Tariff Act (1984);

Passage of the Semiconductor Chip Protection Act (1984);

Signing of the Semiconductor Trade Agreement by the United States and Japan (1986);

Founding of SEMATECH (1987);

Congressional approval of the formation of the National Advisory Committee on Semiconductors (1988);

Renewal of the Semiconductor Trade Agreement (1990); and

Beginning of the Semiconductor Roadmap process (1992).

Efforts to address issues in U.S. manufacturing quality (see below) proceeded in parallel with efforts to resolve questions about Japan’s trading practices. A series of trade agreements between Japan and the United States did not resolve trade frictions between the two countries, nor did the agreements redress the steadily declining U.S. market share.49 At the urging of the industry, the federal government took several significant policy initiatives designed to support the U.S. semiconductor industry.

Following a series of unsatisfactory trade agreements, there was a significant shift in U.S. policy on trade in semiconductors, notably through the conclusion of the 1986 Semiconductor Trade Agreement (STA) with Japan.50 The agreement sought to improve access to the Japanese market for U.S. producers and to end dumping (selling products below cost) in U.S. and other markets.51 After President Reagan’s decision to impose trade sanctions, the STA brought an end to the dumping in the U.S. and other markets and succeeded in obtaining limited access to the Japanese market for foreign producers, in particular, Korean and later Taiwanese DRAM producers.52

A second major step was the industry’s decision to seek a partnership between the government and a coalition of like-minded private firms to form the SEMATECH consortium, whose purpose was to revive a seriously weakened U.S. industry through collaborative research and pooling of manufacturing knowledge.53 A central element of the challenges facing the U.S. semiconductor industry was manufacturing quality. By the mid-1980s, the leading U.S. semiconductor firms had recognized the strategic importance of quality and had begun to initiate quality improvement programs. A key element in this effort was the formation of the consortium, which in part reflected the belief that the Japanese cooperative programs had been instrumental in the success of Japanese producers.54

The SEMATECH consortium represented a significant new experiment for government-industry cooperation in technology development. Conceived and funded under the Reagan administration, the consortium represented an unusual collaborative effort, both for the U.S. government and for the fiercely competitive U.S. semiconductor industry.55 The Silicon Valley entrepreneurs hesitated about cooperating with each other and were even more hesitant about cooperating with the government—an attitude mirrored in some quarters in Washington.56

Industry Leadership

From the outset, the industry took a leading role in setting its objectives, managing its resources, and measuring its accomplishments.57 The consortium showed substantial flexibility in its early years as its members and leadership struggled to define where it could make the maximum impact. After an early focus on developing a manufacturing facility to help solve production problems (rather than rely on a lab), the consortium eventually focused on three goals which involved improving:

Manufacturing processes;

Factory management; and

Industry infrastructure, especially the supply base of equipment and materials.58

The formation of SEMATECH thus provided an opportunity to bring together leading U.S. producers to focus initially on product quality. During this period, the U.S. industry also increased its capital expenditure and improved its ability to manage the development and introduction of new process technologies into high-volume manufacturing.59 The industry was aided in this process through its collaboration on common challenges under the auspices of SEMATECH.

The Impact of SEMATECH

While many factors affected the recovery of the U.S. industry, the public policy initiatives in trade and cooperative research were key elements in the industry’s revival, contributing respectively to the restoration of financial health and product quality.60 The stronger performance of U.S. producers was revealed in gains in global market share that rested in part on improvements in product quality and manufacturing process yields, areas in which SEMATECH played a contributing role. As Flamm and Wang observe, though there are “a few vocal exceptions,” SEMATECH has been credited, within the industry, with playing some part in the “resurgence among U.S. semiconductor producers in the 1990s.”61 Perhaps the most compelling affirmation of the value of the consortium is the willingness of most of SEMATECH’s corporate members to continue participating in the consortium and to continue with this cooperation even after federal funding ceased.62 Other economists knowledgeable about the industry reach similar conclusions. For example, Macher, Mowery, and Hodges note that continued industry participation represents “a strong signal that industry managers believe that the consortium has produced important benefits.”63

59 | Ibid, p. 262. See also D.C. Mowery and N. Hatch, ”Managing the Development and Introduction of New Manufacturing Processes in the Global Semiconductor Industry,” in G. Dosi, R. Nelson, and S. Winter, eds., The Nature and Dynamics of Organizational Capabilities, New York: Oxford University Press, 2002. |

60 | See Jeffrey T. Macher, David C. Mowery, and David A. Hodges, “Semiconductors,” op. cit. pp. 266-267 and 277. |

61 | See Kenneth Flamm and Qifei Wang, Sematech Revisited: Assessing Consortium Impacts on Semiconductor Industry R&D. in this volume. Economists share this view. As Flamm notes, “Economists generally view the program as the preeminent model of a cooperative government-industry joint R&D venture.” See the presentation by Kenneth Flamm in National Research Council, Regional and National Programs to Support the Semiconductor Industry, op cit. For the views of a frequent critic, see T.J. Rodgers, “Silicon Valley Versus Corporate Welfare,” CATO Institute Briefing Papers, Briefing Paper No. 37, April 27, 1998. |

62 | At a meeting in 1994, the SEMATECH Board of Directors reasoned that the U.S. semiconductor industry had regained strength in both the device-making and supplier markets, and thus voted to seek an end to matching federal funding after 1996. For a brief timeline and history of SEMATECH, see <http://www.sematech.org/public/corporate/history/history.htm>. |

63 | See Jeffrey T. Macher, David C. Mowery, and David A. Hodges, “Semiconductors,” op. cit., p. 272. |

Perspectives from Abroad

The positive perception of the consortium has influenced the creation and structure of consortia abroad. Kenneth Flamm points out that the consortium is perceived as a success in Japan, directly influencing the formation and design of the ASET and SELETE programs (see Box B).64 SEMATECH appears also to have had a similar influence on the initiation and operation of European programs such as MEDEA and IMEC.65

An informed perspective on the positive model of the U.S. partnership is offered by Hitachi’s Toshiaki Masuhara, who observes that there has been a good balance of support in the United States by government and industry for research through the universities. This has included “a very good balance between design and processing.” He adds that the overall success of U.S. industry appears to have come from the contributions of five overlapping efforts. These include:

The SIA roadmap to determine the direction of research;

Planning of resource allocation by SIA and SRC;

Allocation of federal funding through the Department of Defense, the National Science Foundation, and the Defense Advanced Research Projects Agency;

The success of SEMATECH and International SEMATECH in supporting research on process, technology, design, and testing; and

The Focus Center Research Project.66

In addition to these informed opinions, the willingness of new firms such as Infineon (Germany), Philips (the Netherlands), and STMicroelectronics (France) to join International SEMATECH is a further affirmation of the perceived value of the consortium’s research and related activities.

64 | As a leading Japanese industrialist observed, “A major factor contributing to the U.S. semiconductor industry’s recovery from this perilous situation [in the 1980s] was a U.S. national policy based around cooperation between industry, government, and academia.” Hajime Sasaki, Chairman of NEC Corporation, “Japanese Semiconductor Industry’s Competitiveness: LSI Industry in Jeopardy,” Nikkei Microdevices, December 2000. |

65 | IMEC, headquartered in Leuven, Belgium, is Europe’s leading independent research center for the development and licensing of microelectronics, and information and communication technologies (ICT). IMEC’s activities concentrate on design of integrated information and communication systems; silicon process technology; silicon technology and device integration; microsystems, components and packaging; and advanced training in microelectronics. For more information on IMEC, see <http://www.imec.be/>. SEMATECH was emulated in the U.S. as well. For example, NEMI (The National Electronics Manufacturing Initiative) was formed in 1993 to focus on strategic electronic components and electronics manufacturing systems. NEMI is an industry-led consortium with fifty members. |

These positive views from leading figures in the industry, in both the U.S. and abroad, underscore Flamm and Wang’s observation that SEMATECH was thought to be a “privately productive and worthwhile activity.”67 Researchers such as Macher, Mowery, and Hodges support Flamm, finding that “government initiatives, ranging from trade policy to financial support to university research and R&D consortia, played a role in the industry’s revival,” while adding, as do Flamm and Wang, that the “specific links between undertakings such as SEMATECH and improved manufacturing performance are difficult to measure.”68 Challenges of measurement notwithstanding, those most closely involved (i.e., leading figures in the industry) are thus positive in their overall assessment of the consortium.69

A Positive Policy Framework

This unprecedented level of cooperation, and the important corresponding collaborative activity among the semiconductor materials and equipment suppliers, thus appear to have contributed to a resurgence in the quality of U.S. products and indirectly to the resurgence of the industry.70 The collective accomplishments and impact of the consortium may well have been an essential element contributing to the recovery of the U.S. industry, though it should be underscored that its contribution and other public policy initiatives were by no means sufficient to ensure the industry’s recovery. Essentially, these public policy initiatives can be understood as having collectively provided positive framework conditions for private action by U.S. semiconductor producers.71

Technical Challenges, Competitive Challenges, and Capacity Constraints

For more than 30 years the growth of the semiconductor industry has been largely associated with the ability of researchers to shrink the transistor steadily and quickly and thereby increase its speed, without commensurate increases in costs (Moore’s Law). If Moore’s Law is indeed to be maintained, then a continuation of productivity increases will likely depend on the ongoing benefits associated with the process of “scaling” in microelectronics.72 There are, however, physical limits to miniaturization, including odd and undesirable quantum effects that appear under extreme miniaturization.73

The semiconductor industry also faces the challenge of soaring chip-manufacturing costs. When Intel was founded in 1968, a single machine used to produce semiconductor chips cost roughly $12,000. Today a chip-fabricating plant costs billions of dollars, and the expense is expected to continue to rise as chips become ever more complex. Adding to this concern is the realization that capital costs are rising far faster than revenue.74 In 2000, for example, average total expenditures for a six-inch equivalent “wafer” were $3,110, an increase of 117 percent over the average total costs for a six-inch wafer in 1989, and a 390 percent increase since 1978.75

71 | Many factors contributed to the recovery of the U.S. industry. It is unlikely that any one factor would have proved sufficient independently. Trade policy, no matter how innovative, could not have met the requirement to improve U.S. product quality. On the other hand, by their long-term nature, even effective industry-government partnerships can be rendered useless in a market unprotected against dumping by foreign rivals. Most importantly, neither trade nor technology policy can succeed in the absence of adaptable, adequately capitalized, effectively managed, technologically innovative companies. |

72 | See Bill Spencer’s discussion of semiconductors in: Measuring and Sustaining the New Economy, op. cit. |

73 | See Paul A. Packan, “Pushing the Limits: Integrated Circuits Run into Limits Due to Transistors,” Science, September 24, 1999. Packan notes that “these fundamental issues have not previously limited the scaling of transistors and represent a considerable challenge for the semiconductor industry. There are currently no known solutions to these problems. To continue the performance trends of the past 20 years and maintain Moore’s Law of improvement will be the most difficult challenge the semiconductor industry has ever faced.” |

74 | See Charles C. Mann, “The End of Moore’s Law?” Technology Review, May/June 2000, at <http://www.technologyreview.com/magazine/may00/mann.asp>. |

75 | These statistics originate from the Semiconductor Industry Association’s 2001 Annual Databook: Review of Global and U.S. Semiconductor Competitive Trends, 1978-2000. A wafer is a thinly sliced (less than 1 millimeter) circular piece of semiconductor material that is used to make semiconductor devices and integrated circuits. |

The consensus in the engineering community is that improvements, both large and small, will continue to uphold Moore’s Law for another decade or so, even as scaling brings the industry very close to the theoretical minimum size of silicon-based circuits.76 To the extent that physical constraints or cost pressures limit the continued growth of the industry, they will necessarily influence the role of the industry in stimulating productivity growth in the broader economy. As capital costs rise, as worldwide fabrication capacity increases, and as alternative business models (such as the foundry system) gain prominence, the competitive position of U.S. firms may be challenged.77

The unprecedented technical challenges faced by the industry underscore the need for talented individuals—the architects of the future—to devise new solutions to these technical challenges.78 The simple and perhaps alarming fact is that this pool of available skilled and qualified labor is shrinking. Historically the U.S. government has supported human resources through its system of funding basic research at universities, whereby the work and training of graduate students and postdoctoral scholars are supported by research grants to principal investigators. However, the rapid growth in demand for skilled engineers, scientists, and technicians is creating challenges on several fronts.

This trend has been aggravated in recent years by the steep decline in federal funding for university research in the sciences relevant to information technologies, such as mathematics, physics, and engineering. As we noted in the previous section, these declines are the unintended result of unplanned shifts in the level of federal support within the U.S. public R&D portfolio.

Challenges to U.S. Public Policy

While federal funding for SEMATECH ended after 1996 at the industry’s initiation, the debate has continued in Congress and succeeding administrations as to whether and to what extent the U.S. government should continue to invest federal funds in supporting R&D in microelectronics.79 Some observers argue

76 | See discussion by Bob Doering of Texas Instruments on “Physical Limits of Silicon CMOS and Semiconductor Roadmap Predictions,” in Measuring and Sustaining the New Economy, op. cit. |

77 | See remarks by George Scalise, President of the Semiconductor Industry Association, at the Symposium Productivity and Cyclicality in the Semiconductor Industry, organized by Harvard University. |

78 | David Tennenhouse, Vice President and Director of Research and Development at Intel, emphasized this point in his presentation at the joint Strategic Assessments Group and Defense Advanced Research Projects Agency conference The Global Computer Industry Beyond Moore’s Law: A Technical, Economic, and National Security Perspective, January 14-15, 2002, Herndon, VA. |

79 | At a meeting in 1994 the SEMATECH Board of Directors reasoned that the U.S. semiconductor industry had regained strength in both the device-making and supplier markets, and voted to seek an end to matching federal funding after 1996. For a brief timeline and history of SEMATECH, see <http://www.sematech.org/public/corporate/history/history.htm>. |

that the role of the government should be curtailed, asserting that federal programs in microelectronics represent “corporate welfare.”80 Advocates of R&D cooperation among universities, industry, and government to advance knowledge and the nation’s capacity to produce microelectronics argue that such support is justified, not only for this technology’s relevance to many national missions (not least defense), but also for its benefits to the national economy and society as a whole.81

In fact, no consensus exists on this point or on appropriate mechanisms or levels of support for research. Discussions of the need for such programs have often been dogged by doctrinaire views on the appropriateness of government support for industry R&D and by domestic politics (e.g., balancing the federal budget), both of which have generated uncertainty about this form of cooperation, especially at the federal level.82

An effect of this irresolution has been a passive federal role in addressing the technical uncertainties central to the continued rapid evolution of information technologies. DARPA’s annual funding of microelectronics R&D, the principal channel of direct federal financial support, has declined and is projected to decline further (see Figure 3).83 As noted above, this trend runs counter to those in Europe and East Asia, where governments are providing substantial direct and indirect funding in this sector. The declines in U.S. federal funding for research are of particular concern to U.S. industry.

FIGURE 3 Defense Advanced Research Projects Agency’s annual funding of microelectronics R&D. SOURCE: DARPA 2001-05 President’s Budget R-1 Exhibit

Addressing the R&D Gap: The Focus Center Programs

Reflecting this concern, the industry has initiated several new programs to strengthen the research capability of U.S. universities. The largest of these is the Focus Center Research Program (FCRP), through which the U.S. semiconductor industry, the federal government, and universities work cooperatively on cutting-edge research deemed critical to the continued growth of the industry. This program is operated by the Semiconductor Research Corporation, which funds and operates university-based research centers in microelectronics.84 In cooperation with the government and leading universities, the industry plans eventually to establish six national focus centers and channel $60 million per year into new research activities. However, the recent sharp downturn in the industry has put this commitment in question.

84 | The FCRP is part of MARCO, the Microelectronics Advanced Research Corporation within the Semiconductor Research Corporation (SRC). See MARCO Web site, <http://marco.fcrp.org>. MARCO has its own management personnel but uses the infrastructure and resources of the SRC. |

In addition, International SEMATECH continues to promote greater cooperation among major firms and now includes international members, though this initiative appears to have been set back by the recent downturn in the industry. Still, there are some promising signs. The most recent budgets for the NSF and the Department of Defense include increases in some important semiconductor areas that had been reduced during the 1990s. These developments only emphasize the limitations of private-sector support, the risks of lag effects in the R&D pipeline, and the disconnect between research needs and resources.

These industry-university initiatives are valuable and merit additional support. The committee accordingly believes it is important to increase support for basic research in the sciences related to information technologies.85

MEETING NEW CHALLENGES—COUNTERING TERRORISM

For the current war on terrorism, partnerships have a demonstrated capacity to marshal the ingenuity of industry to meet new needs for national security. Because they are flexible and can be organized on an ad hoc basis, partnerships are an effective means to focus diverse expertise and innovative technologies rapidly to help counter new threats. As a recent report of the National Academy of Sciences notes, “For the government and private sector to work together on increasing homeland security, effective public-private partnerships and cooperative projects must occur. There are many models for government-industry collaboration—cooperative research and development agreements, the NIST Advanced Technology Program, and the Small Business Research Innovation Program, to cite a few.”86

Indeed, programs such as SBIR are being harnessed to bring new technologies to address urgent national missions. For example, the National Institute of Allergy and Infectious Diseases (NIAID) at the National Institutes of Health has rapidly expanded its efforts in support of research on possible bio-terrorism in response to recent threats and attacks. Specifically, NIAID has expanded research and development of countermeasures (including vaccines, therapeutics, and diagnostic tests) needed to counter and control the release of agents of bio-terrorism.87

Appropriately structured partnerships can also serve as a policy instrument that aligns the incentives of private firms to achieve national missions without compelling them to do so. As the National Academy of Sciences report cited above further notes, “A more effective approach is to give the private sector the

85 | See National Research Council, Capitalizing on New Needs and New Opportunities: Government-Industry Partnerships in Biotechnology and Information Technologies, op. cit. |

86 | National Research Council, Making the Nation Safer: The Role of Science and Technology in Countering Terrorism, op. cit., 2002. |

87 | See NIAID FY2003 Budget Justification Narrative at <http://www.niaid.nih.gov/director/congress/2002/cj>. |

widest possible latitude for innovation and, where appropriate, to design R&D strategies in which commercial uses of technologies rest on a common base of investment. Companies then have the potential to address vulnerabilities while increasing the robustness of public and private infrastructure against unintended and natural failures, improving the reliability of systems and quality of service, and in some cases, increasing productivity.”

Dual-use strategies can play key roles in meeting critical short-term mission goals, as well as in developing, over the longer term, more effective and lower-cost technologies.88 Additionally, joint interagency design and execution programs, with a single source of funds and joint decisions on each dollar to be spent, constitute one approach to addressing critically important national initiatives collaboratively. Partnerships, when properly structured and managed, can achieve more positive results than separately channeled funding.