Digital Transformation in the Department of the Air Force (2025)

Chapter: Appendix F: Framing the Engineering Factors

This appendix provides technical background on the three key aspects of systems and software engineering: the structure of what is built, the practice and process of building and evolving it, and the means by which judgments can be made about what is built.

- Systems structure: Technical architecture and implementation choices

- Practice of engineering: Development and operational processes, engineering data including models, process metrics, and associated tooling

- Evaluation of artifacts: Assurance, including capability, security, resilience, quality

Engineering managers make choices regarding these three aspects on the basis of business goals related to mission requirements, operational context, threat environment, potential interoperation, tempo of change, and cost and schedule constraints. It is important to highlight the curation and management of engineering models in digital engineering, because that engineering data affects all three aspects. For example, a consistent approach to the association of systems artifacts with engineering models is essential to successful analyses in evaluations for function, quality, and cybersecurity.

ASPECT 1: THE STRUCTURE OF WHAT IS BUILT: TECHNICAL ARCHITECTURE AND IMPLEMENTATION CHOICES

As noted in the main text, the growing complexity and interconnection of the DAF operating environment drives increasing emphasis on the explicit management of choices relating to system technical architectures, including the manner for expressing and evaluating those choices. These choices influence engineering factors ranging from supply chain and concurrent “agile at scale” development to cybersecurity, quality, and resilience outcomes.

With greater interconnection among systems, referred to as system-of-systems, communication patterns among system elements—components and services—must be managed to support cybersecurity, since these communication pathways expose internal attack surfaces, and also resilience, since it becomes more important for systems to continue in operation despite local failures and compromises.

The description in this section elaborates on the costs, benefits, and technical challenges of improving architecture management. One of the challenges in architecting systems is expressing the choices made in the architecture development process to enable them to be communicated and evaluated.

Technical architecture is a set of commitments to system structure (i.e., how elements of the system interconnect) and system semantics (i.e., technical rules of engagement within the system). This includes support for enterprise design—how multiple systems can interoperate to support integrated system-of-system functionalities. Although technical architecture is a key determiner of quality outcomes, it should be kept relatively minimal—enough commitment to gain the advantages, but not so much as to impede progress and limit opportunity in the future. In this regard, technical architecture is not a reference architecture, but rather consistent with the Modular Open Systems Approach (MOSA).1

The committee emphasizes technical architecture in the context of DT because the combination of DT features—use of modeling, simulation, and analysis, along with management of diverse kinds of engineering data—provides significant opportunity to gain advantage in the form of productivity, cost reduction, enhanced capability, higher levels of cybersecurity, and adaptability.

Ideally, technical architecture is planned early in a process, and with attention to the full range of consequences of architectural choices. When technical architecture is accidental or emergent, then adverse consequences of architectural features may be hugely challenging to remediate, and certain stakeholders, for example cybersecurity evaluators, may face disproportionate challenges to address their concerns.

___________________

1Defense Standardization Program, n.d., “Modular Open Systems Approach (MOSA),” https://www.dsp.dla.mil/Programs/MOSA, accessed June 18, 2025.

Here are some engineering factors that are strongly influenced by architectural choices:

- Agile at scale. Technical architecture, when constructed effectively, is the key enabler of “agile at scale,” where a larger scale iterative process is implemented using multiple small teams working relatively independently. The capacity for independent concurrent work among these teams is a direct consequence of technical architectural design choices related to the degree of coupling among system elements. When coupling is minimal and well defined, concurrent effort is more feasible, since teams have less need to negotiate with other teams. It is ironic that the agile-at-scale process requires up-front planning, but without that planning engineering risks can be very high.

- Composition of models and analyses. The same goal of minimizing coupling among system elements is an enabler of composition of models and analyses. Composition means that the results of modeling and analysis of system elements (components or services) can be combined into results regarding the aggregate with reduced need to re-analyze the individual system elements. There are some properties, such as memory safety, for which composition is more readily achievable than others, such as meeting real-time deadlines in a cyber-physical system. (Memory safety, roughly, is a technical approach to preventing separate computational processes within a shared system from interfering with each other, either inadvertently or intentionally.)

- Isolation of variabilities. Architectural design choices also can be made to encapsulate portions of a system likely to change at a higher frequency, thus insulating the other parts of the system from the effects of such changes. Importantly, this includes decisions regarding interfaces to underlying computing infrastructure. Adherence to standard hardware designs can enable a system to more easily “ride the curves” of advancement in computation infrastructure. This can be hugely significant, given the extraordinary pace of advance of computing capability in terms of performance, price, power requirements, and the like. (It is also important to mention that hardware “headroom” for software expansion is also an important consideration. In the earliest systems, insufficient hardware headroom was a cause of both increased costs and reduced flexibility in software.)

- Security and resilience. Certain critical security attributes are disproportionately influenced by early technical architectural choices. Good choices, for example, can lead not only to highly secure implementations, but also to tightly focused test-and-evaluation efforts where only small portions of an overall system (the “trusted computing base”) need the most stringent consideration. This was one of the reasons why the Defense Advanced Research Projects Agency’s secure quadcopter development withstood multiple red-team assaults.2 It is well understood among security engineers that “bolting on security” late in a development process or during sustainment activities is rarely successful. For larger distributed systems, there can be “internal attack surfaces” where attackers become able to engage with communications and data exchange pathways within the distributed system. These vulnerabilities can be reduced through careful design of data exchange formats and the generators and parsers at either end of communications links. For resilience, the bolt-on proposition is even more tenuous. This is because resilience emerges as a result of considered architectural decisions, such as control-loop models of sensing and response, capacity to separate and isolate system elements that are compromised, and in other ways take adaptive steps in response to cyber penetrations (and also to more ordinary local failures).

- Scale up and interconnection. Architectural choices also enable scaling and enhancement of systems, for example by implementing internal framework models (sometimes called “platform and payload”) that can admit incremental accretion of capabilities over time. Interconnection with other systems, when anticipated, can drive the design of application programming interfaces, protocols, data exchange formats, and other interfaces.

- Supply chain considerations. Business norms in supply chains are also an important architectural driver. Compatibility with established technical norms within supply chain ecosystems is essential to gain access to the elements of those ecosystems, whether they are vendor controlled or open source. An example is the development of internal web applications to provide access to mission data. It is advantageous to be able to employ existing (and in some cases proven) infrastructure, such as the NPM ecosystem3 (which includes more than 1 million components). Adapting the systems architecture to allow this opportunity would thus be beneficial in cost, maintainability, and speed of development. Similar considerations could come into play in the adoption of families of off-the-shelf sensor and other cyber-physical components. (It should be noted that compromise approaches can be taken to enable consistent interaction with subordinate capabilities that are subject to rapid change, such as commercial mobile devices or sensors. This can be accomplished by encapsulating the rapidly changing (and often less trustworthy) subordinate capabilities and providing system access through a “translation” interface, sometimes called a “facade” by software engineers.)

___________________

2K. Fisher, J. Launchbury, and R. Richards, 2017, “The HACMS Program: Using Formal Methods to Eliminate Exploitable Bugs,” Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences 375(2104), https://doi.org/10.1098/rsta.2015.0401.

3T.W. Garton, W.H. Brown, E.R. Mixon, and J.Q. Church, 2019, Engineered Resilient Systems, U.S. Army Engineer Research and Development Center, ERDC/ITL-19-3, p. 17.

- Modeling and simulation. Designs that accommodate modeling also enable use of simulation techniques for purposes ranging from early validation to operational refinement and training.

- Innovative systems concepts. For some categories of systems, technical architecture can be a focus of innovation and risk. An example of a “low risk” case is a web application that supports transactions on a database, for which there is abundant precedent. An example of a likely higher risk case (that is, one with less precedent) is the development of command and control for highly distributed swarm-like configurations, for example of small attritable platforms such as drones. In a novel or higher-risk case, there is likely to be greater uncertainty regarding the consequences of potential design choices, which would lead architectural designers to inform themselves through modeling, analysis, and prototyping in order to achieve a confident outcome. There are other kinds of systems that are more highly precedented and for which consistency with normative architectures can reduce overall program risk.
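The “facade” approach mentioned under supply chain considerations above can be illustrated with a minimal sketch. Everything in it is hypothetical and introduced only for illustration: the two vendor device classes, their `read` and `poll` methods, and the `Position` type are assumptions, not real products or APIs. The point is only that the rest of the system depends on one stable interface, while each rapidly changing (and less trusted) device is wrapped by a small translator that also validates what it receives.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Position:
    """Stable internal representation used by the rest of the system."""
    lat_deg: float
    lon_deg: float


class PositionSource(Protocol):
    """The only interface that mission code is allowed to depend on."""
    def current_position(self) -> Position: ...


class VendorAGps:
    """Hypothetical device that reports a dict in decimal degrees."""
    def read(self) -> dict:
        return {"lat": 38.9, "lon": -77.0}


class VendorBGps:
    """Hypothetical device that reports (lon, lat) in microdegrees."""
    def poll(self) -> tuple:
        return (-77_000_000, 38_900_000)


def _checked(value: float, limit: float) -> float:
    """Reject out-of-range values from the less trusted device."""
    value = float(value)
    if not -limit <= value <= limit:
        raise ValueError(f"coordinate {value} outside +/-{limit}")
    return value


class VendorAFacade:
    def __init__(self, device: VendorAGps) -> None:
        self._device = device

    def current_position(self) -> Position:
        raw = self._device.read()
        return Position(_checked(raw["lat"], 90.0), _checked(raw["lon"], 180.0))


class VendorBFacade:
    def __init__(self, device: VendorBGps) -> None:
        self._device = device

    def current_position(self) -> Position:
        lon_micro, lat_micro = self._device.poll()
        return Position(_checked(lat_micro / 1e6, 90.0),
                        _checked(lon_micro / 1e6, 180.0))


def report(source: PositionSource) -> str:
    """Mission code: unaware of which vendor sits behind the facade."""
    pos = source.current_position()
    return f"{pos.lat_deg:.1f},{pos.lon_deg:.1f}"


report_a = report(VendorAFacade(VendorAGps()))
report_b = report(VendorBFacade(VendorBGps()))
```

Replacing one device family with another then touches only the corresponding facade class, which is the isolation-of-variabilities benefit described in the list above.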

A key issue in architectural planning is “ownership” of the architectural decision process and involvement of appropriate stakeholders. Most importantly, architecture needs to be a deliberate choice, not emergent or accidental. The inventory of consequences above illustrates why this is important. When there are conflicting equities among stakeholders, it is important to support a process that ensures all necessary considerations are addressed. There are various mechanisms available to address this. When there is a potential community of contractors contributing specific capabilities to an integrated system, there is a mechanism called an Associate Contractor Agreement that can enable collaboration among the various contractors.

Technical architectures can contain design elements that can beneficially be common across families of systems. Examples of these design elements include data exchange formats and frameworks for plug-and-play composable elements. One of the challenges of architecting is that as-built baselines tend to diverge from aspirational architectural designs that are formulated early in an engineering process. The effect of this divergence is that the benefits of the architecture can be lost, including low coupling, isolation of variabilities, quality benefits, and security and resilience benefits. There are ways to mitigate this divergence, however, when explicit formalized architectural models are used. Analyses can compare architectural models with structural characteristics of as-built baselines in order to track consistency.4

For example: are there hidden links between architectural elements, perhaps introduced late in development as a convenience, that should be removed to avoid security risks? Are there areas of design that are likely to change often, such as communications interfaces, that need to remain relatively isolated behind “façade” interfaces?
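A minimal sketch of such a comparison, loosely in the spirit of the reflexion-model idea cited above, is shown below. It is an illustration under stated assumptions, not the cited authors' tooling: the element names, the file-to-element mapping, and the dependency list are all hypothetical, and a real analysis would extract the as-built dependencies from the code base rather than list them by hand.

```python
from typing import Dict, Set, Tuple

Edge = Tuple[str, str]


def reflexion(allowed: Set[Edge],
              module_of: Dict[str, str],
              code_deps: Set[Edge]) -> Dict[str, Set[Edge]]:
    """Compare planned element-level links with as-built dependencies."""
    # Lift file-level dependencies to architectural elements, ignoring
    # dependencies that stay inside a single element.
    lifted = {
        (module_of[src], module_of[dst])
        for src, dst in code_deps
        if module_of[src] != module_of[dst]
    }
    return {
        "convergent": lifted & allowed,  # planned and present
        "divergent": lifted - allowed,   # present but not planned ("hidden links")
        "absent": allowed - lifted,      # planned but not found in the build
    }


# Hypothetical planned architecture: the UI reaches the radio only
# through the services layer and a communications facade.
ALLOWED = {
    ("ui", "services"),
    ("services", "comms_facade"),
    ("comms_facade", "radio_driver"),
}

# Hypothetical mapping from source files to architectural elements.
MODULE_OF = {
    "ui/map.py": "ui",
    "services/tasking.py": "services",
    "comms/facade.py": "comms_facade",
    "drivers/radio.py": "radio_driver",
}

# Hypothetical as-built dependencies, including a late "convenience"
# shortcut from the UI straight to the radio driver.
CODE_DEPS = {
    ("ui/map.py", "services/tasking.py"),
    ("services/tasking.py", "comms/facade.py"),
    ("ui/map.py", "drivers/radio.py"),
}

result = reflexion(ALLOWED, MODULE_OF, CODE_DEPS)
```

In this toy input, the `divergent` set contains the UI-to-driver shortcut that bypasses the facade, and the `absent` set shows a planned facade-to-driver link that the build does not yet exercise. Run regularly in a build pipeline, a check of this kind is one way to track whether the as-built baseline is drifting from the intended architecture.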

___________________

4G. Murphy, D. Notkin, and K. Sullivan, 1995, “Software Reflexion Models: Bridging the Gap Between Source and High-Level Models,” SIGSOFT Software Engineering Notes 20(4):18–28.

Architecture choices may benefit from limited ongoing adaptation. Early choices are important, since adaptation can lead to costly refactoring. Drivers of change could include unanticipated shifts in threat and mission, interoperation requirements, and emerging technologies.

A related issue falls under the rubric of “Conway’s Law,” which references a correspondence of architectural structure and interconnection with the structure and interconnection of the organization that is responsible for the system. For integrated systems, this includes the supply chain for components and services. This correspondence is an empirically observed phenomenon that also can be considered as advice to architects and organizational managers regarding the benefits of structural alignment.

ASPECT 2: PROCESS AND ENACTMENT, ENGINEERING DATA AND TOOLING

Choices regarding the structure of the enactment5 or execution of engineering process across life cycle phases are driven by several factors. A most significant choice is the basic decision regarding whether to adopt a traditional linear model or one of several possible iterative approaches. This is a choice that must be made in the very early stages, since it frames milestone gates, staging of capability, planning for test, evaluation, verification, and validation, and many other factors.6

Traditional “waterfall” or linear systems engineering methodologies are increasingly challenged by this dynamism because they are not designed to support a fast pace of adaptation and evolution. Consequently, a transformative approach to engineering complex aerospace systems is needed that embraces agility and a holistic view of the entire life cycle ecosystem.7

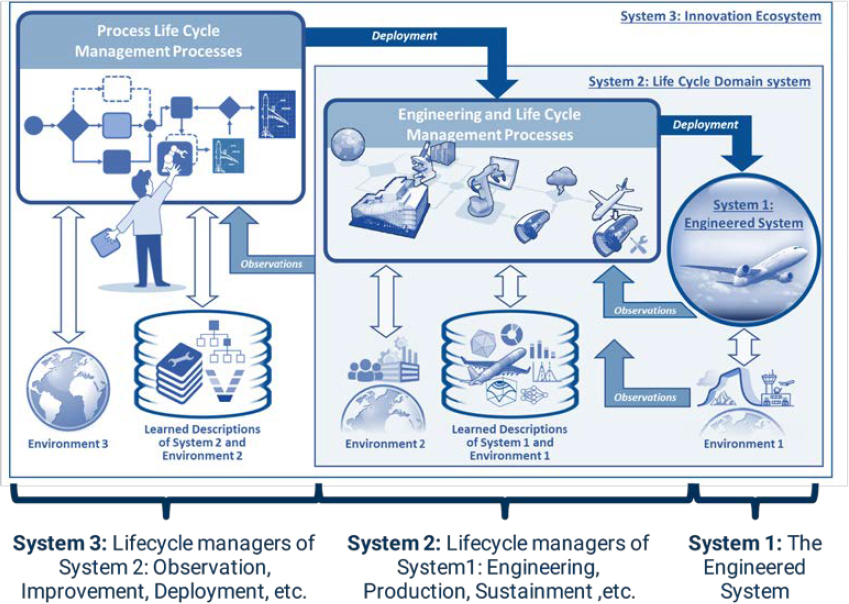

The American Institute of Aeronautics and Astronautics has introduced concepts and nomenclature regarding Digital Threads, distinguishing the engineered product itself from the environment within which the engineered system operates across its life cycle and, additionally, from the means by which the system is developed, produced, distributed, utilized, and sustained. The engineered product is referred to as System 1, the life-cycle management is referred to as System 2, and the overall system of improvement and innovation is System 3 (see Figure F-1).8

___________________

5The term enactment is used in this context to avoid confusion regarding execution of engineering process and execution of software on a computer.

6Defense Science Board, 2018, “Design and Acquisition of Software for Defense Systems,” Department of Defense, https://apps.dtic.mil/sti/citations/AD1048883.

7American Institute of Aeronautics and Astronautics (AIAA) Digital Engineering of Systems Task Force, 2025, “Transforming the Practice of Engineering… Together,” Presented at the AIAA SciTech Workshop.

8W. Schindel, 2022, “Realizing the Value Promise of Digital Engineering: Planning, Implementing, and Evolving the Ecosystem,” Insight 25(1), https://doi.org/10.1002/inst.12372.

SOURCE: AIAA Digital Engineering Integration Committee, 2023, “Digital Thread: Definition, Value and Reference Model.” Reprinted by permission of the American Institute of Aeronautics and Astronautics, Inc.

Engineering uncertainty.

The choice of engineering process (linear or one of various iterative approaches) is largely informed by considerations of engineering uncertainty. Engineering uncertainty refers to the inherent uncertainties that arise as engineering projects proceed. These uncertainties can relate to the outcomes of design and implementation decisions, for example performance and quality outcomes pertinent to mission success. Challenges can derive from factors such as measurement errors, model approximations, variability in material properties, and unpredictable external conditions. When there are uncertainties, either present or future, iterative processes may enable more timely resolution of the uncertainties. This is because, in a traditional linear process, commitments may be made early on, but their consequences may be understood late in the process, leading to costly rework. If uncertainties are anticipated, then the costs and delays associated with rework can be significantly reduced.

Uncertainties in an engineering process can be increased when early engineering design decisions must be made for novel kinds of systems which lack precedent or prior experience. In these cases, a linear process could create amplified risk, since many decisions are made early, and consequences are not understood until much later, at which point revision of those decisions could be excessively costly or risky.

On the other hand, when systems are highly precedented, such as the development of a routine public-facing or internal operational website, linear processes with DevOps-style sustainment practices may be more appropriate, since designers can rely on experience to make confident decisions that are unlikely to have to be revised later in the process, for example in test and evaluation for functionality and security.

Iterative process may also be driven by a recognition that the system will need to be adapted on an ongoing basis, for example due to shifts in threat or advances in technology. Associated with the process choices are choices made in the identification of metrics that can enable a program office (and other stakeholders) to more effectively monitor progress on an ongoing basis.

The DoD Software Acquisition Pathway was developed in recognition of the flexibility required, including fluidity across traditional DoD research, development, test, and evaluation (RDT&E) budget categories.9

Iterative processes have long been employed in engineering projects, but were most visibly codified in the world of DoD programs by Barry Boehm when he named the spiral model in 1988.10 This was considered a novel approach for DoD programs, though it was already a de facto reality for many commercial software projects. Iterative approaches were refined and contextualized for defense programs in various stages over subsequent years, culminating in a 2018 report from the Defense Science Board (DSB), whose findings were applied as informal program metrics by the GAO in its annual survey of programs of record.11

Measures of progress.

Even though iterative methods are generally respected and increasingly appropriate to adopt, there is nonetheless often confusion regarding what are appropriate notions of measurable progress in iterative processes. The absence of such metrics can create risk for program managers, who may default to linear milestone-based processes as a result. Ironically, there are reports of contractors who actually enacted iterative processes but with linear-style reporting. In the absence of identified notions of progress, there is a risk that iterative process may converge slowly or not at all,12 with the latter activity described by Boehm as death spirals.

___________________

9Secretary of Defense, 2025, Directing Modern Software Acquisition to Maximize Lethality.

10B. Boehm, 1988, “A Spiral Model of Software Development and Enhancement,” Computer 21(5): 61–72.

11Defense Science Board, 2018, “Design and Acquisition of Software for Defense Systems,” Department of Defense, https://apps.dtic.mil/sti/citations/AD1048883.

12C. Mizell and L. Malone, 2009, “A Software Development Simulation Model of a Spiral Process,” National Aeronautics and Space Administration, KSC-2007-055.

What might the measures look like? Iterative process can, more precisely, be described as incremental and iterative development. Incremental means that capability is staged out in increments. As a consequence, operational stakeholders may have much earlier access to capability and create a validation feedback loop that informs choices made in later increments.

This, in turn, requires transitioning from stove-piped environments and data silos to collaborative digital ecosystems that provide a shared baseline understanding for partners and collaborators, enabling iterative capability maturation through rapid delivery of functional increments as opposed to a reliance on large, sequential system deployments.

For incremental development, it is also important to have an up-front plan for staging the increments, along with plans for both verification and validation of the results of each increment. This can be a significant risk reducer, since the early verification and validation (V&V) feedback can guide subsequent plans. Iterative means that internal design decisions are staged, ideally in a manner where the consequences of a design commitment can be understood as soon as possible after the commitment is made. The benefit of this is that some high-risk commitments can be deferred until there is maximum information on hand to inform the choice, and V&V activities can be staged to ensure feedback and adaptation have acceptable costs.

It is important to note that, for larger integrated systems, subsystems may have different associated process choices, because they can differ with regard to the criteria suggested above.

Sustainment.

Iterative techniques are also important during operations and sustainment, particularly when supply chains include separately sourced components and services that may have periodic updates that need to be evaluated and incorporated, for example to avoid security exposures.

Engineering data.

When information regarding engineering choices and their rationale is lost in an engineering process, subsequent engineering choices can become riskier, since their interaction with prior choices may be unclear. The prior commitments may impose, for example, certain technical rules of engagement in support of security or resilience properties. If these rules of engagement (what software engineers sometimes call invariants) are neglected, then certain security expectations may become invalid. The engineering data may also capture rationale for choices. When circumstances change and those choices need to be reconsidered, the rationale information is important to inform that reconsideration. The engineering data may also include key models regarding performance attributes. If these models are not kept current with the evolving as-built assembly, then they may no longer be predictive and thus useful in guiding future decisions.

In general, the committee uses the phrase engineering data to refer to a heterogeneous corpus of information that encompasses a diversity of models, analyses, requirements documentation, test cases, metric calculations, and other information associated with a system in planning, development, operation, or sustainment. Digital continuity across these phases is beneficial to avoid information loss, as noted. There are approaches such as argumentation structures, often adopted by safety-critical and trusted systems engineers, that can be used to integrate the elements of the corpus. Many of the tools for managing argumentation structures are open source. An example of an argumentation structure that is relatively widely adopted is Goal Structuring Notation.13
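To make the idea of an argumentation structure concrete, the following is a deliberately simplified sketch. It is not Goal Structuring Notation itself and not any tool's interchange format: it assumes only three node kinds (goal, strategy, and solution, meaning an item of evidence) and omits context, assumption, and justification elements. The claims and evidence names are hypothetical. The sketch shows how linking claims to items in the engineering data corpus lets a program find claims that no evidence yet supports.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    kind: str   # "goal", "strategy", or "solution" (an item of evidence)
    text: str
    children: List["Node"] = field(default_factory=list)


def unsupported_leaves(node: Node) -> List[str]:
    """Return the text of every leaf that is not an item of evidence."""
    if not node.children:
        return [] if node.kind == "solution" else [node.text]
    found: List[str] = []
    for child in node.children:
        found.extend(unsupported_leaves(child))
    return found


# Hypothetical assurance case for a small system.
case = Node("goal", "System is acceptably secure", [
    Node("strategy", "Argue over each external interface", [
        Node("goal", "Message parser rejects malformed input", [
            Node("solution", "Fuzz test report"),
        ]),
        # No evidence has been linked to this claim yet.
        Node("goal", "Maintenance port is access controlled"),
    ]),
])

gaps = unsupported_leaves(case)
```

Here `gaps` lists the single claim that still lacks evidence. If the evidence nodes point at artifacts kept current in the engineering data (test reports, analysis results, model outputs), a check like this can be rerun whenever those artifacts change.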

ASPECT 3: ASSURANCE AND QUALITY

The goal of any systems assurance process is to make confident judgments that a system operates as intended and does not misbehave. System operation, in this context, encompasses both functional behavior—what the system does—and quality attributes—the diverse aspects of how the system performs its mission. These functional and quality attributes are conventionally organized around a key intermediate representation, which is a requirements specification. In modern mission engineering practice, requirements specifications can be less detailed, enabling interaction of development and operational stakeholders to continuously refine details, for example, in an incremental development process.14

Regardless, the presence of a specification enables a separation of verification, which is consistency of the as-built system with the specified requirements, from validation, which is the assessment of consistency of the specified requirements with actual mission needs. If there are errors in the specification, then there can be difficulties with respect to both verification and validation.

It is important to note that validation activities can start early in the life cycle, as requirements are being formulated. DT can contribute through the development of models associated with the requirements specifications, where the models can inform simulations, analyses, prototyping, and other activities intended to provide the earliest possible assurance of validity of the specification—that the specification is faithful to the mission needs.

As implementation-focused models and artifacts are developed, the DT-enabled management of engineering data can also provide earlier judgments of verification with respect to functional and quality aspects of the system. In other words, the emphasis in DT techniques on modeling and engineering data facilitates earlier assurances regarding both the functional and quality aspects of both verification and validation. The effect is to reduce uncertainty regarding engineering outcomes and, additionally, to reduce uncertainties and costs at Test and Evaluation (T&E) gates. This combination enables programs to be more nimble in the management of systems engineering projects, adapting more rapidly and confidently to changes in threat environment, technology opportunities, and pivots in mission concept.

___________________

13P. Graydon, 2018, The Simple Assurance Argument Interchange Format (SAAIF) Manual, National Aeronautics and Space Administration, NASA/TM–2018–219837.

14Office of the Under Secretary of Defense for Research and Engineering Mission Capabilities, 2023, “Department of Defense Mission Engineering Guide Version 2.0,” https://ac.cto.mil/wp-content/uploads/2023/11/MEG_2_Oct2023.pdf.

A consistent corpus of engineering data can greatly reduce the cost and uncertainty associated with T&E activities. Development teams can anticipate T&E gates and, indeed, collaborate with T&E proponents to ensure that evidence in support of confident T&E judgments is amassed in an ongoing manner—and regularly evaluated—through development. Automated tooling and build pipelines can dramatically reduce the costs of this effort.

Of course, for most consequential systems there is a wide range of functional and quality attributes to be considered. For many systems, the most obvious quality attributes relate to size, weight, power, and cost. But there are also multiple quality attributes related to other significant factors. Many of these factors, particularly those related to security and resilience, are strongly influenced by the earliest decisions regarding requirements, technical architecture, and process structure. In many of these cases, they are difficult to address later if they were not considered in these early decisions.

A good example is cyber resilience, which is the capacity of an integrated system to “operate through” a cyber-attack or partial failure due to faults within the system. Resilience is not readily “patched into” an existing system because it is largely a consequence of choices made regarding technical architecture. Additionally, as systems scale and become interconnected, resilience becomes more critical to mission success. Resilience also includes prevention of cascading failures when systems are interconnected with others; such failures also occur in other domains such as telecommunications and financial markets.

More familiar examples relate to cybersecurity, which addresses the possibilities and consequences of attacks targeted at a system with intent to compromise integrity of operations, confidentiality of critical data, or availability of a system to support mission operations. Attacks can occur not just in the heat of operations, but also during the engineering process including within supply chains. These advance attacks can have purposes ranging from espionage and sabotage to prepositioning in support of attacks during operations (the most familiar examples are in power grids).15

The concept of attack surface is significant to technical architecture, mission architecture, and other aspects of security planning. Attack surface encompasses potential points of contact between an attacker and the system. The scope and extent of these points of contact are consequences of choices made both in engineering design and in the role the system has in operational workflows. These early mission choices could also influence the work factor, or friction presented to an attacker, of elements of the attack surface. For example, shifting online access to specific interfaces to close access can dramatically increase adversary work factor.

___________________

15Government Accountability Office, 2022, Cybersecurity: Federal Response to SolarWinds and Microsoft Exchange Incidents, GAO-22-104746.

There are often perceived tradeoffs between levels of achievable capability and the capacity to deliver those with acceptable levels of security and resilience. DT can enable not only easier navigation along this tradeoff curve but also “pushing the curve outward” through more efficient practices based on more extensive use of modeling and engineering data.