Memory: The Key to Consciousness (2005)

Chapter: 3 The Early Development of Memory


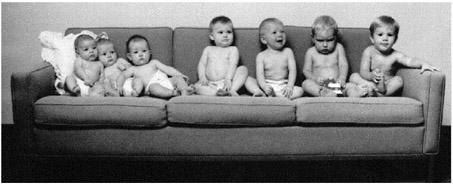

The development of memory by the human brain from conception to adulthood is an extraordinary story. The physical differences among the infants shown in Figure 3-1, who are arranged in order of increasing age from 2 to 18 months, are easy enough to see. Harder to see—but suggested by the differences in the sizes of their heads—are their brains, which have been growing, processing information, and creating memories, starting several months before birth.

The human brain has upward of a trillion nerve cells. A number like this is much too large for most of us to grasp (nevertheless, politicians seem to have no difficulty tossing such numbers around when they talk about federal budgets and deficits). A better idea of how large this number is comes from looking at how fast new neurons multiply over the nine months of the brain’s development. From conception to birth the growing human brain adds new neurons at the astonishing rate of 250,000 every minute!
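The quoted rate can be turned into a rough total with a little arithmetic. The sketch below assumes that nine months is about 270 days and that the rate is a constant average over the whole period; both are simplifications, and the function name is only illustrative.

```python
# Back-of-the-envelope total implied by the neuron production rate quoted above.
NEURONS_PER_MINUTE = 250_000   # rate quoted in the text
MINUTES_PER_DAY = 60 * 24
GESTATION_DAYS = 270           # assumption: nine months taken as about 270 days


def neurons_added(rate_per_minute: int, days: int) -> int:
    """Total neurons added at a constant average rate over the given number of days."""
    return rate_per_minute * MINUTES_PER_DAY * days


total = neurons_added(NEURONS_PER_MINUTE, GESTATION_DAYS)
print(f"{total:,} neurons (about {total:.1e})")
```

Under these assumptions the rate adds up to roughly a hundred billion neurons by birth. The figure is an order-of-magnitude estimate, not a count.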

Actually, a newborn baby’s brain has more neurons than at later ages. Some neurons die as the brain is shaped and sculpted by experience. We used to think that the total number of neurons present in the brain at birth was it. Some would die, but no new neurons would form. We now know that this is not the case. The brain forms neurons throughout life. We will look more closely at these surprising facts and how they relate to learning and memory later.

FIGURE 3-1 Babies from 2 to 18 months of age.

Have you ever encountered a young baby you didn’t know? Perhaps a chance encounter in an elevator with an infant lying in her carriage. She looks at you, at your face, with an intense blank stare. One has the impression that behind that stare is an incredibly powerful computer clicking away at high speed. Beginning well before birth, experience shapes the brain.

At what point in the development of the brain does learning first occur? The growing brain of the fetus shows a simple form of learning a month or more before birth. If a loud sound is presented close to the mother, her fetus will show changes in heart rate and also make kicking movements. If the sound is given repeatedly, these responses will gradually cease or habituate. If the nature of the sound is markedly changed, the fetus will again respond. This must mean that the fetus has learned something about the properties of the initial sound.

Habituation is the most basic form of learning. It is simply a decrease in response to a repeated stimulus and occurs in a wide range of animals, in addition to humans. Habituation of such responses as looking at an object is widely used to study learning in newborns and young babies. You might be interested to learn that the rate of habituation of responses in very young infants is correlated with later measures of IQ in the growing child—faster habituation is associated with higher IQ. We know a good deal about how habituation occurs in the nervous system too, as we will see later.

Learning in Utero

What kinds of stimuli does the developing fetus experience? In heroic studies in which mothers have swallowed microphones, it is clear that sounds from the outside penetrate the womb. Not just loud sounds, but even conversations close to the mother can be detected. The voice that comes through loud and clear is the mother’s own voice. At birth an infant can hear more or less like a partially deaf adult, with thresholds considerably higher than those of normal adults (20 to 40 decibels higher), but by about six months of age an infant’s hearing is virtually normal.

Although the eyes develop early, as the fetus grows there is little light in the womb. A newborn baby has visual acuity resembling that of a rather nearsighted adult, somewhere between 20/200 and 20/600, but the direction of gaze can be somewhat controlled by the newborn. The newborn appears to be largely colorblind, but by two to three months color vision is normal. The reasons for the poor vision of the newborn lie not in the eyes but in the brain. The development of the visual part of the brain, and the role of experience in this development, is an extraordinary chapter in neuroscience (see Box 3-1).

It used to be thought that the mind of the newborn is a blank slate and that the world is a vast and meaningless sea of sensations. Part of the reason for this is that a newborn has little ability to control her movements. A young baby cannot lift her head, roll over, or even point at objects with her arms. How do you ask such a helpless little creature what she sees or knows? As it happens,

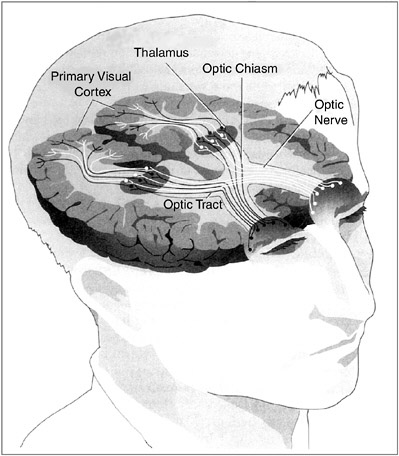

All visual systems begin with photoreceptors, which are cells that respond to light energy. Photoreceptor cells all contain one or more pigments that respond chemically to light, and much is known about the biochemistry of these pigments. Structurally, the visual chemical that responds to light is called retinal, a variation on the vitamin A molecule (which explains why one should eat foods containing vitamin A for good eyesight) and is associated with proteins called opsins. There are two types of photoreceptors in the vertebrate eye—rods and cones. In general, the rods, which mediate sensations of light versus dark and shades of gray, are much more sensitive to light than the cones: They have much lower thresholds and can detect much smaller amounts of light. Cones mediate acute detail vision and color vision. In the human eye there are three types of cones: one type that is the most sensitive to red light, one that is the most sensitive to green, and one that is the most sensitive to blue. Some animals, particularly nocturnal animals like rodents, have mostly or entirely rods, and others have mostly or entirely cones. Humans, apes, and monkeys have both rods and cones. Indeed, the retina of the macaque and other old world monkeys appears to be identical to the human one: Both macaques and humans have rods and three types of cones. It is now thought that the genes for the rod and cone pigments evolved from a common ancestral gene. Analysis of the amino acid sequences in the different opsins suggests that the first color pigment molecule was sensitive to blue. It then gave rise to another pigment that in turn diverged to form red and green pigments. Unlike old world monkeys, new world monkeys have only two cone pigments, a blue pigment and a longer-wavelength pigment thought to be ancestral to the red and green pigments of humans and other old world primates. 
The evolution of the red and green pigments must have occurred after the new world and old world continents separated, about 130 million years ago. The new world monkey retina, with only two color pigments, provides a perfect model for human red-green color blindness. Genetic analysis of the various forms of human color blindness suggests that some humans may someday, millions of years from now, have four cone pigments rather than three and see the world in very different colors than we do now. The ultimate job of the eye is to transmit information about the visual world to the brain. It does this through the fibers of the optic nerves. The cell bodies of the optic nerves, the ganglion cells, are neurons situated at the back of the eye in the retina and send their axon fibers to the brain. When a small spot of light enters a verte- |

brate eye, the lens focuses it on a particular place on the retina. As the spot of light is moved about, its image moves about on the retina. Suppose you are recording the electrical activity from a single ganglion cell, perhaps by placing a small wire near it. In darkness it will have some characteristic rate of discharge—say, it fires one response every second. When the light strikes the retina, it activates some rod and cone receptors. If these receptors are in the vicinity of the ganglion cell that is being monitored and are connected to it through the neural circuits in the retina, the light will have some influence on the rate of firing of the ganglion cell. Depending on the neural circuits, the ganglion cell will be excited (fire more often than the spontaneous rate) or inhibited (fire less often). In mammals the anatomical relations of projection from the visual field to the retina to the brain are a bit complicated. In lower vertebrates such as the frog there is complete crossing of input: all input to the right eye (the right visual field) goes to the left side of the brain, and vice versa. Such animals have no binocular vision. Primates, including humans, have virtually total binocular vision; the left half of each retina (right visual field) projects to the left visual cortex, and the right half of each retina (left visual field) projects to the right visual cortex. The optic nerves actually project to the thalamus, and the thalamus in turn projects to the visual cortex. This means, of course, that the right cortex receives all of its input from the left visual field, and the left visual cortex receives all of its input from the right visual field. Removal of the left visual cortex eliminates all visual input from the entire right half of the visual field of both eyes. 
Although a single eye can use various cues to obtain some information about depth, or the distance of objects, much better cues are provided by binocular vision, in which input from the two eyes can be compared by cells in the visual cortex. Among mammals, predators such as cats and wolves have good binocular vision; hence, they can judge the distance to prey very accurately. Many animals that are prey, such as rabbits and deer, have much less binocular vision. Instead, their eyes are far to the sides of their heads so that they can see movements behind them. The excellent binocular vision of primates is probably due to the fact that they live in trees, or at least their ancestors did. If one mistakes the distance to a branch that one is leaping for, one’s genes will not be propagated! The projections from the visual thalamus to the visual cortex are such that a given neuron in layer IV of the visual cortex receives input from one eye or the other but not from both. In fact, the cells of layer IV of the visual cortex are organized in columns in such a way that one column will respond to the left eye, an adjacent column will respond to the right eye, and so on. |

babies do one thing extremely well: they suck. They can also move their eyes and head from side to side to look at objects, and they can kick. We can use these simple behaviors and their habituation to ask the infant what she sees, hears, and can learn.

Newborn babies will learn to suck on an artificial nipple to turn on certain types of sounds. They particularly like the sound of their mother’s voice, much more than the speech of another mother. They have learned enough about their mother’s voice in utero to recognize it after birth. An infant can also display one response to its mother’s voice and another response to a stranger’s voice prior to birth. This was shown in a study of near-full-term fetuses in which each fetus was exposed to the recorded sound of its mother’s voice or the voice of a stranger. Fetal heart rate increased in response to the mother’s voice and decreased in response to the stranger’s voice. Newborn babies also prefer their native language to foreign languages.

Zak, my three-year-old, liked to rest his head on my enlarged abdomen and talk to his little sister-to-be. A few hours after Marie was born, I was breastfeeding her and she lay there with her eyes nearly closed. Zak came up and began talking to her. Immediately her eyes flew open and she stared at him. She clearly recognized his voice from her earlier experience in utero. She did not respond like this to the voices of strangers.

Similar results have been obtained for more complex speech, as shown by the remarkable study dubbed the “cat in the hat” experiment. Pregnant women read aloud twice a day during the last six weeks of pregnancy. Each mother was assigned one of three children’s books, one of which was Dr. Seuss’s The Cat in the Hat. The mothers-to-be who were assigned this story read it aloud faithfully twice a day for the entire six-week period. Recordings were made of the readings of all three stories. Three days after birth the infants were tested using the sucking response on a pacifier on all three stories. Infants that had, for example, been read The Cat in the Hat in utero sucked so as to produce this story over the other two, even if the story was read by another mother. (Never underestimate the power of Dr. Seuss!) Exactly how the babies learned to recognize this particular story from experience in utero is a mystery, but learn it they did.

Learning in Newborns

What do newborns see? Or, more specifically, what do they like to look at? Faces are very potent stimuli for humans and other primates. If shown her mother’s face versus other women’s faces, a baby just a few hours after birth will look more at her mother’s face. What exactly tells the infant it is her mother? If the women wore scarves over their hair and part of their heads, the babies no longer showed any preference for their mothers. They apparently had learned a kind of global perception of the mother’s head rather than attending to smaller details of the face.

Shown drawings of faces versus drawings of mixed-up faces, newborns prefer to look at faces. Newborns show an extraordinary ability to learn about individual faces. When shown pictures of different individual women’s faces and then shown composite faces made up from individual faces that they had either seen or not seen before, babies show a clear preference for composites made up from faces they had seen before. This learning occurs in less than a minute in human infants as young as eight hours old!

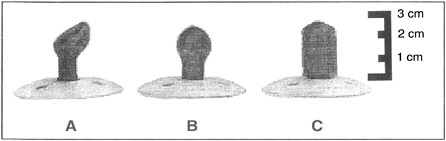

Equally remarkable is the sophistication of the kind of learning that human infants are capable of, even very early in postnatal development. Cross-modality matching refers to a process by which information experienced exclusively in one sensory modality (such as tactile stimulation) can “transfer” for use in connection with stimuli or events in another modality (such as vision). This was shown in a study in which infants first experienced a pacifier of a given shape (such as example A in Figure 3-2) solely by sucking on it—the infants never saw it. When the infant sucked hard enough on the pacifier, a computer monitor would present a visual display to the infant. The display showed a picture of the pacifier being sucked on or a picture of a differently shaped pacifier (such as example B) in a random order. Infants as young as 12 hours old spent more time looking at the picture of the pacifier they had in their mouth during the testing. (As testing progressed, there was a shift to spend more time looking at the new pacifier; this is the novelty preference effect again.) It is not known how the infant brain is able to accomplish this kind of “going beyond what’s given”—that is, of abstracting information. It has become clear in recent years that many psychologists—including the renowned child cognitive psychologist Jean Piaget—have severely underestimated the learning and cognitive abilities of human infants.

FIGURE 3-2 Infants can visually recognize the shape of the pacifier simply by sucking it.

Faces

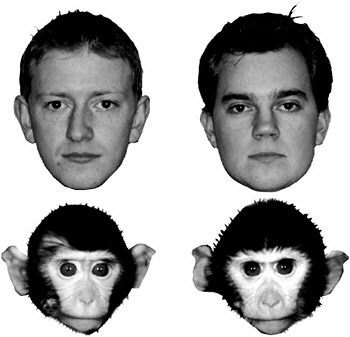

Adults recognize individual human faces far more accurately than individual monkey faces, and the opposite is true for monkeys (see Figure 3-3). These differential abilities seem to be due to early learning. Using the looking-preference procedure, studies have shown that six-month-old infants are equally good at discriminating between individual human and individual monkey faces they have or have not seen before, but by nine months the infants are like adults: They readily distinguish between old (previously seen) and new human faces, but they no longer discriminate between old and new individual monkey faces.

There is a face “area” in the human brain, a localized region of cerebral cortex termed the fusiform gyrus. Patients with damage to this area can still identify faces as faces, but they can no longer recognize individual faces (a condition called prosopagnosia). They cannot recognize their own relatives or even spouses by face, only by voice. Indeed, they cannot even recognize themselves in the mirror—if they happen to bump into a mirror, they might apologize to the face in the mirror. If recording electrodes are placed in this brain area, nerve cells show face-selective responses.

FIGURE 3-3 Human and monkey faces.

There is a similar area in the monkey brain. This discovery, made by Charles Gross, then at Harvard, is a dramatic case of serendipity. Gross and his associates were studying the responses of single neurons with a recording electrode in a region of the monkey cerebral cortex thought to be a higher visual area. The monkeys were anesthetized and then presented with various simple visual stimuli—spots of light, edges, and bars—the standard elementary visual stimuli used in such studies. The neurons in this area responded a little to those simple stimuli but not to sounds or touches, so it seemed to be a visual area. But the neurons were not very interested in their stimuli. After studying one neuron for a long time with few results, they decided to move on to another. As a gesture, the experimenter said farewell to the cell by raising his hand in front of the monkey’s eye and waving goodbye. The cell responded wildly to this gesture. Needless to say, the experimenters stayed with the cell. This particular cell liked the upright shape of a monkey’s hand best. Other neurons in this region responded best to monkey faces. This region of cerebral cortex in the monkey corresponds more or less to the face area in the human cerebral cortex.

Is the face area formed by experience and learning or is it built into the brain? The answer seems to be both. A newborn infant prefers to look at faces rather than nonfaces but must learn to identify its mother’s face or other faces. This learning can be very rapid, so there must be a “face area” in the brain that is formed by the organization of the neuronal circuits in this region of the cerebral cortex. It is innate. But individual faces must be learned. Further, it seems that the memories for individual faces may be stored in this localized face area, given the devastating loss of ability to recognize individual faces when the area is damaged.

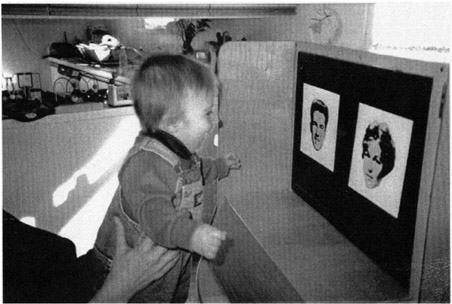

Studies of infant memories for faces have been performed using tests that do not require verbal responses or complex motor movements. One such procedure is based on the measurement of “looking” preferences. In the response-to-novelty task, an infant first looks at a picture of a face; then at varying intervals the infant is shown two pictures—the previously seen one and a new one—and the experimenter records the amount of time the infant spends looking at each (see Figure 3-4). The typical finding is that the infant spends more time looking at the new picture, which implies that the infant has retained information about the original picture.
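One simple way to summarize such a test is the share of total looking time that goes to the new picture. The sketch below is only an illustration of that idea: the function name and the looking times are hypothetical and are not taken from any study described here.

```python
def novelty_preference(novel_seconds: float, familiar_seconds: float) -> float:
    """Fraction of total looking time spent on the new (novel) picture."""
    total = novel_seconds + familiar_seconds
    if total <= 0:
        raise ValueError("total looking time must be positive")
    return novel_seconds / total


# Hypothetical looking times in seconds, chosen only for illustration.
score = novelty_preference(novel_seconds=12.0, familiar_seconds=8.0)
print(f"novelty preference = {score:.2f}")  # a value of 0.50 would mean no preference
```

A score reliably above one half across infants is what would be read as evidence that the original picture was remembered.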

As Learning Develops

An ingenious method of studying memory in young infants was introduced by Carolyn Rovee-Collier at Rutgers University. She hung a mobile over an infant’s crib and attached a ribbon to the infant’s foot, so that kicking made the mobile move. When the infant saw an object she had seen before, she kicked more, showing that she remembered having seen it. This kind of task is termed operant training or conditioning. The infant is in control of her behavior. This procedure was initially developed by B. F. Skinner many years ago to probe the learning abilities of animals.

FIGURE 3-4 A toddler looking at two pictures.

Two- or three-month-old infants were trained in the task and showed excellent retention at 24 hours. When tested with a different item, they did not respond at all. They showed both memory and discrimination at 24 hours. In a series of studies the duration of memory was determined to be a function of the infant’s age and to be virtually a straight-line function, reaching many weeks at 18 months. So memory improves dramatically with age.

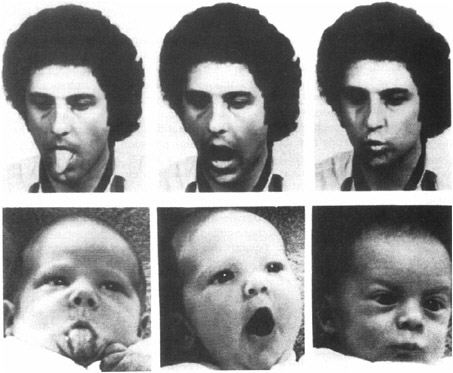

Even longer-lasting memories have been found for babies using imitation. Babies love to imitate. If you stick out your tongue at a young infant, she will do the same to you (see Figure 3-5). Imitation is one of the most powerful learning tools available to infants and young children. Using imitation, children learn to mouth their first words and to master the nonverbal body language of facial expressions and posture. Infants are also capable of deferred or delayed imitation. When shown a sequence of actions modeled by an adult, such as removing a mitten from a puppet’s hand, infants repeat the behavior. At six months of age infants are capable of imitating the action 24 hours after seeing it. At nine months babies can remember for a month, and by the age of 20 months most toddlers remember this task for a year! This kind of memory is called recall memory. The baby has to remember how to remove the mitten. Simply recognizing the puppet is not enough. In general, recall memory is more difficult than recognition memory for adults as well as children and is especially difficult when it involves information about the order of events or actions.

FIGURE 3-5 An infant imitating an adult.

Infantile Amnesia

Babies clearly develop long-term memories for past experiences beginning at the age of about six months or even earlier. By the end of the first year of life they are beginning to learn and remember words. Obviously, adults retain skills and information acquired during infancy and early childhood, yet adults seem unable to consciously remember and describe anything of their own experiences before about age 3. This is called infantile amnesia.

Sigmund Freud first described infantile amnesia. Freud thought the period extended back to age 6, but more recent studies indicate that it may extend to age 3 or 4. Freud initially thought this might be due to repression of existing memories but apparently later abandoned this idea.

As we have seen, infants learn and remember very well indeed, beginning at birth and continually improving with age. Why adults cannot remember much if anything about their own experience before age 3 or 4 is a mystery. We are referring here to what is called autobiographical memory. Such memories are described in words (that is, declarative memory, deliberate conscious recollection). Such memories are usually time tagged; we remember more or less when and where important events occurred in our lives. This is particularly true for very emotional experiences. Some researchers argue for autobiographical memory going back to as young as age 2, but the claim is controversial and the relevant research is hard to do. For example, in some studies researchers report having found memories in young adults of independently verified events such as injuries and hospitalizations that occurred around age 2, but a major and obvious problem here is the difficulty of ruling out the argument that such “recollection” may be based on later family discussions and reminders of the events.

Infantile amnesia has important relevance in real-world situations. Someone who claims to remember having been sexually molested at the age of six months is likely to have a false memory. Recent work suggests that infantile amnesia has much to do with language. It appears that we cannot describe in words events that occurred in our lives before we knew the words to describe them. Sound confusing? Enter the “magic shrinking machine.” Investigators at the University of Otago in New Zealand exposed children to a unique event at an age when they could just barely talk. They visited the homes of toddlers two to three years of age, bringing with them the magic shrinking machine. The child would place a teddy bear in the machine, close the door, and pull on levers. The machine would make odd sounds. The child would then open the door and—behold—the teddy bear was now much smaller. The toddlers greatly enjoyed this. Their language skills were also evaluated at that time.

A year later the investigators returned with the machine. The children, now a year older and with much improved language skills, recognized the machine. However, when asked to remember what it did, they used only the few words they knew a year earlier when they first saw the machine. People cannot reach back in time to nonverbally coded memories and describe them with words. These observations may provide at least part of an explanation of infantile amnesia. A complementary explanation concerns the fact that certain regions of the cerebral cortex, where we think language is learned and stored, require several years after birth to mature fully.

You may find it surprising that with all of the available evidence, there is still a great deal of controversy about the nature of infant memory. The debate centers on the vexing concept of consciousness. Recall that one of the defining features of episodic memory is that it consists of conscious recollection of events from one’s personal past. Some theorists claim that human infants lack this form of memory. We think the issue is irrelevant. As Carolyn Rovee-Collier points out, many of the basic processes of learning, retention, and forgetting can be found in infants in much the same way as in adults. What is different is language. Infants cannot store memories verbally because they haven’t yet learned to speak. As with the magic shrinking machine, preverbal memories cannot be expressed verbally, and words are the only tool we have to study consciousness, as when people describe their experiences.

What Things Are and Do

Looking at objects in a room, we see chairs, tables, and so on. For a long time it was believed that young infants did not actually see objects as separate entities. Again, the problem is that young infants are incapable of interacting with objects. Elizabeth Spelke and her associates at the Massachusetts Institute of Technology completed an ingenious series of studies which indicated that by three months of age infants do perceive objects as distinct from backgrounds. They do not have to manipulate objects to know this, as earlier thought. Visual experience of objects and the way that experience shapes the brain are enough.

Infants also seem to grasp the basic notion of cause and effect. In one study a film showed a red brick moving across a screen until it encountered a green brick, at which point the red brick stopped and the green brick moved off. If the two bricks collided and there was a pause before the green brick moved, the infant responded as though surprised. Using variations on this scenario, infants as young as six months of age showed some appreciation of cause and effect.

Some scientists have argued that Pavlovian conditioning may be the basis for the understanding of causality. Due to experiences with the world, the brain has come to be so wired over the course of evolution. Remember Pavlov’s basic experiment: A bell rings and a few seconds later meat powder is put in a dog’s mouth, which causes the dog to salivate. After a few such experiences, the bell alone elicits salivation. It doesn’t work the other way around: Put meat powder in the dog’s mouth and then sound the bell. That bell would be of no help in predicting food. Since Pavlovian conditioning occurs in a wide range of animals, from relatively simple invertebrates to humans, we argue that cause and effect is “understood” by virtually all animals possessing brains. It forms the basis of adaptive behavior, behavior based on the ability to predict consequences.

When Things Go Missing

Young infants seem to think that if an object is suddenly hidden and they can no longer see it, it has ceased to exist. Dangle an enticing object such as a toy insect in front of an infant and he will happily reach for it. Before he reaches it, cover it with a towel. The infant suddenly looks blank and shows no interest in the towel. Instead, cover the toy with transparent plastic and he will pull the plastic off to grab the toy. It appears that when the object is covered such that it cannot be seen, the infant does not remember it is there.

Between about 7 months and 12 months of age, there is dramatic growth in an infant’s ability to remember that hidden objects still exist. The same is true for young monkeys. The task used to study the development of this memory ability is called delayed response, and it is very simple. The infant, human or monkey, is shown a board with two wells in it. A desired object, a small toy or raisin, is placed in one well, and the two wells are covered with identical blocks. A screen is then interposed so that the tiny subject cannot see the board for a brief time. The screen is then raised, and the infant reaches the blocks and pushes one aside (he is allowed to push only one). With a delay of a second or less while the screen is down, a seven-month-old human infant quickly learns to go to the baited well. If the delay period while the screen is down is increased beyond a second or so, the task quickly becomes very difficult for the seven-month-old. However, there is a dramatic increase with age in the infant’s ability to remember where the object is hidden. By the age of 12 months the infant can easily remember for 10 seconds or more where the object is.

Monkeys show exactly the same progression in memory for hidden objects. But monkeys are born a good deal less helpless than humans and mature much more rapidly. At 50 days of age the infant monkey can remember for about one second the location of an object, and by 100 days can easily remember delays of 10 seconds or more. These are examples of the early development of a raw memory ability.

This simple test was developed more than 50 years ago to chart the growth of memory ability in monkeys but has only more recently been used with human infants. Instead, a seemingly similar task was developed years ago for human infants by the pioneering Swiss child psychologist Jean Piaget. He called his task “A, not B.” Actually, the task is the same as delayed response but is done a little differently. What is dramatically different is the performance of young infants.

Suppose only the left well is baited with a toy repeatedly, so it is always correct. The screen is lowered for only a second or so each time. The seven-month-old (human) learns this very well. Then, in full view of the infant, the other well is baited instead. The screen is lowered and raised after one to two seconds. The infant immediately goes to the wrong well, the one that had earlier been baited repeatedly, even though she saw the other well being baited. As the infant grows older, she can perform correctly at longer and longer delay intervals. In more recent work, infant monkeys showed exactly the same results as infant humans on A, not B, but over a shorter period of development.

It is somewhat bemusing that these two tasks existed separately and independently for many years—delayed response for monkeys and A, not B, for humans—perhaps because in earlier years the two disciplines of child psychology and animal behavior did not interact.

These two tasks, particularly the A, not B, seem to involve two kinds of processes. On the one hand, in both tasks the infant has to remember where the missing object is over a brief period of time. The real surprise comes when young infants always choose the incorrect well, even though they see the other well being baited. If they had simply forgotten which well was baited, they should choose each well equally. But in fact they do remember that one well was repeatedly baited in the past and are unable to prevent or inhibit their response to this well. Learning to inhibit responses or behaviors that are incorrect or inappropriate is a key aspect of memory.
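The reasoning about chance can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that an infant who had simply forgotten would pick either well with probability one half on each test trial, independently of the other trials. The function name and the trial counts are hypothetical.

```python
def chance_of_all_wrong(trials: int, p_wrong: float = 0.5) -> float:
    """Probability of choosing the wrong well on every trial if each choice is a pure guess."""
    if trials < 0:
        raise ValueError("trials must be non-negative")
    return p_wrong ** trials


# Assumed trial counts, chosen only to show how fast the probability falls.
for n in (1, 3, 5, 10):
    print(f"{n:2d} trials: {chance_of_all_wrong(n):.4f}")
```

Under that assumption a run of wrong choices quickly becomes improbable, which is why consistent errors point to something other than simple forgetting.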

As it happens, a good deal is known about the brain system that underlies our ability to remember where objects are and to inhibit the wrong responses. The critical region of the brain is in the cerebral cortex in the frontal lobe, that is, the prefrontal cortex. It was discovered many years ago that small lesions in this region in both hemispheres in adult monkeys completely abolished the animal’s ability to perform the delayed response task at any delay. The lesion changed an adult monkey into an infant monkey, at least in this task. When adult monkeys with these small brain lesions were tested in the A, not B, task, they behaved just like young humans and monkeys. At delays of as little as two seconds, they chose the well that had previously been baited repeatedly, even though they saw the other well being baited. Growth in the ability of infant humans and monkeys to perform these tasks corresponds closely to the maturation of this critical region of the frontal cortex. The number of synapses (neuronal connections) in this region grows rapidly from birth to one year of age.

What about adult humans who have damage to this region of the frontal lobe? The delayed response and A, not B, tasks are much too simple for adult humans, even if they are much impaired in their behavior. They can use words to hold the memory of where the object is during the delay period, using what is called working memory. But if a more sophisticated, analogous task is used, they show dramatic impairment in this kind of memory. The key task, famous in neuropsychology, is the Wisconsin card-sorting task. A deck of cards with various symbols is shuffled, and the person being tested is given the cards and told to sort them into categories. The investigator does not tell the subject the rules but only says “right” or “wrong” each time a card is sorted. Once the subject has mastered a category, the investigator, unbeknownst to the subject, changes the category. Normal people catch on quickly to the change in category, even though they are only told “right” or “wrong.”

On the other hand, people with damage to the critical region of their frontal lobe find it almost impossible to correctly perform on the task. The problem comes when they have to change to another category. They can’t. They might even say they need to change categories but can’t do it. More precisely, they cannot inhibit responding to the earlier and now incorrect category. Shades of A, not B. They behave like human and monkey infants on this more complex A-B task. Incidentally, patients with the mental disorder schizophrenia also have great difficulty with the card-sorting task.

Counting

Rachel Gelman, now at Rutgers University, and her associates completed an interesting series of studies on the basic numerical abilities of children. It appears that the ability to count, at least a few items, is present as early as two years of age, well before language mastery. Indeed, infants as young as six months can distinguish one, two, or three objects. Toddlers do seem to understand the concept of counting, but their verbal behavior is not perfect. If there are two items, the child might count: “one,” “two,” but if three items, might count: “one,” “two,” “six.” Yet the number of counts she states equals the number of objects.

In addition, human infants have a sense of “numerosity” similar to that of adults. This really means a number sense, a feeling about larger versus smaller numbers of items. In elegant studies, Elizabeth Spelke and her associates, then at the Massachusetts Institute of Technology, tested numerosity ability in six-month-old infants. They were able to discriminate groups of visual objects when one group was twice as large as the other but not when it was only one and a half times as large. The same thing happened when the infants were given auditory sequences. Charles Gallistel, now at Rutgers University, has summarized evidence indicating that animals also may possess a sense of numerosity.

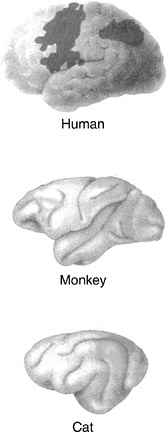

Interestingly, there are neurons in the posterior association areas of the cerebral cortex in cats and monkeys that behave like counters. One of the present authors (RFT) conducted the study in cats (Figure 3-6). A given neuron would respond to each stimulus (sound or light) or every second stimulus, up to about seven stimuli. Similar results were obtained for the monkey. A frontal area becomes involved as well. Two closely analogous areas in the human brain also are activated during numerical computations. A basic ability to count may be common among higher mammals and might be innate.

FIGURE 3-6 Regions in the cerebral cortex in cat and monkey where neurons respond to the number of objects and corresponding areas in the human brain.

Theory of Mind

An experimenter shows a 5-year-old a candy box with pictures of candy on it and asks her what she thinks is in it. She of course replies, “candy,” as would any adult (at least those not suspicious of psychological researchers). Then the child looks inside the box and discovers to her surprise that it actually contains not candy but crayons. The experimenter then asks her what another child who has not yet seen inside the box would think is inside it. The child says, “candy,” amused at the deception. Things go a bit differently with a 3-year-old, however. His response to the initial question is the same, “candy,” but his response to the second question is surprising: an unamused “crayons.” It may surprise you even more to know that in response to further questioning he also claims that he had initially thought there were crayons in the box and had even said that there were! This difference between 3- and 5-year-olds illustrates a critical difference in their conceptions of mind. That is, 3-year-olds do not yet realize that people have representations of the world that may be true or false and that people act on the basis of these mental representations rather than the way the world actually is. In contrast, 5-year-olds understand the nature of “false beliefs.”

Although a sophisticated theory of mind does not develop until about age 5, even by age 1 babies have an amazing understanding of people. Suppose an adult is playing with a one-year-old and looks into two different boxes. When the adult looks into one box she has an expression of happiness, but when she looks in the other box she shows an expression of disgust. She then pushes the two boxes toward the one-year-old. What do you think happens? The baby happily reaches for the box that made the adult happy but won’t touch the other box. The baby not only recognizes when a person is happy or disgusted but also understands that things can make someone feel happy or disgusted.

The development of a theory of mind in children is a striking example of social learning. Over the period from about three to five years of age, children learn by experience with others that people have ideas, thoughts, and emotions and that they act on the basis of these internal representations. They learn that the mind can represent objects and events accurately or inaccurately. As each child’s theory of mind (actually theories about the minds of others) develops and becomes more sophisticated, the child is better able to predict the behavior of others, particularly as this behavior relates to the child.

The mind of a five- or six-year-old child is extraordinary. Not only does she have ideas about the minds of others, she is also aware that she has these ideas about ideas; she has self-awareness, so much so that by six to eight years of age children find it very hard not to think. It is easy to see how and why the child develops her theory of mind. The better her theories are about other individuals’ minds and what their intentions are, the better she can respond to and even influence or control their behavior. The better her theory of mind, the better it is rewarded.

Some researchers believe that the beginnings of a theory of mind develop by way of imitation. As we saw earlier, children will imitate expressions. Partly as a result of imitation children learn that drooping shoulders in another person mean sadness. Facial expressions can mean happiness, sadness, anger, and so on. A primary aspect of the disorder autism is thought to be due to the lack of development of a theory of mind in autistic children.

Autism

Autism is one of the most common serious disorders of childhood. Earlier thought to be rare, it is now known to affect 1 in every 150 children age 10 and younger. If adults with autism are included, more than a million people in the United States have the disorder. It is much more common in boys than girls and the symptoms range from very mild to devastating. Individuals with severe cases can be profoundly retarded and unable to dress or even go to the bathroom without help. They often engage in meaningless repetitive behaviors, including self-injury, and may have temper tantrums and violent outbursts.

Children with the more severe forms of autism show a regular progression of symptoms that counter the normal development of learning in infants and children. At one year of age they still do not babble or point and by age 2 still cannot speak two-word phrases. A striking difference is that autistic infants and children do not imitate adults. If an adult pounds a pair of blocks on the floor, a normal 18-month-old child will do the same, but young children with autism will not. Later, autistic children do not engage in pretend play, do not make friends, and do not make eye contact. They do make repetitive body movements and may have intense tantrums.

At the other extreme are people with Asperger’s syndrome, discovered in 1944 by Hans Asperger, an Austrian pediatrician. It wasn’t until 1994 that the American Psychiatric Association officially recognized Asperger’s syndrome as a form of autism. Children with Asperger’s syndrome are generally bright, sometimes gifted, and particularly adept at solitary repetitive activities like transformer toys or computer programming (see Box 3-2).

Asperger’s syndrome is much more subtle and usually not diagnosed until about age 6 or older. Such children have difficulty making friends, cannot communicate with facial and body expressions, and have an obsessive focus on very narrow interests. All autistic children have one characteristic in common. They seem unable to recognize that other people have minds that differ from their own. They think that what is in their mind is in everyone else’s mind and that how they feel is how everyone else feels. In short, they have never learned to develop a theory of mind.

Autism runs in families. If one identical twin has the disorder, the chances are 60 percent that the other will too, and there is a better than 75 percent chance that a twin without autism will exhibit one or more of its traits. It has been estimated that between 5 and 20 genes are involved in autism. One discovery of particular interest is the involvement of a gene on chromosome 7. A putative “language” gene maps to this same area. We will say more about this language gene in our treatment of language.

Several brain abnormalities have been associated with autism, but it is too early to draw firm conclusions. Some areas of the brain are smaller, particularly those involved in emotional behavior, and some areas are larger. There appear to be abnormalities associated with the cerebellum, an older brain structure earlier thought to be involved only with movement and motor control but now known to be involved in learning, memory, and cognitive processes as well. One particularly interesting finding from brain imaging work concerns the face area. Unlike in normal people, this area does not become active at all in the brains of autistic individuals when they look at strangers’ faces. However, when looking at the faces of loved ones, an autistic person’s face area and related brain areas become much more active than is true for normal people. Why autistic children do not develop a theory of mind and what brain abnormalities are responsible remain mysteries. The lack of imitative behavior in autistic children may prove to be a most important clue.

BOX 3-2 At Michelle Winner’s social-skills clinic in San Jose, California, business is booming. Every week dozens of youngsters with Asperger’s syndrome file in and out of therapy sessions while their anxious mothers run errands or chat quietly in the waiting room. In one session, a rosy-cheeked 12-year-old struggles to describe the emotional reactions of a cartoon character in a video clip; in another, four little boys (like most forms of autism, Asperger’s overwhelmingly affects boys) grapple with the elusive concept of teamwork while playing a game of 20 Questions. Unless prompted to do so, they seldom look at one another, directing their eyes to the wall or ceiling or simply staring off into space. Yet outside the sessions the same children become chatty and animated, displaying an astonishing grasp of the most arcane subjects. Transformer toys, video games, airplane schedules, star charts, dinosaurs. It sounds charming, and indeed would be, except that their interest is all-consuming. After about five minutes, children with Asperger’s, a.k.a. the “little professor” or “geek” syndrome, tend to sound like CDs on autoplay. “Did you ask her if she’s interested in astrophysics?” a mother gently chides her son who has launched into an excruciatingly detailed description of what goes on when a star explodes into a supernova. Although Hans Asperger described the condition in 1944, it wasn’t until 1994 that the American Psychiatric Association officially recognized Asperger’s syndrome as a form of autism with its own diagnostic criteria. It is this recognition, expanding the definition of autism to include everything from the severely retarded to the mildest cases, that is partly responsible for the recent explosion in autism diagnoses. There are differences between Asperger’s and high-functioning autism. Among other things, Asperger’s appears to be even more strongly genetic than classic autism, says Dr. Fred Volkmar, a child psychiatrist at Yale. About a third of the fathers or brothers of children with Asperger’s show signs of the disorder. There appear to be maternal roots as well. The wife of one Silicon Valley software engineer believes that her Asperger’s son represents the fourth generation in just such a lineage.

It was the Silicon Valley connection that led Wired magazine to run its geek-syndrome feature last December. The story was basically a bit of armchair theorizing about a social phenomenon known as assortative mating. In university towns and R&D corridors, it is argued, smart but not particularly well-socialized men today are meeting and marrying women very like themselves, leading to an overload of genes that predispose their children to autism, Asperger’s, and other related disorders. Is there anything to this idea? Perhaps. There is no question that many successful people (not just scientists and engineers but writers and lawyers as well) possess a suite of traits that seem to be, for lack of a better word, Aspergery. The ability to focus intensely and screen out other distractions, for example, is a geeky trait that can be extremely useful to computer programmers. On the other hand, concentration that is too intense (focusing on cracks in the pavement while a taxi is bearing down on you) is clearly, in Darwinian terms, maladaptive. But it may be a mistake to dwell exclusively on the genetics of Asperger’s; there must be other factors involved. Experts suspect that such variables as prenatal positioning in the womb, trauma experienced at birth or random variation in the process of brain development may also play a role. Even if you could identify the genes involved in Asperger’s, it’s not clear what you would do about them. It’s not as if they are lethal genetic defects, like the ones that cause Huntington’s disease or cystic fibrosis. “Let’s say that a decade from now we know all the genes for autism,” suggests Bryna Siegel, a psychologist at the University of California, San Francisco. “And let’s say your unborn child has four of these genes. We may be able to tell you that 80% of the people with those four genes will be fully autistic but that the other 20% will perform in the gifted mathematical range.” Filtering the geeky genes out of high-tech breeding grounds like Silicon Valley, in other words, might remove the very DNA that made these places what they are today. Time Magazine, May 6, 2002, pp. 50-51.

Some very basic psychological principles of learning have been applied with great success in the treatment of autism. For over 30 years, Ivar Lovaas has run a clinic at the University of California at Los Angeles that uses basic operant conditioning techniques to teach communication to autistic children. Many of these children lack even the rudiments of social speech, and the training process proceeds in small steps with the goal of developing normal social speech. For example, suppose the therapist’s goal is to get an autistic child to respond appropriately to the question “What is your name?” The child does not respond, does not look at the therapist, and generally acts as if the therapist doesn’t exist. The training proceeds by rewarding the child with snacks, first for paying attention and then for making eye contact (which might have to first be “prompted” by the therapist). Then the therapist focuses on manually shaping the child’s lips to prompt pronunciation of the first letter of his or her name and from there on rewards any behavior that represents a step (sometimes a very small one) toward the goal of getting the child to pronounce his or her first name when asked. This training can last for weeks before the goal behavior is achieved. The program then continues to more complex speech behavior, always building on what has been learned and always based on the principle of rewarding desired behavior, never unwanted behavior. It is quite amazing to see the results of the training: a formerly uncommunicative child who now uses normal, spontaneous social speech. Lovaas’s success in treating autistic children lends further support to a statement made earlier: The limits of human abilities and their modifiability by learning are not yet known.

Critical Periods in Development

Perhaps the most dramatic example of a critical period in development is imprinting in birds. Birds such as chickens, ducks, and geese, which can walk and feed immediately after hatching, imprint on their mothers within the first day. The chick will stay close to its mother and follow her everywhere. The reader may have seen pictures of a mother duck leading her ducklings across a road, marching in single file.

If the mother is not present, the newborn chick will imprint on whatever object is present if it moves and makes noise. Imprinting works best for objects that look and sound like mother. In the laboratory, chicks have been imprinted on all kinds of objects, even little robots that move and make sounds. The classic example concerns the pioneering ethologist Konrad Lorenz, who imprinted a gaggle of goslings on himself. Under the unfortunate impression that he was their mother, they followed him everywhere.

The critical period for imprinting is very brief. Once the chick has imprinted on the initial object, hopefully the mother, it will no longer imprint on other objects. Perhaps the closest example to imprinting immediately after birth in mammals occurs with olfactory learning. The human infant learns to identify and prefer the odor of his mother within the first day, especially if the mother is breast-feeding.

The now-classic example of a critical period in development for humans and other mammals is the visual system. Donald Hebb, a pioneer in the study of memory and the brain, has described clinical cases of people who were born and grew up with severe cataracts. They were essentially blind. Several had their cataracts removed as adults, after which their visual function, although improved, was still greatly impaired. They could see and quickly learned to identify colors, which are coded in the retina of the eye, but could not identify the shapes of objects. If presented with a wooden triangle, they could not identify it until they could touch and feel it. Object vision is coded in the brain, not the eye.

Being blind in one eye is almost as bad. The classic work on the critical period in vision development was done by two scientists then at Harvard, David Hubel and Torsten Wiesel, who worked initially with cats. If they kept one eye of a kitten closed from the time its eyes opened after birth until about two months of age, the kitten was permanently blind in that eye. If the same procedure was done on an adult cat, there was no vision impairment in the closed eye. This same massive impairment in vision occurs in monkeys and humans; the only difference is the duration of the critical period. For humans it extends to about five years of age. Children born with severe impairments in one eye (for example, a cataract or squint), if not treated, will become permanently blind in that eye. Indeed, even keeping one eye closed for a period of several weeks in a one-year-old child (for example, for a medical procedure) can cause damage. Better to keep both eyes closed. In all these cases the eyes themselves are fine; it is the visual area of the cerebral cortex that is damaged.

Hubel and Wiesel were able to determine the processes in neurons in the visual cortex that led to this devastating loss of vision after one eye had been closed. The basics of the normal human visual system are summarized in Box 3-1. As noted there, both eyes project to each side of the visual cortex. In adults the neurons in the visual cortex are the first to be activated when an object is seen (activity from the eye is relayed to the visual thalamus and on to the cortex). They receive this input from either the left eye or the right eye but not from both (see Figure). They in turn converge on other neurons in the cortex that are activated by input from both eyes, permitting binocular vision.

At birth the situation is quite different. These first-activated cortical neurons receive virtually identical input from both eyes. Over the critical period, if vision is normal in both eyes, a kind of competition occurs so that each neuron ultimately loses input from one eye or the other to yield the adult pattern. But if one eye is closed during the critical period, activation from the seeing eye gradually takes over all the connections in each of these neurons and the input from the shut eye dies away. We think a reason for this is that activation (the occurrence of neuron action potentials) is necessary to maintain synaptic connections in the critical period. Consequently, all input from the shut eye to the visual cortex vanishes.

This provides a particularly clear example of how genes and experience interact in human brain development. The basic connections, the neural circuits, are originally programmed by the genes and their interactions with the developing brain tissue. But the fine details of connections among neurons are guided by experience and learning. If one eye of a kitten is covered by an opaque goggle that transmits light but not detail, vision with that eye is still much impaired. Experience fine-tunes the organization of connections among neurons in the brain. These connections are termed synapses.

Synapses

If any one process is key to the organization of the brain and to the formation of memory it is the formation of synapses, the functional connections from one neuron to another. Each nerve cell has one fiber, called the axon (Greek for “axis”), extending out to connect with other neurons, and many other fibers extending out from the body of the cell that receive input from other neurons. These fibers are called dendrites (Greek for “tree”; the dendrites on a neuron resemble the branches of a tree). Each dendrite is covered by small bumps or spines. Each spine is a synapse receiving an axon connection from another neuron (see Figure 3-7). Physically, synapses consist of a small synaptic terminal (presynaptic, that is, before the synapse) in close apposition to a spine on the postsynaptic (after the synapse) neuron dendrite. There is a very small space between the two. When this presynaptic terminal is activated it releases a tiny amount of a chemical neurotransmitter that diffuses across to the postsynaptic membrane to activate the neuron. The number of synapses on a neuron is astonishing. A major type of neuron in the cerebral cortex may receive up to 10,000 synaptic connections from other neurons. The champions are a type of neuron in an older brain structure, the Purkinje neurons in the cerebellum, named for a famous anatomist. Each Purkinje neuron (there are millions) receives upward of 200,000 synaptic connections from other neurons!

FIGURE 3-7 A typical neuron in the brain. The dendrites are covered with little bumps or spines. Each spine is a synapse, a connection from another neuron.

Synapses form, grow, and die from well before birth through adulthood to death. The process of synaptic formation and growth is called synaptogenesis. Thanks to the work of Peter Huttenlocher at the University of Chicago and many other scientists who have studied the brains of cadavers, we now have solid data on the rates of synaptogenesis in various regions of the cerebral cortex in humans of all ages.

At birth the human brain is immature. New neurons are still forming and growing, synapses are multiplying, and myelin formation is far from complete (myelin is the insulating covering on nerve fibers that increases the speed of the electrical conduction of the neurons). The newborn brain is about one-fourth the size it will eventually reach, and most of its growth occurs in the first year of life. Most synapses connect to other neurons at terminals on dendrites. So the growth and elaboration of dendrites are closely associated with increased numbers of synapses.

At birth most of the connections, including dendritic growth and branching and synapse formation, are missing. Much of the growth occurs during the first year or so of life. At the end of this period the total number of synapses in the infant brain is twice that of an adult’s. Following this early period of exuberant growth, there is a slow and prolonged period of elimination and pruning of excess synaptic contacts, which is not completed until late adolescence in many cortical areas. But new synapses, and indeed new neurons, form throughout life in some regions of the brain.

The growth of and decline in the numbers of synapses in various regions of the cerebral cortex are closely associated with critical periods in development. This is strikingly evident in the visual area of the human cortex. The total number of synapses is very low at birth, skyrockets to a peak at about 6 to 12 months of age, and then gradually declines until about age 10, after which it is relatively stable until old age. The period of maximum synaptogenesis closely corresponds with the period of maximum plasticity and maximum sensitivity to impairment in the visual system.

Hearing in infants is poor at birth but reaches virtual adult competence by about six months. This is closely paralleled by the growth of synapses in the human primary auditory cortex (Heschl’s gyrus). By six months of age there has been a massive increase in the number of synapses, which approach adult levels by about three years of age and then gradually decline until about age 10.

Some musicians have perfect pitch; they can immediately identify the pitch of any note. The results of one study showed that all musicians who had perfect pitch began musical training before age 6. Those who began training after the age of 6 did not have perfect pitch, corresponding to the decline in synaptogenesis in the auditory cortex from age 3 to age 10. It is not known whether there are individuals with perfect pitch who did not begin musical training until after age 6 or so. But, of course, we must be careful about cause and effect in findings like this. Perhaps people with perfect pitch are so musically gifted that they naturally seek out musical training early.

The development of hearing is a clear example of a critical period. Many cases of early deafness can be helped by a device implanted in the inner ear, the cochlea. This device codes sounds into electrical impulses delivered to the cochlea to activate auditory nerve fibers. The development of cochlear implants has made it possible for some individuals who had been deaf from birth to learn to hear and understand speech. Interestingly, the implants are most effective if implanted before the age of 3. They decrease in effectiveness when implanted at older ages. Whether this is due to decreased plasticity in the auditory cortex or in the language areas of the cortex is not known. The growth of synapses in the language areas of the cortex also corresponds closely to the development of language functions.

As discussed earlier, infants show a dramatic increase in their ability to solve delayed response and A, not B, tasks from 7 to about 12 months of age and beyond. The prefrontal cortex, the region critical for these tasks, shows a rapid increase in the number of synapses from birth to one year of age and then remains high until at least age 5, declining in adolescence.

Enhancing Your Child’s Mind

The Mozart Effect

Parents have always been interested in ways to improve their child’s learning abilities and performance in school. A recent popular fad is the so-called Mozart effect. Stores now sell Mozart CDs for young children to “enhance” their intelligence. This fad derived from studies on college students in which it was reported that listening to a Mozart sonata enhanced their subsequent performance on spatial-temporal IQ-like tasks. What was also reported but widely overlooked in the popular press is that the Mozart effect lasted only about 10 minutes. There is, in fact, no evidence to support such an effect (see Box 3-3).

There is some evidence that musical training may enhance performance on some tests of mental abilities, but the effects are not great. To some extent, this is another chicken-and-egg problem. Does musical training enhance performance on the tests or do children who take musical training exhibit enhanced performance on the tests because of their particular interests and abilities? But early musical training perhaps increases the possibility the child will have perfect pitch, as we noted, presumably enhancing later musical capabilities. Actually, one recent and carefully done study found a greater increase in the IQ scores of children after taking music lessons than after taking drama or no lessons.

Enriched Environment

Raising animals in a rich environment can result in increased brain tissue and improved performance on memory tests. Much of this work has been done with rats. The “rich” rat environment involved raising rats in social groups in large cages with exercise wheels, toys, and climbing terrain. Control “poor” rats were raised individually in standard laboratory cages without the stimulating objects the rich rats had. Both the rich and the poor rats were kept clean and given sufficient food and water. Results of these studies were striking: Rich rats had a substantially thicker cerebral cortex, the highest region of the brain and the substrate of cognition, with many more synaptic connections, than the poor rats. They also learned to run mazes better.

The popular press made much of the enriched-environment effect, and commercial devices were developed to “enrich” the environment of babies’ cribs with bells, whistles, and moving objects. It turns out that the wrong conclusion was drawn from the animal literature. The data were clear; the rich rats had more developed brains than the poor rats. But when wild rats were examined, their brains were like those of the rich laboratory rats. Indeed, in one study a large area was fenced off outside the psychology building at the University of California at Berkeley, and rats were raised in this semiwild environment. They also developed rich rat brains. It seems that it was the poor rats whose brains developed abnormally, from being raised in isolation without the stimulation of normal rat life.

BOX 3-3 Several years ago, great excitement arose over a report published in Nature which claimed that listening to the music of Mozart enhanced intellectual performance, increasing IQ by the equivalent of eight to nine points as measured by portions of the Stanford-Binet intelligence scale. Dubbed the “Mozart effect,” this claim was widely disseminated by the popular media. Parents were encouraged to play classical music to their infants and children and even to listen to such music during pregnancy. Companies responded by selling Mozart effect kits, including tapes and players. (An aspect of the Nature account overlooked by the media is that the effect was reported to last only about 10 to 15 minutes.) The authors of the Nature report subsequently offered a “neurophysiological” rationale for their claim. This rationale essentially held that exposure to complex musical compositions excites cortical neuron firing patterns similar to those used in spatial-temporal reasoning, so that performance on spatial-temporal tasks is positively affected. Several groups attempted to replicate the Mozart effect, with consistently negative results. One careful study precisely replicated the conditions described in the original study. Yet the results were entirely negative, even though the subjects were “significantly happier” listening to Mozart than they were listening to a control piece of postmodern music by Philip Glass. One recent report indicates a slight improvement in performance after listening to music by Mozart and Schubert as compared with silence. But listening to a pleasant story had the same effect, a finding that negates the brain model. Mood appeared to be the critical variable in this study. Why did the Mozart effect receive so much attention, particularly if it lasts only minutes? Perhaps because the initial positive result was published in Nature, a scientific journal routinely viewed by the media as being very prestigious. Another factor, no doubt, is that exposing one’s child to music appeared to be an easy way to make her or him smarter, much easier than reading to the child regularly. Moreover, the so-called neurophysiological rationale provided for the effect probably enhanced its scientific credibility in the eyes of the media. Actually, this rationale is not neurophysiological at all: There is no evidence to support the argument that music excites cortical firing patterns similar to those used in spatial-temporal tasks.

There are parallels in tragic cases where children have been raised in isolation. A California girl spent most of her first 14 years of life tied to a chair in a small room. She was never able to acquire normal language. There are also reports of impaired infant development in orphanages in Eastern Europe where the infants were left alone day and night except for feedings and changings.

What are the best things that concerned parents can do to enhance the cognitive development of their infants? The experts tell us to do what comes naturally. Talk to them, play with them, make funny faces, pay attention to them—spend time interacting with them. Mozart and noisy mobiles don’t really help. It is people they want to interact with.