Reference Manual on Scientific Evidence: Fourth Edition (2025)

Chapter: Reference Guide on Computer Science

Reference Guide on Computer Science

BRIAN N. LEVINE, JOANNE PASQUARELLI, AND CLAY SHIELDS

Brian N. Levine, Ph.D., is Professor, Manning College of Information and Computer Science, University of Massachusetts Amherst.

Joanne Pasquarelli, Esq., Washington, D.C.

Clay Shields, Ph.D., is Professor, Department of Computer Science, Georgetown University.

CONTENTS

Binary Encoding, Bits, and Bytes

Central Processing Units (CPUs)

Graphics Processing Units (GPUs)

Flash Memory, SSDs, and USB Drives

File Systems, File Metadata, and Databases

Algorithms, Programs, and Software

Programs and Programming Languages

Cryptographic Key Sizes and Attacking Encryption

Attacking Cryptographic Hashes

IP Network and Link Layer Addresses

Cloud Computing and Serverless Systems

Wireless Networking and Mobile Devices

Virtual Private Networking (VPN)

Anonymous Communication Systems

Network Identifiers and Third-Party Data Collection

Investigating Peer-to-Peer File-Sharing Systems

Investigating Anonymous Communication Systems

What You Have (Physical Tokens)

Forensics and Digital Evidence

Computer Science from the Perspective of Federal Rule of Evidence 702

FIGURES

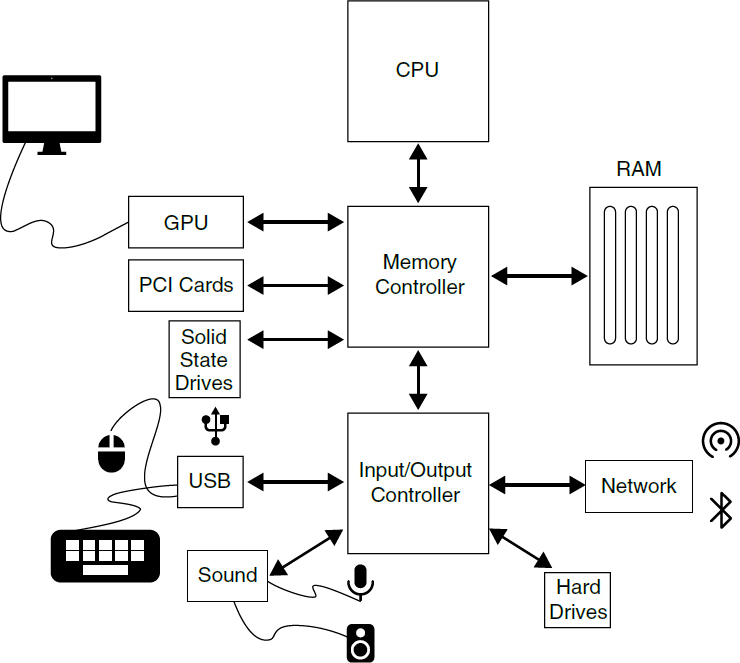

1. The components of a computer system

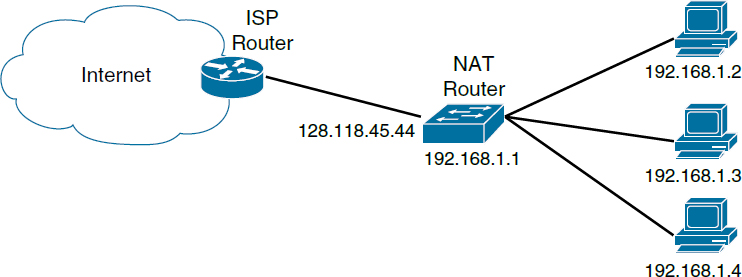

3. Network Address Translation box hides a larger network behind it from the rest of the internet

4. Racks of computers or “rack” servers in a data center

5. A client-server network architecture. Dotted lines represent connections across the internet

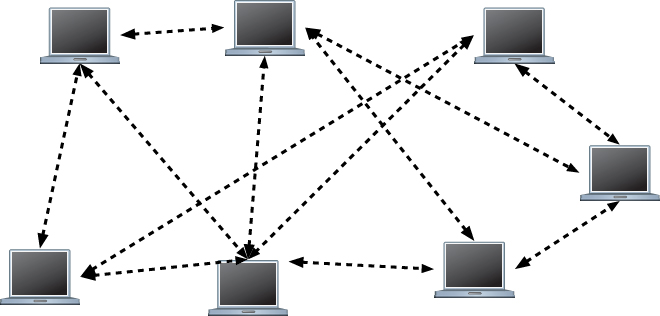

6. A peer-to-peer network architecture. Dotted lines represent connections across the internet

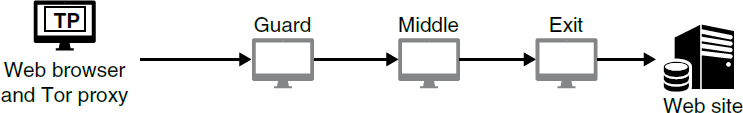

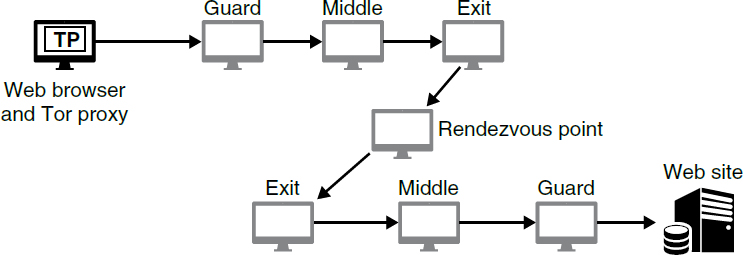

7. Tor Browser connects a user to a website in a multi-proxy setting

Introduction

Computers have become inescapable. Virtually every adult and teen carries and uses a computer in the form of a smartphone holding incredible amounts of information about the user. Computers are present as wearable devices that monitor our health or are present in our buildings as fixtures that monitor and control our environment. Cars and critical infrastructure no longer work without them. We commonly use computers to communicate, seek and find information, navigate, and supplement our memory—and evidence of our actions often remains. Companies store massive amounts of data electronically about their activities and, where applicable, those of their customers. Accordingly, our everyday movements, communications, and actions often result in critical evidence that is relevant to both criminal and civil disputes. Court cases often depend on a forensic analysis of this stored digital evidence of the computational activity that has occurred or been recorded, particularly in the realm of emails and other communications. Computers will be part of many legal cases, in some aspect, for the foreseeable future.

In this reference guide, we provide a brief overview of how computers operate and communicate; the basics of algorithms, software, and cryptography; how computers communicate over networks like the internet; computer security goals and the process of meeting them; and what digital forensic evidence can be collected to support or refute some legal theories. Given the wide scope of topics, we are necessarily brief in our treatment of these topics, but we hope this reference guide provides a basis for understanding the technology that is involved in many legal issues. Standard textbooks are available that cover many of these topics in greater detail, such as from Kurose and Ross,1 Anderson,2 Bishop,3 and Kernighan.4 See also Darrell5 and Kerr6 for legal textbooks concerned with technology and computers.

Computer Organization

We begin with a general overview of computer organization, including hardware, file systems, databases, and virtual machines. Computer networking is covered in a later section of this guide.

1. James Kurose & Keith Ross, Computer Networking: A Top-Down Approach (7th ed. 2016).

2. Ross Anderson, Security Engineering: A Guide to Building Dependable Distributed Systems (3d ed. 2020).

3. Matt Bishop, Computer Security: Art and Science (2d ed. 2018).

4. Brian W. Kernighan, Understanding the Digital World: What You Need to Know about Computers, the Internet, Privacy, and Security (2d ed. 2021).

5. Keith B. Darrell, Issues in Internet Law: Society, Technology, and the Law (11th ed. 2018).

6. Orin Kerr, Computer Crime Law (5th ed. 2022).

Overview of Computer Hardware

A computer is a tool that processes information at blazing speeds. It performs this feat by encoding the information as electrical signals that can be processed as billions of operations per second, stored in huge quantities, or sent over a network. The hardware of a computer is designed to process data quickly through simple mathematical operations. It is the combination of computational power with functionality provided by programs that makes it incredibly useful. We describe programs and how they are created in “Algorithms, Programs, and Software.”

Binary Encoding, Bits, and Bytes

Every computer performs computations using a series of high and low voltages that represent a series of 1 or 0 values, each value being called a bit. All information on the computer, including programs or data files, is a series of bits, with the series representing some numeric value that has meaning in a particular context. This representation is called binary encoding. Each added bit in a series doubles the number of possible values that can be represented. A single bit can represent two values; two bits can represent four values; three bits eight values, and so on. The most common unit of data is 8 bits combined; this unit is named a byte, and it can represent 256 possible values and often represents one character of text. Most data are measured in terms of bytes, and it is common for measurements of bits to be represented with a lowercase b and bytes with an uppercase B. Units of a thousand bytes are called a kilobyte, abbreviated KB; units of a million are a megabyte, or MB; units of a billion bytes are gigabytes, or GB; and units of a trillion bytes are terabytes, or TB.

Buses and Controllers

Almost all computers have a similar physical structure. Typically, there is a motherboard that provides a base for other components and for communication between them, using electrical connections called buses. The buses connect components called controllers that handle receiving data from the user, from storage, or from the network and sending that data to the central processing unit (CPU). A typical motherboard will have a variety of buses, some that are very high speed to access random access memory (RAM) or to other components like graphical processing units (GPUs), and others that are lower speed and connect devices like Universal Serial Bus (USB) mice and keyboards.

Central Processing Units (CPUs)

The primary computational component that is mounted on the motherboard is the central processing unit (CPU), which is often the single most expensive component. Older computers most often had one CPU that contained one computational core whose speed was governed by a clock that generated electrical signals to keep computations internally synchronized. Some higher-power computers had more than one CPU mounted on the motherboard and could perform multiple computations at the same time with one set of computations in each CPU.

Advances in computational speed initially came from increasing the tick speed of the internal clock. However, this approach reached physical limits, mostly in terms of power consumption and related heat generation. More recently, designers started adding additional computational cores to a single CPU so that each CPU could perform multiple computations simultaneously. Now a single physical CPU typically has many computational cores, which enable new uses like hosting virtual machines, as discussed below. The number of cores varies depending on design and cost of the CPU; low-cost CPUs might have 1 to 4 cores, while high-end CPUs might have 64 cores or more, with a range in between. With mobile computing becoming widespread, designers often work to decrease the power required for computation so that mobile devices, like phones and laptops, can last longer while running on battery power.

Graphics Processing Units (GPUs)

The other component that is increasingly used for computation is the graphics processing unit, or GPU. The GPU was originally used to increase the speed of on-screen video, particularly for games, but over time other uses have become popular. GPUs are excellent for machine learning and cryptocurrencies, and they have become essential to those areas.7

GPUs differ from CPUs in that they have many more computational cores than a CPU, though each core is more specialized and limited in what it can do. Whereas a CPU might have between 4 and 32 cores, a GPU might have thousands of less-powerful cores. In addition, GPUs execute tasks delegated by the CPU during the execution of a program, and they do not run programs on their own.

7. For a detailed discussion of machine learning, see James E. Baker and Laurie N. Hobart, Reference Guide on Artificial Intelligence, “What is Machine Learning?” section, in this manual.

Random Access Memory (RAM)

The CPU stores some working data in its own circuitry, but such storage is expensive and displaces circuitry that could be used for computation. Each CPU has several caches of memory: the high speed but smaller level 1 cache, and one or more other levels of caches, each larger and slower than the prior level. More storage is supplied off the CPU in random access memory (or RAM). RAM is a large bank of hardware memory that can be accessed relatively quickly by the CPU when it needs data. RAM is often connected to the CPU on a dedicated, high-speed bus so there is no conflict with other data coming from other devices. GPUs have less cache, instead having high-bandwidth connections to the RAM to supply the data needed.

RAM is volatile memory, meaning it requires continuous power to store data. For desktop computers this means that data can be lost when power is lost. For laptops and some battery-powered devices there is usually a mechanism to store the contents of RAM in longer-term storage when it appears the battery will become exhausted; other devices have a memory-preserving sleep mode.

Data Storage

Flash Memory, SSDs, and USB Drives

Most computers now use flash memory for internal data storage. Flash memory is a type of hardware-based storage similar to RAM, except that it is nonvolatile. When power is removed from flash memory the data is not lost. Flash is not a replacement for RAM in part because it is much slower and in part because flash has a characteristic that the memory cells can only be written to a limited number of times. Flash memory is commonly used for solid state drives (SSDs). These drives are an improvement over hard drives that are based on spinning magnetic platters, described below. SSDs have faster access speeds and lower power usage, but they can be more expensive for the same amount of storage.

Flash is also commonly used in portable storage. It is incorporated into a huge range of cards, sticks, and keys, which sport a variety of connectors. Perhaps the most common type of portable storage is the USB drive—often referred to as a “USB key fob” or “USB key”—which connects via a computer’s USB port. Card readers that support a variety of formats are available, and some laptops include built-in readers.

Hard Drives

Non-SSD hard drives use an older mechanical technology based on magnetism to store bits of data on a rotating platter. While these drives are less common in laptops and PCs, they are still used in many server applications for bulk data storage. The size of the platter has been getting smaller over time, though the density of information written on the platter continues to increase. Desktop drives commonly use 3.5-inch platters, while laptops use 2.5-inch platters, and embedded devices and the smallest laptops use drives with 1.5-inch platters.

Other Media

While SSDs and hard drives are most common, there are other types of media still in use. Optical media, such as compact discs (CDs), digital video discs (DVDs), and Blu-ray discs, encode data in a way that they are readable by laser light. Current technologies store data on discs by encoding bits as tiny pits that change the reflectivity in reflective media and can be read as bits by a laser. Tape is sometimes used as a backup media. It is a long, magnetic, ribbon-like material that is stored on spools that are often inside a cartridge. Tape provides sequential access to data instead of random access. The tape must be read from front to back. Tape can provide significant amounts of storage inexpensively, though the access rate is slow.

General System Diagram

Figure 1 shows the basic layout of the devices in a computer. The high-speed bus and low-speed bus are separate components on older computers, but some or all of that functionality might be incorporated directly into modern CPUs.

Operating Systems

The hardware of a computer is shared across all the programs using it. Operating systems (OS) are a common and specialized type of software that mediates between the system hardware and the software that wants to use it. Operating systems provide a number of useful functions. They manage resources shared between programs, such as access to devices like storage and input/output devices and allocating memory. They provide a common interface to hardware so that programmers don’t need to be aware of specific differences between individual hardware brands or components by providing an interface for drivers, which specify how the OS can interact with hardware. They provide a user interface so that users can start

and stop programs and access files and other information. Computer programs written for one operating system are generally not compatible and will not work with others. Some OS are designed for desktop and laptop use, like Microsoft Windows and Apple’s macOS. Others are used for mobile devices, like Google’s Android or Apple’s iOS. Still others are most commonly used for servers, like Linux.

File Systems, File Metadata, and Databases

Computer storage media provide the ability to store information, but they do not inherently organize it; they come as large blank storage areas. Computer operating systems format storage media with a file system that provides a structure for storing and retrieving directories and files. Operating systems typically protect

files that are critical for the OS to function and isolate user-generated files between users. The file system also keeps accounting information about each file. This is generally referred to as the MAC times, which are the times a file was last modified, when it was last accessed, or when it was created. These times may or may not be entirely accurate, depending on the operating system being used, if the system clock is accurately set, and the circumstances of the file creation; they also might be manually changed. They are not inherently reliable. These data are a type of metadata, which is data about or related to other data.

File metadata can be instrumental in litigation as it can reveal many facts relevant to key issues in litigation, such as the location and the date and time files were created, accessed, or modified. Often the most important role of file metadata is to support or attack the credibility of evidence—both testimonial evidence and document evidence. For example, in a contract dispute metadata revealed the creation date of a key document was almost one year later than the defendant had represented. Of note, the creation date shown by the file’s metadata was in fact just two days prior to the document being disclosed to the court. While metadata still requires authentication to be introduced as evidence of the true creation date, it provided sufficient grounds to challenge the credibility of the defendant’s prior assertions regarding the existence of the document.8 A court may deem metadata to be self-authenticating if the opposing party shows no reason to doubt the authenticity of the metadata.9 Metadata regarding the time a file is created, accessed, or modified can also be relevant in medical malpractice cases where electronic medical record systems are utilized. In today’s world of electronic files, metadata can be relevant in any case where the creation and access times for key files are important to pending litigation.

When a file is written to storage by a program, it is itself formatted in a very specific way according to the design of the program and the information the program needs to store. The internal format of the file depends on the program being used, but many files include additional useful information about the file that may not be visible to the user without special tools or without using program features to access it. For example, one image file format is the exchangeable image file format (EXIF) standard, which can include the time and date the photo was taken, a GPS location, as well as details on the camera that took the image. Similarly, Microsoft Office documents contain metadata about the creation of the document, often including the author and the amount of time spent editing the document and changes made, among many other things. Any file under the control of a user can be altered. Thus, just like the images themselves, EXIF data could be easily altered by the owner of a file, meaning the authenticity of the metadata should be confirmed before accepting it as evidence.

8. SPV-LS v. Transamerica Life Ins. Co., 912 F.3d 1106, 1114 (8th Cir. 2019).

9. Tamares Las Vegas Props., LLC v. Travelers Indemnity Co., 586 F. Supp. 2d 1022 (D. Nev. 2022), cited by CNA Ins. Co. Ltd. v. Expeditors Int’l of Wash., 2023 WL 6892565 (W.D. Wash.).

EXIF data can become critical evidence in criminal cases. For example, an image of child sexual abuse material (otherwise known as child pornography) containing EXIF data revealing the GPS location where the image was taken with an iPhone 4 was posted to a website. This EXIF data led the FBI to the owner of the iPhone who admitted to taking the image. During the resulting prosecution, the defendant filed a suppression motion arguing the EXIF data was protected by the Fourth Amendment because it was not visible to anyone viewing the image on the website. The court denied the motion holding there is no Fourth Amendment protection for the EXIF data as it was publicly accessible when the image was publicly posted.10

It is also important to note that the internal format of the data may not translate directly to what is shown on the screen. Additional information may be recoverable from careful analysis. For example, a portable document format (PDF) file is used to format and display documents for printing. Making a PDF file is often the final step in releasing documents in public settings. Information that was intended to be redacted from PDF documents is sometimes recoverable with special tools or simple mechanisms. For example, if the redaction involves covering text with a black rectangle, the redacted text may still be stored within the file and recoverable using the right tools.

A database is an alternative way of organizing a collection of information. Instead of files, the database has entries stored in tables. Most often, all items in a table are related information. For example, one table might consist of a set of names; another table might consist of a set of addresses. Internal identifiers are used to relate items across tables. Databases use a different interface than file systems. Most often, there is a computer language that can issue queries to the database so that it can retrieve items that match the queries. (For example, a query may be issued to identify all rows in a table related to a specific city.) In contrast, files are most often accessed by the file name and perhaps what directory the file is in; while some operating systems support searching files by content, it is not the primary means of accessing data.

Virtual Machines

Computers exist in the physical world, each taking up some space and requiring cabling for power and networking—which can be inconvenient for those who need an additional computer, for example, to run different operating systems or for those who want their own isolated server for security reasons. Providing these individual devices—real, physical devices—can be cumbersome and expensive.

10. United States v. Post, 997 F. Supp. 2d 602 (S.D. Tex. 2014).

Virtual machines (VMs) have become a commonplace solution to this problem. A virtual machine is a software program that pretends to be computer hardware while running on a host’s real hardware. A virtual machine makes use of one or more of the host computer’s CPUs or computational cores like any other program running on the host. The VM is allocated storage on the host computer, and it provides network access through the host computer. Within the environment provided by the VM, it is then possible to install the same or a different operating system as the host and then run programs on the VM. Programs operating in a virtual machine most often run the same way they do on a physical machine; some malicious software will attempt to detect if it is in a virtual machine and change its operation as a result, but few if any benign programs do so. Virtual machines are designed to be completely separate from each other and to isolate operations in the virtual machine from the host systems and other VMs.

Virtual machines have several uses. The most common is internet server hosting. Instead of paying to have an expensive physical server placed in a secure co-location facility, users and companies can instead rent virtual machines from services such as Amazon Web Services, Google Cloud, or Microsoft Azure, among many others. The use of rented servers is often called cloud computing or a serverless architecture (see section titled “Cloud Computing and Serverless Systems” below) and represents a multibillion-dollar market, although not all such services are provided through virtual machines. VMs are also commonly used to increase security. Untrusted software can be run in isolation on a VM in case the software acts maliciously. If it does, the impact is isolated, and the virtual machine can be easily discarded. Finally, individual users might run virtual machines to experiment with different operating systems. Rather than having a computer for each, one computer can host many different operating systems that can run different software.

Algorithms, Programs, and Software

The operation of programs is central to understanding other topics surrounding computing. Here, we provide an overview of how programs are developed and turned into software products, which is relevant to legal issues that address what software does and how it was developed.

An algorithm is a series of ordered steps used to complete a task.11 We use algorithms in many aspects of our daily life: a recipe to cook a certain dish or the diagnosis and repair of an item are both common examples of algorithms that

11. Algorithms (and in particular complex algorithms) are discussed in detail in James E. Baker and Laurie N. Hobart, Reference Guide on Artificial Intelligence, in this manual. See, e.g., sections titled “Complex Algorithms,” “The Heart of AI Is the Algorithm,” “Forms of Algorithmic Bias,” and “Algorithms that Predict Human Behavior.”

humans follow. An algorithm must be specified in terms that the person following it can understand and complete; you couldn’t give a child direction on how to drive somewhere as they don’t know how to drive, for example. It is also possible to define an algorithm as a combination of many smaller algorithms. For example, one might follow one algorithm to diagnose the cause of an item’s malfunction, and then repair the item following an algorithm determined by the diagnosis.

Programs and Programming Languages

A computer program is an algorithm that a computer can run to solve a computational problem or task. An application is a program that a user interacts with directly. When a program is in a format that the computer’s hardware can execute directly, it is called an executable. Because this executable format is difficult for humans to edit, programs are instead created by programmers who determine what the algorithm should do and then write source code that specifies the steps of the algorithm in a human-understandable programming language.

Some programming languages are called interpreted languages; sometimes these are called scripting languages, or scripts. In these languages there exists an executable called an interpreter that takes the source code and executes the algorithm one step at a time, essentially performing the interpretation and execution concurrently. Other languages are compiled languages. Programs in these languages are translated into executable form using a program called a compiler. This resulting executable can then be distributed and run independently of the source code. For compiled languages, translation to an executable occurs entirely ahead of the execution of the program. In general, compiled programs have better performance than interpreted programs but require more programmer time to develop and are closely tied to the system where they are expected to run; they often cannot run on other systems without changes or special support. To be able to run on many different types of hardware, some languages are designed to use an intermediate format that is independent of the hardware being used and which gets translated as needed to run on a local processor. For example, Java is designed to run on a Java virtual machine (JVM) or equivalent. Instead of recompiling the Java source code for each computer platform, the JVM only is recompiled. To execute a compiled Java program, the JVM translates from the program’s instructions to the actual hardware instructions; performance is better than purely interpreted code.

There are many different programming languages, each of which is generally used to solve some class of problems. Some languages are most convenient to use for the web, with some on the web server (such as Ruby and PHP) and some in the web browser (like JavaScript); some for data analysis (like Python, R, and Julia); others for games (commonly C++ and C#); others for computational infrastructure (like C and Rust); and still others for mobile application

development (like Swift and Java). There is a significant overlap between languages, and most can be used for multiple purposes.

It is also possible to partially reverse the compilation process, which is called decompilation. It is not straightforward to decompile code. For example, decompilation typically does not produce the exact source code used to create the executable because information of use only to human programmers, like names or labels indicating what a value means, is often removed as a step in the compilation process.

Computer programmers often split programs into smaller pieces for a variety of reasons, including to reuse algorithms and to allow many programmers to work on the same program at once. Because of this, code is often written in a modular form where smaller sub-algorithms can be assembled to form the larger overall algorithm. Within a program, these sub-algorithms might be called functions, methods, or procedures interchangeably. Often, so that such functions can be shared among many programs, they are placed into libraries. These libraries might natively be part of the ecosystem of the programming language; they might be libraries that are freely available for download; they might be libraries that are commercially available for license; or they might be part of the computer’s installed operating system, as described above. The term software refers to any or all of these various executable portions of code, from applications to libraries to the operating system.

Software Engineering

Programming is just one aspect of the larger topic of software engineering. Software engineering is a process that also involves design, testing, documentation, and maintenance of software. Many factors affect the complexity of the process, including the number of users of the software, the complexity of the software, the longevity of the software, the level of reliability needed, and the number of programmers coordinating simultaneously or longitudinally over time. A critical aspect of software engineering is communicating and documenting the requirements of a project.12

A variety of tools are commonly used to manage these processes. For example, splitting software into modules helps with the development of larger projects. Different teams of programmers can work on and test each module separately,

12. For a general discussion of the engineering design process, see Chaouki T. Abdallah et al., Reference Guide on Engineering, “The Engineering Design Process” section, in this manual. The role of computers and the implications of their use in design and complex systems is discussed in the Reference Guide on Engineering in the “Complex Systems” and “Computers, Artificial Intelligence, and Machine Learning” sections.

then combine them into a larger program. This approach leads to a model where most programs are layered. Each layer is independent and presents an interface known as the application programming interface (API) that defines how the software in that layer can be accessed. Each layer can then be changed or replaced without major modifications overall as long as the layer maintains a consistent API. APIs can be an important part of commercial products. For example, services like Amazon Web Services, Google Cloud, and Microsoft Azure provide computational services for rent that can be accessed through an API.

Software projects can vary significantly in size. Software size is often measured in lines of code, which is what it seems: a count of how long the programming language portion is. This metric is not easily comparable between languages and is a rough approximation of effort at best.

A small module or program might be only a few tens or hundreds of lines of code. A large project, like the source code for a computer operating system, might contain thousands of smaller modules and be many millions of lines long. It is thought that the code for Microsoft Windows totals over 50 million lines; the Linux operating system has about 28 million lines of code as of the time of this writing.

Code is typically stored in a source code repository. This is a specialized database, often called version control software, that can track changes made by different programmers to the code, which can help programmers and forensic investigators alike understand when and where code was added. A repository is helpful in merging new code with existing code, including new code in a program for testing, and submitting code for managerial or peer review before final inclusion. It also allows for different code versions and reverting source code changes should there be errors. Common tools for source code management include git, CVS, svn, and Visual SourceSafe. These can be useful sources that can show how software was developed over time.

Many kinds of testing methodologies are involved in verifying the correct operation of software. For example, unit tests ensure that the smallest components of a code base function as expected. Regression tests ensure that as new features are added or as repairs are made the current level of performance of a software system is not reduced or new errors added. Integration tests ensure that distinct subsystems interoperate as expected. Often software systems involve a continuous integration continuous delivery (CI/CD) pipeline where new changes are tested against a battery of tests before they are accepted into the main code base; this testing often occurs overnight. The goal of CI/CD is that software is always available in a state that is operational and correct, so that it can be delivered to the customer at any time. As a code base is expanded, developers add to the battery of tests to keep the CI/CD pipeline current. Finally, many larger projects will involve a team of quality assurance (QA) tests that test for bugs, rate performance, and ensure a high-quality user experience before software is released.

Source Code Distribution and Software Licensing

Most commercial programs that are developed by companies do not disclose source code, and the program’s source code is not shared with others. This closed-source approach helps protect trade secrets and avoids aiding competitors. In contrast, some programs are developed openly, and the source code is publicly available. For example, Microsoft’s GitHub.com platform hosts many open-source projects.

Software is commonly licensed, rather than being sold outright. Licensing allows the software vendor to impose conditions on its use, including prohibitions on copying, distribution, and reverse engineering. Many commercial programs are licensed under proprietary End User License Agreements (EULAs) that users must accept to acquire or use the software, and copyright is retained by the company.

Open-source software is often distributed under what are commonly called Free and Open Source (FOSS) licenses and are sometimes referred to less accurately as “free software.” There are a variety of open-source licenses; most allow use, copying, and modifications of the program. They vary on whether the code can be sold, redistributed, or sublicensed. Some licenses, most notably the GNU Public License (GPL), allow modification and private use but require that any such modifications that are sold or distributed are also licensed under the GPL, ensuring that source code improvements become available and that software will be improved over time by interested parties but remain available to all. As this approach is quite different from usual copyright, this license is referred to as copyleft.

Other open-source licenses allow sale and modification without requiring source code distribution; the BSD license, for example, allows use of and modification of code if the original license is included in future distributions. Similar licenses exist for other material, such as writing and photographs. A common one is called the Creative Commons license.

Source Code Review

In many situations, parties in a legal proceeding disagree about what software does, making source code review a key part of the discovery process so that both parties can assess essential evidence. This scenario arises in cases involving product liability, trade secrets, and patent infringement and is sought by defendants in criminal cases where key evidence was collected through software. Source code review intrudes on information protected as proprietary, trade secret, or law-enforcement sensitive information and therefore should only be used when the threshold has been met to overcome these software protections. Source code

review occurs when the software code is the central evidence to the case, such as in the seminal software copyright case of Google, L.L.C. v. Oracle America, Inc. Source code review was also used by plaintiffs in a key Toyota product liability case to argue that the software governing the electronic throttle control system was defective, causing sudden acceleration events that resulted in numerous deaths and injuries.13

However, protections for trade secret, proprietary, and law enforcement sensitive information should prevent disclosure of source code when the software is not the central evidence of the case itself. For example, in a patent infringement case involving wireless communication technology, a U.S. district court denied the plaintiff the opportunity to review a third party’s source code, holding that the third party’s concerns regarding the security of the source code could not be ignored despite the protective order. The court stated that the entity owning the source code was not a party to the lawsuit nor were they accused of patent infringement. Furthermore, the court held that the relevant information could be obtained from deposing a company engineer rather than from reviewing the source code.14 Similarly, software used in criminal investigations becomes essential when it is used to collect evidence in the prosecution’s case-in-chief. For example, in United States v. Budziak, the Ninth Circuit held that the source code was material to the defense’s case relying on the fact that the evidence presented at trial to support the distribution of child pornography charge was devoted to the government’s investigative software (called eP2P) and the evidence that was collected by its use. No court has held the investigative source code was material to the defense when the prosecution’s case-in-chief does not include evidence collected through the software.15

The demand for source code review in civil cases has resulted in an industry of source code review companies offering experts who can conduct code reviews and testify in court proceedings. Often the code being reviewed is proprietary and may contain information like trade secrets, so the source code is made available only on a secure machine, disconnected from any network, sometimes at one of the parties’ representatives’ offices. The parties often agree to provide the same set of tools to be used in the code review that the programmers used to develop it, or sometimes an even more minimal subset of similar tools is chosen by the legal representatives without expert guidance.

Programmers need to change code, however, to create new features and then turn those into a working, executable program. Source code reviewers usually

13. Bookout et al. v. Toyota Motor Sales USA Inc. et al., No. CJ-2008-7969, verdict returned (Okla. Dist. Ct., Okla. Cty. Oct. 25, 2013).

14. Realtime Data L.L.C. v. MetroPCS Tex. LLC, No. 12cv1048-BTM (MDD) (S.D. Cal. May 25, 2012).

15. See United States v. Pirosko, 787 F.3d 358 (6th Cir. 2015); United States v. Hoeffener, 950 F.3d 1037 (8th Cir. 2020); United States v. Arumugam, 2020 WL 949937 (W.D. Wash.); United States v. Feldman, 2015 WL 248006 (E.D. Wis.).

have different needs, as their goal most often is not to create or redesign code. Instead, the focus is on being able to read existing code easily to understand the underlying algorithm, to search the code for features, and to print code for reference in a way that allows ease of reference in legal discussions. This goal requires a different set of tools that focus on reading and searching code. For example, doxygen is a tool that converts source code to non-editable local files that can be opened as web pages that include the file name and line numbers for easy reference to the original source, and dngrep is a free tool that can conduct fast searches.

It is worth noting that the term “source code” might refer to different things depending on context. To a computer scientist, the term typically refers to the programmatic source code that is compiled or interpreted to produce a program. In a legal context, however, the term commonly applies to all files that are produced as part of discovery, which can include many other related files. These often include the following: build system configurations files or scripts, which are used to compile and link together the many modules that make up a large program; code that conducts testing of the program to detect errors; and other files, such as design documents, notes about the code, or histories of when files were added during development. These additional files often provide useful context to a source code reviewer.

Reverse Engineering

Aspects of hardware and code that are protected as a trade secret are subject to reverse engineering, in which others attempt to discover the secrets through independent discovery. Kewanee Oil Co. v. Bicron Corp.16 established that trade secrets do not receive patent protection and that it is legal to reverse engineer items in the public domain.

Reverse engineering is also used as an innovative tool for chip design. In 1985, Congress passed the Semiconductor Chip Protection Act (SPCA),17 which protects semiconductor design while providing a reverse engineering provision that allows copying of the entire chip design. The law does not distinguish between the protectable and nonprotectable portions of the chip as long as the copying is for the purpose of teaching, evaluating, or analyzing the chip. The law was written to specifically protect industry practice and encourage innovation. In intellectual property litigation where chips appeared to be similar, Congress assumed that admitting expert testimony to assist in determining whether subtle changes in a chip layout (called a “mask work”) were significant would resolve the problem of distinguishing a copy from a legitimate reverse-engineering attempt in most cases.18

16. 416 U.S. 470, 94 S. Ct. 1879 (1974).

17. 17 U.S.C. §§ 901–904 (1984).

18. Altera Corp. v. Clear Logic, Inc., 424 F.3d 1079 (9th Cir. 2005).

Reverse engineering of software is often prohibited. License agreements and non disclosure agreements can limit the right to work with software to determine its function. A copyright owner can disallow making copies of a work for reverse-engineering purposes. The Digital Millennium Copyright Act (DMCA) prohibits “circumvention of ‘technological protection measures’ that ‘effectively control access’ to copyrighted works.” In some cases, however, it has been found legal to reverse engineer software in particular circumstances, primarily to provide interoperability between systems.19

Overview of Cryptography

With a basic background of computing hardware and software complete, we move toward a brief description of the cryptographic mechanisms that have become essential tools for securing information stored on computers and transmitted across networks. These algorithms are fundamental to many areas of computer science as they relate to legal issues. We introduce concepts here that appear in later sections.

Encryption is used to maintain the secrecy of information. Encryption can be a legal issue when, for example, critical evidence is held inaccessible within an encrypted file. Cryptographic hashing algorithms are widely used to preserve the integrity of data and to recognize known content accurately and easily. Cryptocurrencies are complex networked systems that can allow transfers over the internet of funds that have public value without the involvement of financial institutions; their rise has enabled new types of criminal activity and new ways to launder money.

Encryption

The most common way to ensure the confidentiality of data is to encrypt it. The net effect of encryption is to take a large secret (the data being encrypted) and reduce it to a small secret (the key used for encryption). This approach makes handling sensitive information easier, as data can be stored and transported securely on the open internet in an encrypted form. In general, if the data is obtained by others, it will remain confidential as long as the key remains a secret. The keys are transported by a separate, more secure mechanism, which is easier because they remain small regardless of the size of the data. The terms passwords and keys are similar concepts but often used differently. The term password most often refers to a collection of characters that can be entered with a keyboard (letters, numbers, and punctuation) and are human-readable. A key is a more general term as the collection can contain additional values that cannot be entered

19. Sega Enters. v. Accolade, Inc., 977 F.2d 1510 (9th Cir. 1992); Sony Computer Ent. v. Connectix, 203 F.3d 596 (9th Cir. 2000).

with a keyboard, or perhaps a collection of values that is too long for a human to reasonably enter. In other words, the set of possible keys is much larger than the set of possible passwords. Keys are best chosen randomly, but they can be derived from passwords when needed so a human can remember them more easily.

When we encrypt data, we are altering data so that it no longer has any visible structure. The process of alteration is governed by the encryption algorithm and the encryption key chosen. Taking the original bits, referred to as the plaintext, and running them through the encryption algorithm with a given key produces the ciphertext. A good encryption algorithm produces a ciphertext that appears to have been generated completely at random. When we later want to recover the plaintext, we decrypt it by running the ciphertext through the encryption algorithm with the correct key.

If the algorithm works correctly, the secrecy of the data lies entirely with the key. For proven encryption algorithms the operations are publicly available and the best-known attack is to painstakingly attempt every possible key. We say that information is secure if an adversary cannot possibly attempt all keys in any reasonable amount of time given the resources (i.e., time and money) they have available or before the information loses its value. The number of possible keys for modern algorithms is so large that it is impossible in practice to search through all of them for the correct one, as we discuss below.

Shared Key Encryption

Algorithms that use the same key for encryption and decryption are called shared key or symmetric key algorithms. The standard shared key algorithm today is the Advanced Encryption Standard (AES), which is commonly implemented in hardware on many modern computer CPUs to increase performance.

While shared key encryption is common and efficient, it does have its drawbacks. The primary one is that if you want to have secure communication with many independent people, you need to share a different key with each individual separately. A simpler approach to this key management problem is to use public key encryption, as we describe below. The advantage of shared key encryption algorithms is that they are generally much faster than public key algorithms.

Public Key Encryption

Public key encryption algorithms use a linked pair of keys: anything encrypted with one key can only be decrypted using the other key. It is called public key encryption because one of these keys—the public key—is not kept secret. The other key is the private key and is kept secret by the owner. The advantage of this approach is that everyone who wants to send a confidential message to a recipient can

use the recipient’s public key for encryption; pairwise keys between individuals are not required as they are in shared key algorithms. All messages can be decrypted by the recipient using their private key.

Public key algorithms are based on the fact that mathematically there are problems that are difficult to solve but very easy to check if a solution is correct. By analogy, it is easy to see that a jigsaw puzzle has been solved; but it takes effort to complete a puzzle given a bag of pieces. A real cryptographic example is that given two large prime numbers it is easy to multiply them together to determine if their product is equal to a third value. But given a large number alone, it is difficult to factor out the original prime numbers. One of the original public-key algorithms, called RSA after the initials of its inventors, was based on this factoring challenge. Other algorithms are based on similar mathematical problems that are easy to solve for the person who has the private key but essentially impossible to solve without it.

Because these algorithms work differently than shared key algorithms, the key size is not an accurate indicator as to how long it would take to search for and find an unknown key, and because of the mathematical operations that need to be performed, public key encryption is often much slower than shared key encryption. It is also possible that some breakthrough in mathematics or computer science will render a previously difficult to solve problem suddenly easy to solve, making any algorithm based on that problem immediately breakable. Common public-key algorithms include RSA, elliptic curves, and El Gamal.

Cryptographic signatures

Public key encryption can also provide proof of who sent a particular message, called a digital signature. The basic idea is that if someone encrypts some data with their private key, decrypting it with the known public key verifies it was encrypted by the private key owner. Proving it was encrypted with the private key is therefore equivalent to the private key holder signing it (under the assumption that the private key would never be disclosed by the owner). For convenience and speed, some digital signatures do not encrypt the entire document. Instead, a cryptographic summary of the document is computed (called a hash, as described below), and that is encrypted. The hash is small and unique to the document being signed. Not all cryptographic digital signatures operate this way, but it’s a good high-level model for understanding how digital signatures are computed.

Certificates

One problem with public key cryptography, however, is verifying who owns a particular key. This verification must be managed by computers, be performed

in milliseconds, and be mathematically based. The common solution to this is the use of cryptographic certificates, which make use of digital signatures to verify public key ownership; certificates provide much of the cryptographic foundation of the internet. Individual operating systems or software, such as web browsers, are preloaded with root certificates. These certificates are, in short, the public key of trusted organizations that serve to verify other certificates. In this hierarchical system of trust, a shopping website would create a public key to include it in a certificate of its own, and then pay one of the root certificate organizations to sign it. Consumers are trusting the root certificate organizations to sign with integrity.

For example, when a secure connection is made over the internet to shop using a web browser, the shopping website presents its own certificate to the browser. As described above, this certificate contains the public key for the shopping website and a digital signature from one of the root certificate organizations. The browser uses one of the preloaded root certificates to verify the trusted organization’s signature on the shopping website certificate. When the browser finds that the shopping site certificate is correctly signed, it then trusts that the shopping site certificate belongs to the shopping site (and not some impostor) and that it is safe to conduct transactions. The site public key is then used to exchange a shared key used during only this session for encrypting the transactions between the user’s browser and the shopping website. The actual process has a few more steps, including some intermediate certificates and keys, but conceptually the result is the same.

Cryptographic Key Sizes and Attacking Encryption

Cryptographic operations depend on the fact that the number of possible keys and hashes are so large that they represent values far outside human experience. Each key or hash is a series of individual bits, each a single 1 or 0 value, often hundreds of bits long. Each time a bit is added, the number of possible combinations for the series doubles. By the time the length of the series is 128 bits, the number of possible combinations is 2128 which is about 1038 (or 1 followed by 38 zeros). To put this in perspective, some estimates put the number of grains of sand on the earth at about 1024. In other words, there are about 100 trillion times as many possible values for a 128-bit sequence than grains of sand on earth. Moving to 256 bits or 512 bits produces combinatorial sizes that are even less comprehensible. A sequence of 256 bits produces about 1077 possibilities; for 512 bits the set of possibilities becomes about 10154.

Breaking encryption for these size sequences by trying all keys is impossible; there is not enough computational power to do so nor is it possible to create

enough computational power to do so. If keys or hashes are random then they are incredibly unlikely to be broken this way.

Attacking Encryption

It is impossible to try every possible key to attempt to decrypt something encrypted; there are too many keys to try as bounded by the laws of physics. For that reason, when users let their computers pick their keys or passwords randomly then decryption through automated guessing will not work. On the other hand, people are frequently not random when picking their own passwords, and they are often poor at securing the storage of those passwords. For example, passwords might be based on pets and birthdays and be written down in a notebook, or on a scrap of paper, or recoverable from a computer memory or disk. In these cases, there might be some hope that the password might be recovered. It is also possible to try and brute force passwords by repeated guessing. Humans often use statistically predictable patterns, and software exists that will work through trillions of combinations of statistically probable passwords to see if they create a key that can be used for decryption; this approach can be very effective for poorly chosen passwords, and very ineffective for randomly created ones.

In cases where the password is not recovered, it might be possible to get it from the user.20 Some courts have reached a variety of conclusions whether a court can issue an order to compel disclosure of a password or even compel someone to unlock a device. Some courts hold there is no Fifth Amendment violation to compel a password’s disclosure if the ownership of the device is a foregone conclusion.21 Other courts have explicitly refused to apply the foregone conclusion doctrine and denied orders to compel disclosure of passwords.22

20. Orin Kerr, Compelled Decryption and the Privilege Against Self-Incrimination, 97 Tex. L. Rev. 767 (2019).

21. See, e.g., United States v. Apple Mac Pro Comput., 949 F.3d 102 (3d Cir. 2020) (court upheld court order under the All Writs Act compelling disclosure of password under the foregone conclusion exception to the Fifth Amendment); State v. Andrews, 234 A.3d 1254 (N.J. 2020), cert. denied, 141 S. Ct. 2623 (2021) (the foregone conclusion exception allows the defendant to be compelled to communicate his memorized passcodes to the government); Commonwealth v. Jones, 481 Mass. 540 (Mass. 2019).

22. See Seo v. State, 148 N.E.3d 952 (Ind. 2020) (discussing concerns with extending the foregone conclusion exception to the Fifth Amendment in the context of compelling production of an unlocked smartphone); Commonwealth v. Davis, 220 A.3d 534 (Penn. 2019) (foregone conclusion exception to the Fifth Amendment is inapplicable to compel the disclosure of a defendant’s password to assist the government to gaining access to a computer).

Cryptographic Hash Functions

A cryptographic hash is a mathematical function that takes any digital content as input of any length and produces a short unique numeric signature as output, typically 512 bits or fewer. This reduced number of bits, which is often referred to as the hash, is like a digital summary or identifier of the content that was input. Changing any single bit in the input changes the output hash significantly and unpredictably. We can therefore use hash functions to verify the integrity of large amounts of data, at any input size, at a level of granularity involving single bits. Good hash functions have the following properties that ensure the hash is secure:

- First, given a hash, it is not possible to recover the original data. This means that you can share the hash with anyone, and they cannot tell what was used to create it. If you give them the original data, though, it is easy to run those through the hash function to ensure that they are the same. Hashing is a one-way function.

- Second, given a hash, it is virtually impossible to find any other content that produces the same hash when run through the hash function. This property is related to the size of the hash output in bits: increasing the length of the hash output increases the difficulty of finding other content.

- Third, it is very difficult to create two different digital objects that have the same hash. This task isn’t as hard as the second property, as we describe below, but it is still very hard for good hash functions. (By “hard” and “difficult” we mean that an adversary cannot acquire sufficient computing resources to achieve the desired result with even the smallest probability of success.)

Cryptographic hashes have myriad uses, the most common as digital summaries. To detect changes to a file, the hash of a file or other digital object is taken and recorded separately from the object itself. Later, the hash can be recomputed from the object or a copy of it. If the object has changed, then the hash will have changed. Similarly, no two objects will have the same hash value, as described above.

Accordingly, hash values are an important tool used in e-discovery as they provide a guarantee for the authenticity of an original data set and can be used as a digital equivalent of the Bates stamp used in paper document production.23 Amendments to Federal Rule of Evidence 902 provide a procedure to authenticate electronic documents without calling the testimony of a witness. Committee notes to the 2017 amendment specifically point to the use of hash values to self-authenticate, relying only on a certification by a qualified person that they checked the hash value of the proffered item and that it was identical to the original.

23. Managing Discovery of Electronic Information 52 (Federal Judicial Center Pocket Guide, 3d ed. 2017).

Attacking Cryptographic Hashes

There are a variety of commonly used hash functions. Some older ones, particularly MD5 and SHA-1, have been shown to be weak or broken in ways that newer hashes such as SHA-256 are not. Specifically, attackers can violate the third property above and produce multiple data files that have the same cryptographic hash.

In contrast, for cryptographic hash algorithms that have no known weakness, the chance of generating a second file that has the same hash as a given, fixed first file is vanishingly small. For example, given a file with a particular cryptographic hash value that is n bits long and violating the second principle above, one would expect to have to generate 2n candidate files before finding a match. For example, when using SHA-256, which produces a 256-bit output, one would have to generate 2256 (roughly 1076) candidate files before expecting to find a match, which is essentially impossible in practice.

The process of creating hashes is subject to the so-called birthday attack, however, which violates the third property above. This attack is so named because while the chance of any one specific person having a set birthday is 1/365 (ignoring leap years), the chances that some two people in a group of 23 having the same birthday is about 50%. In the first case, we have fixed on a single birthday date; in the second case, any matching birthday date achieves success. These are analogous to principles one and two above. Producing a large set of random digital objects will produce two objects that have the same hash more often than when fixing a file to match against. Specifically, one would expect to generate 2n/2 candidate files to find any pair that match from among all generated candidates. In the case of SHA-256, finding a matching pair requires generating 2128 (roughly 1038) candidate files in expectation, which is still impractical.

Hash values are also used by electronic service providers to detect images of apparent child pornography. They typically configure their systems to watch for such images being transmitted or stored across their networks by computing hashes of the images and comparing them against hashes known to be illegal.24 It is, however, easily possible to make alterations to the image that will not be visible but would change the hash.

Cryptocurrency

Cryptocurrencies have become the de facto standard form of conducting online payments for many illegal activities; in addition, there seems to be a growing

24. See United States v. Miller, 982 F.3d 412 (6th Cir. 2020) (analyzing Fourth Amendment concerns when Google used hash values to identify images of apparent child pornography transmitting across their network).

number of financial fraud cases involving cryptocurrency companies. In this subsection, we provide a very brief overview of how Bitcoin, the original cryptocurrency, works. There are many different cryptocurrencies that are based on a variety of cryptographic principles, but others are often similar in their design.

The fact that many cryptocurrencies have “coin” in their name is misleading. Instead of tracking individual currency units, most cryptocurrencies instead operate on the concept of a shared ledger. On this ledger there are a series of identifiers called addresses (analogous to a bank account number) that are each associated with an amount of cryptocurrency; the ledger is essentially a shared database that maps addresses to the cryptocurrency balance. This approach is as if a bank kept track of how much money is in an account without recording the serial numbers of any cash deposited and without attaching a name to the account.

Transactions cause some portion of a balance to be transferred from one address to another and recorded on the ledger. This ledger is called the blockchain and contains a history of every transaction that has ever happened in Bitcoin (or other cryptocurrency). By processing through the list of transactions listed on the blockchain, anyone can determine the balance associated with any address. In Bitcoin, each address is a public cryptographic key. Only the person who knows the corresponding private key may create transactions with that identity. Transactions are analogous to bank checks: they transfer currency controlled by one private key to the control of a different private key. One person might have control of any number of addresses. Transactions, however, are not anonymous in that they are inseparable from the addresses listed in the ledger. It is often possible to associate these Bitcoin addresses to real-world persons by finding Bitcoin transactions that are associated with real-world financial actions, like purchasing Bitcoin using a bank account or using Bitcoin to order physical items mailed to a postal address.

The major innovation of Bitcoin was to establish a mechanism by which a large group of computers could come to a consensus, without a central organizing authority, on which transactions become part of the blockchain despite some minority of participants attempting to cheat or otherwise influence the outcome. This mechanism, called mining, also creates new Bitcoin, and it incentivizes participation in the Bitcoin protocol, which helps make it resilient against attack.

The value people see in Bitcoin is that it is exceedingly difficult to alter the blockchain; to do so would require a very significant amount of computational power, in general roughly more than about half the computer power already performing mining on a chain; for the more popular cryptocurrencies it’s most often an amount out of reach of any single entity. As long as a majority of participants, as rated by computational power, behave consistently then changing the blockchain should be quite challenging and therefore secure.

For some cryptocurrencies it is possible to anonymize the relationship between currency deposited in an address and later expenditures from that address. This process is often called mixing. Because the blockchain is a public ledger, it can be used by criminal investigators to follow illegal transactions. Blockchain

analytics can become key evidence in criminal activity involving virtual currency. For example, IRS investigators analyzed the blockchain and de-anonymized Bitcoin transactions allowing for identification of hackers compromising celebrities’ Twitter accounts. The affidavit supporting the criminal complaint steps through the blockchain analysis leading to the identification of several co-conspirators.25

Networking

The internet’s core feature is the connections it creates among the world’s people, computers, and devices. The internet is supported by an enormous amount of infrastructure, including: equipment commonly used in homes and offices, such as Wi-Fi access points and cable modems; equipment deployed by internet service providers (ISP) that have home and business customers; and the connections among ISPs that connect the world together. The internet is further extended by cellular network providers, including mobile phones, radio towers, and connecting infrastructure.

It has become commonplace for people to carry mobile phones and devices at all times, and for almost all communications to be based on the internet and modern cellular infrastructure. For this reason, a great deal of evidence and legal issues are based on network communications and services. To understand modern networks and the legal issues surrounding them, it’s helpful to learn a number of fundamental concepts that we explain here.

Networking Layers

The connection of the world’s cellular carriers, ISPs, and end users as one massive system is made possible by a collection of standards called the internet protocol (IP) suite. All computers that speak IP can communicate with each other regardless of the developer and manufacturer responsible for the software or hardware. These protocols are organized as several layers. At the top layer are the applications, such as email and the web. And at the bottom are the wires and cables that carry data. In between are a series of layers that handle reliability, routing, and access control, which are all terms that we define below.

An important aspect of this design is that each layer has its own naming and addressing scheme. An address is a place on the internet that data can go to, while a name is an identifier unique to that address. For example, an email “address” is actually composed of a name (like alice) connected by an @ symbol to an address (like alice@umass.edu).

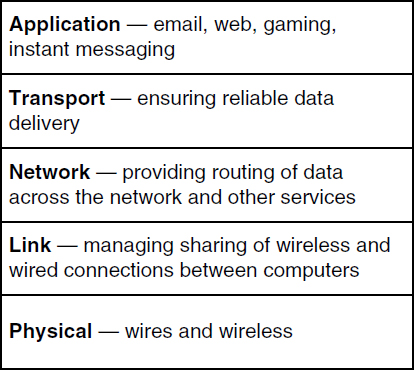

To help understand the purpose and relationship among these layers, we can make an extended analogy to the postal service. Figure 2 illustrates the five IP

25. United States v. Sheppard, 3:20-mj-70996 (N.D. Cal. 2020), https://perma.cc/5NSR-7QD3.

layers. At the top is the application layer, which consists of protocols that operate between two instances of the same application running on different desktops, laptops, or smart phones. These different devices at the edge of the internet are often called end-hosts. Email clients, web browsers, messaging programs, social media applications, and online multiplayer games and other applications each send data across the network in their own way. Application-level content very rarely is examined or modified by devices on the network. One of the reasons the internet has been able to grow to a world-wide system is that what happens on these end-hosts is largely separate from the core infrastructure. In our postal service analogy, the application layer is like letters and magazines that are mailed: although the content is important to the sender and receiver, the actions taken by the postal system are not affected by the specific words, images, or other content within the letters, and the postal system generally does not examine the content.

Below is the transport layer, which also operates across the network between the two end hosts. The transport layer corrects problems and errors that are caused by the layers below so that applications see a consistent stream of data. The specific transport-layer protocol that performs these functions is called the Transport Control Protocol (TCP). In our analogy, TCP is like one of the special services that can be purchased from the post office, such as a delivery acknowledgment. For an important letter, you might make a copy of the letter and then send the original. If you don’t receive a delivery acknowledgment after a time, you’ll send a new copy of the letter and reset your timeout for the delivery acknowledgment; at its core, the algorithm providing reliable delivery of data operates the same way.

Next, going downward, is the network layer, which is the first layer that operates on the core devices that form the internet. Whenever information leaves an end host, it is handed off to a router. Just like desktops, routers are computers. The only difference is that they are not computers that are used to run just any program; they are specialized to operate programs that route data from one end host to another end host across a building or across the world. The entire internet is composed of routers that communicate in an unbroken mesh.

Most routers have more than one or two neighboring routers, and so just like roads that intersect with other roads, routers have to be navigated from a starting end host to a destination end host. A series of protocols determine for routers which of its neighbors most quickly leads to a destination. In our analogy, routers are like post offices. When a letter leaves a home, it travels from one post office to the next, until it reaches a final post office that delivers the mail to the destination home. The network layer is responsible for assigning an IP address, and it is often critical to attributing evidence in an investigation.

The link layer manages the connections between routers (and between hosts and routers). For example, the link between a host and its wireless access point can be managed by a Wi-Fi protocol. Stretching our analogy to almost its breaking point, links are like the postal workers that are the conduits for managing the many letters at a time that go from a home to the neighborhood post office, as well as transfers along a sequence of post offices on route to the destination.

Finally, at the bottom, physical layer protocols allow hosts to send data via some physical medium, including wireless, optical fiber, or coax wire. To complete our analogy, the vans, trucks, trains, and airplanes used by the post office are the physical objects that move mail from one point to another.

IP Network and Link Layer Addresses

The two most important computer addresses that are used to move a user’s data across the internet are a computer’s IP address and the address of a computer’s network interface.

IP addresses are assigned by the local network administrator at an internet service provider (ISP). Before data can be transferred by a computer, it must obtain an IP address. They can be assigned once and remain static for the lifetime that the computer uses the ISP. Or, more typically, IP addresses are assigned dynamically by the internet service provider using the Dynamic Host Configuration Protocol (DHCP).

Network interface addresses are assigned at the factory that manufactured the radio card or Ethernet card, and they are typically 48 bits long. They are often written as colon-separated hexadecimal strings: e.g., 00:17:F2:40:F9:B2. The first 24 bits are a prefix that should identify the manufacturer; the second half should uniquely identify the hardware across the world. This value is easily

changed by the user, as we explain below. The link-layer protocols that make use of network interface addresses are called medium access control (MAC) protocols, and so most often the 48-bit values are called MAC addresses.

A person can take their laptop from one ISP to another. For example, a laptop may move from a person’s home ISP to their work ISP, and then to an internet café afterwards, all in the space of one day. Each move will cause the computer to be assigned a new IP address—however, the MAC address will stay the same throughout. A computer’s MAC address is never sent farther than a router or host one hop away, to the neighboring link-connected computers. Across the internet, it will be impossible to learn that one MAC address is behind all three IP addresses at different times.

Typically, a DHCP IP address assignment is logged, including the date and time, network interface address, and assigned IP address. The Communications Assistance for Law Enforcement Act (CALEA) asks ISPs to keep DHCP logs for 90 days, but this is not a requirement under the law. Some ISPs keep information for hours, some for longer than 90 days. Many law enforcement investigations involving a network are led by a search for the computer (and home) that has been assigned an IP address that was observed online. The ISP that assigned the computer needs to be found first, so that the DHCP logs can be requested via legal process.

Sometimes an IP address is not a completely unique identifier. There are only 3.7 billion of the shorter (version 4) IP addresses for the entire world, and they are assigned in large blocks by Internet Assigned Numbers Authority (IANA). Given the number of computers in the world, addresses are scarce. Some organizations have tens of computers yet are assigned only one address by their ISP. To get around this problem, many organizations (and home routers) make use of network address translation (NAT) boxes, which allow many computers inside a network to share a single external IP address. Figure 3 illustrates this operation.

Cloud Computing and Serverless Systems

The term cloud computing refers to a popular approach to supporting online computer services. When the web and online services first started gaining popularity, it was common for businesses to operate a computer to serve online customers on their own premises. As the customer base grows, the business would not only require more powerful computing hardware, but investments in air conditioning, physical security, electrical power, redundant hardware, and more. Further, for some businesses, demand might be extraordinarily high for one day and not most of the year; it can be challenging to expand infrastructure for that one day.

Cloud computing addresses these issues for businesses. Cloud providers build large data centers that provide power, network access, and cooling for many thousands of computers. Typically, there are staff onsite to provide security and help deal with hardware problems. The computers used in these centers are different than laptops and desktops. Called rack servers, they are shaped more like elongated pizza boxes so they can stack neatly, as shown in Figure 4. They often include remote management interfaces so that they can be configured and operated across the network without a human needing to be present, but humans are required to address hardware problems.

Source: Provided by the U.S. Department of Energy’s National Energy Technology Laboratory, https://perma.cc/VGR4-URGD.

Source: Provided by the U.S. Department of Energy’s National Energy Technology Laboratory, https://perma.cc/VGR4-URGD.When the notion of cloud computing was introduced, it was common to lease a complete virtual machine above, and this is still done. More recently, cloud providers realized that they could charge for hosting a specific program. For example, the program might accept a photographic image as input and return the text that appears in the image as output. This approach, called serverless computing, allows the cloud customer to avoid paying for an idle virtual machine. Instead, they pay for each execution of their program. The cloud provider ensures that as demand ebbs and flows, the execution of the program remains performant and reliable. Large providers of such services include Amazon Web Services, Google Cloud, and Microsoft Azure. Businesses can lease computing power from the provider and scale up or down quickly as demand for their product changes.

Cloud services are a good example of what is defined as a remote computing service (RCS) in federal law.26

Wireless Networking and Mobile Devices

Wireless networking is commonly of two primary types. Cellular networks support long-range communication between a user’s mobile device (e.g., a smart phone) and radio towers that can be miles away. Wi-Fi networks support short-range communication between a user’s mobile device and access points (AP) that can be hundreds of feet away. Bluetooth networks are similar to Wi-Fi but typically operate over an even shorter range. There are many other types of wireless networks, including satellite-based communication, that we do not discuss here.

Wireless communications are typically broadcast in nature and based on radio waves. The physics of the radio frequencies involved and the expense of the equipment determines whether the signals can permeate walls or travel long distances. For example, cellular networks are based on very large antennas situated on very large towers. Wi-Fi networks are based on small antennas affixed to boxes placed on shelves in homes. The difference explains the large geographical ranges that cellular networks can traverse compared to Wi-Fi networks. Given that wireless signals will be received by anyone within range, cryptography is typically used to keep communications confidential between the two parties communicating over the wireless channel.

26. See the definition of remote computing service, 18 U.S.C. § 2711(2).

Cellular Networks

Cellular networks are deployed across large geographic areas to allow for a user to be mobile and stay connected. Originally, cellular networks were deployed to support voice phone calls. Over time, the networks have evolved to support the data connections required by internet-enabled apps and services common on smart phones.