Reference Manual on Scientific Evidence: Fourth Edition (2025)

Chapter: Reference Guide on Statistics and Research Methods

Reference Guide on Statistics and Research Methods

DAVID H. KAYE AND HAL S. STERN

David H. Kaye, M.A., J.D., is Regents Professor Emeritus, Arizona State University Sandra Day O’Connor College of Law and School of Life Sciences, and Distinguished Professor of Law and Academy Professor Emeritus, Pennsylvania State University School of Law.

Hal S. Stern, Ph.D., is Distinguished Professor, Department of Statistics, University of California, Irvine.

Authors’ Note: Research for this reference guide was completed in 2023. Professor David A. Freedman co-authored the first three editions of this reference guide. His writing and thinking are evident throughout this edition as well.

CONTENTS

Admissibility and Weight of Statistical Studies

Varieties and Limits of Statistical Expertise

Procedures That Enhance Statistical Testimony

Maintaining Professional Autonomy

Disclosing Limitations and Other Analyses

Disclosing Data and Analytical Methods Before Trial

How Have the Data Been Collected?

Is the Study Designed to Investigate Causation?

Randomized Controlled Experiments

Descriptive Surveys and Censuses

What Method Is Used to Select the Units?

Of the Units Selected, Which Provide Measurements?

Is the Measurement Process Reliable?

Is the Measurement Process Valid?

How Have the Data Been Presented?

Are Rates or Percentages Properly Interpreted?

How Big Is the Base of a Percentage?

Have Appropriate Benchmarks Been Provided?

Have the Data Collection Procedures Changed?

Are the Categories Appropriate?

Is an Appropriate Measure of Association Used?

Does a Graph Portray Data Fairly?

How Are Distributions Displayed?

Is an Appropriate Measure Used for the Center of a Distribution?

Is an Appropriate Measure of Variability Used?

What Inferences Can Be Drawn from the Data?

What Estimator Should Be Used?

What Is the Confidence Interval?

The normal curve and large samples

What Are the Technical and Interpretive Difficulties with Confidence Intervals?

p-values, Significance Levels, and Hypothesis Tests

Is a Difference Statistically Significant?

Recent Emphasis on the Limitations of p-values

Is the Sample Statistically Significant?

What Is the Power of the Test?

How Many Tests Have Been Done?

What Are the Rival Hypotheses?

Bayesian Statistical Methods and Posterior Probabilities

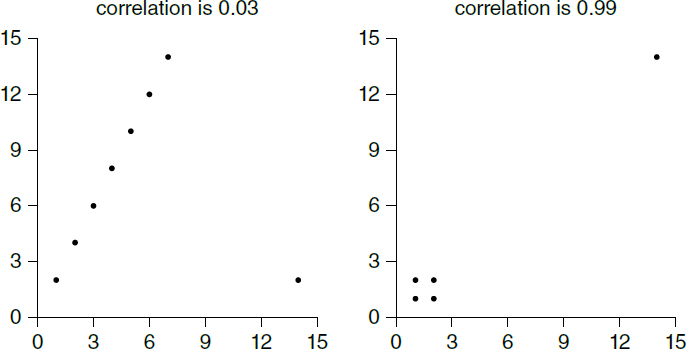

Do Outliers Influence the Correlation Coefficient?

Does a Confounding Variable Influence the Coefficient?

Data Science and Statistical Machine Learning

What Statistical Questions Arise with Machine Learning Studies?

Is the Dataset Appropriate and of Sufficient Quality?

Is the Predictor or Classifier Robust?

Is the Predictor or Classifier Too Opaque?

Appendix: Conditional Probability and Bayes’ Rule

What Do Probabilities Apply To?

What Are Conditional Probabilities?

References on Statistics and Research Methods

FIGURES

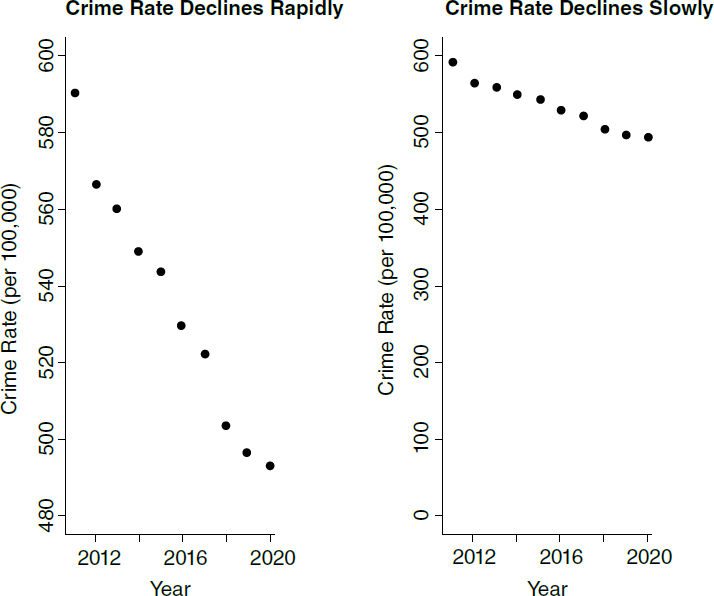

1 and 2. Manipulating the scale of a graph

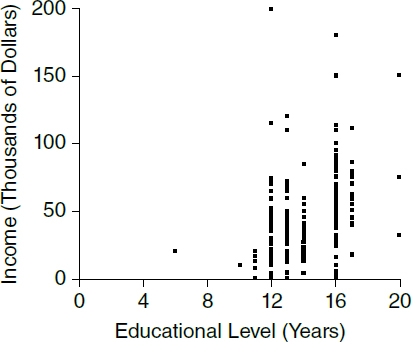

5. Plotting points in a scatter diagram

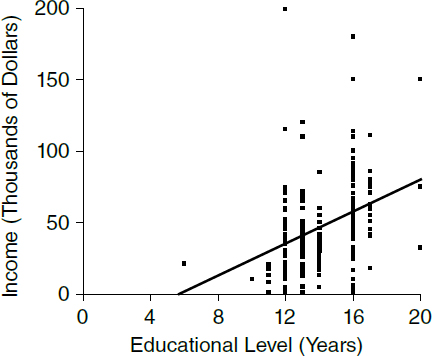

6. Scatter diagram for income and education: men ages 25 to 34 in Kansas

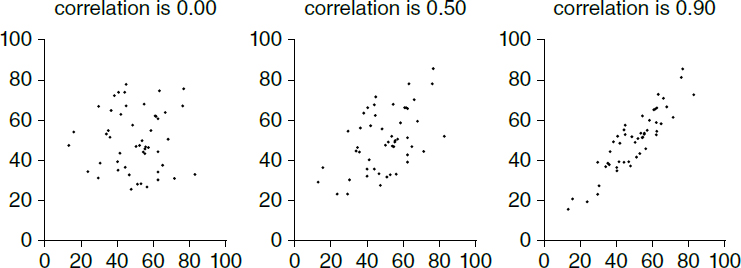

7. The correlation coefficient measures the sign of a linear association, and its strength

8. A strong nonlinear association with a correlation coefficient close to zero

9. The correlation coefficient can be distorted by outliers

10. The regression line for income on education and its estimates

11. Scatter diagram for income and education, with the regression line indicating the trend

TABLES

1. Test Results for Cartridge-case Comparisons

2. Data Used by a Defendant to Refute Plaintiff’s False Advertising Claims

3. Home Pregnancy Test Results

Introduction

Statistical assessments are prominent in many kinds of legal cases, including antitrust, employment discrimination, toxic torts, and voting rights cases. This reference guide describes the elements of statistical reasoning. We hope the explanations will help judges and lawyers to understand statistical terminology, to see the strengths and weaknesses of statistical arguments, and to apply relevant legal doctrine. The guide is organized as follows:

- This introduction provides an overview of the field, discusses the admissibility of statistical studies, and offers some suggestions about procedures that encourage the best use of statistical evidence.

- The section titled “How Have the Data Been Collected?” addresses data collection. It explains why the design of a study is the most important determinant of its quality. The section compares experiments with observational studies and surveys with censuses, indicating when the various kinds of study are likely to provide useful results.

- The section titled “How Have the Data Been Presented?” discusses the art of summarizing data. This section considers the mean, median, and standard deviation. These are basic descriptive statistics, and most statistical analyses use them as building blocks. This section also discusses patterns in data that are brought out by graphs, percentages, and tables.

- The section titled “What Inferences Can Be Drawn from the Data?” describes the logic of statistical inference, emphasizing foundations and disclosing limitations. This section covers estimation, standard errors and confidence intervals, p-values, and hypothesis tests.

- The section titled “Correlation and Regression” shows how associations can be described by scatter diagrams, correlation coefficients, and regression lines. Regression is often used in attempts to infer causation from association. This section explains the technique, indicating the circumstances under which it and other statistical models are likely to succeed—or fail.

- Advances in computing speed and the availability of large datasets have made it possible to fit models of greater complexity than those described in the section titled “Correlation and Regression.” The section titled “Data Science and Statistical Machine Learning” describes the developing fields of “data science” and “machine learning” and statistical issues that affect the confidence one can have in the output of machine-learning techniques.

- An appendix discusses the scope of the theory of probability, conditional probabilities, Bayes’ rule, and perspectives on statistical inference.

- The glossary defines statistical terms that may be encountered in litigation, including ones that do not appear in the body of the guide.

Admissibility and Weight of Statistical Studies

Statistical studies suitably designed to address a material issue generally will be admissible under the Federal Rules of Evidence. The hearsay rule rarely is a serious barrier to the presentation of statistical studies, because such studies may be offered to explain the basis for an expert’s opinion or may be admissible under the learned treatise exception to the hearsay rule.1 Most statistical methods applied in litigation are described in textbooks or journal articles and are capable of producing useful results when properly applied. As such, these methods generally satisfy important aspects of the “scientific knowledge” requirement in Daubert v. Merrell Dow Pharmaceuticals, Inc.2 However, a particular study may use a method that is entirely appropriate but so poorly executed that it should be inadmissible under Federal Rules of Evidence 403 and 702.3 Or, the method may be inappropriate for the problem at hand and thus lack the “fit” referred to in Daubert.4 Or the study might rest on data of the type not reasonably relied on by statisticians or substantive experts and hence not be suitable as a basis for an opinion under Rule 703. Often, however, the battle over statistical evidence concerns weight or sufficiency rather than admissibility.

Varieties and Limits of Statistical Expertise

For convenience, the field of statistics may be divided into three subfields: probability theory, theoretical statistics, and applied statistics. Probability theory is the mathematical study of outcomes that are governed, at least in part, by chance. Theoretical statistics is about understanding the properties of statistical procedures, including error rates; probability theory plays a key role in this endeavor. Applied statistics draws on both these fields to develop techniques for collecting or analyzing particular types of data.

Statistical expertise is not confined to witnesses with degrees in statistics. Because statistical reasoning underlies many kinds of empirical research, scholars in a variety of fields (including biology, business, economics, epidemiology, medicine, political science, psychology, and sociology) are exposed to statistical ideas, with an emphasis on the methods most important to the discipline. The diffusion of statistical concepts and methods across so many fields raises the question of who is qualified to conduct and testify to statistical assessments: a statistical methodologist, a substantive scientist, or both? Much depends on context.

1. See generally 2 McCormick on Evidence §§ 321, 324.3 (Robert P. Mosteller ed., 8th ed. 2020). Studies published by government agencies also may be admissible as public records. Id. § 296.

2. 509 U.S. 579, 589–90 (1993).

3. See Kumho Tire Co. v. Carmichael, 526 U.S. 137, 152 (1999) (suggesting that the trial court should “make certain that an expert, whether basing testimony upon professional studies or personal experience, employs in the courtroom the same level of intellectual rigor that characterizes the practice of an expert in the relevant field”).

4. Daubert, 509 U.S. at 591.

If the study involves assembling and then analyzing case-specific data, the choice of which data to examine and how best to model a particular process could require both subject-matter and general statistical expertise. The two types of expertise might be combined in a single individual. A labor economist, for example, should be able to supply a definition of the relevant labor market from which an employer draws its employees. This economist also might be sufficiently educated in statistical tests for group differences to compare the race of new hires to the racial composition of the labor market. When the analysis is as straightforward as the comparison of two proportions, a single substantive expert may suffice.5 But further analysis of the possible reasons for the racial disparity might require input from an expert with an understanding of more advanced methods. This expert might be a statistician or an econometrician, or a scientist or engineer who works with other data in a completely different field of study.
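The comparison of two proportions mentioned above can be sketched in a few lines. This is only an illustration under stated assumptions: the counts are hypothetical, the function name `two_proportion_z` is invented for this sketch, and the test relies on the large-sample normal approximation with a pooled standard error. If the labor-market composition were treated as a fixed benchmark rather than a sample, a one-sample test would be the more natural choice.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-sample z statistic and two-sided p-value for p1 - p2.

    Assumes independent samples large enough for the normal approximation.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)  # common proportion under the null hypothesis
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p1, p2, z, p_value


# Hypothetical counts (not from any case): 30 of 200 new hires belong to the
# group of interest, versus 250 of 1,000 people sampled from the labor market.
p_hires, p_market, z, p_value = two_proportion_z(30, 200, 250, 1000)
print(f"hires: {p_hires:.3f}, market: {p_market:.3f}, z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value here would indicate only that the disparity is hard to attribute to chance; it would not identify the reasons for the disparity, which is where the more advanced methods discussed above come in.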

The critical question is whether the individual has the education and experience to determine which statistical techniques are appropriate for the task at hand and to apply such techniques with an appreciation of their limitations. Experts who specialize in using statistical methods, and whose professional careers demonstrate this orientation, are most likely to use appropriate procedures and correctly interpret the results. To ascertain the extent to which an expert has this orientation and acumen, one can look to formal education, professional accomplishments, and reputation in the pertinent community of experts. Has the individual studied quantitative methods? Taught them? Used them in research? Which ones? If the expert is or was an academic professional, a university teaching portfolio with courses on statistical methods is a good sign. Ideally, the publication and research record will include studies that use or describe the same or similar methods as those applied (or applicable) to the case at bar.6 Invitations from mainstream journal editors to review articles submitted for publication, from academic conference organizers to present research involving statistics, and from government regulatory and research agencies to serve on expert panels that evaluate statistical work are further indications of recognized expertise within a statistical discipline. General membership in most scientific and professional societies requires no special accomplishments, but certain designations, prizes, or awards from such organizations for scholarship are a sign that an expert’s work has had an impact. Of course, these factors are merely indicia of degrees of expertise and specialization. Highly competent work can be done by individuals with shorter curricula vitae.

5. Of course, the case could be presented in the form of interlocking testimony from two experts: the labor economist followed by any expert with the appropriate expertise in the method for making the statistical comparison. The substantive knowledge may be of lesser value in selecting a statistical model. Various models might be consistent with the substantive knowledge, and there may be purely statistical criteria for choosing among them.

6. Academic experts are likely to have a long list of publications, but length alone is not the best indication of pertinent expertise. Not all publishers are equal, and every field has a relatively small number of top-tier journals.

Again, not every case involving computations of probability or statistics necessitates a highly skilled statistical specialist. Medical practitioners and forensic scientists who are not statistical specialists often make statistical assessments of evidence or rely on and present the results of statistical studies. In these situations, it is important to ensure that the witnesses do not exceed the bounds of their statistical expertise. The scientist or practitioner might lack basic information about the studies underlying their testimony. State v. Garrison7 illustrates the problem. In this murder prosecution involving bitemark evidence, a dentist was allowed to testify that “the probability factor of two sets of teeth being identical in a case similar to this is, approximately, eight in one million,” even though “he was unaware of the formula utilized to arrive at that figure other than that it was ‘computerized.’”8 Likewise, laboratory analysts or examiners may have only a limited understanding of the foundations of automated systems for comparing patterns within DNA samples, toolmarks, fingerprints, voice recordings, and the like. Once the formulas or methods have been shown to work well, and when their limitations and proper use are known, the practitioners may be qualified to operate the statistical machinery, as it were, without an in-depth understanding of the routinized or automated statistical or probabilistic method. But the operational training may not qualify them to explain the developmental research. They may have to limit their statistical testimony to an explanation of how they arrived at their estimates and to statements of the extent to which software or hardware is used in practice and whether there are publications on its validity.

Procedures That Enhance Statistical Testimony

Maintaining Professional Autonomy

Ideally, experts who conduct research in the context of litigation should proceed with the same objectivity that would be required in other professional contexts. Thus, experts who testify (or who supply results used in testimony) should conduct the analysis required to address, in a professionally responsible fashion, the issues posed by the litigation.9 Questions about the freedom of inquiry accorded to testifying experts, as well as the scope and depth of their investigations, may reveal some of the limitations to the testimony.

7. 585 P.2d 563 (Ariz. 1978).

8. Id. at 566, 568. For other examples, see David H. Kaye et al., The New Wigmore: A Treatise on Evidence: Expert Evidence § 12.2 (2d ed. 2011).

9. See Nat’l Research Council Panel, The Evolving Role of Statistical Assessments as Evidence in the Courts 164 (Stephen E. Fienberg ed., 1989) [hereinafter NRC Panel] (recommending that the expert be free to consult with colleagues who have not been retained by any party to the litigation and that the expert receive a letter of engagement providing for these and other safeguards).

Disclosing Limitations and Other Analyses

Statisticians analyze data using a variety of methods. To permit a fair evaluation of the analysis that is eventually settled on, the testifying expert can be asked how that approach was developed, whether alternative approaches were considered, and, if so, what the results were.10 Ethical guidelines for statisticians require them to disclose known and suspected limitations in the data and its analysis.11

Disclosing Data and Analytical Methods Before Trial

The collection of data often is expensive and subject to errors and omissions. Moreover, careful exploration of the data can be time-consuming. To minimize debates at trial over the accuracy of data and the choice of analytical techniques, pretrial-discovery procedures should be used, particularly with respect to the quality of the data and the method of analysis.12 In some cases, these could include requirements for specifying in detail the study design and analysis that are planned before data collection begins.13

10. Id. at 167; cf. Edith Beerdsen, Litigation Science After the Knowledge Crisis, 106 Cornell L. Rev. 529 (2021) (discussing responses to the “replication crisis” in the academic biomedical and behavioral science publication process and suggesting pretrial procedures to make the exercise of “analytical flexibility” in “litigation science” more transparent); Yoav Benjamini, Selective Inference: The Silent Killer of Replicability, 2 Harv. Data Sci. Rev. (2020), https://doi.org/10.1162/99608f92.fc62b261 (noting the problem of presenting or emphasizing selected findings within scientific publications).

11. ASA Comm. on Professional Ethics, Ethical Guidelines for Statistical Practice, Feb. 2022, at 3 & 4, https://perma.cc/P4P6-JU6C. Invoking an attorney’s professional duty not to intentionally mislead the courts on the facts or the law, the Panel on Statistical Assessments as Evidence insisted that “it is not appropriate for the attorney to seek to avoid such revelation [of alternative forms of data and analyses] by consulting a series of experts without revealing to the experts ultimately retained any prior history of the involvement of other experts in the litigation.” NRC Panel, supra note 9, at 167.

12. See The Special Comm. on Empirical Data in Legal Decision Making, Recommendations on Pretrial Proceedings in Cases with Voluminous Data, reprinted in NRC Panel, supra note 9, app. F; David H. Kaye, Improving Legal Statistics, 24 Law & Soc’y Rev. 1255 (1990).

13. Drawing on “open science” practices, commentators have proposed adaptations of practices for “registration” of academic research studies. See Beerdsen, supra note 10; section titled “Recent Emphasis on the Limitations of p-values” below.

How Have the Data Been Collected?

The interpretation of data often depends on understanding “study design”—the plan for a statistical study and its implementation.14 Different designs are suited to answering different questions. Also, flaws in the data can undermine any statistical analysis, and data quality is often determined by study design.

In many cases, statistical studies are used to show causation. Do food additives cause cancer? Does capital punishment deter crime? Would additional disclosures in a securities prospectus cause investors to behave differently? The design of studies to investigate causation is the first topic of this section.15

Sample data can be used to describe a population. The population is the whole class of units that are of interest; the sample is the set of units chosen for detailed study. Inferences from the part to the whole are justified when the sample is representative. Sampling is the second topic of this section.
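The role of representativeness can be illustrated with a small simulation. Everything in this sketch is assumed for illustration: the population of incomes is synthetic, and the "unrepresentative" sample is deliberately extreme (the 1,000 highest incomes) to make the contrast visible.

```python
import random
from statistics import mean

rng = random.Random(0)  # fixed seed so the sketch is reproducible

# Synthetic population of 100,000 annual incomes (hypothetical values).
population = [rng.gauss(50_000, 15_000) for _ in range(100_000)]

# A simple random sample: every unit has the same chance of selection.
random_sample = rng.sample(population, 1_000)

# A deliberately unrepresentative sample: only the 1,000 highest incomes.
top_sample = sorted(population)[-1_000:]

pop_mean = mean(population)
random_sample_mean = mean(random_sample)
top_sample_mean = mean(top_sample)

print(f"population mean:        {pop_mean:,.0f}")
print(f"random-sample mean:     {random_sample_mean:,.0f}")
print(f"top-1,000 sample mean:  {top_sample_mean:,.0f}")
```

The random sample's mean should land close to the population mean, while the selected sample's mean says little about the population as a whole.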

Finally, issues associated with the reliability and accuracy of collected data will be considered. Measurement error should be assessed and the likely impact of errors considered. Data quality is the third topic of this section.

All the sections concern the study of “variables.” In statistics, a variable is a characteristic of the units in a study. With a study of people, the unit of analysis is the person, and the variables describe people. Two such variables would be income (dollars per year) and educational level (years of schooling completed). With a study of school districts, the unit of analysis is the district. Typical variables include average family income of district residents and average test scores of students in the district.

Variables may be related to one another in various ways. Many studies examine whether a variable or group of variables, known as independent variables, are related to an outcome or dependent variable. For example, census data can be analyzed to determine whether people who complete more years of school tend to have higher incomes later in life. Educational level would be the independent variable, and annual income the dependent variable. In a study of smoking and lung cancer, the independent variable could be smoking (perhaps measured by the number of cigarettes smoked per day), and the dependent variable could mark the presence or absence of lung cancer.
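As a concrete illustration of units and variables, the sketch below treats each person as a unit with two variables and summarizes the dependent variable (income) at each level of the independent variable (years of schooling). The five records are invented for illustration and are far too few to support any conclusion.

```python
from collections import defaultdict
from statistics import mean

# Each dictionary is one unit of analysis (a person); keys are variables.
# All values are hypothetical.
people = [
    {"education_years": 12, "income": 38_000},
    {"education_years": 12, "income": 42_000},
    {"education_years": 16, "income": 61_000},
    {"education_years": 16, "income": 55_000},
    {"education_years": 18, "income": 72_000},
]

# Group the dependent variable by the value of the independent variable.
incomes_by_education = defaultdict(list)
for person in people:
    incomes_by_education[person["education_years"]].append(person["income"])

mean_income = {
    years: mean(incomes)
    for years, incomes in sorted(incomes_by_education.items())
}
print(mean_income)
```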

14. For introductory treatments of data collection, see, for example, David Freedman et al., Statistics (4th ed. 2007); Darrell Huff, How to Lie with Statistics (1993); David S. Moore & William I. Notz, Statistics: Concepts and Controversies (10th ed. 2019); Hans Zeisel, Say It with Figures (6th ed. 1985); Hans Zeisel & David Kaye, Prove It with Figures: Empirical Methods in Law and Litigation (1997).

15. See also Steve C. Gold et al., Reference Guide on Epidemiology, “The Different Kinds of Epidemiologic Studies” section, in this manual.

Is the Study Designed to Investigate Causation?

Types of Studies

When causation is the issue, anecdotal evidence can be brought to bear. So can observational studies or controlled experiments. Anecdotal reports may be of value, but they are ordinarily more helpful in generating lines of inquiry than in proving causation. In medicine, evidence from clinical practice can be a good starting point for discovery of cause-and-effect relationships, but the anecdotal experience of practitioners is not definitive.16 Observational studies can establish that one factor is associated with another, but work is needed to bridge the gap between association and causation. Randomized controlled experiments are ideally suited for demonstrating causation.

Anecdotal evidence usually amounts to reports that events of one kind are followed by events of another kind. Typically, the reports are not even sufficient to show association, because there is no comparison group. For example, some children who live near power lines develop leukemia. Does exposure to electrical and magnetic fields cause this disease? The anecdotal evidence is not compelling because leukemia also occurs among children without exposure.17 It is necessary to compare disease rates among those who are exposed and those who are not. If exposure causes the disease, the rate should be higher among the exposed and lower among the unexposed. That would be association.
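The comparison of rates described above amounts to simple arithmetic. In the sketch below, the counts are hypothetical and are not taken from any study of power lines; the relative risk and rate difference are two common ways to summarize the comparison.

```python
def rate(cases, total):
    """Proportion of units in a group that developed the disease."""
    return cases / total


# Hypothetical counts, chosen only to illustrate the calculation.
exposed_cases, exposed_total = 12, 10_000
unexposed_cases, unexposed_total = 45, 40_000

rate_exposed = rate(exposed_cases, exposed_total)
rate_unexposed = rate(unexposed_cases, unexposed_total)

rate_difference = rate_exposed - rate_unexposed
relative_risk = rate_exposed / rate_unexposed

print(f"exposed rate:    {rate_exposed:.5f}")
print(f"unexposed rate:  {rate_unexposed:.5f}")
print(f"rate difference: {rate_difference:.6f}")
print(f"relative risk:   {relative_risk:.3f}")
```

A relative risk above 1 would show association only. Whether it reflects causation depends on whether the two groups are otherwise comparable.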

16. Consequently, many courts have suggested that attempts to infer causation from anecdotal reports are inadmissible as unsound methodology under Daubert v. Merrell Dow Pharmaceuticals, Inc., 509 U.S. 579 (1993). See, e.g., Miller v. Pfizer, Inc., 356 F.3d 1326, 1331 (10th Cir. 2004) (affirming the district court’s exclusion in part because “placing substantial emphasis on a few challenge-dechallenge-rechallenge studies and case reports is not a generally accepted methodology”); Hendrix ex rel. G.P. v. Evenflo Co., Inc., 609 F.3d 1183 (11th Cir. 2010) (“Case studies and clinical experience, used alone and not merely to bolster other evidence, are . . . insufficient to show general causation.”); cf. Matrixx Initiatives, Inc. v. Siracusano, 563 U.S. 27, 44 (2011) (concluding that adverse-event reports combined with other information could be of concern to a reasonable investor and therefore subject to a requirement of disclosure under SEC Rule 10b-5, but stating that “the mere existence of reports of adverse events . . . says nothing in and of itself about whether the drug is causing the adverse events”). Other courts are more open to “differential diagnoses” based primarily on timing. E.g., Best v. Lowe’s Home Ctrs., Inc., 563 F.3d 171 (6th Cir. 2009) (reversing the exclusion of a physician’s opinion that exposure to propenyl chloride caused a man to lose his sense of smell because of the timing in this one case and the physician’s inability to attribute the change to anything else).

17. See Nat’l Research Council, Possible Health Effects of Exposure to Residential Electric and Magnetic Fields (1997). There are problems in measuring exposure to electromagnetic fields, and results are inconsistent from one study to another. For such reasons, the epidemiologic evidence for an effect on health is inconclusive. Nat’l Cancer Inst., Electromagnetic Fields and Cancer, May 30, 2022 (“Studies have examined associations of these cancers with living near power lines, with magnetic fields in the home, and with exposure of parents to high levels of magnetic fields in the workplace. No consistent evidence for an association between any source of non-ionizing EMF and cancer has been found.”).

The next issue is crucial: Exposed and unexposed people may differ in ways other than the exposure they have experienced. For example, children who live near power lines could come from poorer families and be more at risk from other environmental hazards. Such differences can create the appearance of a cause-and-effect relationship. Other differences can mask a real relationship. Cause-and-effect relationships often are quite subtle, and carefully designed studies are needed to draw valid conclusions.

An epidemiological classic makes the point. At one time, it was thought that lung cancer was caused by fumes from tarring the roads, because many lung cancer patients lived near roads that recently had been tarred. This is anecdotal evidence. But the argument is incomplete. For one thing, most people—whether exposed to asphalt fumes or unexposed—did not develop lung cancer. A comparison of rates was needed. Epidemiologists found that exposed persons and unexposed persons suffered from lung cancer at similar rates: Tar was probably not the causal agent. Exposure to cigarette smoke, however, turned out to be strongly associated with lung cancer. This study, in combination with later ones, made a compelling case that smoking cigarettes is the main cause of lung cancer.18

A good study design compares outcomes for subjects who are exposed to some factor (the treatment group) with outcomes for other subjects who are not exposed (the control group). With comparison groups, there is another important distinction, between controlled experiments and observational studies. In a controlled experiment, the investigators decide which subjects will be exposed and which subjects will be in the control group. In observational studies, the researchers do not determine which subjects are exposed; often, the subjects themselves choose their exposures. Because of self-selection or for other reasons, the treatment and control groups are likely to differ with respect to influential factors other than the ones of primary interest. These other factors are called lurking variables or confounding variables.19 A confounding variable can be correlated with the independent variable and act causally on the dependent variable. When that happens, the confounder, not the independent variable, could be responsible for differences seen on the dependent variable. With the health effects of power lines, family background is a possible confounder; so is exposure to other hazards. Many confounders have been proposed to explain the association between smoking and lung cancer, but careful epidemiological studies have ruled them out, one after the other.

18. Richard Doll & A. Bradford Hill, A Study of the Aetiology of Carcinoma of the Lung, 2 Brit. Med. J. 1271 (1952), https://doi.org/10.1136/bmj.2.4797.1271. This was a matched case-control study. Cohort studies soon followed. See Gold et al., supra note 15. For a review of the evidence on causation, see 38 Int’l Agency for Research on Cancer (IARC), World Health Org., IARC Monographs on the Evaluation of the Carcinogenic Risk of Chemicals to Humans: Tobacco Smoking (1986) (updated 100E, A Review of Human Carcinogens: Personal Habits and Indoor Combustions 43–211 (2012)).

19. Epidemiologists sometimes limit “confounding” to “a bias due to the existence of a common cause of exposure and outcome” and define “selection bias” as “bias by [selecting units for study based] on common effects of otherwise unrelated variables.” Stephen R. Cole et al., Illustrating Bias Due to Conditioning on a Collider, 39 Int’l J. Epidemiology 417, 420 (2010), https://doi.org/10.1093/ije/dyp334. The distinction can be helpful in spotting different threats to causal inference, but this section uses the terms more loosely.

Confounding remains a problem even for the best observational research. For example, women with herpes are more likely to develop cervical cancer than other women. Some investigators concluded that herpes caused cancer: In other words, they thought the association was causal. Later research showed that the primary cause of cervical cancer was human papilloma virus (HPV). Herpes was a marker of sexual activity. Women who had multiple sexual partners were more likely to be exposed not only to herpes but also to HPV. The association between herpes and cervical cancer was due to other variables.20
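A stylized table of counts shows how such an association can arise without any causal effect. The numbers below are invented for illustration and are not drawn from the herpes and HPV literature. They are constructed so that, within each HPV group, women with and without herpes have the same cancer risk, yet the crude comparison that ignores HPV shows a strong association.

```python
# (hpv_status, herpes_status) -> (cancer cases, number of women)
# All counts are hypothetical.
counts = {
    ("hpv+", "herpes+"): (80, 800),
    ("hpv+", "herpes-"): (20, 200),
    ("hpv-", "herpes+"): (2, 200),
    ("hpv-", "herpes-"): (8, 800),
}


def risk(herpes, hpv=None):
    """Cancer risk for a herpes group, optionally restricted to one HPV stratum."""
    cases = total = 0
    for (hpv_status, herpes_status), (c, n) in counts.items():
        if herpes_status == herpes and (hpv is None or hpv_status == hpv):
            cases += c
            total += n
    return cases / total


# Crude comparison, ignoring HPV status.
crude_relative_risk = risk("herpes+") / risk("herpes-")

# Comparison within each HPV stratum.
stratum_relative_risk = {
    hpv: risk("herpes+", hpv) / risk("herpes-", hpv) for hpv in ("hpv+", "hpv-")
}

print(f"crude relative risk: {crude_relative_risk:.2f}")
print(f"within-stratum relative risks: {stratum_relative_risk}")
```

In this constructed example the crude relative risk is well above 1 only because herpes is more common among women with HPV. Real data are rarely this clean, and stratification can adjust only for confounders that have been identified and measured.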

Randomized Controlled Experiments

In randomized controlled experiments, investigators assign subjects to treatment or control groups at random. The groups are therefore likely to be comparable, except for the treatment. This minimizes the role of confounding. Minor imbalances will remain, owing to the play of random chance; the likely effect on study results can be assessed by statistical techniques.21 The bottom line is that causal inferences based on well-executed randomized experiments are generally more secure than inferences based on well-executed observational studies.
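Random assignment itself is mechanically simple, as the following sketch suggests. The subjects and their ages are synthetic, and age stands in for any background characteristic that might otherwise confound the comparison.

```python
import random
from statistics import mean

rng = random.Random(42)  # fixed seed so the sketch is reproducible

# Synthetic subjects: one hypothetical covariate (age) per subject.
ages = [rng.randint(30, 80) for _ in range(2_000)]

# Shuffle subject indices and split them in half: the first half receives
# the treatment, the second half serves as the control group.
subject_ids = list(range(len(ages)))
rng.shuffle(subject_ids)
treatment_ids = subject_ids[:1_000]
control_ids = subject_ids[1_000:]

mean_age_treatment = mean(ages[i] for i in treatment_ids)
mean_age_control = mean(ages[i] for i in control_ids)

print(f"mean age, treatment: {mean_age_treatment:.2f}")
print(f"mean age, control:   {mean_age_control:.2f}")
```

Because chance alone decides who is treated, the two groups should have similar average ages, and the same holds for characteristics the investigators never measured. The small remaining imbalance is the "play of random chance" that statistical techniques are designed to assess.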

The following example should help bring the discussion together. Today, we know that taking aspirin helps prevent heart attacks. But initially, there was some controversy. People who take aspirin rarely have heart attacks. This is anecdotal evidence for a protective effect, but it proves almost nothing. After all, few people have frequent heart attacks, whether or not they take aspirin regularly. A good study compares heart-attack rates for two groups: people who take aspirin (the treatment group) and people who do not (the controls). An observational study would be easy to do, but in such a study the aspirin-takers are likely to be different from the controls. Indeed, they are likely to be sicker—that is why they are taking aspirin. The study would be biased against finding a protective effect.

20. For additional examples and further discussion, see David A. Freedman, From Association to Causation: Some Remarks on the History of Statistics, 14 Stat. Sci. 243 (1999). Some studies find that herpes is a “cofactor,” which increases risk among women who are also exposed to HPV. Only certain strains of HPV are carcinogenic.

21. Randomization of subjects to treatment or control groups puts statistical tests of significance on a secure footing. See section titled “What Inferences Can Be Drawn from the Data?” below.

Randomized experiments are harder to do, but they provide better evidence.22 The experiments demonstrate the protective effect.23

In summary, data from a treatment group without a control group generally reveal very little and can be misleading. Comparisons are essential. If subjects are assigned to treatment and control groups at random, a difference in the outcomes between the two groups can usually be accepted, within the limits of statistical error,24 as a good measure of the treatment effect. However, if the groups are created in any other way, differences that existed before treatment may contribute to differences in the outcomes or mask differences that otherwise would become manifest. Observational studies succeed to the extent that the treatment and control groups are comparable—apart from the treatment.
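The contrast between the two designs in the aspirin example can be made concrete with a simulation. Every parameter below is an assumption chosen for illustration, not an estimate from any study: 30% of people are "sick," sick people have a higher baseline risk, aspirin is assumed to cut risk by 30%, and in the observational setting sick people are far more likely to take aspirin.

```python
import random


def simulate(n, randomized, rng):
    """Return heart-attack rate among aspirin takers minus rate among non-takers."""
    # aspirin status -> [heart attacks, people]
    counts = {True: [0, 0], False: [0, 0]}
    for _ in range(n):
        sick = rng.random() < 0.30
        if randomized:
            # Investigators assign aspirin by chance, ignoring health status.
            aspirin = rng.random() < 0.50
        else:
            # Self-selection: sicker people are more likely to take aspirin.
            aspirin = rng.random() < (0.80 if sick else 0.20)
        risk = 0.20 if sick else 0.05  # assumed baseline risks
        if aspirin:
            risk *= 0.70  # assumed protective effect
        attack = rng.random() < risk
        counts[aspirin][0] += attack
        counts[aspirin][1] += 1
    return counts[True][0] / counts[True][1] - counts[False][0] / counts[False][1]


rng = random.Random(1)  # fixed seed so the sketch is reproducible
observational_difference = simulate(100_000, randomized=False, rng=rng)
randomized_difference = simulate(100_000, randomized=True, rng=rng)

print(f"observational difference: {observational_difference:+.4f}")
print(f"randomized difference:    {randomized_difference:+.4f}")
```

Under these assumptions the observational comparison makes aspirin look harmful, because aspirin takers are disproportionately sick, while the randomized comparison recovers the protective effect that was built into the simulation.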

Observational Studies

The bulk of the statistical studies seen in court are observational, not experimental. Take the question of whether capital punishment deters murder. To conduct a randomized controlled experiment, people would need to be assigned randomly to a treatment group or a control group. People in the treatment group would know they were subject to the death penalty for murder; the controls would know that they were exempt. Conducting such an experiment is not possible.

Many studies of the deterrent effect of the death penalty have been conducted, all observational, and some have attracted judicial attention. Researchers have catalogued differences in the incidence of murder in states with and without the death penalty and have analyzed changes in homicide rates and execution rates over the years. When reporting on such observational studies, investigators may speak of “control groups” (e.g., the states without capital punishment) or claim they are “controlling for” confounding variables by statistical methods such as multiple regression.25 However, association is not causation. The causal inferences that can be drawn from analysis of observational data—no matter how complex the statistical technique—usually rest on a foundation that is less secure than that provided by randomized controlled experiments.

22. One important feature of a clinical trial is “blinding” to prevent unconscious bias. In a “double blind” trial, neither the patients nor the clinicians ascertaining the outcomes know who received the treatment and who did not. This prevents the expectations of the subjects and the experimenters from systematically affecting the observed outcomes in one group relative to the other.

23. But randomized experiments also show that aspirin can cause internal bleeding, raising the practical questions of whether and when a low-dose regimen is more beneficial than harmful. See, e.g., U.S. Preventive Services Task Force, Aspirin Use to Prevent Cardiovascular Disease: Preventive Medication, Apr. 26, 2022, https://perma.cc/C8V9-WMYS. In other instances, experiments have contradicted strongly held beliefs. E.g., Eric A. Klein et al., Vitamin E and the Risk of Prostate Cancer: Results of the Selenium and Vitamin E Cancer Prevention Trial (SELECT), 306 JAMA 1549 (2011), https://doi.org/10.1001/jama.2011.1437.

24. See section titled “What Inferences Can Be Drawn from the Data?” below.

25. Multiple regression is described in Daniel L. Rubinfeld & David Card, Reference Guide on Multiple Regression and Advanced Statistical Models, in this manual. On the limits of regression to cope

When an external change in circumstances naturally creates a treatment and a control group in a manner that is comparable to random assignment by the researchers, the study may be called a “natural experiment” or a “quasi-experiment.”26 A celebrated example comes from Dr. John Snow’s investigation of cholera and sewage in the mid-1800s. The residents of an area in London received water from two companies with overlapping water mains drawing from a part of the Thames River that was heavily polluted with raw sewage. Then, one company moved its water intake upstream, to a less polluted spot. Snow showed that during a London cholera epidemic soon afterwards, the death rate from cholera in homes with the less polluted water was less than one-eighth that in homes with the more polluted water—and less than that for the rest of London.27 Snow argued that “no experiment could have been devised which would more thoroughly test the effect of water supply on the progress of cholera than this . . . .”28 In modern terminology, the argument is that even though the study was observational, the seemingly random assignment of homes to the two intake points protects against confounding and bias.
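The comparison Snow drew reduces to a ratio of death rates. The sketch below uses house and death counts as they are commonly reproduced from Snow’s 1855 tables for the two water companies. Those counts are not given in this guide and should be treated as an assumption to be checked against the original source.

```python
# Counts as commonly reproduced from Snow (1855); an assumption, not taken from this guide.
# Each entry: (houses supplied, cholera deaths).
SUPPLY = {
    "more polluted": (40_046, 1_263),  # Southwark and Vauxhall Company
    "less polluted": (26_107, 98),     # Lambeth Company
}


def deaths_per_10000_houses(houses, deaths):
    """Cholera deaths per 10,000 houses supplied."""
    return 10_000 * deaths / houses


rates = {name: deaths_per_10000_houses(*counts) for name, counts in SUPPLY.items()}
ratio = rates["less polluted"] / rates["more polluted"]

for name, rate in rates.items():
    print(f"{name}: {rate:.1f} deaths per 10,000 houses")
print(f"ratio of rates: {ratio:.3f} (one-eighth is 0.125)")
```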

Observational studies can be very useful even when assignments to the groups being compared do not resemble randomization imposed by an experimenter. For example, there is strong observational evidence that smoking causes lung cancer (see section titled “Types of Studies” above). Generally, observational studies provide good evidence in the following circumstances:

- The association is seen in studies with different designs, on different kinds of subjects, and done by different research groups.29 That reduces the chance that the association is due to a defect in one type of study, a peculiarity in one group of subjects, or the idiosyncrasies of one research group.

with lurking variables, see Richard A. Berk, Regression Analysis: A Constructive Critique (2004); Richard Berk, What You Can and Can’t Properly Do with Regression, 26 J. Quantitative Criminology 481 (2010), https://doi.org/10.1007/s10940-010-9116-4; David A. Freedman, Statistical Models: Theory and Practice (2005).

26. See, e.g., Frank de Vocht et al., Conceptualising Natural and Quasi Experiments in Public Health, 21 BMC Med. Research Methodology 32 (2021), https://doi.org/10.1186/s12874-021-01224-x.

27. John Snow, On the Mode of Transmission of Cholera 55–98 (2d ed. 1855).

28. Id. at 46.

29. For example, case-control studies are designed one way and cohort studies another, with many variations. See, e.g., David D. Celentano & Moyses Szklo, Gordis Epidemiology (6th ed. 2020); supra note 18.

- The association holds when effects of confounding variables are taken into account by appropriate methods, for example, comparing smaller groups that are relatively homogeneous with respect to the confounders.30

- There is a plausible explanation for the effect of the independent variable; alternative explanations in terms of confounding should be less plausible than the proposed causal link.31 Thus, evidence for the causal link does not depend on observed associations alone.
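The stratified comparison mentioned in the list above can be sketched as follows. All counts are invented for illustration, and the names `STRATA` and `risk_ratio` are hypothetical.

```python
# Invented counts for illustration: (cases, subjects) by age stratum and exposure.
STRATA = {
    "younger": {"exposed": (20, 1_000), "unexposed": (10, 2_000)},
    "older":   {"exposed": (90, 1_500), "unexposed": (15, 1_000)},
}


def risk_ratio(exposed, unexposed):
    """Rate of disease among the exposed divided by the rate among the unexposed."""
    (cases_e, n_e), (cases_u, n_u) = exposed, unexposed
    return (cases_e / n_e) / (cases_u / n_u)


# Crude comparison: pool the strata and ignore age.
pooled = {
    group: tuple(sum(STRATA[s][group][i] for s in STRATA) for i in (0, 1))
    for group in ("exposed", "unexposed")
}
crude = risk_ratio(pooled["exposed"], pooled["unexposed"])

# Stratified comparison: compare like with like, one age group at a time.
by_stratum = {s: risk_ratio(g["exposed"], g["unexposed"]) for s, g in STRATA.items()}

print(f"crude risk ratio: {crude:.2f}")
for stratum, rr in by_stratum.items():
    print(f"{stratum}: {rr:.2f}")
```

In this invented example the crude ratio (about 5.3) overstates the association because the exposed group is older, but a ratio of 4 persists within each age group. That is the pattern of an association that holds after a confounder is taken into account.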

Observational studies can produce legitimate disagreement among experts, and there is no mechanical procedure for resolving such differences of opinion. In the end, deciding whether associations are causal typically is not a matter of statistics alone, but also rests on scientific judgment.32

There are, however, some basic questions to ask when appraising causal inferences based on empirical studies:

- Was there a control group? Unless comparisons can be made, the study has little to say about causation.

- If there was a control group, how were subjects assigned to treatment or control: through a process under the control of the investigator (a controlled experiment) or through a process outside the control of the investigator (an observational study)?

- If the study was a controlled experiment, was the assignment made using a chance mechanism (randomization), or did it depend on the judgment of the investigator?

If the data came from an observational study or a nonrandomized controlled experiment,

- How did the subjects come to be in treatment or in control groups?

- Are the treatment and control groups comparable?

30. The idea is to control for the influence of a confounder by stratification—making comparisons separately within groups for which the confounding variable is nearly constant and therefore has little influence over the variables of primary interest. For example, smokers are more likely to get lung cancer than nonsmokers. Age, gender, social class, and region of residence are all confounders, but controlling for such variables does not materially change the relationship between smoking and cancer rates.

31. A. Bradford Hill, The Environment and Disease: Association or Causation?, 58 Proc. Royal Soc’y Med. 295 (1965); Alfred S. Evans, Causation and Disease: A Chronological Journey 187 (1993).

32. At best, statistical analysis can help address the question of how impactful an unknown confounding variable would have to be to vitiate an inference of causation. See Jerome Cornfield et al., Smoking and Lung Cancer: Recent Evidence and a Discussion of Some Questions, 22 J. Nat’l Cancer Inst. 173 (1959), https://doi.org/10.1093/jnci/22.1.173; Tyler J. VanderWeele, Are Greenland, Ioannidis and Poole Opposed to the Cornfield Conditions? A Defence of the E-value, 51 Int’l J. Epidemiology 364 (2022), https://doi.org/10.1093/ije/dyab218.

- If not, what adjustments were made to address confounding?

- Were the adjustments sensible and sufficient?
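Note 32 refers to analyses of how strong an unmeasured confounder would have to be to vitiate a causal inference. One published measure of this kind is the E-value discussed in the VanderWeele article cited there. The sketch below applies the standard E-value formula for a risk ratio. The input value of 2.0 is hypothetical, and the calculation supplements scientific judgment rather than replacing it.

```python
import math


def e_value(risk_ratio):
    """E-value for an observed risk ratio: the minimum strength of association
    (on the risk-ratio scale) that an unmeasured confounder would need with
    both the treatment and the outcome to fully explain away the estimate."""
    if risk_ratio <= 0:
        raise ValueError("risk ratio must be positive")
    # For protective effects (ratio below 1), work with the reciprocal.
    rr = risk_ratio if risk_ratio >= 1 else 1 / risk_ratio
    return rr + math.sqrt(rr * (rr - 1))


print(round(e_value(2.0), 2))  # hypothetical observed risk ratio of 2.0
```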

Generalizing the Results

In considering what conclusions can be drawn from studies, it is helpful to distinguish between internal and external validity. Internal validity concerns the specifics of a particular study: Threats to internal validity include confounding and chance differences between treatment and control groups. External validity concerns using a particular study or set of studies to reach more general conclusions. A careful randomized controlled experiment on a large but unrepresentative group of subjects will have high internal validity but low external validity.

Any study must be conducted on certain subjects, at certain times and places, and using certain treatments. To extrapolate from the conditions of a study to more general conditions raises questions of external validity. For example, studies suggest that definitions of insanity given to jurors influence decisions in cases of incest. Would the definitions have a similar effect in cases of murder? Other studies indicate that recidivism rates for ex-convicts are not affected by providing them with temporary financial support after release. Would similar results be obtained if conditions in the labor market were different?

Confidence in the appropriateness of an extrapolation cannot come from the experiment itself. It comes from knowledge about outside factors that would or would not affect the outcome. Such judgments are easiest in the physical and life sciences, but even here, there are problems. For example, it may be difficult to infer human responses to substances that affect animals. First, there are often inconsistencies across test species. A chemical may be carcinogenic in mice but not in rats. Extrapolation from rodents to humans is even more problematic. Second, to get measurable effects in animal experiments, chemicals are administered at very high doses. Results are extrapolated—using mathematical models—to the very low doses of concern in humans. However, there are many dose–response models to use and few grounds for choosing among them. Generally, different models produce radically different estimates of the “virtually safe dose” in humans.33
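The sensitivity of low-dose estimates to the choice of model can be seen in a deliberately simplified calculation. The sketch below assumes a single hypothetical animal result (a 10% excess risk at a dose of 100 units) and a one-parameter power-law family, risk = k × dose^power, which stands in for the more elaborate dose–response models used in practice. The function name and all numbers are illustrative assumptions.

```python
def virtually_safe_dose(dose_obs, risk_obs, target_risk, power):
    """Dose at which risk = k * dose**power equals target_risk, where k is
    fixed by requiring the curve to pass through (dose_obs, risk_obs)."""
    return dose_obs * (target_risk / risk_obs) ** (1 / power)


DOSE_OBS, RISK_OBS = 100.0, 0.10   # hypothetical high-dose animal result
TARGET = 1e-6                      # one-in-a-million excess risk

linear = virtually_safe_dose(DOSE_OBS, RISK_OBS, TARGET, power=1)
quadratic = virtually_safe_dose(DOSE_OBS, RISK_OBS, TARGET, power=2)

print(f"linear model:    {linear:.4f}")
print(f"quadratic model: {quadratic:.4f}")
print(f"ratio:           {quadratic / linear:.0f}")
```

Both curves pass exactly through the observed high-dose point, yet their estimates of the dose carrying a one-in-a-million risk differ by a factor of roughly 300.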

33. David A. Freedman & Hans Zeisel, From Mouse to Man: The Quantitative Assessment of Cancer Risks, 3 Stat. Sci. 3 (1988), https://doi.org/10.1214/ss/1177012993; Lorenz R. Rhomberg et al., Linear Low-Dose Extrapolation for Noncancer Health Effects Is the Exception, Not the Rule, 41 Critical Reviews Toxicology 1 (2011), https://doi.org/10.3109/10408444.2010.536524. For these reasons, many experts—and some courts in toxic tort cases—have concluded that evidence from animal experiments is generally insufficient by itself to establish causation. Likewise, extrapolation from animals to humans in testing for drug efficacy and safety has been the subject of extensive discussion. Johnique T. Atkins et al., Pre-clinical Animal Models Are Poor Predictors of Human Toxicities in Phase 1 Oncology Clinical Trials, 123 Brit. J. Cancer 1496 (2020), https://doi.org/10.1038/s41416-020-01033-x; Brian R. Berridge, Animal Study Translation: The Other Reproducibility Challenge,

Sometimes several studies, each having different limitations, all point in the same direction. This combination is why most experts believe that smoking causes lung cancer and many other diseases. So, too, a variety of studies indicate that jurors who approve of the death penalty are more likely to convict in a capital case.34 Convergent results support the validity of generalizations.

Descriptive Surveys and Censuses

We now turn to a second topic—choosing units for study. A census tries to measure some characteristic of every unit in a population. This is often impractical. Then investigators use sample surveys, which measure characteristics for only part of a population. The accuracy of the information collected in a census or survey depends on how the units are selected for study and how the measurements are made.35

What Method Is Used to Select the Units?

By definition, a census seeks to measure some characteristic of every unit in a whole population. It may fall short of this goal, in which case one must ask whether the missing data are likely to differ in some systematic way from the data that are collected.36 The methodological framework of a scientific survey is different. With probability methods, a sampling frame (i.e., an explicit list of units in the population) is created. Individual units then are selected by an objective, well-defined, chance procedure, and measurements are made on the sampled units.

62 Int’l Lab’y Animal Rsch. J. 1 (2021), https://doi.org/10.1093/ilar/ilac005; John P. A. Ioannidis, Extrapolating from Animals to Humans, 12 Sci. Translational Med. 151 (2012), https://doi.org/10.1126/scitranslmed.3004631; Pandora Pound & Merel Ritskes-Hoitinga, Is It Possible to Overcome Issues of External Validity in Preclinical Animal Research? Why Most Animal Models Are Bound to Fail, 16 J. Translational Med. 304 (2018), https://doi.org/10.1186/s12967-018-1678-1.

34. Phoebe C. Ellsworth, Some Steps Between Attitudes and Verdicts, in Inside the Juror 42, 46 (Reid Hastie ed., 1993). Nonetheless, in Lockhart v. McCree, 476 U.S. 162 (1986), the Supreme Court held that the exclusion of opponents of the death penalty in the guilt phase of a capital trial does not violate the constitutional requirement of an impartial jury.

35. See Shari Seidman Diamond et al., Reference Guide on Survey Research, “Population Definition and Sampling” section, in this manual.

36. The U.S. Decennial Census does not count everyone that it should, and it counts some people who should not be counted. There is evidence that net undercount is greater in some demographic groups than others. Supplemental studies may enable statisticians to adjust for errors and omissions. See Elizabeth Marra & Timothy Kennel, U.S. Census Bureau, PES20-J-01, Source and Accuracy of the 2020 Post-Enumeration Survey Person Estimates: 2020 Post-Enumeration Survey Methodology Report (2022). However, the adjustments rest on uncertain assumptions. See Lawrence D. Brown et al., Statistical Controversies in Census 2000, 39 Jurimetrics J. 347 (1999); David A. Freedman & Kenneth W. Wachter, Methods for Census 2000 and Statistical Adjustments, in Social Science Methodology 232 (Steven Turner & William Outhwaite eds., 2007) (reviewing technical issues and litigation surrounding census adjustment in 1990 and 2000).

A sampling frame

To illustrate a sampling frame, suppose that a defendant in a criminal case seeks a change of venue. According to the defendant, popular opinion is so adverse that it would be difficult to impanel an unbiased jury. To prove the state of popular opinion, the defendant commissions a survey. The relevant population consists of everyone in the jurisdiction who might be called for jury duty. The sampling frame is the list of all potential jurors, which is maintained by court officials and is made available to the defendant. In this hypothetical case, the fit between the sampling frame and the population would be excellent.

In other situations, the sampling frame is more problematic. In an obscenity case, for example, the defendant can offer a survey of community standards.37 The population comprises all adults in the legally relevant district, but obtaining a full list of such people may not be possible. Suppose the survey is done by telephone, but cell phones are excluded from the sampling frame. Suppose too that cell phone users, as a group, hold different opinions from landline users. Then the poll is unlikely to reflect the opinions of the cell phone users, no matter how many individuals are sampled and no matter how carefully the interviewing is done.38
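The point that a larger sample cannot cure a defective sampling frame can be made with simple arithmetic. The population shares and opinion rates below are hypothetical, as is the helper name `standard_error`.

```python
import math

# Hypothetical population: share of adults and share holding the opinion of interest.
GROUPS = {
    "cell phone only": {"share": 0.60, "favor": 0.30},
    "landline":        {"share": 0.40, "favor": 0.55},
}

true_rate = sum(g["share"] * g["favor"] for g in GROUPS.values())
frame_rate = GROUPS["landline"]["favor"]   # the frame reaches landline users only
bias = frame_rate - true_rate


def standard_error(p, n):
    """Standard error of a sample proportion under simple random sampling."""
    return math.sqrt(p * (1 - p) / n)


for n in (100, 1_000, 10_000):
    print(f"n={n:>6}: chance error about {standard_error(frame_rate, n):.3f}, bias {bias:+.2f}")
```

Under these assumptions the chance error shrinks as the sample grows, but the 15-point gap between the landline-only estimate and the true rate does not.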

Selection bias

Many surveys do not use probability methods. In commercial disputes involving trademarks or advertising, the population of all potential purchasers of a product is hard to identify. Pollsters may resort to an easily accessible subgroup of the population—for example, shoppers in a mall. Such convenience samples may be biased by the interviewer’s discretion in deciding whom to approach—a form of selection bias—and the refusal of some of those approached to participate—nonresponse bias (see section titled “Of the Units Selected, Which Provide Measurements?” below). Selection bias is acute when constituents write their representatives, listeners call into radio talk shows, interest groups collect information from their members, individuals complete available online surveys, or attorneys choose cases for trial.39

37. On the admissibility of such polls, see State v. Midwest Pride IV, Inc., 721 N.E.2d 458 (Ohio Ct. App. 1998) (holding one such poll to have been properly excluded, and collecting cases from other jurisdictions); Admissibility of Evidence of Public-Opinion Polls or Surveys in Obscenity Prosecutions on Issue Whether Materials in Question Are Obscene, 59 A.L.R.5th 749.

38. Survey researchers may turn to other methods to reach cell phone users with area codes from the jurisdiction’s geographic locale. Kyley McGeeney & Courtney Kennedy, Pew Research Center, Advances in Telephone Survey Sampling (2015), https://perma.cc/D4MY-2L93. However, the cell phone sampling frame will omit people who have moved into the jurisdiction with cell phone numbers from other areas. Again, the mismatch between the sampling frame and the relevant population could bias the results. People who move from county to county or state to state tend to be younger and are more likely to be members of minority groups, to be male, and to have lower incomes. See Carol Pierannunzi et al., Sample and Respondent Provided County Comparisons Among Cellular Respondents Using Rate Center Assignments, 12 Survey Practice 1 (2019), https://doi.org/10.29115/SP-2019-004.

A well-known example of selection bias is the 1936 Literary Digest poll. After successfully predicting the winner of every U.S. presidential election since 1916, the Digest used the replies from 2.4 million respondents to predict that Alf Landon would win the popular vote, 57% to 43%. In fact, Franklin Roosevelt won by a landslide vote of 62% to 38%.40 The Digest was so far off, in part, because it chose names from telephone books, rosters of clubs and associations, city directories, lists of registered voters, and mail order listings.41 In 1936, when only one household in four had a telephone, the people whose names appeared on such lists tended to be more affluent. Lists that overrepresented the affluent had worked well in earlier elections, when rich and poor voted along similar lines, but the bias in the sampling frame proved fatal when the Great Depression made economics a salient consideration for voters.

There are procedures that attempt to correct for selection bias. In quota sampling, for example, the interviewer is instructed to interview so many women, so many older people, so many ethnic minorities, and the like. But quotas still leave discretion to the interviewers in selecting members of each demographic group and therefore do not solve the problem of selection bias.42

Probability methods are designed to avoid selection bias. Once the population is reduced to a sampling frame, the units to be measured are selected by a lottery that gives each unit in the sampling frame a known, nonzero probability of being chosen.43 Such procedures are used to select individuals for jury duty.

39. In re Chevron U.S.A., Inc., 109 F.3d 1016, 1020 (5th Cir. 1997) (although random sampling of 30 cases to resolve common issues or to ascertain damages in 3,000 claims arising from Chevron’s allegedly improper disposal of hazardous substances would have been acceptable, having the opposing parties select 15 cases each was not, because those were “not cases calculated to represent the group of 3000 claimants”); In re Countrywide Fin. Corp. Mortgage-Backed Sec. Litig., 984 F. Supp. 2d 1021, 1039 (C.D. Cal. 2013) (“The [sample’s disproportionate] reliance on loans supporting certificates selected for litigation strikes the Court as a clear example of selection bias . . . .”). See infra note 44 (on sampling cases or claims from a large set of similar cases or claims).

40. See Freedman et al., supra note 14, at 334–35.

41. Id. at 335, A-20 n.6.

42. See id. at 337–39.

43. Many types of probability sampling have been developed. In simple random sampling, units are drawn at random without replacement. In particular, each unit has the same probability of being chosen for the sample. Id. at 339–41. More complicated methods, such as stratified sampling and cluster sampling, give greater selection probabilities to some types of units than others, which has advantages in certain applications. In systematic sampling, every nth unit (for example, every

They also have been proposed or used to choose “bellwether” cases for representative trials to resolve issues in a large group of similar cases.44
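The probability methods described above and in note 43 can be sketched in a few lines. The sampling frame of 1,000 numbered units, the sample sizes, the strata, and the function names are hypothetical. The fixed seed is used only so that the example is reproducible.

```python
import random


def simple_random_sample(frame, n, rng):
    """Draw n units at random without replacement; each unit has chance n/len(frame)."""
    return rng.sample(frame, n)


def systematic_sample(frame, step, rng):
    """Take every step-th unit after a random start; each unit has chance 1/step."""
    start = rng.randrange(step)
    return frame[start::step]


def stratified_sample(strata, n_per_stratum, rng):
    """Draw a separate simple random sample within each stratum."""
    return {name: rng.sample(units, n_per_stratum[name]) for name, units in strata.items()}


rng = random.Random(2023)
frame = list(range(1, 1001))               # hypothetical list of 1,000 potential jurors
srs = simple_random_sample(frame, 50, rng)
systematic = systematic_sample(frame, 10, rng)
strata = {"urban": frame[:700], "rural": frame[700:]}
stratified = stratified_sample(strata, {"urban": 25, "rural": 25}, rng)

print(len(srs), len(systematic), {k: len(v) for k, v in stratified.items()})
```

In the stratified draw, the hypothetical rural units have a higher chance of selection (25 in 300) than urban units (25 in 700). Because both probabilities are known and nonzero, the difference can be accounted for when the results are analyzed.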

Of the Units Selected, Which Provide Measurements?

Probability sampling ensures that within the limits of chance, the sample will be representative of the sampling frame. But will all these units be measured? When documents are sampled for audit, they can all be examined, at least in principle. Human beings are less easily managed, and some will refuse to cooperate. In the 1936 Literary Digest election poll, only 24% of the 10 million people who received questionnaires returned them. Most of the respondents probably had strong views on the candidates and objected to President Roosevelt’s economic program. This self-selection is likely to have biased the poll.45

Surveys should therefore report nonresponse rates. A large nonresponse rate warns of potential bias.46 Supplemental studies may establish that nonrespondents are similar to respondents with respect to characteristics of interest, but even when demographic characteristics of the sample match those of the population, caution is indicated.47

fifth, tenth, or hundredth unit) in the sampling frame is selected. If the units are not in any special order, then systematic sampling is often comparable to simple random sampling.

44. See Scottsdale Mem’l Health Sys., Inc. v. Maricopa Cnty., 228 P.3d 117, 131–35 (Ariz. Ct. App. 2010) (reviewing major cases and approving in principle of random sampling but concluding that the record failed to demonstrate the adequacy of the statistical sampling plan for resolving 40,000 consolidated cases); David H. Kaye & David A. Freedman, Statistical Proof, in 1 Modern Scientific Evidence: The Law and Science of Expert Testimony § 5:16, at 398–406 (David L. Faigman et al. eds., 2022–2023) (discussing both legal and statistical issues arising in these cases); David H. Kaye et al., The New Wigmore: A Treatise on Evidence: Expert Evidence § 12.10.3(b) (Cumulative Supp. to 2d ed., 2020) (same).

45. Maurice C. Bryson, The Literary Digest Poll: Making of a Statistical Myth, 30 Am. Statistician 184 (1976); Freedman et al., supra note 14, at 335–36.

46. For discussions of the admissibility of surveys with extremely low response rates, see In re ConAgra Foods, Inc., 90 F. Supp. 3d 919 (C.D. Cal. 2015) (excluding survey with many defects, including a 95% nonresponse rate); United States v. H & R Block, Inc., 831 F. Supp. 2d 27, 34 (D.D.C. 2011) (98% nonresponse rate does not preclude admission when a judge is the factfinder). On nonresponse rates in studies not undertaken for litigation, see Clearinghouse for Military Family Readiness, Penn. State Univ., Survey Response Rates: Rapid Literature Review (2016), available at https://perma.cc/8Y9Z-PTKH.

In United States v. Gometz, 730 F.2d 475, 478 (7th Cir. 1984) (en banc), the Seventh Circuit recognized that “a low rate of response to juror questionnaires could lead to the underrepresentation of a group that is entitled to be represented on the qualified jury wheel.” Nonetheless, the court held that under the Jury Selection and Service Act of 1968, 28 U.S.C. §§ 1861–1878 (1988), the clerk did not abuse his discretion by failing to take steps to increase a response rate of 30%. According to the court, “Congress wanted to make it possible for all qualified persons to serve on juries, which is different from forcing all qualified persons to be available for jury service.” Gometz, 730 F.2d at 480. Although it might “be a good thing to follow up on persons who do not respond to a jury questionnaire,” the court concluded that Congress “was not concerned with anything so esoteric as nonresponse bias.” Id. at 479, 482; cf. In re United States, 426 F.3d 1 (1st Cir. 2005)

In short, a good survey defines an appropriate population, uses a probability method for selecting the sample, has a high response rate, and gathers accurate information on the sample units. When these goals are met, the sample tends to be representative of the population, and data from the sample can be extrapolated to describe the characteristics of the population. Of course, surveys may be useful even if they fail to meet these criteria. But then, additional arguments are needed to justify the inferences.
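Why a low response rate warns of bias can be shown with hypothetical numbers. In the sketch below, the true level of support and the two response rates are invented. They are not the Literary Digest figures.

```python
def survey_result(true_support, response_rate_supporters, response_rate_opponents):
    """Return (support among respondents, overall response rate)."""
    responding_supporters = true_support * response_rate_supporters
    responding_opponents = (1 - true_support) * response_rate_opponents
    responded = responding_supporters + responding_opponents
    return responding_supporters / responded, responded


# Opponents are assumed to be twice as likely to return the questionnaire.
observed, response_rate = survey_result(0.50, 0.20, 0.40)
print(f"response rate {response_rate:.0%}, observed support {observed:.1%}, true support 50.0%")

# If both groups respond at the same rate, nonresponse causes no bias.
unbiased, _ = survey_result(0.50, 0.30, 0.30)
print(f"equal response rates: observed support {unbiased:.1%}")
```

The response rate alone does not reveal the bias. What matters is whether the tendency to respond is related to the characteristic being measured, which is why supplemental information about nonrespondents is valuable.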

Individual Measurements

Is the Measurement Process Reliable?

Reliability and validity are two aspects of accuracy in measurement. In statistics, reliability refers to reproducibility of results. A reliable measuring instrument returns consistent measurements. A scale, for example, is perfectly reliable if it always reports the same weight for the same unchanged object. It may not be accurate—it may always report a weight that is too high or one that is too low—but the perfectly reliable scale always reports the same weight for the same object. Its errors, if any, are systematic: They tend to point in the same direction.

Courts often use “reliable” to mean “that which can be relied on” for some purpose, such as establishing probable cause through a reliable informant or as part of an argument for admitting hearsay statements.48 Thus, in Daubert v. Merrell Dow Pharmaceuticals, the Court distinguished “evidentiary reliability” from reliability in the statistical sense of giving consistent results.49 This statistical reliability is a component of the broader evidentiary reliability required of scientific evidence. It can be ascertained by measuring the same quantity several times; the measurements must be made independently to avoid bias. Given independence, the correlation coefficient (see section titled “Correlation Coefficients” below) between repeated measurements can be used as a measure of reliability. This is sometimes called a test-retest correlation or a reliability coefficient. But administering the same test twice to the same group of people may be impractical. And even if repeated testing is practical, it may be statistically inadvisable, because subjects may learn something from the first round of testing that affects their scores on the second round. Such “practice effects” are likely to compromise the independence of the two measurements, and independence is needed to estimate reliability. Statisticians therefore use internal evidence from the test itself. For example, a strong correlation between scores on the first half of the test and scores on the second half is evidence of reliability.50

(reaching the same result with respect to underrepresentation of African Americans resulting in part from nonresponse bias).

47. See David Streitfeld, Shere Hite and the Trouble with Numbers, 1 Chance 26 (1988); Chamont Wang, Sense and Nonsense of Statistical Inference: Controversy, Misuse, and Subtlety 174–76 (1992).

48. E.g., United States v. Moore, 824 F.3d 620 (7th Cir. 2016) (“The purpose of Rule 807 is to make sure that reliable, material hearsay evidence is admitted, regardless of whether it fits neatly into one of the exceptions enumerated in the Rules of Evidence.”); Fed. R. Evid. 803(18)(B) (to be admissible hearsay, a learned treatise must be a “reliable authority”).

49. 509 U.S. 579, 590 n.9 (1993).
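A test-retest correlation of the kind described above can be computed directly. The scores below are invented, and `pearson` is a hand-written helper included so that the example has no dependencies.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Invented scores for six people tested on two occasions.
first = [98, 105, 110, 92, 120, 101]
second = [100, 103, 112, 95, 118, 99]
print(f"test-retest correlation: {pearson(first, second):.2f}")

# An instrument that always reads 5 units too high is still perfectly reliable.
biased = [score + 5 for score in first]
print(f"correlation with a constant offset: {pearson(first, biased):.2f}")
```

The second calculation illustrates why reliability is not the same as accuracy: a constant error leaves the correlation at 1.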

The Supreme Court confronted the imperfect reliability of IQ test scores in Hall v. Florida.51 The Court listed various factors contributing to the variability in an individual’s test scores, including “health; practice from earlier tests; the environment or location of the test; the examiner’s demeanor; the subjective judgment involved in scoring certain questions on the exam; and simple lucky guessing.”52 Having previously held that intellectually disabled offenders could not be punished by execution, the Court held that a state had to account for the potential variability in an individual’s IQ score in setting a number above which no one could be deemed intellectually disabled. The particular rule the Court adopted turned on one version of a statistic known as the standard error.53
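Note 53 points to the relationship between a reliability coefficient and the standard error. One common textbook version, the standard error of measurement, is the standard deviation of scores times the square root of one minus the reliability coefficient. The sketch below applies that formula with hypothetical inputs (a standard deviation of 15 and a reliability of 0.95). It is not a statement of the rule adopted in Hall, which is addressed in the article cited in note 53.

```python
import math


def standard_error_of_measurement(sd, reliability):
    """Textbook formula: SEM = SD * sqrt(1 - reliability coefficient)."""
    if not 0 <= reliability <= 1:
        raise ValueError("reliability must be between 0 and 1")
    return sd * math.sqrt(1 - reliability)


def score_band(observed, sem, multiplier=2):
    """Range of the observed score plus or minus a multiple of the SEM."""
    return observed - multiplier * sem, observed + multiplier * sem


sem = standard_error_of_measurement(sd=15, reliability=0.95)  # hypothetical inputs
low, high = score_band(71, sem)
print(f"SEM = {sem:.2f}; band around a score of 71: {low:.1f} to {high:.1f}")
```

The width of the band depends heavily on the assumed reliability and on the multiplier chosen, which is one reason a fixed cut-off score can be contested.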

A simpler courtroom example comes from DNA identification. An early method of identification required laboratories to determine the lengths of fragments of DNA. By making independent repeated measurements of the same fragments, laboratories determined the likelihood that two measurements differed by specified amounts.54 Such results were needed to decide whether a discrepancy between a crime sample and a suspect sample was sufficient to exclude the suspect.55

Coding of data also can affect reliability. In many studies, descriptive information is obtained on the subjects. For statistical purposes, the information usually has to be reduced to numbers. The process of reducing information to numbers is called “coding,” and the reliability of the process should be evaluated. For example, in a meticulous study of death sentencing in Georgia, legally trained evaluators examined short summaries of cases and ranked them according to the defendant’s culpability.56 Two different aspects of reliability should be considered. First, the “within-observer variability” of judgments should be small—the same evaluator should rate essentially identical cases in similar ways. Second, the “between-observer variability” should be small—different evaluators should rate the same cases in essentially the same way.

50. In practice, more refined measures of internal consistency are used to estimate a test’s reliability. See, e.g., Neal M. Kingston & Laura B. Kramer, High Stakes Test Construction and Test Use, in 1 Oxford Handbook of Quantitative Methods: Foundations 189, 201 (Todd D. Little ed., 2013).

51. 572 U.S. 701 (2014).

52. Id. at 713.

53. “Standard error” is the subject of the section titled “Is a Difference Statistically Significant?” below. The relationship between the reliability coefficient and different standard errors as well as the choice of a cut-off score that accounts for a given type of standard error is explained in David H. Kaye, Deadly Statistics: Quantifying an “Unacceptable Risk” in Capital Punishment, 16 Law, Probability & Risk 7 (2017) (proposing alternatives to the Court’s rule).

54. See Nat’l Research Council, The Evaluation of Forensic DNA Evidence 139–41 (1996).

55. Id.; Nat’l Research Council, DNA Technology in Forensic Science 61–62 (1992). Current methods are discussed in David H. Kaye, Reference Guide on Human DNA Identification Evidence, in this manual.

Metrologists (specialists in measurement science) similarly distinguish between “reproducibility” and “repeatability.” The difference lies in the degree to which the conditions for making measurements are similar. With a repeatable procedure, measurements by the same examiner using the same equipment at the same place and time should be close to one another. With a reproducible procedure, measurements from different examiners even at different places and times also should be similar.57
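Between-observer variability in coding is often summarized by the rate of agreement between two coders, sometimes corrected for chance agreement with Cohen’s kappa. Kappa is not mentioned in the text above; it is offered here as one widely used statistic, and the ratings below are invented.

```python
from collections import Counter


def percent_agreement(a, b):
    """Share of items on which the two raters gave the same rating."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Agreement between two raters beyond what chance alone would produce."""
    n = len(a)
    observed = percent_agreement(a, b)
    count_a, count_b = Counter(a), Counter(b)
    # Chance agreement: product of the raters' marginal rates, summed over categories.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / n**2
    return (observed - expected) / (1 - expected)


# Invented culpability ratings of the same ten case summaries by two evaluators.
rater_a = ["low", "low", "med", "med", "med", "high", "high", "high", "low", "med"]
rater_b = ["low", "low", "med", "med", "high", "high", "high", "med", "low", "med"]
print(f"agreement: {percent_agreement(rater_a, rater_b):.0%}")
print(f"kappa:     {cohens_kappa(rater_a, rater_b):.2f}")
```

The same functions could be applied to two rounds of ratings by a single evaluator to gauge within-observer variability.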

Is the Measurement Process Valid?

Reliability is necessary but not sufficient to ensure accuracy. In addition to reliability, validity is needed. A valid measuring instrument measures what it is supposed to. Thus, a polygraph measures certain physiological variables, for example, pulse rate or blood pressure, in response to stimuli. The measurements may be reliable. Nonetheless, the polygraph is not valid as a lie detector unless the measurements are well correlated with lying.58

When there is an established way of measuring a variable, a new measurement process can be validated by comparison with the established one. Breathalyzer readings can be validated against alcohol levels found in blood samples. LSAT or GRE scores used for law school admissions can be validated against grades earned in law school. A common measure of validity is the correlation coefficient between the predictor and the criterion (for example, test scores and later performance).59
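As an illustration of the correlation coefficient used as a measure of validity, the sketch below computes the Pearson correlation between a predictor and a criterion. The admission test scores and first-year grade averages are hypothetical numbers invented for this example, and the function name `pearson_r` is likewise an assumption.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical predictor (test scores) and criterion (later grades).
test_scores = [150, 155, 160, 165, 170]
first_year_gpa = [2.8, 3.1, 3.0, 3.4, 3.6]

validity_coefficient = pearson_r(test_scores, first_year_gpa)
print(round(validity_coefficient, 2))
```

With these invented figures the coefficient is roughly 0.94. Values this high would be unusual in practice and appear here only because the data set is tiny and artificial.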

56. David C. Baldus et al., Equal Justice and the Death Penalty: A Legal and Empirical Analysis 49–50 (1990).

57. Alan H. Dorfman & Richard Valliant, A Re-Analysis of Repeatability and Reproducibility in the Ames-USDOE-FBI Study, 9 Stat. & Public Pol’y 175 (2022), https://doi.org/10.1080/2330443X.2022.2120137; Hal S. Stern et al., Reliability and Validity of Forensic Science Evidence, Significance, Apr. 2019, at 21, 22–23.

58. See United States v. Henderson, 409 F.3d 1293, 1303 (11th Cir. 2005) (“while the physical responses recorded by a polygraph machine may be tested, ‘there is no available data to prove that those specific responses are attributable to lying’”); Nat’l Research Council, The Polygraph and Lie Detection (2003) (reviewing the scientific literature).

59. As the discussion of the correlation coefficient in the section titled “Correlation Coefficients” below indicates, the closer the coefficient is to 1, the greater the validity. For a review of data on test reliability and validity, see Measuring Success: Testing, Grades, and the Future of College Admissions (Jack Buckley et al. eds., 2018).

Employment discrimination cases illustrate some of the difficulties. Plaintiffs suing under Title VII of the Civil Rights Act may challenge an employment test that has a disparate impact on a protected group, and defendants may try to justify the use of a test as valid, reliable, and a business necessity.60 For validation, the most appropriate criterion variable is clear enough: job performance. However, plaintiffs may then challenge the validity of performance ratings. Are they sufficiently related to the actual requirements of the job? Are they biased? As for reliability, plaintiffs would need to show that each measure (the test scores and the later performance ratings) provides consistent measurements.61

A further problem is that test-takers are likely to be a select group. The ones who get the jobs are even more highly selected. Generally, selection attenuates (weakens) the correlations because differences in performance within a narrow band of highly qualified applicants do not exhibit as much variation as would be expected if a wider range of applicants were selected.62 Statistical methods that correct for attenuation depend on assumptions about the nature of the test and the procedures used to select the test-takers; these assumptions may be open to challenge.63
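The attenuating effect of selection can be seen in a small simulation. The sketch below is an illustration under stated assumptions, not a model of any actual hiring process: test scores and job performance are generated as a shared “ability” component plus independent noise (so that their correlation among all applicants is about 0.5), and only applicants scoring in the top 10% are “hired.” The sample size, the random seed, and the 10% cutoff are arbitrary choices.

```python
import random
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


rng = random.Random(0)  # fixed seed so the illustration is repeatable
n_applicants = 5000

scores, performance = [], []
for _ in range(n_applicants):
    ability = rng.gauss(0, 1)                       # shared component
    scores.append(ability + rng.gauss(0, 1))        # test score
    performance.append(ability + rng.gauss(0, 1))   # later job performance

# Correlation among all applicants (hypothetically observable for everyone).
r_all = pearson_r(scores, performance)

# Correlation among the hired group only: the top 10% of test scores.
cutoff = sorted(scores)[int(0.9 * n_applicants)]
hired = [(s, p) for s, p in zip(scores, performance) if s >= cutoff]
r_hired = pearson_r([s for s, _ in hired], [p for _, p in hired])

print("all applicants:", round(r_all, 2))
print("hired only:    ", round(r_hired, 2))
```

The correlation computed from the hired group alone is noticeably smaller than the correlation among all applicants, even though the underlying relationship between score and performance is the same for everyone.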

Measurements also can be made on a nominal scale, as when a chemist notes that litmus paper has turned red, or a criminalist declares that there is a “physical fit” between two fragments of glass. For binary (yes–no) classifications, validity (accuracy) of the classifier can be evaluated with experiments to estimate “sensitivity” and “specificity” (or corresponding error probabilities). Suppose that firearms examiners are given pairs of spent cartridge cases and are required to decide whether they come from the same gun or from two different guns. The experimenter, who has prepared the test pairs, knows the truth; the examiners do not. Results related to a small experiment are in Table 1.64

60. See, e.g., Ricci v. DeStefano, 557 U.S. 557 (2009); Washington v. Davis, 426 U.S. 229, 252 (1976); Albemarle Paper Co. v. Moody, 422 U.S. 405, 430–32 (1975); Griggs v. Duke Power Co., 401 U.S. 424 (1971); Lanning v. S.E. Penn. Transp. Auth., 308 F.3d 286 (3d Cir. 2002).

61. See section titled “Is the Measurement Process Reliable?” above (discussing Hall v. Florida).

62. For an extreme example, consider a firm that provides personal tutoring for college-entrance examinations and hires only job applicants who had near-perfect scores themselves to be the tutors. It could well be that receiving a high score is associated with being an effective tutor. But if the firm hired no low-scoring applicants as tutors, it would be impossible to see that association in a study of the correlation between the scores of the exclusively high-scoring tutors and those of the students they tutor after being hired.

63. See Thad Dunning & David A. Freedman, Modeling Selection Effects, in Social Science Methodology 225 (Steven Turner & William Outhwaite eds., 2007); Howard Wainer & David Thissen, True Score Theory: The Traditional Method, in Test Scoring 23 (David Thissen & Howard Wainer eds., 2001).

64. The numbers are rounded-off versions of those in Table C1 of Heike Hofmann et al., Treatment of Inconclusives in the AFTE Range of Conclusions, 19 Law, Probability & Risk 317, 363 (2020), https://doi.org/10.1093/lpr/mgab002 (deducing numbers for independent comparisons within a somewhat differently designed and harder to interpret 2003 experiment with eight FBI examiners). That there are zeros in the cells for false identifications and false eliminations does not mean that these outcomes cannot occur. See the “Other situations” subsection for confidence intervals below. Also, in

Table 1. Test Results for Cartridge-case Comparisons

| | Same Source | Different Source |
|---|---|---|
| Reported Same Source | 30 | 0 |
| Reported Different Source | 0 | 45 |

The examiners performed flawlessly in these 75 instances. If we call the same-source condition “positive” and the different-source condition “negative,” there were zero false-positive reports and zero false-negative reports. To put it another way, the examiners were 100% accurate in dealing with same-source pairs: they called 30 out of 30 such pairs positives, for an observed sensitivity of 1. Likewise, they were 100% accurate in dealing with different-source pairs: they called 45 out of 45 such pairs different, for an observed specificity of 1. Whether the results of this small experiment are representative of what might be seen in a larger set of experiments, and whether the experiments would be representative of the outcomes in casework, are further questions.
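The arithmetic behind the observed sensitivity and specificity can be sketched in a few lines of Python using the counts in Table 1. The final function is an addition for illustration only. It computes a one-sided exact upper confidence bound on an error probability when no errors are observed, under the assumption (which may not hold for this experiment) that the comparisons are independent trials with a constant error probability.

```python
# Counts from Table 1 (rows are reports, columns are the true condition).
true_positives = 30   # same source, reported same source
false_negatives = 0   # same source, reported different source
false_positives = 0   # different source, reported same source
true_negatives = 45   # different source, reported different source

sensitivity = true_positives / (true_positives + false_negatives)
specificity = true_negatives / (true_negatives + false_positives)


def upper_bound_with_zero_errors(n_trials, confidence=0.95):
    """One-sided exact upper bound on an error probability given 0 errors.

    Assumes independent trials with a constant error probability: solves
    (1 - p) ** n_trials = 1 - confidence for p.
    """
    return 1 - (1 - confidence) ** (1 / n_trials)


print("observed sensitivity:", sensitivity)
print("observed specificity:", specificity)
print("upper bound, false-negative rate:",
      round(upper_bound_with_zero_errors(30), 3))
print("upper bound, false-positive rate:",
      round(upper_bound_with_zero_errors(45), 3))
```

Under those assumptions, zero errors in 30 same-source pairs is compatible with a false-negative probability as high as about 9.5%, and zero errors in 45 different-source pairs with a false-positive probability as high as about 6.4%. This is one way to see why a flawless result in a small experiment does not establish that errors cannot occur.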

Are the Measurements Recorded Correctly?

Judging the adequacy of data collection involves an examination of the process by which measurements are taken. Are responses to interviews coded correctly? Do mistakes distort the results? How much data are missing? What was done to compensate for gaps in the data? These days, data are stored in computer files. Cross-checking the files against the original sources (e.g., paper records), at least on a sample basis, can be informative.

experiments and in practice, firearms examiners often report comparisons as “inconclusive.” This complication is discussed in the section titled “Are the Categories Appropriate?” below.

65. E.g., Bland–Collins v. Howard Univ., 19 F. Supp. 3d 252, 255 (D.D.C. 2014) (statistician who was fired after she discovered “over 5,000 errors in the coded data collected from structured interviews” in a National Science Foundation funded research project brought a whistleblower retaliation claim). Transcription errors put the FBI in the awkward position of using 33 incorrect allele frequencies (out of 1,100) in the software it distributed since 1999 to DNA laboratories for computing random-match probabilities. Tamyra R. Moretti et al., Erratum, 60 J. Forensic Sci. 1114 (2015), https://doi.org/10.1111/1556-4029.12806; Spencer S. Hsu, FBI Notifies Crime Labs of Errors Used in DNA Match Calculations Since 1999, Wash. Post, May 29, 2015.

Data quality is a pervasive issue in litigation and in applied statistics more generally.65 A programmer moves a file from one computer to another, and half the data disappear. The definitions of crucial variables are lost in the sands of time. Values get corrupted: Social Security numbers come to have eight digits instead of nine, and vehicle identification numbers fail the most elementary consistency checks. Everybody in the company, from the CEO to the rawest mailroom trainee, turns out to have been hired on the same day. Many of the residential customers have last names that indicate commercial activity (e.g., “Happy Valley Farriers”). These problems seem humdrum by comparison with those of reliability and validity, but, unless caught in time, they can be fatal to statistical arguments.66
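Elementary consistency checks of the kind just described are easy to automate. The following Python sketch flags three of the symptoms mentioned above: identification numbers with the wrong number of digits, missing values, and an implausible concentration of hire dates. The record layout, field names, and every value in `records` are hypothetical, and the `audit` function is an assumption made for illustration rather than a standard tool.

```python
import re
from collections import Counter

# Hypothetical personnel records; every value is invented for illustration.
records = [
    {"id": 1, "ssn": "123456789", "hire_date": "2001-03-15"},
    {"id": 2, "ssn": "12345678", "hire_date": "2010-07-01"},   # eight digits
    {"id": 3, "ssn": "987654321", "hire_date": None},          # missing date
    {"id": 4, "ssn": "", "hire_date": "2010-07-01"},           # missing SSN
    {"id": 5, "ssn": "555443333", "hire_date": "2010-07-01"},
]

NINE_DIGITS = re.compile(r"\d{9}")


def audit(records):
    """Return simple data-quality flags for a list of record dictionaries."""
    # Present but not exactly nine digits.
    malformed_ssn = [
        r["id"] for r in records
        if r.get("ssn") and not NINE_DIGITS.fullmatch(r["ssn"])
    ]
    # Either field empty or absent.
    missing_values = [
        r["id"] for r in records
        if not r.get("ssn") or not r.get("hire_date")
    ]
    # Share of the non-missing hire dates taken up by the most common date.
    dates = Counter(r["hire_date"] for r in records if r.get("hire_date"))
    top_date, top_count = dates.most_common(1)[0] if dates else (None, 0)
    top_share = top_count / sum(dates.values()) if dates else 0.0
    return {
        "malformed_ssn": malformed_ssn,
        "missing_values": missing_values,
        "most_common_hire_date": top_date,
        "share_with_that_date": top_share,
    }


report = audit(records)
print(report)
```

Checks like these do not establish that a file is correct. They only surface anomalies that warrant comparison with the original sources.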

What Does It Mean to Be Random?

In the law, a selection process sometimes is called “random,” provided that it does not exclude identifiable segments of the population. Statisticians use the term in a more rigorous and technical sense. For example, to choose one person at random from a population in the strict statistical sense, we would have to ensure that everybody in the population has the same probability of selection. With a randomized controlled experiment, subjects are assigned to treatment or control at random in the strict sense—by tossing coins, throwing dice, looking at tables of random numbers, or more commonly these days, by using a random number generator on a computer. The same rigorous definition applies to random sampling. Randomness in the technical sense provides assurance of unbiased estimates from a randomized controlled experiment or a probability sample. Randomness in the technical sense also justifies calculations of standard errors, confidence intervals, and p-values (see sections titled “What Inferences Can Be Drawn from the Data?” and “Correlation and Regression” below). Looser definitions of randomness are inadequate for statistical purposes.
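Random assignment and simple random sampling with a computer's random number generator can be sketched as follows. This is a minimal illustration using Python's standard `random` module. The function names, the subject labels, and the equal split between groups are assumptions for the example, and the optional seed is included only so that an assignment can be reproduced and audited later.

```python
import random


def randomize(subjects, seed=None):
    """Randomly split subjects into treatment and control groups."""
    rng = random.Random(seed)
    shuffled = list(subjects)
    rng.shuffle(shuffled)          # every ordering is equally likely
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def simple_random_sample(population, k, seed=None):
    """Draw k units without replacement, each unit equally likely."""
    rng = random.Random(seed)
    return rng.sample(list(population), k)


# Hypothetical subject labels.
subjects = [f"subject_{i}" for i in range(1, 21)]
treatment, control = randomize(subjects, seed=2023)
sample = simple_random_sample(subjects, k=5, seed=2023)

print("treatment:", treatment)
print("control:  ", control)
print("sample:   ", sample)
```

Because group membership is determined by the random number generator rather than by anyone's judgment, no characteristic of the subjects can systematically influence who ends up in which group.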

How Have the Data Been Presented?

66. See, e.g., Malletier v. Dooney & Bourke, Inc., 525 F. Supp. 2d 558, 630 (S.D.N.Y. 2007) (coding errors contributed “to the cumulative effect of the methodological errors” that warranted exclusion of a consumer confusion survey); EEOC v. Sears, Roebuck & Co., 628 F. Supp. 1264, 1304, 1305 (N.D. Ill. 1986) (finding that the EEOC “has made so many general coding errors that its data base does not fairly reflect the characteristics of applicants”), aff’d, 839 F.2d 302 (7th Cir. 1988); cf. EEOC v. Freeman, 961 F. Supp. 2d 783, 796 (D. Md. 2013) (excluding an EEOC analysis of an employer’s records purporting to show disparate impact of credit and criminal records checks of job applicants because the EEOC database was not a random sample but rather was “cherry-picked” and “[t]he mind-boggling number of errors contained in [its] database could alone render [the] conclusions worthless”).

After data have been collected, they should be presented in a way that makes them intelligible and that helps reveal their implications. Data can be summarized with a few numbers or with graphical displays. However, the wrong summary can mislead.67 The section “Are Rates or Percentages Properly Interpreted?” below discusses rates or percentages and provides some cautionary examples of misleading summaries, indicating the kinds of questions that might be considered when summaries are presented in court. Percentages are often used to demonstrate statistical association, which is the topic of the section titled “Is an Appropriate Measure of Association Used?” The section titled “Does a Graph Portray Data Fairly?” considers graphical summaries of data, while the sections titled “Is an Appropriate Measure Used for the Center of a Distribution?” and “Is an Appropriate Measure of Variability Used?” discuss some of the basic descriptive statistics that are likely to be encountered in litigation, including the mean, median, and standard deviation.

Are Rates or Percentages Properly Interpreted?

How Big Is the Base of a Percentage?

Rates and percentages often provide effective summaries of data, but these statistics can be misinterpreted. A rate reports a comparison of one number against some other quantity, for example, the number of reported crimes per hundred thousand residents. A percentage reports a comparison between two numbers by putting them in terms of a common base (100). Expressing the ratio of the two numbers on a common base makes it easy to compare them.

One application of percentages is for reporting increases or decreases in a quantity or rate by describing the percent change compared to the initial or base amount. When the base is small, however, a small change in absolute terms can generate a large percentage gain or loss. (This could lead to newspaper headlines such as “Increase in Rate of Thefts Alarming,” even when the total number of thefts is small.68) Conversely, a large base will make for small percentage increases. In these situations, actual numbers may be more revealing than percentages.
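A short calculation shows how the same percentage change can correspond to very different absolute changes. The two pairs of counts below are the hypothetical possibilities raised in the footnote on the Daytona Beach report (an increase from 17 to 23, or from 850 to 1,150). Neither is a reported figure, and the function name is an assumption.

```python
def percent_change(old, new):
    """Percent change from old to new, relative to the base amount old."""
    if old == 0:
        raise ValueError("percent change is undefined for a base of zero")
    return 100 * (new - old) / old


# Hypothetical counts: a small base and a large base.
small_base = percent_change(17, 23)       # absolute increase of 6
large_base = percent_change(850, 1150)    # absolute increase of 300

print(f"17 -> 23:    +{23 - 17} incidents, {small_base:.1f}% increase")
print(f"850 -> 1150: +{1150 - 850} incidents, {large_base:.1f}% increase")
```

Both changes round to a 35% increase, yet one reflects 6 additional incidents and the other 300. Reporting the underlying counts alongside the percentage removes the ambiguity.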

Have Appropriate Benchmarks Been Provided?

The selective presentation of numerical information is like quoting someone out of context. Is the fact that a particular actively managed fund of large-cap stocks boasted a return of 25% in 2021 indicative of outstanding management? Considering that the 500 large-cap stocks in “the benchmark S&P 500 notched a total

67. See generally Freedman et al., supra note 14; Huff, supra note 14; Moore & Notz, supra note 14; Zeisel, supra note 14.

68. Lyda Longa, Increase in Thefts Alarming, Daytona News-J., June 8, 2008 (reporting a 35% increase in armed robberies in Daytona Beach, Florida, in a 5-month period, but not indicating whether the number had gone up by 6 (from 17 to 23), by 300 (from 850 to 1,150), or by some other amount).