Reference Manual on Scientific Evidence: Fourth Edition (2025)

Chapter: Reference Guide on Toxicology

DAVID L. EATON, BERNARD D. GOLDSTEIN, AND MARY SUE HENIFIN

David L. Eaton, Ph.D., is Professor Emeritus of Environmental and Occupational Health Sciences, and Former Dean and Vice Provost of the Graduate School at the University of Washington.

Bernard D. Goldstein, M.D., is Professor Emeritus of Environmental and Occupational Health, and Former Dean, School of Public Health at the University of Pittsburgh.

Mary Sue Henifin, J.D., M.P.H., is Of Counsel at Buchanan Ingersoll & Rooney, P.C.

CONTENTS

Fundamental Principles of Toxicology

The Use of Toxicological Information in Risk Assessment

The Role of Toxicology in Establishing Causation

Toxicology and Exposure Science

Use of Biomarkers in Toxicology Exposure Assessment

In Vivo Research: Use of Live Animals in Toxicity Testing and Safety Assessment

Determining Dose–Response Relationships

Acute Toxicity Testing and the Lethal Dose 50% (LD50)

No Observed Adverse Effect Level (NOAEL)

Linearized Non-Threshold (LNT) Model and Determination of Cancer Risk

Maximum Tolerated Dose (MTD) and Chronic Toxicity Tests

New Approach Methodologies (NAMs)

Extrapolation from Animal (In Vivo) and Cell (In Vitro) Research to Humans

Toxicological Processes and Target Organ Toxicity

Demonstrating an Association Between Exposure and Risk of Disease

What Is Known About the Chemical Structure of the Compound and Its Relationship to Toxicity?

Is the Association Between Exposure and Disease Biologically Plausible?

Specific Causal Association Between an Individual’s Exposure and Onset of Disease

Were Other Factors Present That Can Affect the Distribution of the Compound Within the Body?

What Is Known About How Metabolism in the Human Body Alters the Toxic Effects of the Compound?

What Excretory Route Does the Compound Take, and How Does This Affect Its Toxicity?

What Is the Likelihood That the Disease Would Have Occurred Without the Specific Exposure?

Are the Complaints Specific or Nonspecific?

Do Laboratory Tests Indicate Exposure to the Compound?

What Other Causes Could Lead to the Given Complaint?

Is There Evidence of Interaction with Other Chemicals?

Has the Expert Considered Data That Contradict Their Opinion?

Are They Board Certified in Toxicology or in a Field Such as Occupational Medicine?

What Other Criteria Does the Proposed Expert Meet?

General References on Toxicology and Chemical Risk Assessment

FIGURES

2. Idealized comparison of a linearized non-threshold and threshold dose–response relationship

TABLE

1. Sample of Selected Toxicological Endpoints and Examples of Agents of Concern in Humans

Introduction

The discipline of toxicology is concerned primarily with identifying and understanding the adverse effects of external chemical and physical agents on biological systems, including the prevention and amelioration of such adverse effects.1 The field of risk assessment, when applied to chemicals, uses the basic principles of toxicology to help make informed decisions about the likelihood that a given proportion (e.g., 1 in 100,000) of individuals in an exposed population will develop the toxic endpoint of concern. Such judgments are an essential component of regulatory toxicology in establishing health protective guidance and regulation for exposures to chemicals in the workplace, and chemical contaminants in air, drinking water, food, cosmetics, and pharmaceuticals.2 In the context of toxic tort litigation, toxicology expert opinion is focused on whether an alleged exposure of an individual, or in some instances a group of individuals, was more likely than not a significant cause of, or contributor to, the specific disease in question. Although it is an age-old science, toxicology has become a discipline distinct from pharmacology, biochemistry, cell biology, and related fields only in the past sixty years.

Fundamental Principles of Toxicology

There are three central tenets of toxicology. First, “the dose makes the poison”: This implies that all chemical agents are intrinsically hazardous; whether they cause harm is only a question of dose.3 Even water, if consumed in large quantities, can be toxic. Second, each chemical or physical agent tends to produce a specific pattern of biological effects that can be used to establish disease causation.4 Third, the toxic responses in laboratory animals are useful predictors of toxic responses in humans, with appropriate qualifications based on an understanding of how the chemical is metabolized in humans and experimental animals, and how the chemical causes its toxicity (so-called mechanism or mode of action). Each of these tenets, and their exceptions, is discussed in greater detail in this reference guide.

1. Casarett & Doull’s Toxicology: The Basic Science of Poisons (Curtis D. Klaassen ed., 9th ed. 2019) [hereinafter Casarett & Doull’s].

2. Elaine M. Faustman, Risk Assessment, in Casarett & Doull’s, supra note 1, at ch. 4.

3. For a discussion of more modern formulations of this principle, which was articulated by Paracelsus in the sixteenth century, see David L. Eaton, Scientific Judgment and Toxic Torts—A Primer in Toxicology for Judges and Lawyers, 12 J.L. & Pol’y 5, 15 (2003); Lauren M. Aleksunes & David L. Eaton, Principles of Toxicology, in Casarett & Doull’s, supra note 1, at ch. 2. A short review of the field of toxicology can be found in Curtis D. Klaassen, Principles of Toxicology and Treatment of Poisoning, in Goodman and Gilman’s The Pharmacological Basis of Therapeutics 1739 (11th ed. 2008).

4. Some substances, such as central nervous system toxicants, can produce complex and nonspecific symptoms, such as headaches, nausea, and fatigue.

The science of toxicology attempts to determine at what doses foreign agents produce their effects. The foreign agents classically of interest to toxicologists are all chemicals (including foods and pharmaceuticals) and physical agents in the form of radiation, but not living organisms that cause infectious diseases.5 However, the poisons that are produced by living organisms (properly called toxins), such as snake venom or botulinum toxin, are generally considered under the framework of toxicology. The discipline of toxicology provides scientific information relevant to the following questions:

- What hazards does a chemical or physical agent present to human populations or the environment (ecotoxicology)?

- What degree of risk is associated with chemical exposure at any given dose?6

This reference guide focuses on toxicology concepts used in regulatory, administrative, and toxic tort cases, with an emphasis on human health effects, rather than ecological effects. A set of model questions for evaluating the admissibility and strength of an expert’s opinion is also included. Following each question is an explanation of the type of toxicological data or information that may be offered in response to the question, as well as a discussion of its significance. The relationship between toxicology, epidemiology, and exposure assessment is also addressed, with cross references to information included in the Reference Guide on Epidemiology and the Reference Guide on Exposure Science and Exposure Assessment.

Safety and Risk Assessment

Toxicological expert opinion also relies on formal safety and risk assessments. Safety assessment is the area of toxicology relating to the testing of chemicals and drugs for toxicity. It is a relatively formal approach in which the potential for toxicity of a chemical is tested in vivo or in vitro using standardized techniques. The protocols for such studies usually are developed through scientific consensus and are subject to oversight by governmental regulators or other watchdog groups.

5. Forensic toxicology, a subset of toxicology generally concerned with criminal matters, is not addressed in this reference guide, because it is a highly specialized field with its own literature and methodologies that do not relate directly to toxic tort or regulatory issues.

6. In standard risk assessment terminology, hazard (the ability of a chemical to cause one or more specific types of toxic effect) is an intrinsic property of a chemical or physical agent, while risk (the likelihood or probability that the effect will occur in an individual or population under a given set of circumstances) depends on both hazard and the extent of exposure (both the concentration or amount and the duration/frequency of exposure).

After a number of bad experiences, including outright fraud, government agencies have imposed codes on laboratories involved in safety assessment, including industrial, contract, and in-house laboratories.7 Known as good laboratory practices (GLPs), these codes govern many aspects of laboratory standards, including such details as the number of animals per cage, dose and chemical verification, and the handling of tissue specimens. GLPs are remarkably similar across agencies, but the tests called for differ depending on the mission. For example, there are major differences between the Food and Drug Administration’s (FDA) and the Environmental Protection Agency’s (EPA) required procedures for testing drugs and environmental chemicals.8 The FDA requires and specifies both efficacy and safety testing of drugs in humans and animals. Carefully controlled clinical trials using doses within the expected therapeutic range are required for premarket testing of drugs, because exposures to prescription drugs are carefully controlled and should not exceed specified ranges or uses.

Although new chemicals manufactured for use as food additives, pharmaceuticals, pesticides, and cosmetics have required substantial toxicity testing prior to regulatory approval since at least the 1950s, the vast majority of industrial chemicals had little, if any, premarket toxicity testing until the mid-1970s. In 1976, Congress enacted the Toxic Substances Control Act (TSCA), which required manufacturers of new chemical entities (chemicals not on an established list of the then approximately 60,000 chemicals in commerce) to submit premarket notifications for EPA evaluation and approval prior to the manufacture of significant quantities of the chemical. Although well intended, the legislation “lacked teeth” and over the years was widely viewed as ineffectual. In 2016, the TSCA was extensively revised under the Frank R. Lautenberg Chemical Safety for the 21st Century Act.9 This revised TSCA legislation (the Lautenberg Act) substantially increases the expectations for toxicity evaluation for existing chemicals, with a focus on those that the EPA believes pose the highest risk to human health and the environment, and gives the EPA more oversight prior to approval for the manufacturing of new chemicals. The EPA now provides extensive guidance to industry on the types of information and data required under the TSCA Premanufacture Notification guidelines.10 While the law and regulatory guidance often do not explicitly specify which of the numerous available toxicity testing procedures must be performed, they usually do provide a framework. Such frameworks, which may vary between different regulations, enable both industry and the EPA to assess the potential harm a new chemical may pose to people or the environment.11 Much of the evaluation relies upon chemical structure and comparison with other similar chemicals for which substantial toxicological and environmental data may be available.

7. A dramatic case of fraud involving a pharmaceutical company reporting toxicology research to support the safety of a consumer product is described in Schueneman v. Arena Pharmaceuticals, Inc., 840 F.3d 698 (9th Cir. 2016). The defendants made positive public statements to investors about the safety of their weight loss drug, specifically indicating that the drug was not carcinogenic and referring to supporting “animal studies.” Id. at 700. However, it was discovered that the defendants were conducting a study in which the drug was given to lab rats, and the drug was in fact causing cancer in the rats. Id. at 701. The Ninth Circuit found that the plaintiff, one of the defendant’s investors, successfully showed that the defendants intentionally and deliberately did not disclose the negative findings of the rat study to investors. Id. at 707–08.

8. Comparison of FDA, EPA, OECD GLP (Apr. 7, 2015), https://www.fda.gov/inspections-compliance-enforcement-and-criminal-investigations/fda-bioresearch-monitoring-information/articles.

9. See Frank R. Lautenberg Chemical Safety for the 21st Century Act, Pub. L. 114-182, 130 Stat. 448 (2016), https://perma.cc/B4T2-9PV7.

In a similar manner, the European Union Regulation on Registration, Evaluation, Authorization and Restriction of Chemicals (REACH) requires extensive testing of both new chemicals and chemicals already in commerce.12 Moreover, because exposures are less predictable, doses usually are given in a wider range in animal tests for nonpharmaceutical agents.13

10. See EPA, Points to Consider When Preparing TSCA New Chemical Notifications (June 2018), https://perma.cc/H9G5-7SAT.

11. The 2016 revisions of the TSCA set forth five possible EPA determinations on new chemical notices:

- The chemical or significant new use presents an unreasonable risk of injury to health or the environment;

- Available information is insufficient to allow the EPA to make a reasoned evaluation of the health and environmental effects associated with the chemical or significant new use;

- In the absence of sufficient information, the chemical or significant new use may present an unreasonable risk of injury to health or the environment;

- The chemical is or will be produced in substantial quantities and either enters or may enter the environment in substantial quantities or there is or may be significant or substantial exposure to the chemical; or

- The chemical or significant new use is not likely to present an unreasonable risk of injury to health or the environment.14

The lack of toxicity data for new chemicals (or new uses of existing chemicals) introduced into commerce has led the EPA to propose methods of evaluation using (1) in vitro toxicity pathway testing, (2) computational toxicology approaches using structural analogs for which toxicity information is available, and (3) whole-animal testing where warranted. See EPA’s Review Process for New Chemicals, https://perma.cc/U65D-WANT.

12. REACH is a regulation of the European Union, adopted in 2007 to “improve the protection of human health and the environment from the risks that can be posed by chemicals, while enhancing the competitiveness of the EU chemicals industry. It also promotes alternative methods for the hazard assessment of substances in order to reduce the number of tests on animals.” See European Chemicals Agency, Understanding REACH, https://perma.cc/AC3M-UTYF.

The legislation was updated in 2022. See European Chemicals Agency, Upcoming Changes to REACH Information Requirements, https://perma.cc/A6WV-L4GZ.

A major impetus for both REACH and the Lautenberg Act was the recognition that less than 1% of the 60,000 to 75,000 chemicals in commerce had been subjected to a full safety assessment, and there were significant toxicological data on only 10% to 20% of them. Under the current U.S. and international approaches to testing chemicals with high production volume, the extent of toxicological information is expanding rapidly.15

Risk assessment is an approach increasingly used by regulatory agencies to estimate and compare the risks of hazardous chemicals and to assign priority for avoiding their adverse effects. The National Academy of Sciences defines four components of risk assessment: hazard identification, dose–response estimation, exposure assessment, and risk characterization.16

13. The development of a new drug inherently requires searching for an agent that at useful doses has a biological effect (e.g., decreasing blood pressure), whereas those developing a new chemical for consumer use (e.g., a house paint) hope that at usual doses no biological effects will occur. There are other compounds, such as pesticides and antibacterial agents, for which a biological effect is desired, but it is intended that at usual doses humans will not be affected. These different expectations are part of the rationale for the differences in testing information available for assessing toxicological effects. Under FDA rules, approval of a new drug usually will require extensive animal and human testing, including a randomized double-blind clinical trial for efficacy and toxicity. In contrast, under the TSCA, the only requirement before a new chemical can be marketed is that a premanufacturing notice be filed with the EPA, including any toxicity data in the company’s possession.

14. See EPA, Regulatory Determinations made under Section 5 of the Toxic Substances Control Act (TSCA), https://perma.cc/6FNB-R74U.

15. For a comparison of European REACH legislation and the U.S. EPA’s 2016 TSCA provisions, see Ágnes Botos, John D. Graham & Zoltán Illés, Industrial Chemical Regulation in the European Union and the United States: a Comparison of REACH and the Amended TSCA, 22 J. Risk Rsch. 1187, https://doi.org/10.1080/13669877.2018.1454495. For an overview of current trends in toxicity testing, see Ida Fischer, Catherine Milton & Heather Wallace, Toxicity Testing Is Evolving!, 9 Toxicology Rsch. 67 (2020), https://doi.org/10.1093/toxres/tfaa011; see also Daniel Krewski et al., Toxicity Testing in the 21st Century: Progress in the Past Decade and Future Perspectives, 94 Archives Toxicology 1 (2020), https://doi.org/10.1007/s00204-019-02613-4.

16. Nat’l Rsch. Council, Science and Decisions: Advancing Risk Assessment (2009). See also In re Johnson & Johnson Talcum Powder Prods. Mktg., Sales Pracs. & Prods. Litig., 509 F. Supp. 3d 116 (D.N.J. 2020) (expert opinion that talcum powder may cause ovarian cancer based on risk assessment and analysis of the Bradford Hill considerations is admissible). Recently, a National Academy of Sciences panel has discussed potential approaches using systematic review to improve the EPA’s risk paradigm. See Nat’l Acads. of Scis., Eng’g & Med., The Use of Systematic Review in EPA’s Toxic Substances Control Act Risk Evaluations (2021).

The Use of Toxicological Information in Risk Assessment

Risk assessment, as practiced by government agencies involved in regulating exposure to environmental chemicals, is highly dependent upon the science of toxicology and on the information derived from toxicological studies. The EPA, FDA, Occupational Safety and Health Administration (OSHA), Consumer Product Safety Commission, and other international (e.g., the World Trade Organization), national, and state agencies use risk assessment as a means to protect workers or the public from adverse effects.17 Acceptable risk levels (for example, 1 in 1,000 to 1 in 1,000,000) are usually well below what can be measured through epidemiological study. Inevitably, this means that risk assessment is usually based solely on toxicological data or, if epidemiological findings of an adverse effect are observed, that toxicological reasoning must be used to extrapolate to the appropriate lower exposure or dose standard aimed at protecting the public.
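The reason that risks in this range usually cannot be measured epidemiologically can be illustrated with simple arithmetic. The following is a minimal sketch in Python, not a method taken from this reference guide: the cohort size of 100,000 people and the function name are hypothetical, chosen only for illustration.

```python
def expected_excess_cases(lifetime_risk: float, cohort_size: int) -> float:
    """Expected number of excess cases = individual risk x number of people exposed."""
    return lifetime_risk * cohort_size


# Hypothetical study cohort size, chosen only for illustration.
COHORT_SIZE = 100_000

# Risk levels spanning the range mentioned in the text.
RISK_LEVELS = [
    ("1 in 1,000", 1e-3),
    ("1 in 100,000", 1e-5),
    ("1 in 1,000,000", 1e-6),
]

for label, risk in RISK_LEVELS:
    cases = expected_excess_cases(risk, COHORT_SIZE)
    print(f"{label}: about {cases:g} expected excess cases among {COHORT_SIZE:,} people")
```

Under these assumed numbers, a risk of 1 in 1,000,000 corresponds to roughly one-tenth of one expected excess case, which a study of that size could not distinguish from the background rate of disease.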

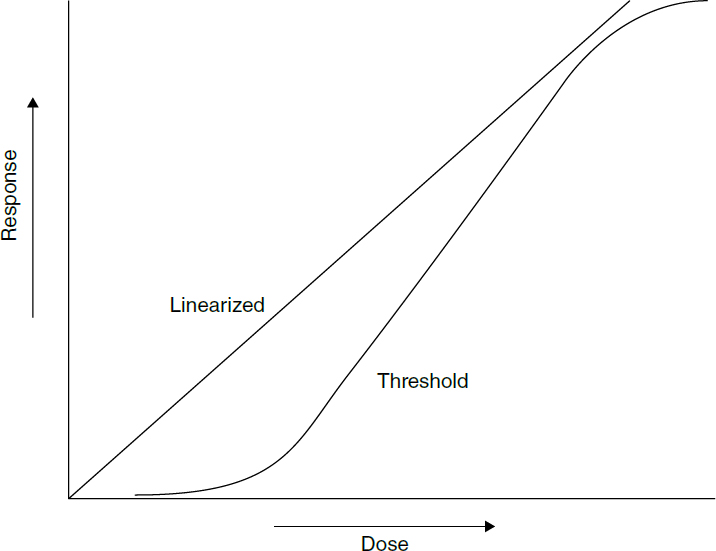

The four-part paradigm of risk assessment is heavily based on toxicological precepts. The first step, hazard identification, which is roughly equivalent to the legal term general causation, reflects the toxicological rule of specificity of effects. The hazard identification process often uses weight-of-evidence approaches in which the toxicological, mechanistic, and epidemiological data are rigorously assessed to form a judgment regarding the likelihood that the agent produces a specific effect. The second step, dose–response assessment, is based upon “the dose makes the poison” and is fundamental to specific causation. Establishing the appropriate dose–response curve, whether a threshold or a “one-hit” model, is an exercise in toxicological reasoning. Even for those chemicals known to be carcinogens, a threshold model is appropriate if the toxicological mechanism of action can be demonstrated to depend upon a threshold. The third step, exposure assessment, requires knowledge of specific toxicological dynamics; for example, the impact on the lung of an air pollutant varies by factors such as: inhalation rate per unit body mass, which is affected by exercise and by age; the size of a particle or the solubility of a gas, either of which will affect the depth of penetration into the more sensitive parts of the airways; the competence of the usual airway defense mechanisms, such as mucus flow and macrophage function; and the ability of the lung to metabolize the agent.18 The fourth and final step is the characterization of risk.19
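The contrast between a threshold model and a non-threshold (“one-hit”) model can be sketched numerically. The example below is a minimal illustration only. The potency, threshold dose, and slope values are hypothetical, and the functional forms (a one-hit curve, P(d) = 1 − exp(−q·d), and a simple “hockey-stick” threshold curve) are common textbook simplifications rather than models prescribed by this guide or by any agency.

```python
import math


def one_hit_risk(dose: float, potency: float) -> float:
    """Non-threshold 'one-hit' model: any dose above zero carries some added risk."""
    return 1.0 - math.exp(-potency * dose)


def threshold_risk(dose: float, threshold: float, slope: float) -> float:
    """Threshold model: no added risk at or below the threshold, then a linear rise capped at 1."""
    if dose <= threshold:
        return 0.0
    return min(1.0, slope * (dose - threshold))


# Hypothetical parameters, for illustration only.
POTENCY = 0.01    # added risk per unit dose at low doses (one-hit model)
THRESHOLD = 5.0   # dose below which the threshold model predicts no effect
SLOPE = 0.02      # added risk per unit dose above the threshold

for dose in (0.0, 1.0, 5.0, 10.0, 50.0):
    print(
        f"dose={dose:5.1f}  "
        f"one-hit={one_hit_risk(dose, POTENCY):.4f}  "
        f"threshold={threshold_risk(dose, THRESHOLD, SLOPE):.4f}"
    )
```

At low doses the one-hit curve is approximately linear in dose (risk ≈ potency × dose), so it predicts a small but nonzero risk wherever the threshold model predicts none. Which shape is appropriate for a given chemical is the question of toxicological mechanism discussed in the paragraph above.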

Risk assessment is not an exact science. It should be viewed as a useful framework to organize and synthesize information and to provide estimates on which policy making can be based. In recent years, codification of the methodology used to assess risk has increased confidence that the process can be reasonably free of bias; however, significant controversy remains, particularly when actual data are limited and generally conservative default assumptions are used.

17. Pharmaceuticals intended for human use are an exception in that a tradeoff between desired and adverse effects may be acceptable, and human data are available prior to, and as a result of, the marketing of the agent.

18. Some toxic agents pass through the lung without producing any direct effects on this organ. For example, inhaled carbon monoxide produces its toxicity in essence by being treated by the body as if it is oxygen. Carbon monoxide readily combines with the oxygen-combining site of hemoglobin, the molecule in red blood cells that is responsible for transporting oxygen from the lung to the tissues. In this way, the effective transport and tissue utilization of oxygen is blocked.

19. See, e.g., Nat. Res. Defense Council v. U.S. EPA, 38 F.4th 34 (9th Cir. 2022) (according to the EPA’s Office of Research and Development, the EPA did not follow its 2005 Guidelines for Carcinogen Risk Assessment, which lay out four steps for conducting risk assessments of chemicals’ carcinogenic potential).

Although risk assessment information about a chemical, and the research data on which it is based, may be useful in setting reasonable boundaries regarding the likelihood of causation, the impetus for the development of risk assessment has been the regulatory process, which has different goals.20 Because of their use of appropriately prudent assumptions in areas of uncertainty and their use of default assumptions when there are limited data, as well as legal requirements related to public health considerations, risk assessments often intentionally encompass the upper range of possible risks.21 An additional issue, particularly related to cancer risk, is that standards based on risk assessment often are set to avoid the risk caused by lifetime exposure at this upper-range estimate of risk. Exposure to levels exceeding this standard for a small fraction of a lifetime does not mean that the overall lifetime risk of regulatory concern has been exceeded.22 Given these issues, courts have taken different approaches to how information derived from risk assessments may be relied upon by experts.23

20. See Nat’l Rsch. Council & Comm. on Improving Risk Analysis Approaches Used by the U.S. EPA, Toward a Unified Approach to Dose–Response Assessment, in Science and Decisions: Advancing Risk Assessment 127 (2009), https://perma.cc/47RG-NKMG. See also Yates v. Ford Motor Co., No. 5:12-CV-752-FL, 2015 WL 2189774, at *17, *27 (E.D.N.C. May 11, 2015) (holding that an EPA pamphlet that includes regulatory risk assessment can be relied on by the plaintiff’s experts only to “support the reliability of studies the experts themselves rely upon”).

21. It is also claimed that standard risk assessment will underestimate true risks, particularly for sensitive populations exposed to multiple stressors, an issue of particular pertinence to discussions of environmental justice. Comm. on Env’t Just., Institute of Med., Toward Environmental Justice: Research, Education, and Health Policy Needs (1999), https://doi.org/10.17226/6034. In 2003, the EPA developed formal guidance for cumulative risk assessment, wherein it defined aggregate exposure as the “combined exposure of an individual (or defined population) to a specific agent or stressor via relevant routes, pathways, and sources.” EPA, Framework for Cumulative Risk Assessment (2003), https://perma.cc/D7CT-Z2RT. In 2022, the EPA released a draft update to this document, with an initial emphasis on identifying research needs. See EPA, Cumulative Impacts: Recommendations for ORD Research (2022), https://perma.cc/B6SL-EHUT.

22. A public health standard to protect against the lifetime risk of inhaling a known carcinogen will usually be based on lifetime exposure calculations of twenty-four hours a day, every day for seventy years. This is more than 25,000 days and 600,000 hours. Exceeding this standard for a few hours would presumably have little impact on cancer risk. In contrast, for a short-term standard set to avoid a threshold-based risk, exceeding the standard for this short time may make a major difference—for example, an asthma attack caused by being outdoors on a day that the ozone standard is exceeded. Regulatory statutes sometimes limit consideration of multiple pathways of exposure to the same chemical, potentially underestimating overall exposure and risk. See, e.g., Swati D. G. Rayasam et al., Toxic Substances Control Act (TSCA) Implementation: How the Amended Law Has Failed to Protect Vulnerable Populations from Toxic Chemicals in the United States, 56 Env’t Sci. & Tech. 11969 (2022), https://doi.org/10.1021/acs.est.2c02079. However, the interpretation of how such evaluations under the revised TSCA law are done by the EPA has been criticized. See Anthony C. Tweedale, Correspondence on “Toxic Substances Control Act (TSCA) Implementation: How the Amended Law has Failed to Protect Vulnerable Populations from Toxic Chemicals in the United States,” 56 Env’t Sci. & Tech. 16533 (2022), https://doi.org/10.1021/acs.est.2c06032.
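The arithmetic behind the lifetime exposure figures in note 22 can be checked directly. This is a minimal sketch in Python that assumes 365-day years (leap days ignored). The helper name and the eight-hour example are hypothetical and are not drawn from any regulatory standard.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365   # assumption: leap days ignored for simplicity
LIFETIME_YEARS = 70   # lifetime exposure period stated in the note

lifetime_days = LIFETIME_YEARS * DAYS_PER_YEAR
lifetime_hours = lifetime_days * HOURS_PER_DAY


def fraction_of_lifetime(hours_exposed: float) -> float:
    """Share of the assumed 70-year exposure period represented by a short exposure."""
    return hours_exposed / lifetime_hours


print(f"Lifetime days:  {lifetime_days:,}")
print(f"Lifetime hours: {lifetime_hours:,}")
# Hypothetical short-term exceedance of eight hours.
print(f"Eight hours as a share of a lifetime: {fraction_of_lifetime(8):.6%}")
```

The totals are consistent with the note's "more than 25,000 days and 600,000 hours," and an exceedance lasting a few hours amounts to on the order of a thousandth of one percent of the assumed lifetime exposure period.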

23. See, e.g., Hardeman v. Monsanto Co., 997 F.3d 941 (9th Cir. 2021) (discussion of appropriate use in an expert opinion of the IARC’s classification of glyphosate as a “probable carcinogen”).

Toxicology and the Law

Toxicological studies, by themselves, rarely offer direct evidence that a disease in any one individual was caused by a chemical exposure.24 However, toxicology can provide scientific information regarding the increased risk of contracting a disease at any given exposure or dose and help rule out other risk factors for the disease (see section titled “Toxicology and Epidemiology” below for discussion on disease causation). Toxicological evidence also contributes to the weight of evidence supporting causal inferences by explaining how a chemical causes a specific disease through describing metabolic, cellular, and other physiological effects of exposure. For certain chemicals that create sufficiently long-lasting changes to molecular structures suitable for testing, toxicology evidence may be offered to establish chemical-specific “fingerprints” that can strengthen the causal inference between a specific chemical exposure and the presence of a specific disease.

The interface of toxicological science with legal issues can be complex, in part reflecting the inherent challenges of bringing science into a courtroom, but also because of issues pertinent to toxicology.

Complexity in toxicology is derived primarily from three factors. The first is that chemicals often change within the body as they go through various routes to eventual elimination.25 Thus absorption, distribution, metabolism, and excretion are central to understanding the toxicology of an agent.

The second is that human sensitivity to chemical and physical agents can vary greatly among individuals, often as a result of differences in absorption, distribution, metabolism, or excretion. Although toxic responses from a given chemical sometimes occur in multiple different organs in the body (e.g., liver, kidney, brain), toxic effects often occur selectively in specific tissues, which are then referred to as target organs. Both the disposition of the chemical (e.g., metabolism and excretion) and target organ sensitivity can be affected by a combination of genetic and environmental factors, including factors as mundane as drinking certain fruit juices.26

24. There are exceptions—for example, when measurements of levels in the blood or other body constituents of the potentially offending agent are at a high enough level to be consistent with reasonably specific health impacts, such as in carbon monoxide poisoning and lead poisoning.

25. Direct-acting toxic agents are those whose toxicity is due to the parent chemical entering the body. A change in chemical structure through metabolism usually results in detoxification. Indirect-acting chemicals are those that must first be metabolized to a harmful intermediate for toxicity to occur. For an overview of metabolism in toxicology, see David L. Eaton & Julia Cui, Biotransformation, in Patty’s Toxicology (James Klaunig ed., 7th ed. 2023), https://doi.org/10.1002/0471125474.tox122.

26. Grapefruit juices and certain other fruit juices at sufficient dose have the potential to inhibit the metabolism of a wide variety of drugs and chemicals. See Michael J. Hanley et al., The Effect of Grapefruit Juice on Drug Disposition, 7 Expert Op. Drug Metabolism & Toxicology 267 (2011), https://doi.org/10.1517/17425255.2011.553189; Meng Chen et al., Food-Drug Interactions

The third major source of complexity is the need for extrapolation—either across species, because many toxicological data are obtained from studies in laboratory animals, or across doses, because human toxicological and epidemiological data often are limited to specific exposure or dose ranges that may differ from the ranges of interest. All three of these factors are responsible for much of the complexity in utilizing toxicology for tort or regulatory judicial decisions and are described in more detail below.

The science of toxicology is useful in establishing general causation in toxic tort litigation through demonstrating that specific chemicals are capable of causing specific effects (e.g., specific types of cancers, birth defects, poisoning) and identifying when such effects may be manifested, either acutely or over time. Identifying such qualitative characteristics of a chemical is referred to as hazard evaluation and is often based on results of experimental animal studies. To determine the likelihood that a specific chemical caused a specific toxic effect in an individual person (specific causation in tort litigation) or a population (regulatory/population health guidance) requires careful consideration of the exposure characteristics (how much, when, and how often) that are central to determining the dose–response for the specific toxic endpoint in the individual or population. Such decisions are complex and may also rely on human epidemiological data where they exist.

Toxicology and Epidemiology

From the beginning of human history, “Why me?” has probably been the most frequently asked question about disease. Epidemiology is the scientific discipline most closely involved in providing evidence to answer this question. Epidemiology is the study of the incidence and distribution of disease in human populations. Clearly, both epidemiology and toxicology have much to offer in elucidating the causal relationship between chemical exposure and disease.27 These sciences often go hand in hand with assessments of the risks of chemical exposure, without artificial distinctions being drawn between them. However, although courts generally rule epidemiological expert opinion admissible, the admissibility of toxicological expert opinion has been more controversial because of uncertainties regarding extrapolation from animal and in vitro data to humans. This has been particularly true in cases in which relevant epidemiological research data exist. However, the methodological weaknesses of some epidemiological studies, including their inability to accurately measure exposure, the often small numbers of subjects, and issues such as recall bias when individuals with specific diseases are asked to recall their past exposure to chemicals in comparison with a control population, often render these studies difficult to interpret. As detailed in the Reference Guide on Epidemiology, an epidemiological study by itself can only show an association, which may or may not be causal.

Precipitated by Fruit Juices Other than Grapefruit Juice: An Update Review, 26 J. Food & Drug Analysis S61 (2018), https://doi.org/10.1016/j.jfda.2018.01.009.

27. See Steve C. Gold et al., Reference Guide on Epidemiology, section titled “General Causation,” in this manual. See also Nat’l Acads. Sci., Eng’g & Med., Assessing Causality from a Multidisciplinary Evidence Base for National Ambient Air Quality Standards (2022), https://perma.cc/6L9G-NBCN (discussing the strengths and limitations of epidemiological studies in establishing “causation” between exposure to a hazardous chemical (in this case, various air pollutants) and the development of specific diseases). For example, in In re Nexium Esomeprazole, 662 F. App’x 528 (9th Cir. 2016), testimony on general causation was excluded as unreliable where the expert did not adequately explain how he inferred a causal relationship from epidemiological studies that did not come to the same conclusions. However, epidemiological studies are not always necessary. McMunn v. Babcock & Wilcox Power Generation Grp., Inc., No. 2:10CV143, 2014 WL 814878, at *15 (W.D. Pa. Feb. 27, 2014) (citing Glastetter v. Novartis Pharms. Corp., 252 F.3d 986, 992 (8th Cir. 2001); Rider v. Sandoz Pharms. Corp., 295 F.3d 1194, 1198 (11th Cir. 2002)).

The Role of Toxicology in Establishing Causation

The best-known guidelines for considering whether a specific cause produces a specific effect are the Bradford Hill considerations.28 Austin Bradford Hill was a British epidemiologist who contributed greatly to studies linking cigarette smoking to lung cancer. As discussed in detail in the Reference Guide on Epidemiology, Hill described nine points of consideration to help determine whether an association seen in an epidemiology study is causal. A modified list of the Bradford Hill considerations that inform epidemiologists in making judgments about causation includes:

- Temporal relationship

- Strength of the association

- Dose–response relationship

- Replication of the findings

- Biological plausibility

- Consideration of alternative explanations

- Cessation of exposure

- Specificity of the association

- Consistency with other knowledge

28. Austin Bradford Hill, The Environment and Disease: Association or Causation?, 108 J. Royal Soc’y Med. 32 (2015) (reprint of 1965 article), https://doi.org/10.1177/0141076814562718. For a discussion of the Bradford Hill considerations, see Steve C. Gold et al., Reference Guide on Epidemiology, section titled “General Causation,” in this manual. Although the points raised by Bradford Hill in his 1965 article are often referred to as “criteria,” the author made it clear that none were absolutes. As discussed in the Reference Guide on Epidemiology in this manual, the term “consideration,” rather than “criteria” or even “guidelines,” is perhaps more appropriate to represent these ideas.

Toxicological evidence can provide insights into almost all of these considerations:

- Temporal relationship is perhaps obvious and refers to a fundamental concept that, in order for a toxic substance to have caused an adverse effect in an individual (or population of individuals), the exposure must have occurred before the onset of symptoms of the disease. However, the temporality consideration would not exclude the potential for a chemical to enhance or aggravate a preexisting disease. Toxicology often considers the potential interaction of two chemicals, referred to as potentiation, where chemical A does not by itself cause a specific adverse response or disease, but when combined with exposure to chemical B, which has been established to cause the adverse response or disease in question, the magnitude of response to chemical B is much greater in the presence of chemical A. A classic example of potentiation would be someone who takes a statin drug to lower cholesterol, with no evident adverse effects, but, upon regular consumption of large amounts of grapefruit juice, develops muscle weakness and spasms (rhabdomyolysis), a symptom of statin toxicity. In this example, the causal agent is actually the statin drug, but effects occur only after consuming large amounts of grapefruit juice.

- Strength of association refers to the overall magnitude of effect and is largely pertinent to epidemiology studies.

- Dose–response is a fundamental tenet of toxicology and is highly relevant to both toxicological and epidemiological studies. This is discussed in greater detail in other parts of this reference guide.

- Replication of the findings is also relevant to both epidemiology and toxicology. For toxicology, this is most important in demonstrating another Bradford Hill consideration, biological plausibility, whereby several independent studies under different conditions demonstrate that chemical A reproducibly causes effect B. This reproducibility could be in animal studies, in vitro studies with human tissues, or both.

- Biological plausibility is, of all of the Bradford Hill considerations, perhaps the most relevant to toxicology. As discussed in detail later in this reference guide, understanding the cellular, biochemical, and molecular processes (mechanisms and mode of action) by which a toxic substance causes an adverse effect is a critical element that can support a causal association in epidemiology studies, largely by demonstrating that adverse effects seen in experimental animals are relevant to humans because they share common mechanisms/modes of action, supporting biological plausibility. Because of ethical considerations, with the exception of pharmaceutical agents, controlled in vivo studies are largely limited to experimental animals, which is the domain of toxicology.

- Consideration of alternative explanations is largely confined to epidemiology, although the mechanisms/modes of action of a toxic substance determined in experimental animals (the domain of toxicology) may be informative. For example, in a workplace where exposure to multiple different substances is associated with an elevated incidence of disease, determining which, if any, of the substances has been shown through toxicological studies to cause the disease in question may support an alternative explanation.

- Cessation of exposure can also be supported by animal studies in which the toxic response or disease in question is reduced or eliminated following cessation of exposure. This would be similarly informative and provide biological plausibility for such observations seen in epidemiology studies.

- Specificity of the association is also relevant to toxicology, where a specific substance can be shown to cause an unusual or uncommon effect in animals. Toxicological studies on mechanism or mode of action may demonstrate a specific or even unique development of a particular disease or symptom in experimental animals and/or human tissues in vitro, lending biological plausibility to the specificities of association observed in epidemiology studies.

- Consistency with other knowledge also fits equally well in toxicology studies, again focused on a scientific understanding of how the toxic substance in question causes a specific adverse outcome or disease.

Thus, toxicology may contribute to almost all of the Bradford Hill considerations, particularly consistency with other knowledge and biological plausibility. Judging whether an association is causal depends in part on whether there is a likely biological pathway between the exposure and the effect, for which the evidence is often based on animal toxicology studies. However, Hill argued that biological plausibility cannot be demanded, because it depends upon the biological knowledge of the day. The importance of biological plausibility to setting environmental regulation is evident in that it is now being routinely included as a chapter or sub-chapter in EPA analyses on which air pollution and other standards are based.

In recent decades, medical science has been challenged by the need to derive actionable information from the medical literature—a breadth of literature that has increased both in size and in the diversity of medical specialties and subspecialties that are being applied to solve problems related to diagnosis and treatment of disease. This has led to the development of formal systematic approaches to gathering and evaluating the pertinent epidemiological literature. The National Institutes of Health (NIH) held consensus-developing conferences from 1977 to 2013 aimed at controversial topics in clinical decision-making, which included toxicological data where relevant. These were discontinued as no longer necessary because many other organizations had developed similar approaches.29

A more recent example of a systematic review process for evaluating the medical science literature is the Cochrane review.30 Cochrane reviews analyze the existing literature systematically and focus almost exclusively on epidemiological findings, primarily related to diagnosis and treatment of disease. In part building on the Cochrane review model, systematic reviews have been suggested for toxicology31 and for public health.32

In contrast to epidemiology, because animal and cell studies permit researchers to isolate the effects of exposure to a single chemical or to mixtures, toxicological findings offer unique information concerning dose–response relationships, mechanisms of action, specificity of response, and other information relevant to the assessment of causation—although these can present issues related to extrapolation to humans.33

The gold standard in clinical epidemiology and in the testing of pharmaceutical agents is the randomized, double-blind, placebo-controlled study, in which the control and intervention groups are usually well matched. Although appropriate and very informative for the testing of pharmaceutical agents, such a study is generally unethical for chemicals used for other purposes. The randomized controlled design is in essence what is used in a classic toxicological study in laboratory animals, although matching is more readily achieved because the animals are bred to be genetically similar and have identical environmental histories.

29. For a review of consensus approaches in general and the importance of unbiased selection of members of review committees that reflect the broad disciplines involved, see Bernard D. Goldstein, What the Trump Administration Taught Us About the Vulnerabilities of EPA’s Science-Based Regulatory Processes: Changing the Consensus Processes of Science into the Confrontational Processes of Law, 31 Health Matrix 299 (2021), https://perma.cc/YJ3H-TPPR. For a discussion of general issues regarding medical practice and testimony, see John B. Wong et al., Reference Guide on Medical Testimony, in this manual.

30. Cochrane Library, About Cochrane Reviews, https://perma.cc/UXR9-4HPL.

31. Lena Smirnova et al., 3S—Systematic, Systemic, and Systems Biology and Toxicology, 35 ALTEX 139 (2018), https://doi.org/10.14573/altex.1804051. See also Thomas Hartung et al., Systems Toxicology II: A Special Issue, 30 Chem. Rsch. Toxicology 869 (2017), https://doi.org/10.1021/acs.chemrestox.7b00038.

32. See also Steve C. Gold et al., Reference Guide on Epidemiology, section titled “Methods for Synthesizing or Combining the Results of Multiple Studies,” in this manual.

33. Courts have found animal studies to be relevant evidence of causation where there is a sound basis for extrapolating conclusions from those studies to humans in real-world conditions. See Hardeman v. Monsanto Co., 997 F.3d 941 (9th Cir. 2021); see also Milana v. Eisai, Inc., No. 8:21-cv-831-CEH-AEP, 2022 WL 846933 (M.D. Fla. Mar. 22, 2022) (increase in tumors in mammary tissue of experimental animals compared to higher rates of cancer in patients taking weight loss drug); In re Levaquin Prods. Liab. Litig., No. 08–1943 (JRT), 2010 WL 8400514, at *2–4, *8 (D. Minn. Nov. 8, 2010); Brandeis Univ. v. Keebler Co., No. 1:12–cv–01508, 2013 WL 5911233 (N.D. Ill. Jan. 18, 2013); In re Abilify (Aripiprazole) Prods. Liab. Litig., 299 F. Supp. 3d 1291, 1310 (N.D. Fla. 2018).

Dose issues are at the intersection between toxicology and epidemiology. Many epidemiological studies of the potential risk of chemicals do not have direct information about dose, although qualitative differences among subgroups or in comparison with other studies can be inferred. The epidemiology database includes many studies that are probing for the potential for an association between a cause and an effect. Thus, a study asking all those suffering from a specific disease a multiplicity of questions related to potential exposures is bound to find some statistical association between the disease and one or more exposure conditions due to chance alone. Such studies generate hypotheses that can then be evaluated by subsequent studies that more narrowly focus on the potential cause-and-effect relationship. One way to evaluate the strength of the association is to assess whether those epidemiological studies evaluating cohorts with relatively high exposure support the association.34
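
The role of chance in such exploratory studies can be illustrated with a short calculation. The sketch below is only an illustration under assumptions that are not in the text: each exposure question is treated as an independent statistical test with a conventional 5% false-positive threshold, and no true association exists. The function name is hypothetical.

```python
def prob_at_least_one_chance_association(num_questions: int, alpha: float = 0.05) -> float:
    """Probability that at least one of several independent tests appears
    'statistically significant' by chance alone when no true effect exists.

    Assumptions (illustrative only): the tests are independent and each
    has false-positive probability alpha.
    """
    if num_questions < 0:
        raise ValueError("num_questions must be non-negative")
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    # Chance that every test avoids a false positive is (1 - alpha) ** k.
    return 1.0 - (1.0 - alpha) ** num_questions


if __name__ == "__main__":
    for k in (1, 10, 20, 50):
        p = prob_at_least_one_chance_association(k)
        print(f"{k:>3} exposure questions: {p:.0%} chance of at least one spurious association")
```

Under these assumptions, ten questions already give roughly a 40% chance of at least one spurious association, which is why such findings are usually treated as hypotheses for more narrowly focused studies.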

The requirement in certain jurisdictions for epidemiological evidence of a relative risk greater than two (RR > 2) for general causation also has limited the utilization of toxicological evidence.35 A firm legal requirement for such evidence means that if the epidemiological database only showed statistically significant evidence that cohorts exposed to ten parts per million of an agent for twenty years produced an 80% increase in risk, the court could not hear the case of a plaintiff alleging that exposure to fifty parts per million for twenty years of the same agent caused the adverse outcome. Yet to a toxicologist, there would be little question that exposure to the fivefold-higher dose of an agent producing an 80% increase in risk would lead to more than a 100% increase in risk, i.e., more than doubling of the risk.
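
The toxicologist's reasoning in this hypothetical can be made concrete with a small calculation. The sketch below assumes, purely for illustration, that excess relative risk rises in direct proportion to dose at a fixed exposure duration. The text itself claims only that the higher dose would more than double the risk, and real dose–response curves may be nonlinear. The function names are hypothetical.

```python
def extrapolated_relative_risk(observed_rr: float, observed_dose: float, target_dose: float) -> float:
    """Scale the excess relative risk (RR - 1) linearly with dose.

    Assumption (illustrative only): excess risk is proportional to dose
    for the same exposure duration.
    """
    if observed_dose <= 0 or target_dose < 0:
        raise ValueError("doses must be positive (target may be zero)")
    excess = observed_rr - 1.0
    return 1.0 + excess * (target_dose / observed_dose)


def dose_for_doubling(observed_rr: float, observed_dose: float) -> float:
    """Dose at which RR reaches 2 under the same linear assumption."""
    excess = observed_rr - 1.0
    if excess <= 0:
        raise ValueError("observed_rr must exceed 1 for a doubling dose to exist")
    return observed_dose / excess


if __name__ == "__main__":
    # Figures from the hypothetical: 80% increase in risk (RR = 1.8) at 10 ppm.
    print(extrapolated_relative_risk(1.8, 10.0, 50.0))
    print(dose_for_doubling(1.8, 10.0))
```

With the figures from the hypothetical (an 80% increase at ten parts per million), this linear sketch puts the relative risk at fifty parts per million at about 5 and the doubling dose at about 12.5 parts per million.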

34. As an example of considering dose and exposure issues across epidemiological studies, see Luoping Zhang et al., Formaldehyde Exposure and Leukemia: A New Meta-Analysis and Potential Mechanisms, 681 Mutation Rsch. 150 (2008), https://doi.org/10.1016/j.mrrev.2008.07.002. The subject of the strength of an epidemiological association and its relation to causality is considered in Steve C. Gold et al., Reference Guide on Epidemiology, in this manual; see also note 79, infra.

35. The basis for the use of RR > 2 is the translation of the preponderance of evidence rule, or “more likely than not” legal standard, into the epidemiological concept of at least a doubling of risk. An example is the Havner rule in Texas, which for general causation requires that there be reliable epidemiological studies with a statistically significant RR > 2 associating a putative cause with an effect. See Bostic v. Ga. Pac. Corp., 439 S.W.3d 332 (Tex. 2014). But see Hardeman v. Monsanto Co., 997 F.3d 941, 966 (9th Cir. 2021) (“[W]e have never suggested that a hardline increase in a risk statistic, or even an adjusted odds ratio above 2.0, is necessary for finding a strong association. To the contrary, flexibility is warranted considering the contextual nature of the Daubert inquiry.”) (citations omitted). For a discussion of the use by courts in various jurisdictions of relative risk > 2 for general and specific causation, see Russellyn S. Carruth & Bernard D. Goldstein, Relative Risk Greater Than Two in Proof of Causation in Toxic Tort Litigation, 41 Jurimetrics 195 (2001); for the toxicological issues, see Bernard D. Goldstein, Toxic Torts: The Devil Is in the Dose, 16 J.L. & Pol’y 551–85 (2008).

Even though there is little toxicological data on many of the approximately 75,000 compounds in general commerce, there is usually far more information from toxicological studies than from epidemiological studies.36 It is much easier, and usually more economical, to expose an animal to a chemical or to perform in vitro studies than it is to perform epidemiological studies. This difference in data availability is evident even for cancer causation, for which toxicological study is particularly expensive and time consuming. Of the approximately fifty specific chemicals that reputable international authorities agree are known human carcinogens based on positive epidemiological studies, almost all of them have also been shown to cause cancer in laboratory animal studies. Yet there are hundreds of known animal carcinogens for which there is either no valid epidemiological database or for which the epidemiological database has been equivocal.37

To clarify any findings, regulators can require a repeat of an equivocal two-year animal toxicological study or the performance of additional laboratory studies in which animals deliberately are exposed to the chemical. Such deliberate exposure is not possible in humans. As a general rule, unequivocally positive epidemiological studies reflect prior workplace practices that led to relatively high levels of chemical exposure for a limited number of individuals and that, fortunately, in most cases no longer occur. Thus, an additional prospective epidemiological study often is not possible, and even the ability to do retrospective studies is constrained by the passage of time.

36. See, for example, Nat’l Toxicology Prog., 15th Rep. on Carcinogens (2021), https://perma.cc/V4DP-3YNZ, for a discussion of how epidemiology and animal toxicity studies are used to assess the potential cancer risk of different chemicals.

37. See, e.g., Int’l Agency for Rsch. on Cancer, IARC Monographs on the Identification of Carcinogenic Hazards to Humans, https://perma.cc/R56M-EQMM; Nat’l Toxicology Prog., supra note 36; Waite v. AII Acquisition Corp., 194 F. Supp. 3d 1298 (S.D. Fla. 2016) (court remains “unconvinced” that lack of statistically significant epidemiological studies properly overcomes all other types of scientific evidence including animal, cellular, and molecular studies). The absence of epidemiological data is due, in part, to the difficulties in conducting cancer epidemiology studies, including the lack of suitably large groups of individuals exposed for a sufficient period of time, long latency periods between exposure and manifestation of disease, the high variability in the background incidence of many cancers in the general population, and the inability to measure actual exposure levels. See also Parker v. Mobil Oil Corp., 857 N.E.2d 1114 (N.Y. 2006), in which New York’s highest court reviewed an appeal from a summary judgment of a lower court that had dismissed a plaintiff’s case because of the lack of a specific exposure estimate by the plaintiff’s experts. The plaintiff’s experts claimed that unusual work practices during his seventeen years employed at a gasoline station led to higher exposure to benzene, a known cause of acute myelogenous leukemia (AML) from which the patient suffered, than would have occurred in the work practices of a usual gasoline station worker. The higher court found for the plaintiff on the lack of a need for a specific exposure assessment, citing other cases finding that an expert does not need to establish the amount of exposure the plaintiff experienced. Id. at 1121 (citing McClain v. Metabolife Int’l, Inc., 401 F.3d 1233, 1241 n.6 (11th Cir. 2005)). However, the same court ruled for the defense based on the absence of epidemiological evidence that gasoline caused AML, despite the fact that benzene is a component of the gasoline mixture.
Court preference for epidemiology is also evident in the Havner rule (see supra note 35). The requirement for epidemiological evidence of a doubling of risk precludes toxicological reasoning related to dose. For example, suppose there were two epidemiological studies showing a statistically significant 80% increase of an adverse endpoint in large workforces in well-regulated industries that were exposed to ten parts per million (ppm) of a chemical for thirty years. Suppose also that there was a plaintiff with the same endpoint whose experts presented evidence that the plaintiff was exposed to fifty ppm for thirty years to the same chemical. Based on dose considerations, a toxicologist could argue that the plaintiff’s increase in risk would exceed 100%, i.e., that the exposure more than doubled the plaintiff’s risk. But under a strict interpretation of the Havner rule, the plaintiff’s case would be dismissed.

In essence, epidemiological findings consistently demonstrating an adverse effect in humans of a manufactured chemical represent a failure of toxicology as a preventive science. A corollary of the tenet that, depending upon dose, all chemical and physical agents are harmful is that society depends upon toxicological science to discover and anticipate these harmful effects, and we also rely on regulators and other responsible parties to prevent human exposure to a harmful level or to ensure that the agent is not manufactured and distributed. In this regard epidemiology is a valuable backup approach that functions to detect failures of primary prevention. The two disciplines complement each other, particularly when the approaches are iterative.

Toxicologists may use epidemiological findings in their expert opinions concerning general causation and specific causation. This is particularly true for well-studied compounds that have been thoroughly evaluated by respected organizations, such as the International Agency for Research on Cancer (IARC, affiliated with the World Health Organization (WHO)) or the National Toxicology Program (NTP, affiliated with the U.S. Department of Health and Human Services), which include toxicologists and epidemiologists in their review of the evidence. For example, in mortality studies of large cohorts of workers exposed to high levels of a carcinogenic agent in which all types of cancer are reported, but only an increase in brain cancer is noted, the toxicologist may consider this information in determining if a pancreatic cancer was caused by exposure to a lesser dose of the agent. In terms of assessing specific causation for exposure to this known carcinogen, there may be the equivalent of a No Observed Adverse Effect Level (NOAEL; see section titled “Toxicological Study Design” below) in the reviewed and accepted epidemiological data in which an increase in brain cancer risk is seen only in the more highly exposed populations. Whether a toxicologist is qualified to offer an opinion based on both toxicology and epidemiology data depends on the particular background of the proffered expert and the legal standard applied in the jurisdiction.

Toxicology and Exposure Science

In recent decades, exposure assessment has developed into a scientific field (exposure science) with journals, learned societies, and research funding processes.

Exposure science methodologies include mathematical models used to predict exposure resulting from an emission source, which might be a long distance upwind; chemical or physical measurements of media such as air, food, and water; and biological monitoring within humans, including measurements of blood and urine specimens if available, for the chemical of concern and/or its metabolites. Extrapolation back to the level of external exposure depends upon toxicokinetic analyses (see section titled “Safety and Risk Assessment” above). In this continuum of exposure metrics, the closer to the human body, the greater the overlap with toxicology.38 An exposure assessment should also look for competing exposures from other sources of the chemical of concern.

Exposure assessment is central to epidemiology as well and is often the limiting factor in using an epidemiological study to infer causation. Many of the causal associations between chemicals and human disease have been developed from epidemiological studies relating a workplace chemical to an increased risk of the specific disease in cohorts of workers, often with only a qualitative assessment of exposure. More sophisticated modern approaches to chemical exposures at complex workplaces include development of a job exposure matrix based on specific exposure levels throughout the working lifetime. An improved quantitative understanding of such exposures enhances the likelihood of observing causal relations.39 It also can support the opinion of the expert toxicologist on the likelihood that a specific exposure was responsible for an adverse outcome.

38. Toxicologists also have indirect means of approaching exposure through symptoms: for many agents, there is a known threshold for smell and a reasonable range of levels that might cause symptoms. For example, the use of toxicological expertise is appropriate in a situation in which chronic exposure to a volatile hydrocarbon is alleged to have occurred at levels at which acute exposure would be expected to render the individual unconscious. Toxicologists may also contribute knowledge of the extent of individual exposure based upon appropriate assumptions concerning inhalation rate or water use; for example, children inhale more per body mass than do adults, and outdoor workers in hot climates will drink more fluids than those in cool climates. For a discussion of general issues regarding assessment of exposure of toxic substances, see M. Elizabeth Marder & Joseph V. Rodricks, Reference Guide on Exposure Science and Exposure Assessment, in this manual.

39. In terms of general causation, accurate exposure assessment is important because a true effect can be missed owing to confounding caused by cohorts that often include workers with little exposure to the putative offending agent, thereby diluting the actual effect. See Peter F. Infante, Benzene Exposure and Multiple Myeloma: A Detailed Meta-analysis of Benzene Cohort Studies, 1076 Annals N.Y. Acad. Scis. 90 (2006), https://doi.org/10.1196/annals.1371.081, for a discussion of this issue in relation to a meta-analysis of the potential causative role of benzene in multiple myeloma. On the other hand, an association between exposure and effect occurring solely by chance is more likely if the effect does not meet the expected standard of being more pronounced in those receiving the highest dose. See Goldstein, supra note 35. Setting regulatory standards based on the observed effect in a cohort often requires a risk assessment, which in turn is dependent on understanding the extent of the exposure. This has led to extensive retrospective reconstruction of exposure in key cohorts.

Use of Biomarkers in Toxicology Exposure Assessment

The term biomarker refers to a measurement of a chemical or biological molecule in tissue that indicates past and/or current exposure. Such a measurement is informative only when a test is available for the substance at issue and the test has been administered within the time frame when the substance may be present in the body.40 There are three different types of biomarkers of relevance in toxicology: a) biomarkers of exposure, b) biomarkers of effect, and c) biomarkers of susceptibility. Biomarkers may be persistent in tissue or may rapidly disappear, depending on the chemicals at issue.

Biomarkers of Exposure

A widely used biomarker of exposure is the blood alcohol level estimated by the Breathalyzer test, frequently used by law enforcement to establish whether an automobile driver is inebriated. The science behind the Breathalyzer test has been the subject of many court decisions. In a similar manner, measurements of the concentration of drug(s) in the blood may be used to establish cause of death. However, alcohol and most drugs have relatively short half-lives (the time it takes for 50% of the chemical to disappear from the blood), on the order of a few hours or less, and thus are useful only if the blood (or breath) sample is collected soon after the exposure occurred.
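
The practical consequence of a short half-life can be sketched numerically. The example below assumes simple first-order (exponential) elimination, which is a simplification: alcohol, for instance, is commonly described as being eliminated at a roughly constant rate rather than exponentially. The four-hour half-life in the usage lines is a hypothetical value, not a property of any particular drug, and the function name is illustrative.

```python
def fraction_remaining(elapsed_hours: float, half_life_hours: float) -> float:
    """Fraction of the original blood concentration left after a delay.

    Assumption (illustrative only): first-order elimination, so the
    concentration halves once per half-life.
    """
    if half_life_hours <= 0:
        raise ValueError("half_life_hours must be positive")
    if elapsed_hours < 0:
        raise ValueError("elapsed_hours must be non-negative")
    return 0.5 ** (elapsed_hours / half_life_hours)


if __name__ == "__main__":
    hypothetical_half_life = 4.0  # hours; made-up value for illustration
    for delay in (0, 4, 8, 24):
        share = fraction_remaining(delay, hypothetical_half_life)
        print(f"sample drawn {delay:>2} h after exposure: {share:.1%} remaining")
```

After six half-lives (24 hours in this hypothetical), under 2% of the original amount remains, which illustrates why a delayed sample may fail to show that an exposure occurred.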

The concentration of lead in the blood and/or bones of an individual is another example of a biomarker of exposure that has been extensively studied. In the case of lead exposure, the half-life in blood is about one month, so repeated exposures in the workplace or environment can result in blood lead concentrations above what is measured in the environment. The half-life of lead in bone is on the order of twenty-five to thirty years, so assessment of lead in bone, performed noninvasively through an X-ray fluorescence technique, provides a window into past exposures to lead over a lifetime. Some other environmentally relevant toxic substances, referred to collectively as persistent organic pollutants or POPs (e.g., polychlorinated biphenyls (PCBs), dioxins, certain organochlorine pesticides like DDT and chlordane, and more recently a group of fluorinated chemicals collectively called per- and polyfluoroalkyl substances, or PFAS41), have half-lives measured in years, and thus slow accumulation from environmental exposures can occur over many years. For these types of chemicals, blood concentrations are indicative of lifetime exposures, not just recent exposure, and can be useful to establish whether an unusual exposure to a specific agent has occurred in the past.

40. See Nat’l Rsch. Council, Human Biomonitoring for Environmental Chemicals (2006), https://perma.cc/9AQ2-QXUQ; see also Lesa L. Aylward, Integration of Biomonitoring Data into Risk Assessment, 9 Current Op. Toxicology 14 (2018), https://doi.org/10.1016/j.cotox.2018.05.001.

41. Sometimes also referred to in the popular press as “forever chemicals.” For an EPA summary of research on PFAS, see https://perma.cc/JLV4-54XX.
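
The slow accumulation of persistent chemicals can also be sketched. The example assumes a one-compartment model with constant intake and first-order elimination, a simplification introduced here for illustration. Under that assumption the body burden approaches a plateau, and the fraction of the plateau reached depends only on the number of half-lives elapsed. The five-year half-life in the usage lines is hypothetical, as is the function name.

```python
def fraction_of_steady_state(elapsed_years: float, half_life_years: float) -> float:
    """Fraction of the eventual plateau body burden reached so far.

    Assumptions (illustrative only): constant intake, one compartment,
    first-order elimination with the given half-life.
    """
    if half_life_years <= 0:
        raise ValueError("half_life_years must be positive")
    if elapsed_years < 0:
        raise ValueError("elapsed_years must be non-negative")
    return 1.0 - 0.5 ** (elapsed_years / half_life_years)


if __name__ == "__main__":
    hypothetical_half_life = 5.0  # years; made-up value for illustration
    for years in (1, 5, 10, 20):
        share = fraction_of_steady_state(years, hypothetical_half_life)
        print(f"after {years:>2} years of constant intake: {share:.0%} of plateau")
```

In this sketch the plateau is half reached after one half-life and about 94% reached after four, so a current blood level of such a chemical reflects intake spread over many years.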

The Centers for Disease Control and Prevention’s (CDC) National Health and Nutrition Examination Survey (NHANES) monitors a broad range of biological markers of health (e.g., blood cholesterol levels) in a U.S. population sample. This survey now includes measurements of a wide variety of environmental and dietary substances. For environmental agents, the CDC considers blood levels above the statistical ninety-fifth percentile level in the NHANES samples as an indication of an “unusual exposure,” although it does not state whether exposures above, or below, the ninety-fifth percentile are necessarily harmful or safe, respectively.42 However, other regulatory agencies might use such levels to indicate that an exposure at that level is associated with an unacceptable risk.
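
A comparison against a ninety-fifth percentile reference value can be sketched as follows. This is a simplified illustration, not the CDC's procedure: it uses made-up reference values, ignores the survey weighting that a national sample would require, and relies on one of several possible percentile interpolation methods. The function names are hypothetical.

```python
from statistics import quantiles


def percentile_95(reference_levels: list[float]) -> float:
    """95th percentile of a reference sample (unweighted, illustrative)."""
    # quantiles(..., n=100) returns 99 cut points; index 94 is the 95th.
    return quantiles(reference_levels, n=100, method="inclusive")[94]


def is_unusual_exposure(measured_level: float, reference_levels: list[float]) -> bool:
    """True if a measured level exceeds the reference 95th percentile.

    Exceeding the cutoff marks the exposure as unusual relative to the
    reference sample; it says nothing about whether the level is harmful.
    """
    return measured_level > percentile_95(reference_levels)


if __name__ == "__main__":
    # Made-up reference blood levels in arbitrary units.
    reference = [float(x) for x in range(1, 101)]
    print(percentile_95(reference))
    print(is_unusual_exposure(97.0, reference))
    print(is_unusual_exposure(60.0, reference))
```

The flag indicates only that a value is high relative to the reference sample. As the text notes, it does not establish that the level is harmful or that lower levels are safe.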

Biomarkers of Effect

Research on certain toxic chemicals has established biological changes that are indicative of the effects of that specific chemical and that can thus also provide evidence of exposure, at least within the time period that the biological changes remain within the body. For example, when carbon monoxide enters the bloodstream, it binds to hemoglobin, the molecule responsible for delivering oxygen from the lungs to the rest of the body. Once bound, it prevents oxygen from binding, which results in oxygen deprivation that can be rapidly fatal. For sublethal doses of carbon monoxide, the time to full equilibrium with blood hemoglobin is eight to twelve hours, depending largely on breathing rate. The measurement of the amount of the resulting carboxyhemoglobin in blood is therefore both a biomarker of exposure, reflecting carbon monoxide exposure during the past eight to twelve hours, and a biomarker of effect, as it is on the direct pathway to toxicity. Carbon monoxide can also be measured in expired air, in which case it primarily reflects exposure. Another example of a biomarker of exposure and effect is provided by a broad class of insecticides known as organophosphorus chemicals (OPs). As a chemical class, OPs bind to and inhibit an important enzyme in nerve function called cholinesterase and can be rapidly lethal. Cholinesterase activity unrelated to its role in the nervous system is also present in red blood cells and in plasma. Measurements of cholinesterase in the blood can thus be an effects-related biomarker of exposure to many OP pesticides. This test is used frequently in the occupational environment and clinically to diagnose OP poisoning. Where available, and when testing is done within the required time frames, such effects-related biomarkers provide information that is directly on the pathway between external exposure and internal effects. Although there are currently only relatively few chemicals for which specific biomarkers are available, it is predicted that the increasing understanding of molecular effects of toxic substances will lead to more effects-related biomarkers of exposure.

42. For more details on the NHANES program, see https://perma.cc/UY4L-S8TY. One objective of the NHANES program was to “establish reference ranges that physicians and scientists can use to determine whether a person or group has an unusually high exposure. . . . The 95th percentile is helpful when determining whether levels observed in separate public health investigations or other studies are unusual.” CDC, Fourth National Report on Human Exposure to Environmental Chemicals (Volume Three: Analysis of Pooled Serum Samples for Select Chemicals, NHANES 2005–2016), https://perma.cc/E28W-X5HL.

Biomarkers of Susceptibility

Just as biological measurement of environmental exposures is an area of active research, so is the use of DNA analysis to identify individuals who may be more susceptible to exposures, for example because of genetically determined variability in the enzymes responsible for detoxifying chemicals of concern. Given the complexities of environmental and genetic interactions, much discussion in this area remains theoretical.

Toxicological Study Design

Toxicological studies usually involve exposing laboratory animals (in vivo research) or cells or tissues (in vitro research) to chemical or physical agents, monitoring the outcomes (such as cellular abnormalities, tissue damage, organ toxicity, or tumor formation), and comparing the outcomes with those for unexposed control groups. As explained below,43 the extent to which animal and cell experiments accurately predict human responses to chemical exposures is subject to debate. However, because it is generally unethical to experiment on humans by exposing them to known doses of chemical agents, animal toxicological evidence often provides the best scientific information about the risk of disease from a chemical exposure.44

43. See section titled “Extrapolation from Animal (In Vivo) and Cell (In Vitro) Research to Humans” below.

44. Clinical trials of therapeutic drugs are an example of experimental human exposure, where as a policy matter potential benefit is considered greater than the risk of adverse events. Notably, information from animal research is used to determine risk profiles prior to human trials. The human trials are highly regulated and subject to ethical requirements, including informed consent. See, e.g., FDA, Clinical Trials Guidance Documents, https://www.fda.gov/science-research/clinical-trials-and-human-subject-protection/clinical-trials-guidance-documents.

In contrast to their exposure to drugs, only rarely are humans exposed to environmental chemicals in a manner that permits a quantitative determination of adverse outcomes.45 This area of toxicological study may consist of individual or multiple case reports, or even experimental studies in which individuals or groups of individuals have been exposed to a chemical under circumstances that permit analysis of dose–response relationships, mechanisms of action, or other aspects of toxicology. For example, individuals occupationally or environmentally exposed to PCBs have been studied to determine the routes of absorption, distribution, metabolism, and excretion for this group of industrial chemicals. Human exposure occurs most frequently in occupational settings where workers are often exposed each working day to industrial chemicals. In well-regulated workplaces, there is often information about the exposure levels of specific chemicals of concern to the average worker. However, even under these circumstances, determining the amount (concentration, frequency, and duration) of exposure of an individual worker is more problematic, particularly as it must be done retrospectively in most toxic tort cases. Moreover, human populations are exposed to many other chemicals and risk factors, including those associated with normal aging, making it more difficult to isolate the role of any one chemical in the increased risk of a disease.46

Toxicologists use a wide range of experimental techniques, depending in part on their area of specialization. Toxicological research may focus on classes of chemical compounds, such as solvents, metals, and pesticides; body system effects, such as effects on the nervous system (neurotoxicology), the liver (hepatotoxicology), the reproductive system (reproductive toxicology), and the immune system (immunotoxicology); chemical effects on complex disease processes such as cancer (chemical carcinogenesis) and birth defects (developmental toxicology); and effects on physiological processes, including inhalation toxicology, cardiovascular toxicology, and dermal (skin) toxicology. Each of these subdisciplines utilizes both in vivo and in vitro research.47 Of importance to all of these types of toxicological studies is the rapidly growing field of molecular toxicology, which examines how certain chemicals interact with biomolecules within the cell, such as proteins, lipids, and DNA. Molecular toxicology studies provide critical insights into cross-species extrapolation, dose–response, and mode of action, insights that help demonstrate the biological plausibility used for causal inference following human exposure.

45. However, it is from drug studies in which multiple animal species are compared directly with humans that many of the principles of toxicology have been developed.

46. See, e.g., OECD, Considerations for Assessing the Risks of Combined Exposure to Multiple Chemicals (2018), https://perma.cc/L48K-W79J.

47. See, e.g., Casarett & Doull’s, supra note 1.

The insights provided by molecular toxicology are of particular interest to regulatory toxicologists who are exploring whether these insights can form the basis for new, more sensitive screening techniques to determine the risk of new or existing chemicals.48

In Vivo Research: Use of Live Animals in Toxicity Testing and Safety Assessment

Animal research in toxicology generally falls under two headings: safety assessment and classic laboratory research, with a continuum between them. As explained in the section titled “Safety and Risk Assessment” above, safety assessment is a relatively formal approach in which a chemical’s potential for toxicity is tested in vivo or in vitro using standardized techniques often prescribed by regulatory agencies, such as the EPA and the FDA.49

The roots of toxicology in the science of pharmacology are reflected in an emphasis on understanding the absorption, distribution, metabolism, and excretion of chemicals. Basic toxicological laboratory research also focuses on the mechanisms or modes of action of external chemical and physical agents.50 Such research is based on the standard elements of scientific studies, including appropriate experimental design using control groups and statistical evaluation. In general, toxicological research attempts to hold all variables constant except for that of the chemical exposure. Any change in the experimental group not found in the control group is assumed to be caused by the chemical.

Determining Dose–Response Relationships

An important component of toxicological research is the characterization of dose–response relationships. Thus, most toxicological studies test a range of doses of the chemical. Animal experiments are conducted to determine the dose–response relationships of a compound by measuring how response varies with dose, including diligently searching for a dose that has no measurable adverse effect. This information is useful in understanding the mechanisms of toxicity and in extrapolating data from animals to humans.51

48. Robert J. Kavlock et al., Accelerating the Pace of Chemical Risk Assessment, 31 Chem. Rsch. Toxicology 287 (2018), https://doi.org/10.1021/acs.chemrestox.7b00339.

49. See, e.g., Michael Dorato et al., The Toxicologic Assessment of Pharmaceutical and Biotechnology Products, in Hayes’ Principles and Methods of Toxicology 325 (A. Wallace Hayes & Claire L. Kruger eds., 6th ed. 2014), https://doi.org/10.1201/b17359.

50. The terms mechanism of action and mode of action are similar but not identical; both describe the specific molecular, biochemical, and cellular changes that are responsible for the observed toxic effects following the administration of a chemical. Generally, mode of action is somewhat broader and refers to the generalized sequence of molecular events that lead to toxicity, whereas mechanism of action refers to a specific cellular, biochemical, or molecular target of the substance (e.g., a specific receptor).

Acute Toxicity Testing and the Lethal Dose 50% (LD50)

To determine the dose–response relationship for a compound, a short-term lethal dose 50% (LD50) may be derived experimentally. The LD50 is the dose at which a compound kills 50% of laboratory animals and usually is determined from a single exposure, although the observation may extend for a period of days to weeks. The use of this easily measured endpoint for acute toxicity has largely been replaced, in part because recent advances in toxicology have provided other pertinent endpoints, and in part because of animal welfare concerns that have focused on reduction, refinement, or replacement of the use of animals in laboratory research.52 Although determining the LD50 was frequently the primary goal of acute toxicity testing, much additional useful information is obtained, such as the identification of the target organ(s) of effect, overall characterization of the symptoms, and progression of toxicity following single doses. Acute toxicity studies are also needed to establish the dose range for subsequent repeated-dose (subacute, usually fourteen days; and subchronic, usually ninety days) toxicity studies.
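To make the LD50 definition above concrete, the following Python sketch estimates the dose associated with 50% mortality by interpolating on a logarithmic dose scale between the two adjacent dose groups that bracket 50%. This is a deliberately simplified illustration, not the method used in any particular study or guideline; formal analyses typically fit a statistical dose–response model. The function name and the example data are hypothetical.

```python
import math


def estimate_ld50(doses_mg_per_kg, fraction_dead):
    """Estimate the LD50 by log-dose interpolation (simplified assumption).

    doses_mg_per_kg: positive doses, one per dose group.
    fraction_dead:   observed mortality fraction (0 to 1) in each group.
    Returns None if no adjacent pair of groups brackets 50% mortality.
    """
    pairs = sorted(zip(doses_mg_per_kg, fraction_dead))
    for (d_lo, f_lo), (d_hi, f_hi) in zip(pairs, pairs[1:]):
        if f_lo <= 0.5 <= f_hi and f_hi > f_lo:
            # Fraction of the way from the lower group to the higher group.
            t = (0.5 - f_lo) / (f_hi - f_lo)
            log_ld50 = math.log10(d_lo) + t * (math.log10(d_hi) - math.log10(d_lo))
            return 10 ** log_ld50
    return None


# Hypothetical single-exposure data: three dose groups.
print(estimate_ld50([10, 100, 1000], [0.1, 0.3, 0.7]))
```

With these made-up data, 50% mortality falls halfway (on the log scale) between the 100 and 1000 mg/kg groups, so the estimate lies between those two doses.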

No Observed Adverse Effect Level (NOAEL)

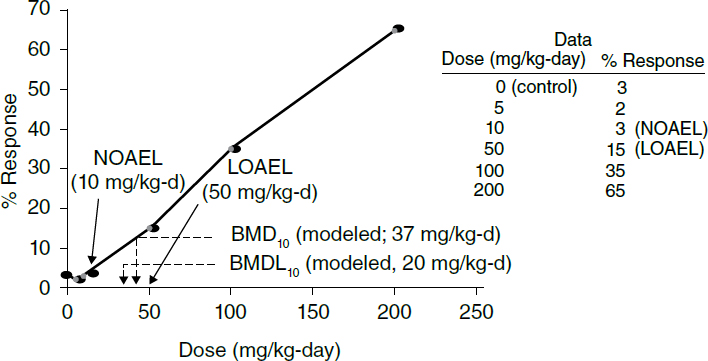

A dose–response study also permits the determination of another important characteristic of the biological action of a chemical: the no observed adverse effect level (NOAEL; Figure 1).53 The NOAEL sometimes is called a threshold, because it is the level above which adverse or toxic effects in test animals are believed to occur and below which no toxicity is observed.54 Of course, because the NOAEL is dependent on the ability to observe an effect, the level is sometimes lowered after more sophisticated methods for detecting effects are developed. Typically, NOAEL studies involve the administration of the test chemical at several different doses, daily over a period of ninety days. At least three doses (plus the “zero dose” control) are used, with doses generally spanning a dose range of thirty- to one hundred-fold, with the highest dose causing clear evidence of toxicity, and the lowest dose showing no evidence of toxicity. Typically, rats are used, with at least ten males and ten females for each dose group. The ultimate goal is to establish a dose–response curve, with at least one dose that does not show any evidence of toxicity. In circumstances where the lowest dose tested resulted in an adverse effect, the terminology for that dose is the lowest observed adverse effect level (LOAEL), which may then be used in place of a NOAEL, with additional caveats (e.g., uncertainty factors) if used in risk assessment.

51. See sections titled “Benchmark Dose (BMD),” “Linearized Non-Threshold (LNT) Model and Determination of Cancer Risk,” and “Maximum Tolerated Dose (MTD) and Chronic Toxicity Tests” below.

52. Dale M. Cooper et al., The Humane Use and Care of Laboratory Animals in Toxicology Research, in Hayes’ Principles and Methods of Toxicology 1023, supra note 49.

53. For example, undiluted acid on the skin can cause a horrible burn. As the acid is diluted to lower and lower concentrations, less and less of an effect occurs until there is a concentration sufficiently low (e.g., one drop in a bathtub of water, or a sample with less than the acidity of vinegar) that no effect occurs. This “no observed effect” concentration differs from person to person. For example, a baby’s skin is more sensitive than that of an adult, and skin that is irritated or broken responds to the effects of an acid at a lower concentration. However, the key point is that there is some concentration that is completely harmless to the skin.
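The NOAEL and LOAEL definitions given in the discussion of ninety-day dose–response studies can be illustrated with a short Python sketch. It assumes that each treated dose group has already been classified as showing or not showing an adverse effect; that classification rests on statistical and biological judgment and is not modeled here. The function name and the example doses are hypothetical.

```python
def noael_loael(dose_groups):
    """Identify the NOAEL and LOAEL from treated dose groups.

    dose_groups: list of (dose, adverse_effect_observed) pairs for the
                 treated groups (the zero-dose control is excluded).
    Returns (noael, loael); either may be None if it cannot be identified.
    """
    ordered = sorted(dose_groups)
    # LOAEL: the lowest tested dose at which an adverse effect was observed.
    loael = next((dose for dose, adverse in ordered if adverse), None)
    # NOAEL: the highest tested dose without an adverse effect, below the LOAEL.
    effect_free = [
        dose for dose, adverse in ordered
        if not adverse and (loael is None or dose < loael)
    ]
    noael = max(effect_free) if effect_free else None
    return noael, loael


# Hypothetical study with three doses spanning a hundred-fold range.
print(noael_loael([(1, False), (10, False), (100, True)]))
# Hypothetical study in which even the lowest dose showed an adverse effect.
print(noael_loael([(1, True), (10, True), (100, True)]))
```

In the second example no NOAEL can be identified, which corresponds to the situation in which the LOAEL is used in its place with additional caveats.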

Benchmark Dose (BMD)

For regulatory toxicology, the NOAEL has been replaced by a more statistically robust approach known as the benchmark dose (BMD; Figure 1). The BMD is determined based on dose–response modeling and is defined as the exposure associated with a specified low incidence of risk, generally in the range of 1% to 10%, of a health effect, or the dose associated with a specified measure or change of a biological effect (thus, if the lower range on the BMD is set at a 10% response, it is referred to as a BMD10). To model the BMD, sufficient data must exist, such as at least a statistically or biologically significant dose-related trend in the